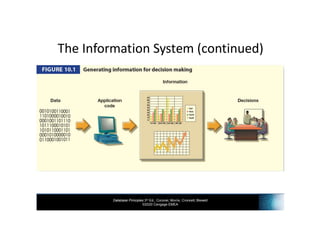

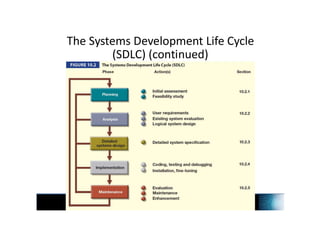

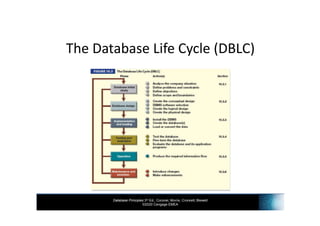

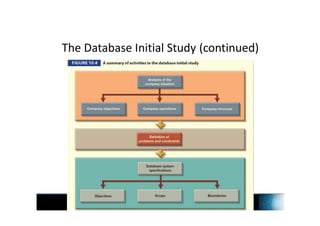

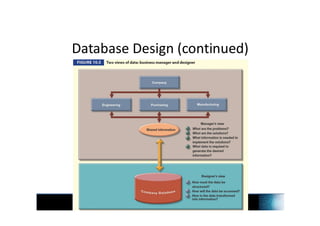

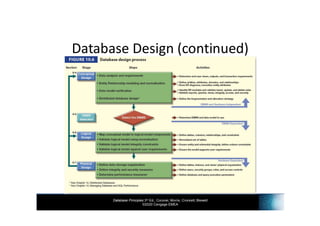

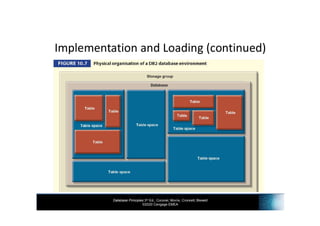

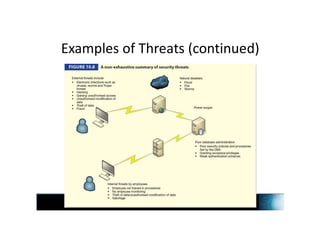

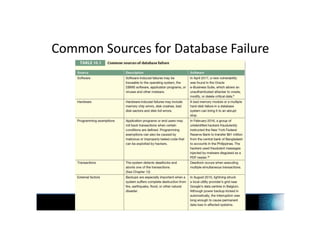

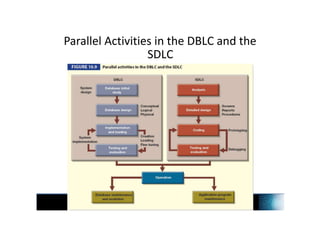

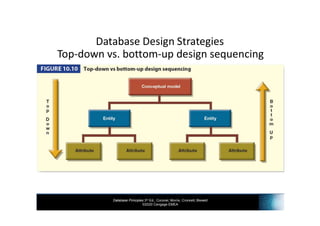

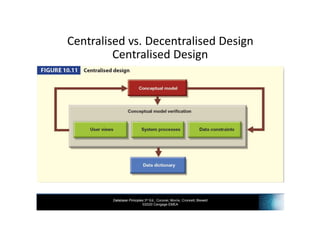

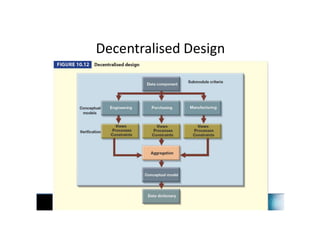

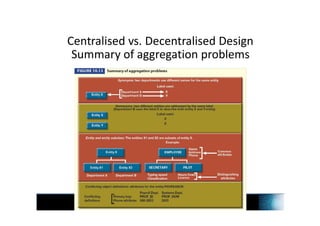

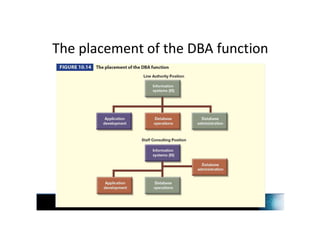

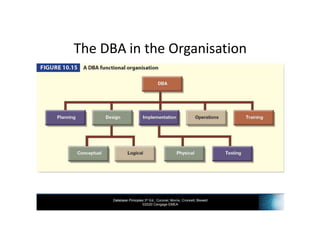

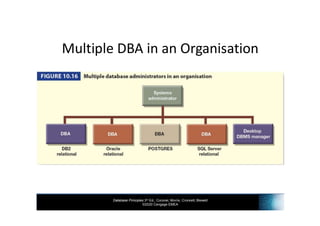

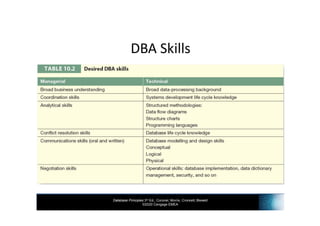

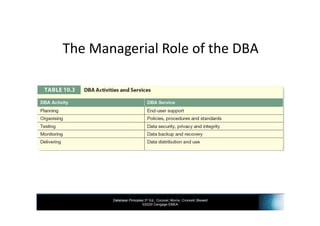

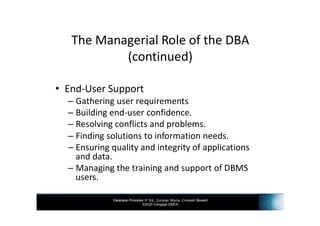

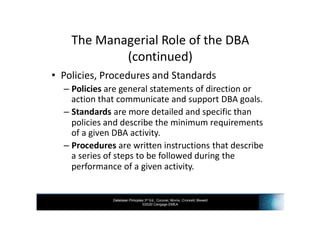

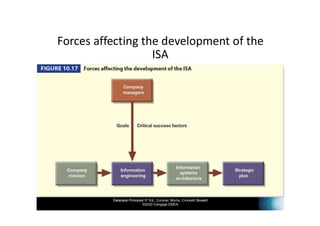

Chapter 10 of 'Database Principles' covers the database development process and stresses that database design must be integrated into the larger information system. It outlines the systems development life cycle (SDLC) and the database life cycle (DBLC), detailing each phase from planning through maintenance, and highlights the roles of database security and database administration. It also covers database design strategies, potential security threats, and the measures needed to protect data.