IG2 Task 1 Worksheet

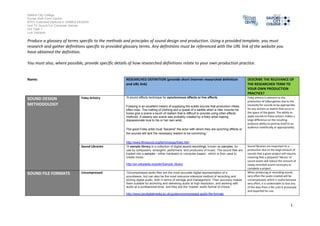

- 1. Salford City College Eccles Sixth Form Centre BTEC Extended Diploma in GAMES DESIGN Unit 73: Sound For Computer Games IG2 Task 1 Luis Vazquez Produce a glossary of terms specific to the methods and principles of sound design and production. Using a provided template, you must research and gather definitions specific to provided glossary terms. Any definitions must be referenced with the URL link of the website from which you obtained the definition. You must also, where possible, provide specific details of how researched definitions relate to your own production practice. Name: RESEARCHED DEFINITION (provide short internet researched definition and URL link) DESCRIBE THE RELEVANCE OF THE RESEARCHED TERM TO YOUR OWN PRODUCTION PRACTICE? SOUND DESIGN METHODOLOGY Foley Artistry ‘A sound effects technique for synchronous effects or live effects. Foleying is an excellent means of supplying the subtle sounds that production mikes often miss. The rustling of clothing and a squeak of a saddle when a rider mounts his horse give a scene a touch of realism that is difficult to provide using other effects methods. A steamy sex scene was probably created by a foley artist making dispassionate love to his or her own wrist. The good Foley artist must "become" the actor with whom they are synching effects or the sounds will lack the necessary realism to be convincing.’ http://www.filmsound.org/terminology/foley.htm Foley artistry is relevant to the production of video games because sounds must suit the actions and events that occur in the space of the game. The ability to apply fitting sounds to these actions makes a large difference to the resulting product's ability to present itself to an audience realistically or appropriately. Sound Libraries ‘A sample library is a collection of digital sound recordings, known as samples, for use by composers, arrangers, performers, and producers of music.
The sound files are loaded into a sampler - either hardware or computer-based - which is then used to create music.’ http://en.wikipedia.org/wiki/Sample_library Sound libraries are important to a production because a given project requires a large number of sounds, so a prepared ‘library’ of sound assets reduces the amount of newly recorded sound needed to complete it. SOUND FILE FORMATS Uncompressed ‘Uncompressed audio files are the most accurate digital representation of a soundwave, but can also be the most resource-intensive method of recording and storing digital audio, both in terms of storage and management. Their accuracy makes them suitable for archiving and delivering audio at high resolution, and working with audio at a professional level, and they are the 'master' audio format of choice.’ http://www.jiscdigitalmedia.ac.uk/guide/uncompressed-audio-file-formats When producing or recording sound, the audio created will very often be uncompressed. This is useful because it is usually undesirable to lose any of the data from a file until it has been processed and exported for use.
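As a rough illustration of why uncompressed audio is described as resource-intensive, the sketch below estimates the storage needed for raw, headerless audio data. The function name `uncompressed_size_bytes` and the default figures (44,100 Hz, 16-bit, two channels, often called CD quality) are assumptions chosen for the example rather than values required by the worksheet.

```python
def uncompressed_size_bytes(seconds, sample_rate=44_100, bit_depth=16, channels=2):
    """Estimate the size of raw uncompressed audio data in bytes.

    Every second stores `sample_rate` samples per channel, and every
    sample occupies `bit_depth // 8` bytes.
    """
    bytes_per_sample = bit_depth // 8
    return int(seconds * sample_rate * bytes_per_sample * channels)


# One minute of 16-bit stereo audio at 44.1 kHz (assumed example values).
one_minute = uncompressed_size_bytes(60)
print(f"One minute: {one_minute:,} bytes ({one_minute / 1_000_000:.1f} MB)")
```

With these assumed settings a single minute already needs about 10.6 MB, which is why a game with many sound assets usually keeps uncompressed files as working masters and exports smaller formats for the final build.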

- 2. .wav ‘A Wave file is an audio file format, created by Microsoft, that has become a standard PC audio file format for everything from system and game sounds to CD-quality audio. A Wave file is identified by a file name extension of WAV (.wav). Used primarily in PCs, the Wave file format has been accepted as a viable interchange medium for other computer platforms, such as Macintosh. This allows content developers to freely move audio files between platforms for processing, for example. In addition to the uncompressed raw audio data, the Wave file format stores information about the file's number of tracks (mono or stereo), sample rate, and bit depth.’ http://whatis.techtarget.com/definition/Wave-file .wav is the format that the majority of sound manipulation and production programs export in. Because .wav files retain the vast majority of the data for a sound, the format is useful as ‘raw’ data that can be converted into a different file type. .aiff ‘AIFF is short for Audio Interchange File Format, which is an audio format initially created by Apple Computer for storing and transmitting high-quality sampled audio data. It supports a variety of bit resolutions, sample rates, and channels of audio. This format is quite popular upon Apple platforms, and is commonly adopted in professional programs that handle digital audio waveforms. AIFF files are uncompressed, making the files quite large compared to the ubiquitous MP3 format. AIFF files are comparable to Microsoft's wave files; because they are high quality they are excellent for burning to CD. There is also a compressed variant of AIFF known as AIFF-C or AIFC, with various defined compression codecs.’ http://www.abyssmedia.com/formats/aiff-format.shtml .aiff is a file type that is commonly associated with Apple computer systems and programs.
As it is an uncompressed file format, files of this type are fairly large in size. .au ‘The Au file format is a simple audio file format introduced by Sun Microsystems. The format was common on NeXT systems and on early Web pages. Originally it was headerless, being simply 8-bit µ-law-encoded data at an 8000 Hz sample rate. Hardware from other vendors often used sample rates as high as 8192 Hz, often integer factors of video clock signals. Newer files have a header that consists of six unsigned 32-bit words, an optional information chunk and then the data (in big endian format). Although the format now supports many audio encoding formats, it remains associated with the µ-law logarithmic encoding. This encoding was native to the SPARCstation 1 hardware, where SunOS exposed the encoding to application programs through the /dev/audio interface. This encoding and interface became a de facto standard for Unix sound.’ https://www.princeton.edu/~achaney/tmve/wiki100k/docs/Au_file_format.html .smp ‘An ".smp" file may be one of several different types of audio file. For example, it could be a SampleVision audio sample file. This 16-bit audio file was originally used by Turtle Beach SampleVision; you can open it with Adobe Audition, Sound Forge Pro or Awave Studio. It could also be a sample file for AdLib Gold, a PC sound card released in 1992; Scream Tracker, a mid-1990s music editing program; or Swell. Reason, a music recording and production program, uses the ".smp" extension for sampler instrument patches.’ http://www.ehow.com/info_12198596_file-smp.html

- 3. Lossy Compression ‘Lossy file compression results in lost data and quality from the original version. Lossy compression is typically associated with image files, such as JPEGs, but can also be used for audio files, like MP3s or AAC files. The "lossyness" of an image file may show up as jagged edges or pixelated areas. In audio files, the lossyness may produce a watery sound or reduce the dynamic range of the audio. Because lossy compression removes data from the original file, the resulting file often takes up much less disk space than the original. For example, a JPEG image may reduce an image's file size by more than 80%, with little noticeable effect. Similarly, a compressed MP3 file may be one tenth the size of the original audio file and may sound almost identical. The keyword here is "almost." JPEG and MP3 compression both remove data from the original file, which may be noticeable upon close examination. Both of these compression algorithms allow for various "quality settings," which determine how compressed the file will be. The quality setting involves a trade-off between quality and file size. A file that uses greater compression will take up less space, but may not look or sound as good as a less compressed file.
Some image and audio formats allow lossless compression, which does not reduce the file's quality at all.’ http://www.techterms.com/definition/lossy Lossy compression is a method of processing audio into files that maintain a similar quality of audio while taking up less space. It does this by ‘losing’ data from the ‘raw’ files in such a way that the difference is not very pronounced in the output. .mp3 ‘The name of the file extension and also the name of the type of file for MPEG, audio layer 3. Layer 3 is one of three coding schemes (layer 1, layer 2 and layer 3) for the compression of audio signals. Layer 3 uses perceptual audio coding and psychoacoustic compression to remove all superfluous information (more specifically, the redundant and irrelevant parts of a sound signal. The stuff the human ear doesn't hear anyway). It also adds a MDCT (Modified Discrete Cosine Transform) that implements a filter bank, increasing the frequency resolution 18 times higher than that of layer 2. The result in real terms is layer 3 shrinks the original sound data from a CD (with a bit rate of 1411.2 kilobits per one second of stereo music) by a factor of 12 (down to 112-128kbps) without sacrificing sound quality. Because MP3 files are small, they can easily be transferred across the Internet.’ http://www.webopedia.com/TERM/M/MP3.html .mp3 files are a commonly used file type for compressed audio. It is a lossy compression audio type, which means that it loses data and quality from the ‘raw’ audio files in order to create smaller files. AUDIO LIMITATIONS Sound Processor Unit (SPU) ‘A sound card (also known as an audio card) is an internal computer expansion card that facilitates the input and output of audio signals to and from a computer under control of computer programs. The term sound card is also applied to external audio interfaces that use software to generate sound, as opposed to using hardware inside the PC.
Typical uses of sound cards include providing the audio component for multimedia applications such as music composition, editing video or audio, presentation, education and entertainment (games) and video projection. Sound functionality can also be integrated onto the motherboard, using basically the same components as a plug-in card. The best plug-in cards, which use better and more expensive components, can achieve higher quality than integrated sound. The integrated sound system is often still referred to as a "sound card".’ http://en.wikipedia.org/wiki/Sound_card Sound Processors are vital to a production process, as they are the means by which a computer interprets and outputs sound. Sound cards also affect how quickly and how well a computer can receive and output sound of a given quality, meaning that the capabilities and compatibilities of sound cards must be taken into consideration when producing audio-based media.

- 4. Digital Sound Processor (DSP) ‘Digital Signal Processors (DSPs) take real-world signals like voice, audio, video, temperature, pressure, or position that have been digitized and then mathematically manipulate them. A DSP is designed for performing mathematical functions like "add", "subtract", "multiply" and "divide" very quickly. Signals need to be processed so that the information that they contain can be displayed, analyzed, or converted to another type of signal that may be of use. In the real-world, analog products detect signals such as sound, light, temperature or pressure and manipulate them. Converters such as an Analog-to-Digital converter then take the real-world signal and turn it into the digital format of 1's and 0's. From here, the DSP takes over by capturing the digitized information and processing it. It then feeds the digitized information back for use in the real world. It does this in one of two ways, either digitally or in an analog format by going through a Digital-to-Analog converter. All of this occurs at very high speeds. During the recording phase, analog audio is input through a receiver or other source. This analog signal is then converted to a digital signal by an analog-to-digital converter and passed to the DSP. The DSP performs the MP3 encoding and saves the file to memory.
During the playback phase, the file is taken from memory, decoded by the DSP and then converted back to an analog signal through the digital-to-analog converter so it can be output through the speaker system. In a more complex example, the DSP would perform other functions such as volume control, equalization and user interface.’ http://www.analog.com/en/content/beginners_guide_to_dsp/fca.html Digital Sound Processors are important for a sound production that intends to use recorded sound. DSPs allow signals that have been converted to data to be manipulated, and can then output the processed data in digital or analogue form. Random Access Memory (RAM) ‘Alternatively referred to as main memory, primary memory, or system memory, Random Access Memory (RAM) is a computer storage location that allows information to be stored and accessed quickly from random locations within DRAM on a memory module. Because information is accessed randomly instead of sequentially like a CD or hard drive the computer is able to access the data much faster than it would if it was only reading the hard drive. However, unlike ROM and the hard drive RAM is a volatile memory and requires power in order to keep the data accessible, if power is lost all data contained in memory is lost.’ http://www.computerhope.com/jargon/r/ram.htm Random Access Memory is an important part of all processes of a computer, but it is especially relevant in sound production, where a sound may need to be played back and processed repeatedly: the faster and more capable the computer's RAM, the speedier the production process. Mono Audio ‘Mono or monophonic describes a system where all the audio signals are mixed together and routed through a single audio channel. Mono systems can have multiple loudspeakers, and even multiple widely separated loudspeakers. The key is that the signal contains no level and arrival time/phase information that would replicate or simulate directional cues.
Common types of mono systems include single channel centre clusters, mono split cluster systems, and distributed loudspeaker systems with and without architectural delays. Mono systems can still be full-bandwidth and full-fidelity and are able to reinforce both voice and music effectively. The big advantage to mono is that everyone hears the very same signal, and, in properly designed systems, all listeners would hear the system at essentially the same sound level. This makes well-designed mono systems very well suited for speech reinforcement as they can provide excellent speech intelligibility.’ http://www.mcsquared.com/mono-stereo.htm Mono Audio is useful when producing sound that does not need, or benefits from not having, directional information. As all audio is directed through a single channel in mono, the signal is consistent for each system that outputs the sound.

- 5. Stereo Audio ‘True stereophonic sound systems have two independent audio signal channels, and the signals that are reproduced have a specific level and phase relationship to each other so that when played back through a suitable reproduction system, there will be an apparent image of the original sound source. Stereo would be a requirement if there is a need to replicate the aural perspective and localization of instruments on a stage or platform, a very common requirement in performing arts centres. This also means that a mono signal that is panned somewhere between the two channels does not have the requisite phase information to be a true stereophonic signal, although there can be a level difference between the two channels that simulates a position difference, this is a simulation only. That's a discussion that could warrant a couple of web pages all by itself.’ http://www.mcsquared.com/mono-stereo.htm Stereo Audio systems use two audio channels to create the simulation of sound that has directional information, or so that sound can be processed as if it did have directions associated with it. This is useful when creating sound that is simulated to exist within a ‘physical’ space (such as a character’s movements in a video). Surround Sound ‘Surround sound audio is, simply put, sound that completely surrounds you.
It means a speaker in virtually every corner of the room, projecting high-quality digital sound at you from all angles just as though you were in a theater. In terms of nuts and bolts, it means a set of speakers, usually five including the all-important “center speaker,” and a subwoofer for powerful bass. This is where the term “5.1” comes from -- five speakers and a subwoofer.’ http://peripherals.about.com/od/speakersandheadphones/a/whatis_ss.htm Surround Sound is a phrase that can refer to any number of audio systems that share the assumption of an approximately 360-degree space around the listener. Direct Audio (Pulse Code Modulation – PCM) ‘Pulse code modulation (PCM) is a digital representation of an analog signal that takes samples of the amplitude of the analog signal at regular intervals. The sampled analog data is changed to, and then represented by, binary data. PCM requires a very accurate clock. The number of samples per second, ranging from 8,000 to 192,000, is usually several times the maximum frequency of the analog waveform in Hertz (Hz), or cycles per second, which ranges from 8 to 192 KHz.’ http://www.techopedia.com/definition/24128/pulse-code-modulation-pcm PCM is a method of representing an analogue signal in digital form, which allows digital systems to interpret and use data from an analogue system or storage medium. AUDIO RECORDING SYSTEMS Analogue ‘Simply put, an analog system sends information by encoding it as a continuous change in voltage or current. The receiving end then decodes these changes back into usable information. For example, an analog clock represents the sun circling the sky. An analog device converts a pattern, such as light or sound patterns, into electrical signals with similar patterns. The definition of an analog signal can also reveal its shortcomings. Analog signals, because they rely on minute variations in voltage or current, are very susceptible to interference from outside sources. Even a small change in the analog signal from an outside source, such as electromagnetic interference, can cause dramatic changes in the signal and how the signal is interpreted.’ http://www.theprojectorpros.com/learn-s-learn-p-theater_analog_digital.htm Analogue systems use a single continuous signal as a pattern to represent information, such as audio. Because such a system relies on small physical changes in that signal to carry information, it is very susceptible to interference from physical sources of any magnitude.

- 6. Digital Mini Disc ‘One easy way to think about a MiniDisc is like a floppy disk -- you can record and erase files on a MiniDisc just as easily as you can on a floppy disk. The big difference between a MiniDisc and a floppy disk is that a MiniDisc can hold about 100 times more data (about 140 megabytes in data mode, 160 megabytes in audio mode vs. 1.44 megabytes for a floppy).’ http://electronics.howstuffworks.com/question55.htm MiniDiscs are an older storage medium that has largely fallen out of use. Their advantage over early CDs was that they could be recorded onto more easily. Compact Disc (CD) ‘A compact disc [sometimes spelled disk] (CD) is a small, portable, round medium made of molded polymer (close in size to the floppy disk) for electronically recording, storing, and playing back audio, video, text, and other information in digital form. Tape cartridges and CDs generally replaced the phonograph record for playing back music.’ http://searchstorage.techtarget.com/definition/compact-disc CDs are one of the most commonly used physical storage media, and are compatible with most computers and other disc-based devices, which are often built with CD reading hardware.
Digital Audio Tape (DAT) ‘Digital Audio Tape (DAT or R-DAT) is a signal recording and playback medium developed by Sony and introduced in 1987. In appearance it is similar to a compact audio cassette, using 4 mm magnetic tape enclosed in a protective shell, but is roughly half the size at 73 mm × 54 mm × 10.5 mm. As the name suggests, the recording is digital rather than analog. DAT has the ability to record at higher, equal or lower sampling rates than a CD (48, 44.1 or 32 kHz sampling rate respectively) at 16 bits quantization. If a digital source is copied then the DAT will produce an exact clone, unlike other digital media such as Digital Compact Cassette or non-Hi-MD MiniDisc, both of which use lossy data compression.’ http://www.princeton.edu/~achaney/tmve/wiki100k/docs/Digital_Audio_Tape.html MIDI ‘MIDI stands for Musical Instrument Digital Interface. The development of the MIDI system has been a major catalyst in the recent unprecedented explosion of music technology. MIDI has put powerful computer instrument networks and software in the hands of less technically versed musicians and amateurs and has provided new and time-saving tools for computer musicians. The system first appeared in 1982 following an agreement among manufacturers and developers of electronic musical instruments to include a common set of hardware connectors and digital codes in their instrument design. In 1983, the MIDI 1.0 Specification was formally released by the International MIDI Association as Roland, Yamaha, Korg, Kawai and Sequential Circuits all came out with MIDI-capable instruments that year.’ http://www.indiana.edu/~emusic/etext/MIDI/chapter3_MIDI.shtml MIDI is the standard interface shared by the instruments and software used in music production, which means that I will use it to create sounds that are not recorded with a microphone, such as music. Software Sequencers ‘A sequencing software package designed to be loaded into a computer.
Software sequencers usually have more features and has the advantage of showing you a lot more information at once because they use the computer's screen and aren't locked into the knobs or buttons or display of a hardware sequencer.’ http://www.wannaplaymusic.com/get-started/keyboard-terminology Software sequencers are important to my production process, as I do not use specialised hardware to manipulate sound files. These programs give me an interface to manipulate and create sound directly and independently.

- 7. Software Plug-ins ‘A plug-in is a (sometimes essential) piece of software code that enables an application or program to do something it couldn’t by itself. One of the more common plug-ins is Adobe Flash Player. Without Flash Player you won’t, for example, be able to view BBC News bulletins embedded into web pages. Other plug-ins are available for different things. There are plug-ins for social media networking, foreign language alphabets and many other things. One plug-in allows for the display of Microsoft Office 2007 documents within the browser.’ http://www.bbc.co.uk/webwise/guides/about-plugins Plug-ins are necessary to my production process, as base software such as a sequencer is limited in the types of manipulation it can achieve. Plug-ins make it possible to create sounds that could not be made with the built-in options alone. MIDI Keyboard Instruments ‘A Musical Instrument Digital Interface (MIDI) keyboard is a musical instrument like a piano keyboard. The MIDI portion indicates that the instrument has a communication protocol built in that allows it to communicate with a computer or other MIDI-equipped instrument. The MIDI interface is now so easy to implement that almost all keyboards sold today are some type of MIDI keyboard. This ranges from a simple 100 US dollar (USD) MIDI keyboard sold at the local department store to a 30,000 USD grand piano with a built-in controller.
Every type can connect to any other type of musical instrument that sports a MIDI interface. The 30,000 USD instrument will sound much better than the 100 USD instrument, but both can be controlled by the computer or other instrument in the same way.’ http://www.wisegeek.com/what-is-a-midi-keyboard.htm MIDI instruments allow in-software productions to be created using real-world musical skill, acting as an interface to that software. MIDI instruments are also often distributed with their own options for creating sounds, which can be simulated in software sequencers using plug-ins. AUDIO SAMPLING File Size Constraints - Bit-depth ‘Audio recorded at a lower bit depth will sound grainy and less defined therefore recording audio at 24 bit will be of a higher quality than something recorded at 16 bit. So why doesn't everyone record at 24 bit? Well we have identified the benefits of recording at higher bit depths but what about the costs? If something is recorded at 24 bits then it is using more bits in a file and as such the files are going to be much bigger. They will take up more disc space and they also require more computing power to process. So how do you choose what bit depth to work in? The best option is to provide yourself with the choice, buy an audio interface capable of recording at higher bit depths but only use the higher bit depths if you know your project will benefit from it. For example it may be more efficient to stick to 16 bits if your project is aimed at FM transmission or internet streaming.’ http://www.dolphinmusic.co.uk/article/120-what-does-the-bit-depth-and-sample-rate-refer-to-.html Bit depth is relevant in sound production as a way of balancing the quality of an audio file against the amount of space that the file takes up, and it can be changed to suit a variety of practical purposes.
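The trade-off between 16-bit and 24-bit audio described above can be put into numbers. The sketch below is an illustration only: the helper name `bit_depth_summary` is hypothetical, and the dynamic range figure is the usual theoretical estimate for ideal linear PCM (about 6 dB per bit), not a measurement of any real audio interface.

```python
import math


def bit_depth_summary(bit_depth):
    """Return (amplitude levels, theoretical dynamic range in dB, bytes per sample)."""
    levels = 2 ** bit_depth                     # distinct amplitude values available
    dynamic_range_db = 20 * math.log10(levels)  # roughly 6.02 dB for each bit
    bytes_per_sample = bit_depth / 8            # storage cost of one sample
    return levels, dynamic_range_db, bytes_per_sample


for bits in (16, 24):
    levels, db, size = bit_depth_summary(bits)
    print(f"{bits}-bit: {levels:,} levels, ~{db:.1f} dB, {size:g} bytes per sample")
```

Under these assumptions a 24-bit recording has far finer amplitude resolution than a 16-bit one, but each sample takes 3 bytes instead of 2, so the same recording is 1.5 times larger.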
File Size Constraints - Sample Rate ‘Basically, sample rate is how often the ADC (Analog to Digital Converter) takes a snapshot of the audio. Ideally, the sample rate would be infinite, so the ADC was continuously translating the analog signal to digital, but that would require infinite disk space, so we settle for a less than perfect translation, and hopefully we can’t notice the difference.’ http://progulator.com/digital-audio/sampling-and-bit-depth/ The sample rate of an audio file determines how many times per second the original signal was measured, so a higher sample rate captures the sound more precisely and gives higher-quality playback. However, file size grows in direct proportion to the sample rate: doubling the rate doubles the size of an uncompressed file.
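To make the idea of an ADC taking "snapshots" concrete, the sketch below samples a pure tone at several rates and counts the snapshots produced. It is a simplified illustration, not how any particular converter works: `sample_sine`, the 440 Hz tone and the three rates are assumptions chosen for the example. The frequency limit printed is the standard Nyquist limit of half the sample rate.

```python
import math


def sample_sine(frequency_hz, sample_rate, duration_s):
    """Take evenly spaced amplitude snapshots of a sine wave, as an idealised ADC would."""
    count = round(sample_rate * duration_s)
    return [
        math.sin(2 * math.pi * frequency_hz * i / sample_rate)
        for i in range(count)
    ]


for rate in (22_050, 44_100, 88_200):
    samples = sample_sine(440, rate, 1.0)
    print(f"{rate} Hz: {len(samples)} samples for one second, "
          f"frequencies up to {rate // 2} Hz can be represented")
```

The number of samples, and so the size of the uncompressed data, rises in step with the sample rate, so doubling the rate doubles the storage needed for the same length of audio.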