GSoC proposal

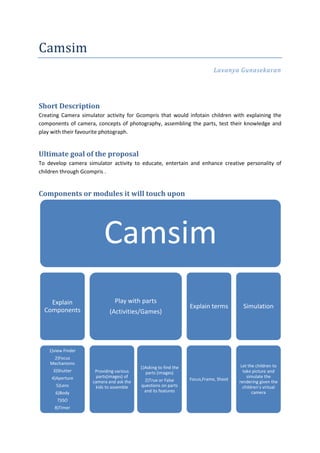

Camsim

Lavanya Gunasekaran

Short description: Create a camera simulator activity for GCompris that informs and entertains children by explaining the components of a camera and the concepts of photography, letting them assemble the parts and test their knowledge, and letting them play with their favourite photographs.

Ultimate goal of the proposal: To develop a camera simulator activity that educates and entertains children and nurtures their creativity through GCompris.

Components or modules it will touch upon (activities/games):

- Explain components: 1) viewfinder, 2) focus mechanisms, 3) shutter, 4) aperture, 5) lens, 6) body, 7) ISO, 8) timer.
- Play with parts: 1) ask the kids to find the parts (images); 2) true-or-false questions on the parts and their features; 3) provide various parts (images) of the camera and ask the kids to assemble them.
- Explain terms.
- Simulation (focus, frame, shoot): let the children take pictures and simulate how each picture renders with the settings of their virtual camera.
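The assembly game in "Play with parts" boils down to deciding whether a dragged part was dropped in the right place. A minimal sketch of that check, assuming each part has a single target point on the camera-body outline; the part names, coordinates and tolerance below are hypothetical placeholders, and the real drag-and-drop handling would live in the GCompris canvas code.

```python
import math

# Hypothetical drop targets (canvas pixels) for each draggable part on the
# camera-body outline; real coordinates would come from the activity artwork.
TARGETS = {
    "lens": (320, 240),
    "viewfinder": (300, 120),
    "shutter button": (420, 110),
}

# Assumed tolerance: how far (in pixels) from its target a part may be dropped
# and still count as correctly placed. Worth tuning by watching kids play.
SNAP_TOLERANCE = 25.0


def is_correctly_placed(part: str, x: float, y: float,
                        tolerance: float = SNAP_TOLERANCE) -> bool:
    """Return True if `part` was dropped within `tolerance` of its target."""
    tx, ty = TARGETS[part]
    return math.hypot(x - tx, y - ty) <= tolerance


def snap(part: str, x: float, y: float) -> tuple[float, float]:
    """Snap a correctly dropped part onto its target; otherwise leave it."""
    return TARGETS[part] if is_correctly_placed(part, x, y) else (x, y)


def assembly_complete(placements: dict[str, tuple[float, float]]) -> bool:
    """True once every part has been placed at (or near) its target."""
    return all(
        part in placements and is_correctly_placed(part, *placements[part])
        for part in TARGETS
    )
```

A forgiving tolerance plus snapping keeps the game about knowing where a part goes rather than about precise mouse control, which suits young children.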

Explain terms: Explain the terms behind photography to the kids so that they can learn to take good photographs.

1) Focus: This can be explained by fixing an image of a lens in the middle, placing an object at one end, and letting the kids move the camera (image) back and forth at the other end. This shows them that, to keep the image of a close object sharp, the lens must be moved relative to the screen (or camera sensor). This process is called focusing. The following terms can be explained to kids with similar activities.

2) Lighting techniques: outdoor lighting, existing light (sun), fluorescent light.

3) Contrast: tonal contrast, color contrast.

4) Optics: explanation of lenses (normal, wide-angle, long-focus) and of how light travels through them.

5) Rule of thirds: The rule of thirds is one of the first things budding digital photographers learn in photography classes, and rightly so, as it is the basis for well-balanced and interesting shots (http://digital-photography-school.com/rule-of-thirds).

6) Camera orientation modes: portrait, landscape.
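The focus activity can be driven by the thin-lens equation, 1/f = 1/u + 1/v, where f is the focal length, u the object distance and v the lens-to-sensor distance. A minimal sketch, assuming a simple thin-lens model (real camera lenses are compound, so this is an approximation); `is_sharp` and its tolerance are hypothetical helpers for deciding when the child has found focus.

```python
def image_distance(focal_length_mm: float, object_distance_mm: float) -> float:
    """Lens-to-sensor distance v that brings an object at distance u into focus.

    Thin-lens equation: 1/f = 1/u + 1/v, so v = 1 / (1/f - 1/u).
    """
    if object_distance_mm <= focal_length_mm:
        raise ValueError("the object must be farther away than the focal length")
    return 1.0 / (1.0 / focal_length_mm - 1.0 / object_distance_mm)


def is_sharp(screen_mm: float, focal_length_mm: float, object_distance_mm: float,
             tolerance_mm: float = 0.5) -> bool:
    """True if the screen (sensor) sits close enough to the ideal image distance.

    `tolerance_mm` is a hypothetical, forgiving threshold for the game.
    """
    ideal = image_distance(focal_length_mm, object_distance_mm)
    return abs(screen_mm - ideal) <= tolerance_mm


if __name__ == "__main__":
    # With a 50 mm lens, closer objects need the lens farther from the sensor.
    for u in (10_000, 2_000, 500):
        print(f"object at {u / 1000:g} m -> lens {image_distance(50, u):.1f} mm from sensor")
    # object at 10 m -> lens 50.3 mm from sensor
    # object at 2 m -> lens 51.3 mm from sensor
    # object at 0.5 m -> lens 55.6 mm from sensor
```

The printed values show the point the activity is meant to teach: as the object comes closer, the lens has to move farther from the sensor to keep the picture sharp.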

Explain components: Give information about the camera, such as its types (pinhole, SLR, DSLR) and features, with a brief explanation of parts and settings like the aperture, shutter, ISO and lens cover and the role each plays, using on-screen text and audio explanation. For example, the aperture can be explained as "a hole or an opening through which light travels", using a few pictures (as a slideshow) with an audio explanation.

Play with parts: An activity to test the kids' knowledge of the parts (images) of the camera, using:

- true-or-false questions;
- giving the name of a camera part and asking them to choose between images;
- identifying the camera part from its image.
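The two quiz formats above could be generated from one table of part descriptions. A minimal sketch, assuming such a table exists; apart from the aperture line (taken from the proposal) the descriptions are illustrative wording, and the `<part>.png` image names are hypothetical placeholders.

```python
import random
from dataclasses import dataclass

# Illustrative descriptions only; the real wording (and audio) would be
# written with the mentor. The aperture line is taken from the proposal.
PART_DESCRIPTIONS = {
    "aperture": "a hole or an opening through which light travels",
    "shutter": "a cover that opens and closes to control how long light reaches the sensor",
    "lens": "a piece of curved glass that bends light to form an image on the sensor",
    "viewfinder": "a window you look through to frame your picture",
}


@dataclass
class TrueFalseQuestion:
    part: str
    description: str
    answer: bool  # True if `description` really belongs to `part`

    def text(self) -> str:
        return f"True or false: the {self.part} is {self.description}."

    def check(self, says_true: bool) -> bool:
        return says_true == self.answer


def make_true_false(rng: random.Random) -> TrueFalseQuestion:
    """Pair a part with its own description or, half the time, another part's."""
    part = rng.choice(list(PART_DESCRIPTIONS))
    if rng.random() < 0.5:
        return TrueFalseQuestion(part, PART_DESCRIPTIONS[part], True)
    other = rng.choice([p for p in PART_DESCRIPTIONS if p != part])
    return TrueFalseQuestion(part, PART_DESCRIPTIONS[other], False)


def make_pick_the_image(rng: random.Random, n_choices: int = 3) -> tuple[str, list[str]]:
    """Name one part and offer `n_choices` images to choose from.

    Image file names ("<part>.png") are hypothetical placeholders.
    """
    answer = rng.choice(list(PART_DESCRIPTIONS))
    others = [p for p in PART_DESCRIPTIONS if p != answer]
    options = rng.sample(others, n_choices - 1) + [answer]
    rng.shuffle(options)
    return answer, [f"{p}.png" for p in options]
```

Passing a `random.Random` instance in, rather than using the module-level functions, keeps question generation reproducible for testing while still giving kids varied questions.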

Simulation

Switching between virtual images: Provide a few background images (say 4 to 5 images of scenery, toys, animals, etc.) and let the kids choose one of them as the subject to photograph with the camera.

Focus, frame, shoot: The kids adjust the focal length, optical zoom, lighting and shutter speed, and the captured image is rendered according to those adjustments. The image can be adjusted using the ImageEnhance module of PIL (Python Imaging Library).
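One way to turn the child's settings into a rendered "photo" is sketched below, assuming Pillow (the maintained PIL fork) is available. Exposure uses the standard relation that captured light scales with shutter time and ISO and inversely with the square of the f-number; the reference settings, the focus-to-blur mapping and the crop-based zoom are hypothetical modelling choices. Brightness comes from `ImageEnhance.Brightness` as the proposal suggests, while blur uses `ImageFilter.GaussianBlur`, a different Pillow module.

```python
# Assumed reference exposure: the settings at which an unmodified background
# image counts as "correctly exposed". Purely a modelling choice.
REFERENCE = {"shutter_s": 1 / 125, "f_number": 8.0, "iso": 100}


def exposure_factor(shutter_s: float, f_number: float, iso: float,
                    ref: dict = REFERENCE) -> float:
    """Brightness multiplier relative to the reference settings.

    Captured light grows linearly with shutter time and ISO and falls with the
    square of the f-number: doubling the time or ISO doubles the factor, and
    going from f/8 to f/16 quarters it.
    """
    return ((shutter_s / ref["shutter_s"])
            * (ref["f_number"] / f_number) ** 2
            * (iso / ref["iso"]))


def blur_radius(focus_m: float, subject_m: float, strength: float = 4.0) -> float:
    """Hypothetical mapping from focus error to a Gaussian blur radius in pixels.

    The error is measured in dioptres (1/metres), so misfocusing on a near
    subject blurs more than the same mistake on a far one.
    """
    return strength * abs(1 / focus_m - 1 / subject_m)


def zoom_box(width: int, height: int, zoom: float) -> tuple[int, int, int, int]:
    """Centred crop box approximating zoom (a digital crop, not real optics)."""
    w, h = round(width / zoom), round(height / zoom)
    left, top = (width - w) // 2, (height - h) // 2
    return left, top, left + w, top + h


def render_shot(path_in: str, path_out: str, *, shutter_s: float, f_number: float,
                iso: float, focus_m: float, subject_m: float, zoom: float = 1.0) -> None:
    """Apply the child's settings to a background image and save the 'photo'."""
    # Imported here so the maths above can be used (and tested) without Pillow.
    from PIL import Image, ImageEnhance, ImageFilter

    with Image.open(path_in) as original:
        img = original.convert("RGB")  # RGB avoids mode issues with palette images
    if zoom > 1:  # zooming out (< 1) cannot be faked by cropping
        img = img.crop(zoom_box(img.width, img.height, zoom)).resize(img.size)
    img = ImageEnhance.Brightness(img).enhance(exposure_factor(shutter_s, f_number, iso))
    radius = blur_radius(focus_m, subject_m)
    if radius > 0:
        img = img.filter(ImageFilter.GaussianBlur(radius))
    img.save(path_out)
```

Keeping the camera maths in plain functions separate from the Pillow calls means the same model could later drive a live preview in the viewfinder.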

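The album feature (saving a sequence of pictures in an sqlite3 database and replaying them as a slideshow) could store its data roughly as follows. This is a minimal sketch using only Python's standard `sqlite3` module; the table layout and function names are hypothetical, and the GUI would still need to advance the slideshow on a timer.

```python
import sqlite3
from collections.abc import Iterator

# Hypothetical schema: one row per photo, grouped by album name.
SCHEMA = """
CREATE TABLE IF NOT EXISTS photo (
    id       INTEGER PRIMARY KEY AUTOINCREMENT,
    album    TEXT NOT NULL,
    taken_at TEXT NOT NULL DEFAULT CURRENT_TIMESTAMP,
    image    BLOB NOT NULL
)
"""


def open_album_db(path: str = ":memory:") -> sqlite3.Connection:
    """Open (or create) the album database and make sure the table exists."""
    conn = sqlite3.connect(path)
    conn.execute(SCHEMA)
    return conn


def save_photo(conn: sqlite3.Connection, album: str, image_bytes: bytes) -> int:
    """Store one encoded image (e.g. PNG bytes) and return its row id."""
    with conn:  # commits on success, rolls back on error
        cur = conn.execute(
            "INSERT INTO photo (album, image) VALUES (?, ?)", (album, image_bytes)
        )
    return cur.lastrowid


def slideshow(conn: sqlite3.Connection, album: str) -> Iterator[bytes]:
    """Yield the album's images in the order they were saved."""
    rows = conn.execute(
        "SELECT image FROM photo WHERE album = ? ORDER BY id", (album,)
    )
    for (image,) in rows:
        yield image
```

Ordering by the auto-increment `id` rather than `taken_at` avoids ties when several pictures are taken within the same second.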
Viewing previous images: Kids can take a sequence of pictures, save them as an album, and watch previously taken photos as a slideshow. Images will be stored in an sqlite3 database, then fetched and shown as a slideshow.

What benefits does it have for GNOME and its community? Following the KISS principle, this simple application helps users, children and adults alike, understand the components and workings of a digital camera. As a photography enthusiast, this would be my humble contribution to help users learn and have fun.

Why would you like to complete this project? My passion for photography and my passion for FOSS finally meet in this project. Through it I would like to kick-start children's inquisitive nature and share my knowledge of photography and cameras.

How do you plan to achieve completion of your project?

Milestone 1: April 24 - May 20 (community bonding period)

- Discuss the activity ideas with the mentor.
- Finalize the list of activities to be implemented under GCompris Camsim.
- Study the documentation on PyGoocanvas, PyGTK and the Python GCompris API.
- Set up the development environment.
- Study the game sequence and the interaction between the GCompris core and the activity plugin.
- Get familiar with writing a GCompris activity using the code snippets of the Python test and Python template activities.
- Assemble skins, sounds and content.

Milestone 2: May 21 - July 9 (interim period)

- Start coding.
- Design the UI for the activities.
- Build the algorithms for the two activities.
- Integrate the activity plugin code with the UI.

Milestone 3: mid-term evaluation

- Submit two activities, the camera simulator and the camera explainer, along with documentation.

Milestone 4: July 14 - August 12 (interim period)

- Design the UI for the Art camera activity.
- Document the work, debug, and reduce code complexity.

Milestone 5: August 13 - August 20 (pencils down)

- Testing, documentation and debugging.
- Final release.

What will be shown at mid-term [1]? The two activities, the camera simulator and the camera explainer, along with documentation.

Why are you the right person to work on this project?

About me: I am Lavanya Gunasekaran, a first-year postgraduate student at Anna University, Chennai, India. I consider myself fortunate to have come from a rural background and made it to one of the premier technology institutions in India. In a way, I have had the opportunity to see the best of both worlds: the laid-back lifestyle and serene landscape of my native town, and the bustling technological hub of South India, Chennai. These contrasting settings naturally stoked my interest in photography, and I try to capture those moments wherever I come across them.

I have been a FOSS enthusiast for more than a year now. The first thing that came to mind when I learnt about FOSS was its potential to change the technological landscape, and thus the quality and standard of life, throughout India. I am committed to contributing to FOSS and promoting my ideas, passion and hobby through it. This project is one of my many steps towards that goal.

I am well versed in Python, C, C++ and Java. I have worked with MySQL, SQLite, Ingres and PostgreSQL, and have coded a few Flash games. I have also worked as a campus ambassador for www.twenty19.com (a website for student opportunities) and www.knowafest.com (a website for campus festivals). I developed leadership abilities by taking on the role of Event Coordinator for my department festival (OLAP). Over the years I have developed patience, perseverance and confidence, which I can apply effectively to this project and beyond.
- GitHub: https://github.com/laya
- IRC: lavaa at freenode
- Email: lavanyagunasekar@gmail.com
- Twitter: @lava_g
- Website: http://lava.co.nr

What are your past experiences with the open source world as a user and as a contributor?

- Attended the Chennai Wikimedia Hackathon and developed a scraper for Wiki-Content-Downloader.
- Developed an iris recognition project in Java, which won my college's best project award of the year.
- Active member of the team developing my college's website (in PHP).

Please include a link to the bug you fixed for the GNOME module your proposal is related to.

Bug 665258: resolved the problem that GCompris crashes if the database is read-only, using the following patch.

For any clarifications, please feel free to contact me. Thank you for spending your precious time reading my proposal.