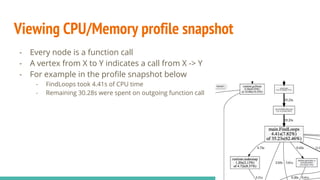

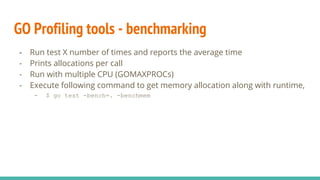

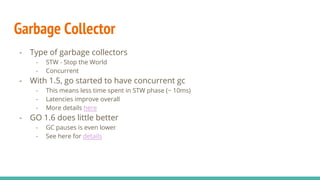

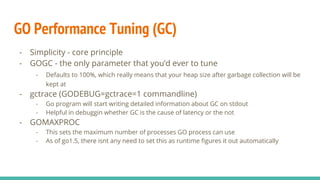

The document discusses performance tooling in Go, emphasizing two practices for understanding how an application behaves at scale: measuring latency with percentiles, and profiling. It introduces Go's profiling tools, such as pprof, which captures CPU and memory usage, and offers insights on garbage collection and performance tuning. Its recommendations include using simpler data structures, reusing objects, and enabling web pprof to inspect live processes.