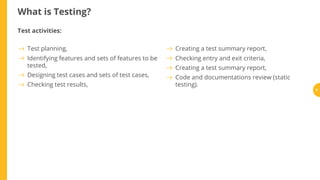

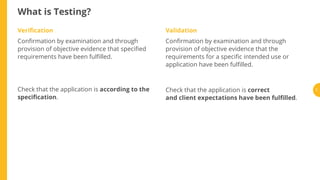

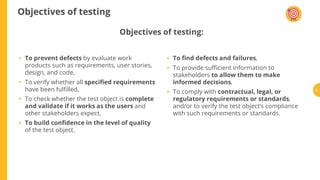

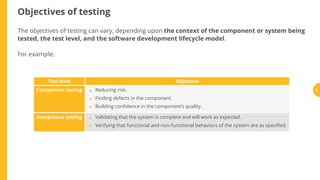

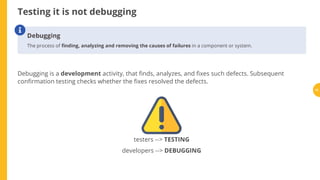

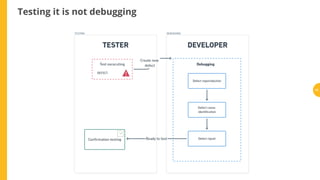

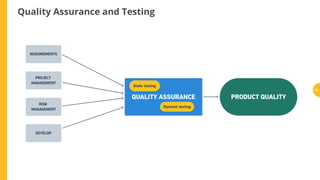

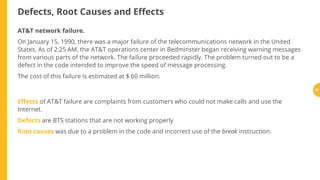

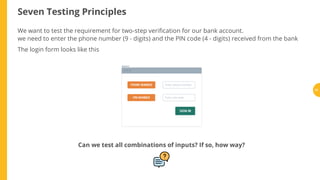

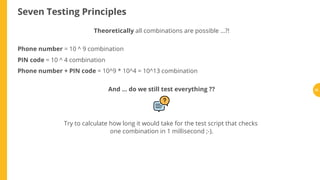

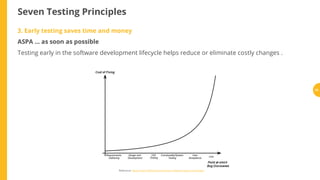

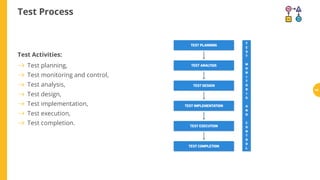

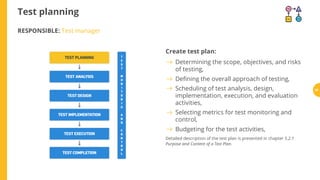

Testing is the process of verifying that software or a system meets its requirements and is fit for purpose. It involves planning, preparing, and evaluating components and systems through activities such as test planning, design, implementation, execution, and completion. Testing aims to prevent defects, verify that requirements are fulfilled, validate that stakeholder expectations are met, build confidence in quality, and find failures. Although testing cannot prove the absence of defects, it helps reduce risk and cost when done systematically throughout the development lifecycle. Key principles of testing include that exhaustive testing is impossible, that early testing saves time and money, that defects cluster together, and that testing must be tailored to its context.
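
The principle that exhaustive testing is impossible can be made concrete with a little arithmetic. The sketch below is illustrative only: the function under test (two 32-bit integer arguments) and the throughput of one billion tests per second are assumptions chosen for the example, not figures from the source.

```python
# Hypothetical scenario: a function that takes two 32-bit integer arguments.
BITS_PER_ARG = 32
NUM_ARGS = 2
TESTS_PER_SECOND = 1_000_000_000  # assumed, deliberately optimistic throughput

SECONDS_PER_YEAR = 365.25 * 24 * 3600


def exhaustive_test_years(bits_per_arg, num_args, tests_per_second):
    """Return (input combinations, years needed to run every one of them)."""
    combinations = 2 ** (bits_per_arg * num_args)
    seconds = combinations / tests_per_second
    return combinations, seconds / SECONDS_PER_YEAR


combinations, years = exhaustive_test_years(
    BITS_PER_ARG, NUM_ARGS, TESTS_PER_SECOND
)
print(f"{combinations:,} input combinations, about {years:,.0f} years to run")
```

Even under these generous assumptions, covering every input pair would take more than five centuries, which is why test effort is usually focused through risk analysis and test design techniques rather than by trying every combination.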