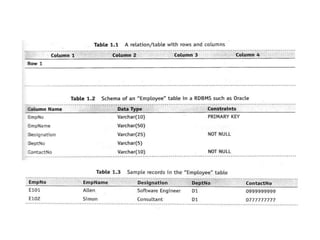

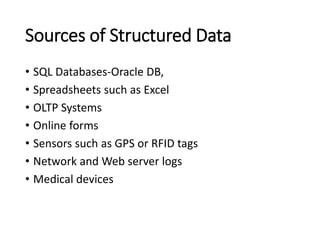

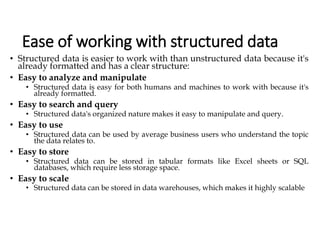

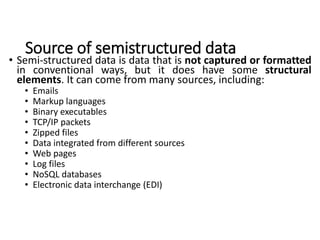

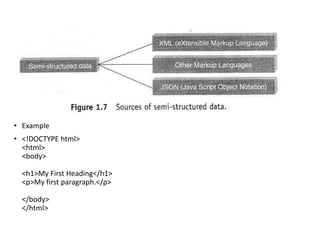

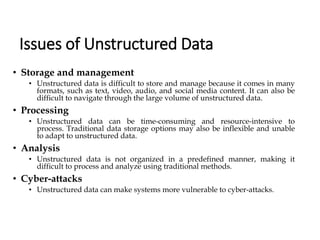

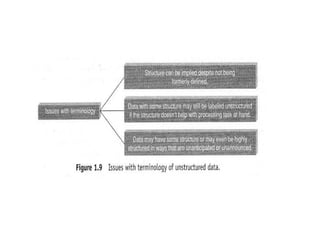

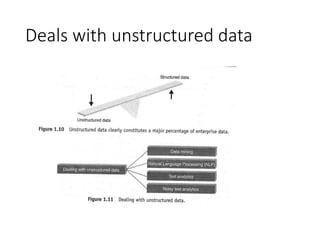

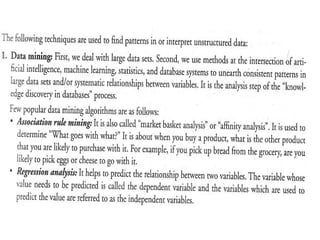

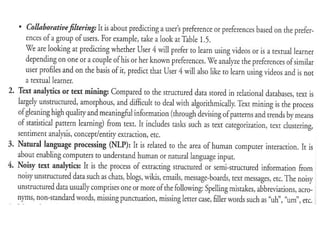

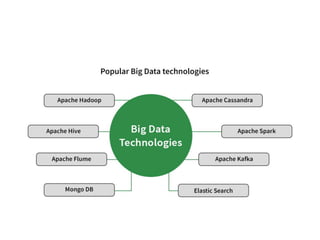

The document discusses digital data types, classifying them as structured, semi-structured, or unstructured and highlighting the characteristics and challenges of each. It also introduces big data and big data analytics, emphasizing that the high volume, velocity, and variety of the data being generated call for innovative processing solutions. Key technologies for working with big data include Hadoop, Tableau, and machine learning algorithms, which are used to gain insights and make informed decisions.
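
As a rough illustration of the three data categories named above, the following Python sketch handles one tiny sample of each. All sample values and variable names here are hypothetical and are not taken from the document; the sketch only suggests how the amount of processing needed tends to grow as the data becomes less structured.

```python
import csv
import io
import json
import re

# Structured: a fixed schema, so every row has the same columns.
# (Hypothetical sample data.)
structured_csv = "id,name,amount\n1,Asha,250\n2,Ben,410\n"
rows = list(csv.DictReader(io.StringIO(structured_csv)))
total_amount = sum(int(row["amount"]) for row in rows)

# Semi-structured: self-describing keys, but fields can differ per record.
semi_structured_json = (
    '[{"id": 1, "tags": ["new"]}, {"id": 2, "email": "ben@example.com"}]'
)
records = json.loads(semi_structured_json)
all_keys = sorted({key for record in records for key in record})

# Unstructured: free text with no schema; any structure has to be extracted.
unstructured_text = "Ben called on 2024-03-05 to say the order arrived late."
dates = re.findall(r"\d{4}-\d{2}-\d{2}", unstructured_text)

print(total_amount)
print(all_keys)
print(dates)
```

The structured sample can be aggregated directly by column name, the semi-structured sample first needs its varying keys discovered, and the unstructured sample yields usable fields only after pattern matching.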