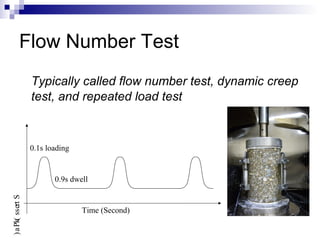

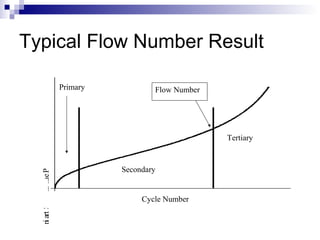

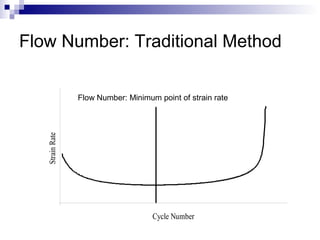

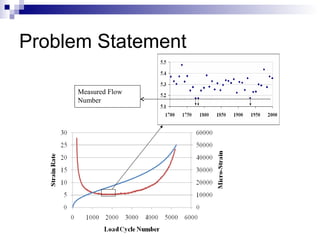

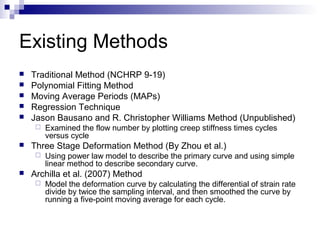

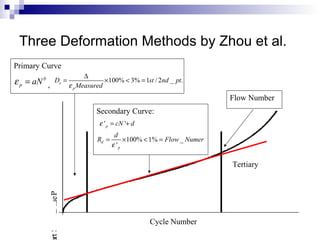

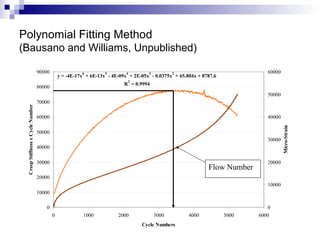

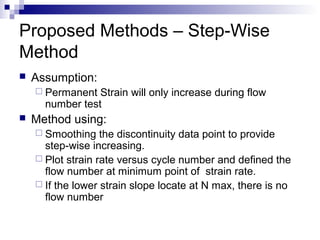

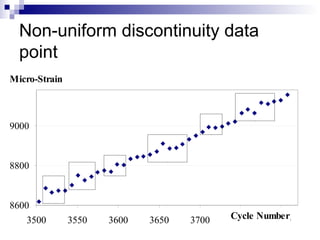

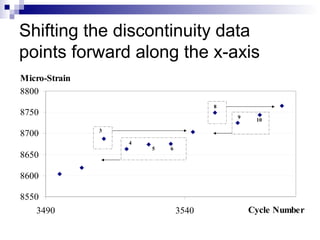

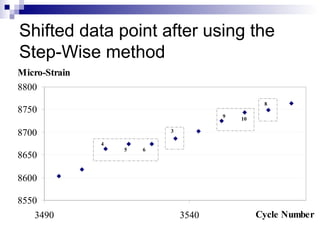

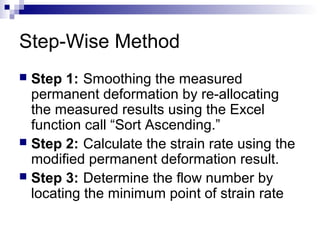

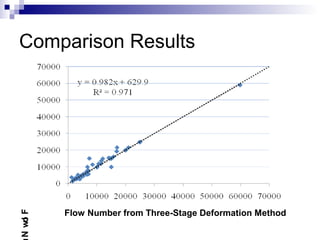

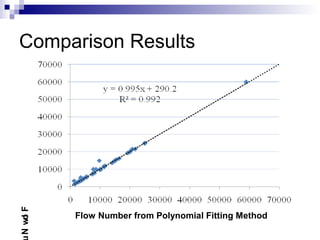

The document presents a stepwise method for determining the onset of tertiary flow in repeated load tests, addressing limitations of existing methods. It evaluates several existing techniques, including polynomial fitting and three-stage deformation, and shows that the proposed method gives consistent results. Flow numbers obtained with the proposed method correlate strongly with those from traditional methods, which supports the validity of the new approach.
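
For orientation, the polynomial-fitting technique mentioned above can be sketched as follows. This is not the proposed stepwise method, whose steps are not spelled out in this summary. It is a minimal illustration that assumes the common convention of taking the flow number as the load cycle at which the permanent strain rate is lowest. The function name `flow_number_polyfit`, the polynomial degree, and the synthetic strain curve are all hypothetical choices made for the example.

```python
import numpy as np
from numpy.polynomial import polynomial as P


def flow_number_polyfit(cycles, strain, degree=4):
    """Estimate the flow number as the cycle with the minimum fitted strain rate.

    Assumption: flow number = cycle where d(strain)/d(cycle) is lowest,
    evaluated on a polynomial fitted to the permanent strain curve.
    """
    cycles = np.asarray(cycles, dtype=float)
    strain = np.asarray(strain, dtype=float)

    # Rescale cycles to (0, 1] so the least-squares fit stays well conditioned.
    scale = cycles.max()
    x = cycles / scale

    # Fit the permanent strain curve; coefficients are ordered low to high.
    coeffs = P.polyfit(x, strain, degree)

    # Differentiate the fitted polynomial and convert back to strain per cycle.
    rate = P.polyval(x, P.polyder(coeffs)) / scale

    # The cycle with the lowest fitted strain rate marks the start of tertiary flow.
    return int(cycles[int(np.argmin(rate))])


# Synthetic, noise-free strain curve (microstrain) used only for illustration.
# It is a cubic built so that its strain rate is lowest at cycle 375.
cycles = np.arange(1, 1001)
x = cycles / 1000.0
strain = 6000 * x - 9000 * x**2 + 8000 * x**3

fn = flow_number_polyfit(cycles, strain)
print("Estimated flow number:", fn)
```

On measured data the fitted strain rate can oscillate, so the location of the minimum depends on the chosen polynomial degree. That sensitivity is one example of the kind of limitation in existing methods that the summary refers to.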