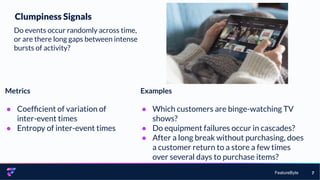

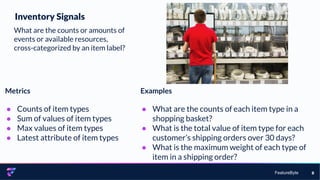

The document discusses the importance of feature engineering in machine learning, emphasizing that well-designed features improve model performance and the decisions that depend on it, because they reflect a better understanding and handling of the data. It presents signal types that can be derived from data, such as clumpiness, inventory, similarity, stability, change, diversity, location, regularity, recency, frequency, and monetary signals, each with metrics and practical examples. It concludes that these signal types and entities can inspire and guide the creation of more robust features in data analysis.
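
As an illustration of three of the signal types named above (recency, frequency, and monetary), the following is a minimal sketch of how such features might be computed per customer. The transaction data, the `rfm_features` function, and the choice of metrics (days since last purchase, purchase count, total spend) are assumptions made for this example, not definitions taken from the document.

```python
from datetime import date

# Hypothetical transactions: (customer_id, purchase_date, amount).
transactions = [
    ("a", date(2024, 1, 5), 20.0),
    ("a", date(2024, 3, 1), 35.0),
    ("b", date(2024, 2, 10), 100.0),
    ("a", date(2024, 3, 20), 15.0),
]


def rfm_features(rows, as_of):
    """Aggregate recency, frequency, and monetary signals per customer.

    recency_days: days between the customer's last purchase and `as_of`
    frequency:    number of purchases
    monetary:     total amount spent
    """
    acc = {}
    for customer_id, day, amount in rows:
        entry = acc.setdefault(
            customer_id, {"last": day, "frequency": 0, "monetary": 0.0}
        )
        entry["last"] = max(entry["last"], day)  # keep the most recent date
        entry["frequency"] += 1
        entry["monetary"] += amount
    return {
        customer_id: {
            "recency_days": (as_of - entry["last"]).days,
            "frequency": entry["frequency"],
            "monetary": entry["monetary"],
        }
        for customer_id, entry in acc.items()
    }


features = rfm_features(transactions, as_of=date(2024, 4, 1))
print(features)
```

Here the customer is the entity and each signal type becomes one column of the resulting feature set. The same pattern could be applied to other entities (products, stores, sessions) by changing the grouping key.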