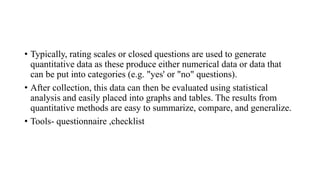

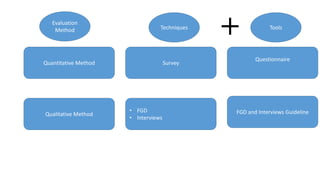

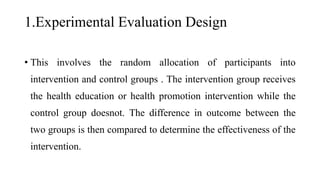

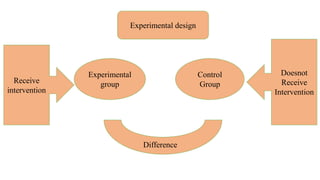

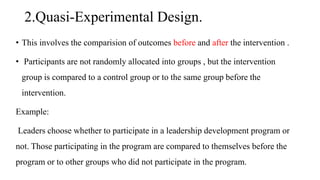

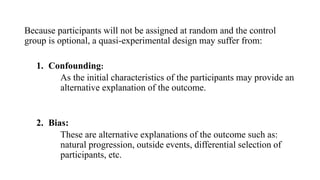

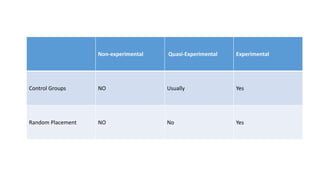

The document outlines three types of evaluation design (experimental, quasi-experimental, and observational) and details their methods and differences. Experimental design randomly allocates participants to intervention and control groups. Quasi-experimental design assigns participants non-randomly and directly compares outcomes before and after the intervention. Observational design records behaviors and outcomes without intervening. The document also discusses evaluation methods, including quantitative and qualitative approaches.

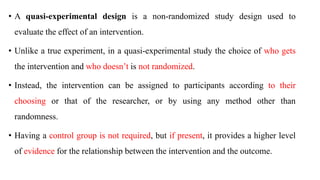

![Comparison of experimental and quasi-experimental studies](https://image.slidesharecdn.com/evaluationdesignppt-240201133559-760b7a72/85/Evaluation-Design-presentation-public-health-10-320.jpg)

| | Experimental Study (a.k.a. Randomized Controlled Trial) | Quasi-Experimental Study |
| --- | --- | --- |
| Objective | Evaluate the effect of an intervention or a treatment | Evaluate the effect of an intervention or a treatment |
| How participants get assigned to groups? | Random assignment | Non-random assignment (participants get assigned according to their choosing or that of the researcher) |
| Is there a control group? | Yes | Not always (although, if present, a control group will provide better evidence for the study results) |
| Is there any room for confounding? | No (although check Manson et al. for a detailed discussion on post-randomization confounding in randomized controlled trials) | Yes (however, statistical techniques can be used to study causal relationships in quasi-experiments) |
| Level of evidence | A randomized trial is at the highest level in the hierarchy of evidence | A quasi-experiment is one level below the experimental study in the hierarchy of evidence [source] |
| Advantages | Minimizes bias and confounding | Can be used in situations where an experiment is not ethically or practically feasible; can work with smaller sample sizes than randomized trials |
| Limitations | High cost (as it generally requires a large sample size); ethical limitations; generalizability issues; sometimes practically infeasible | Lower ranking in the hierarchy of evidence as losing the power of randomization causes the study to be more susceptible to bias and confounding |
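
To make the random-assignment row concrete, here is a minimal Python sketch of how participants might be split into intervention and control groups by chance alone. The function name `randomize`, the participant IDs, and the even 50/50 split are illustrative assumptions, not something taken from the slides; real trials often use stratified or block randomization instead.

```python
import random


def randomize(participants, seed=None):
    """Split participants into two groups purely by chance.

    Hypothetical helper for illustration: a simple 50/50 shuffle,
    not a stratified or block randomization scheme.
    """
    rng = random.Random(seed)      # a seed makes the allocation reproducible
    shuffled = list(participants)  # copy so the caller's list is untouched
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return {"intervention": shuffled[:half], "control": shuffled[half:]}


# Hypothetical participant IDs P01..P20
participants = [f"P{i:02d}" for i in range(1, 21)]
groups = randomize(participants, seed=42)
```

In a quasi-experimental study this step is absent: group membership follows the choice of the participants or the researcher, which is why confounding has to be addressed statistically afterwards.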