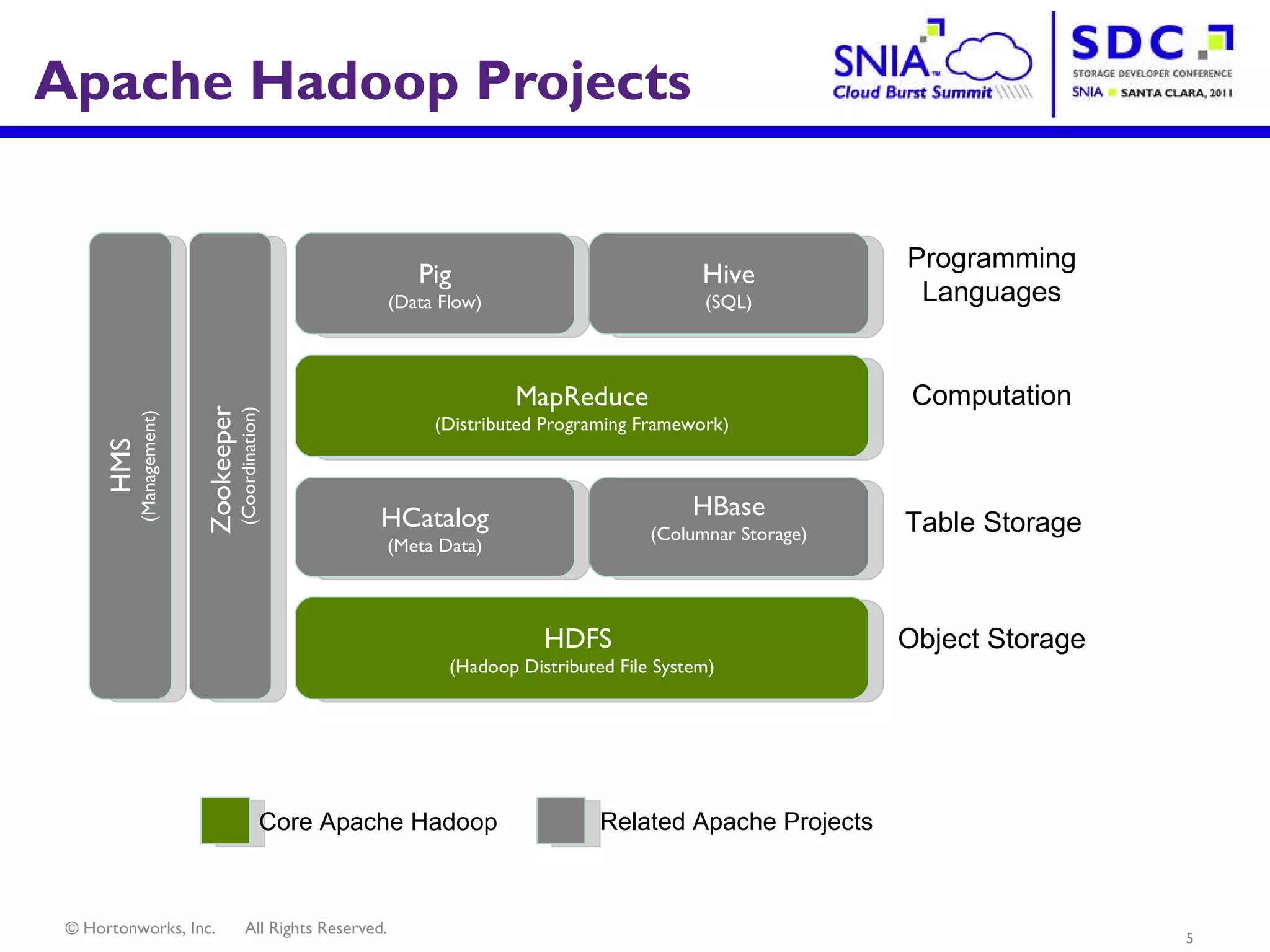

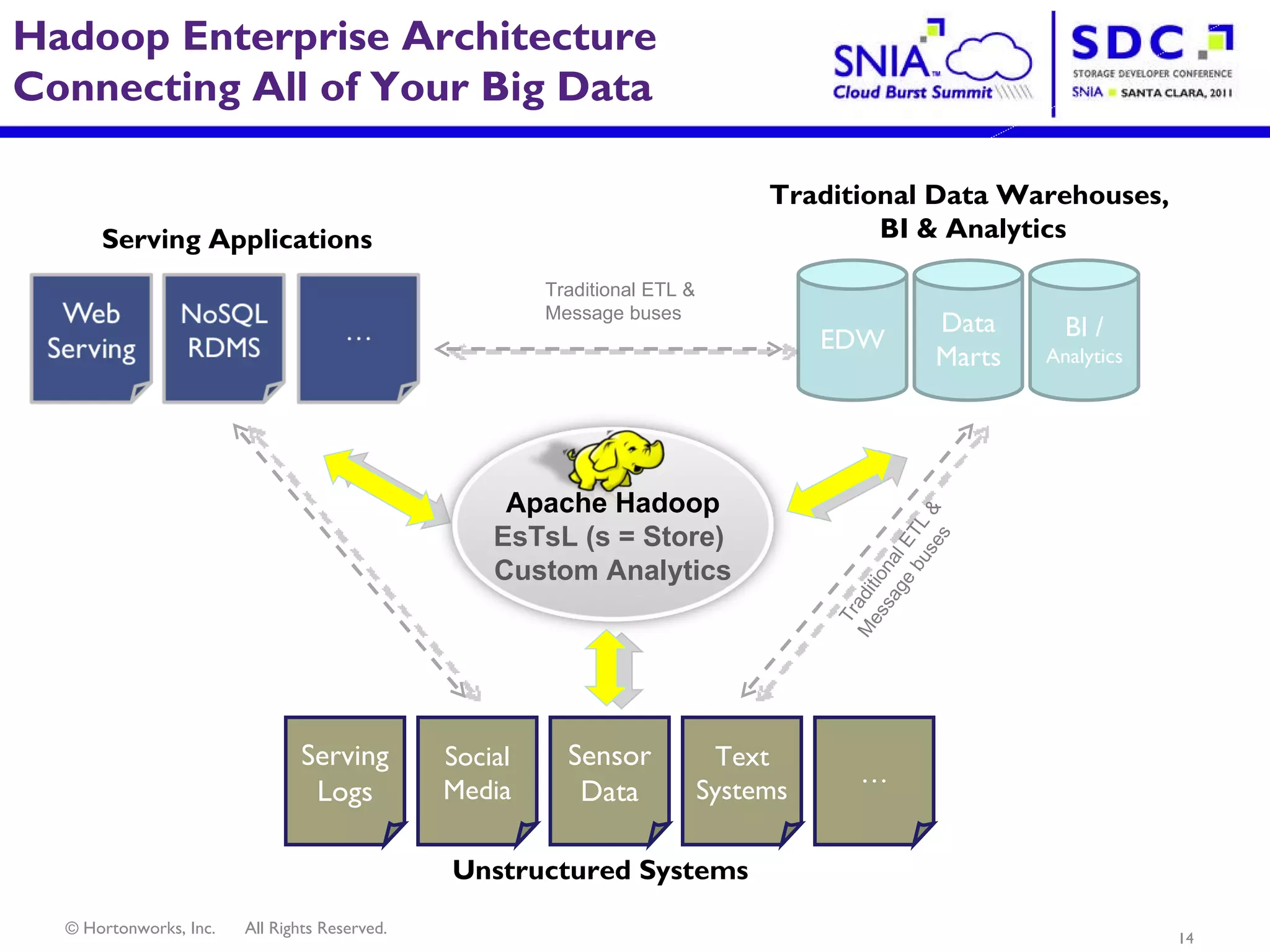

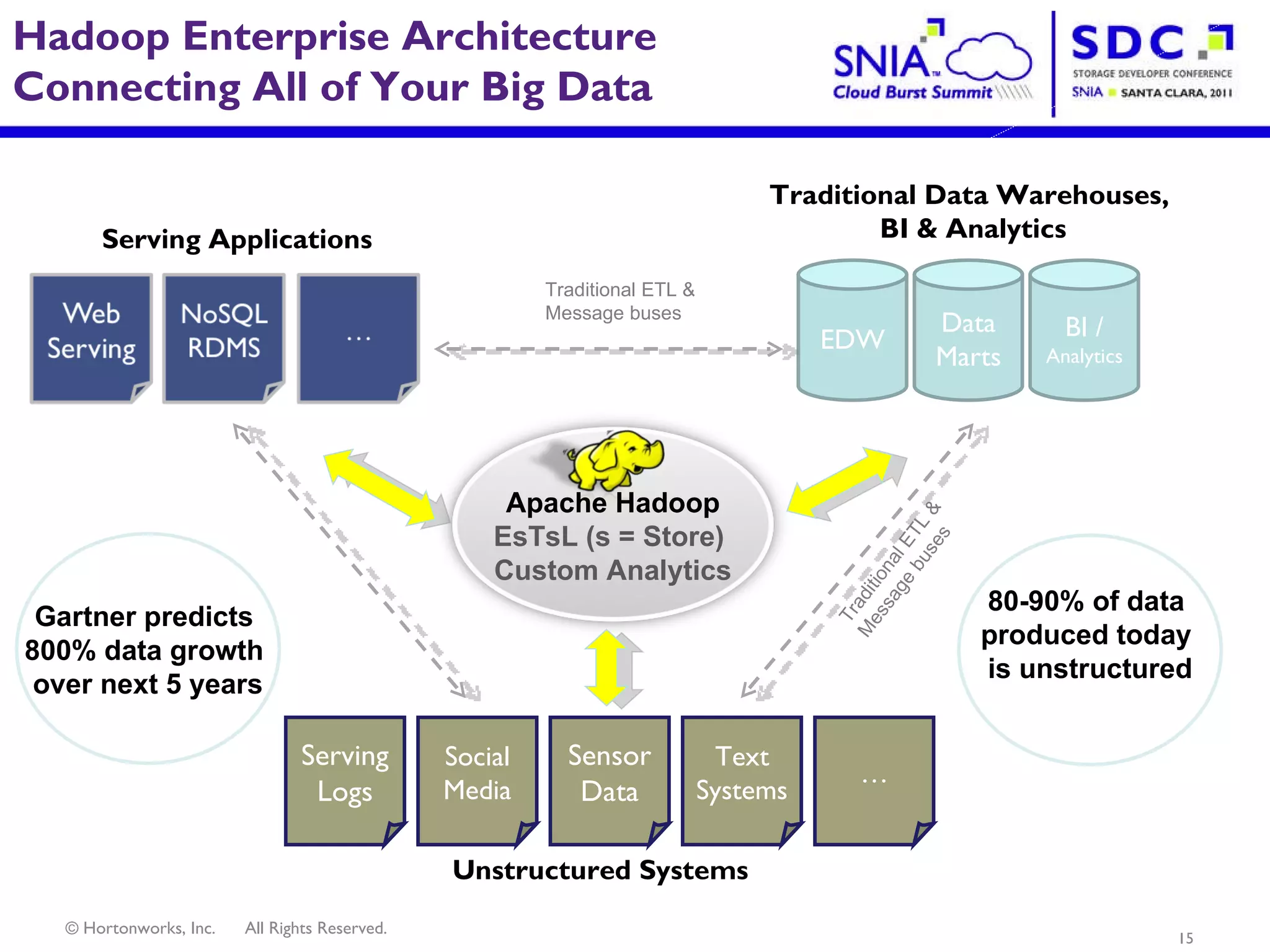

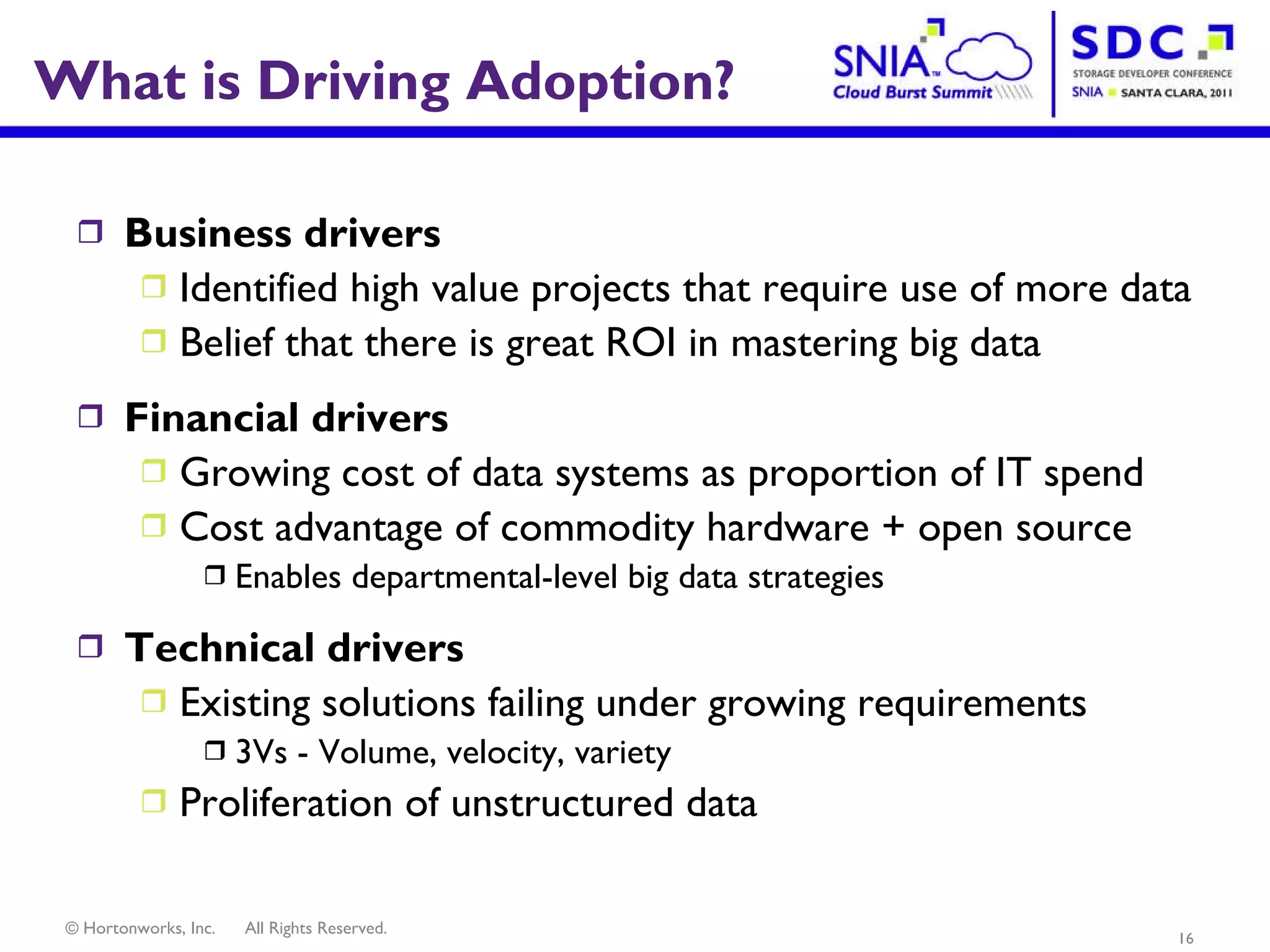

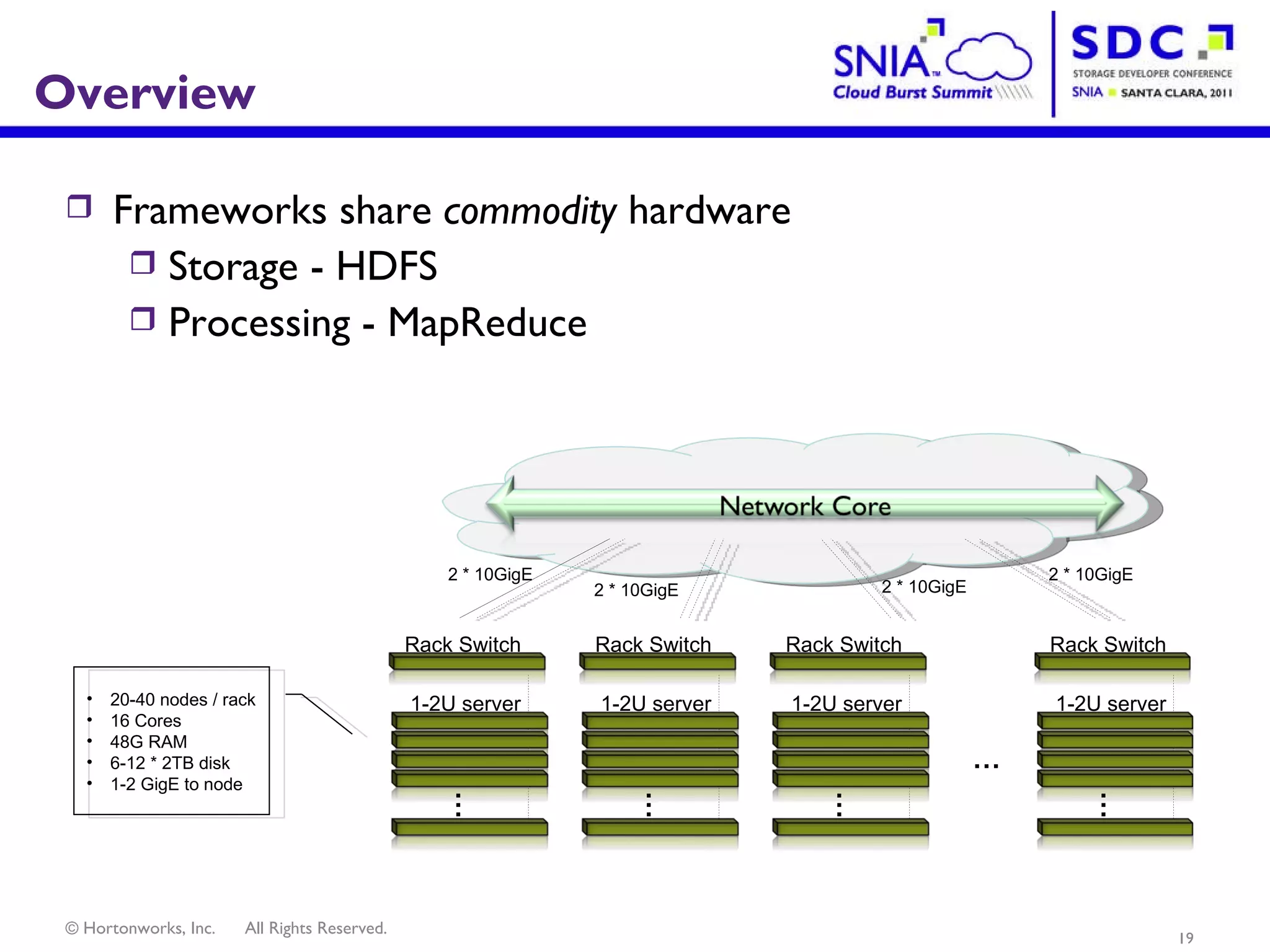

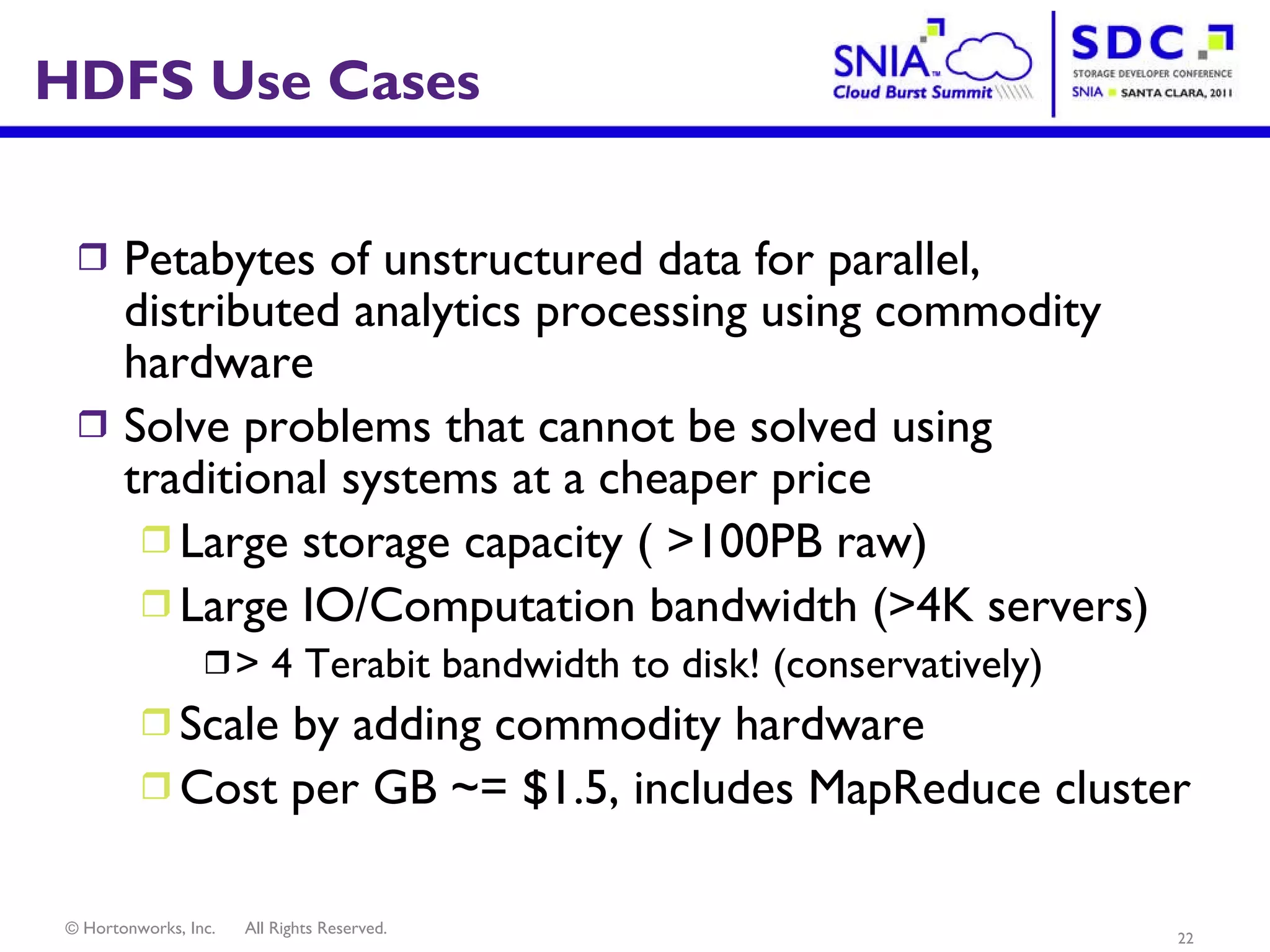

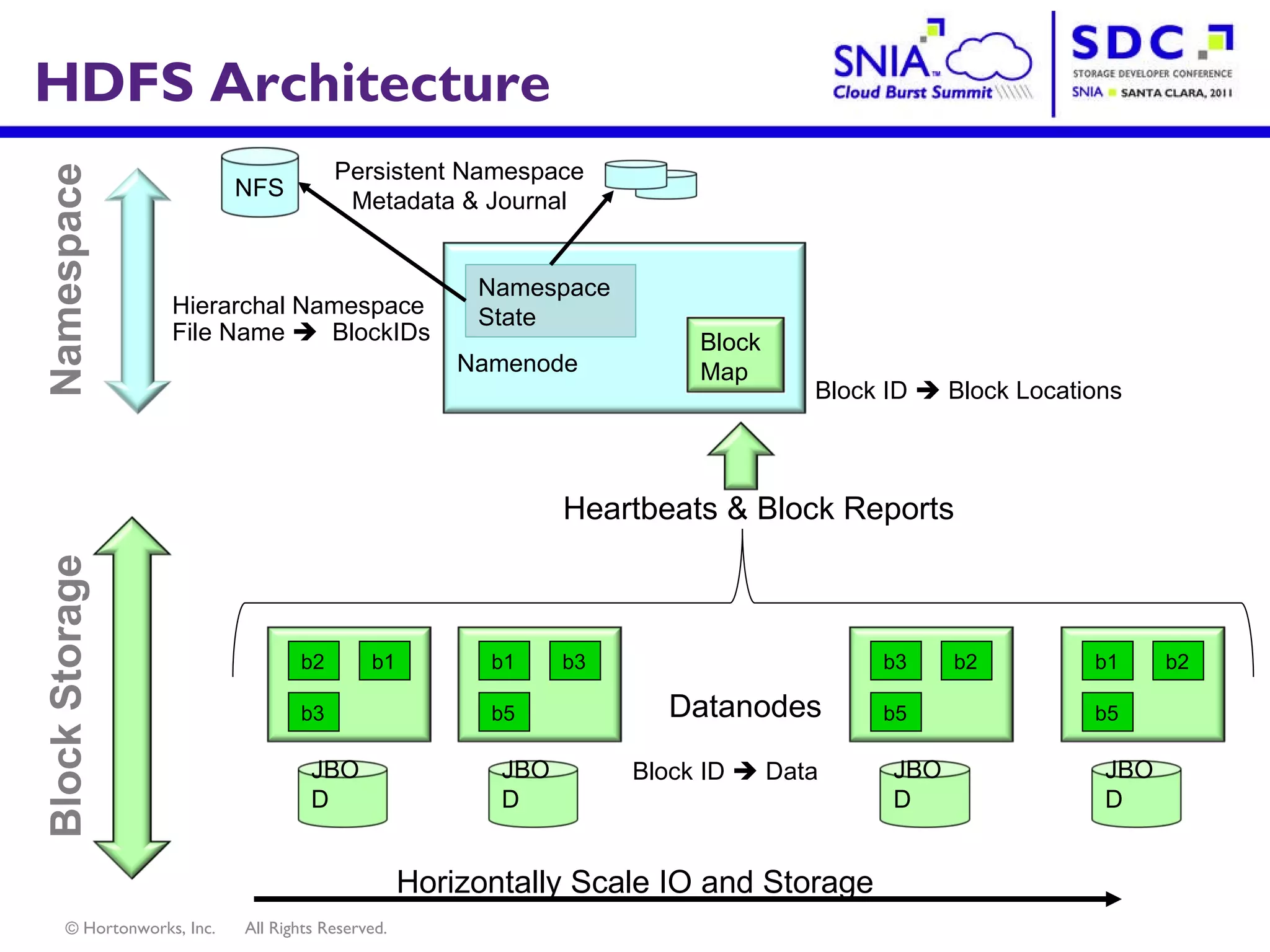

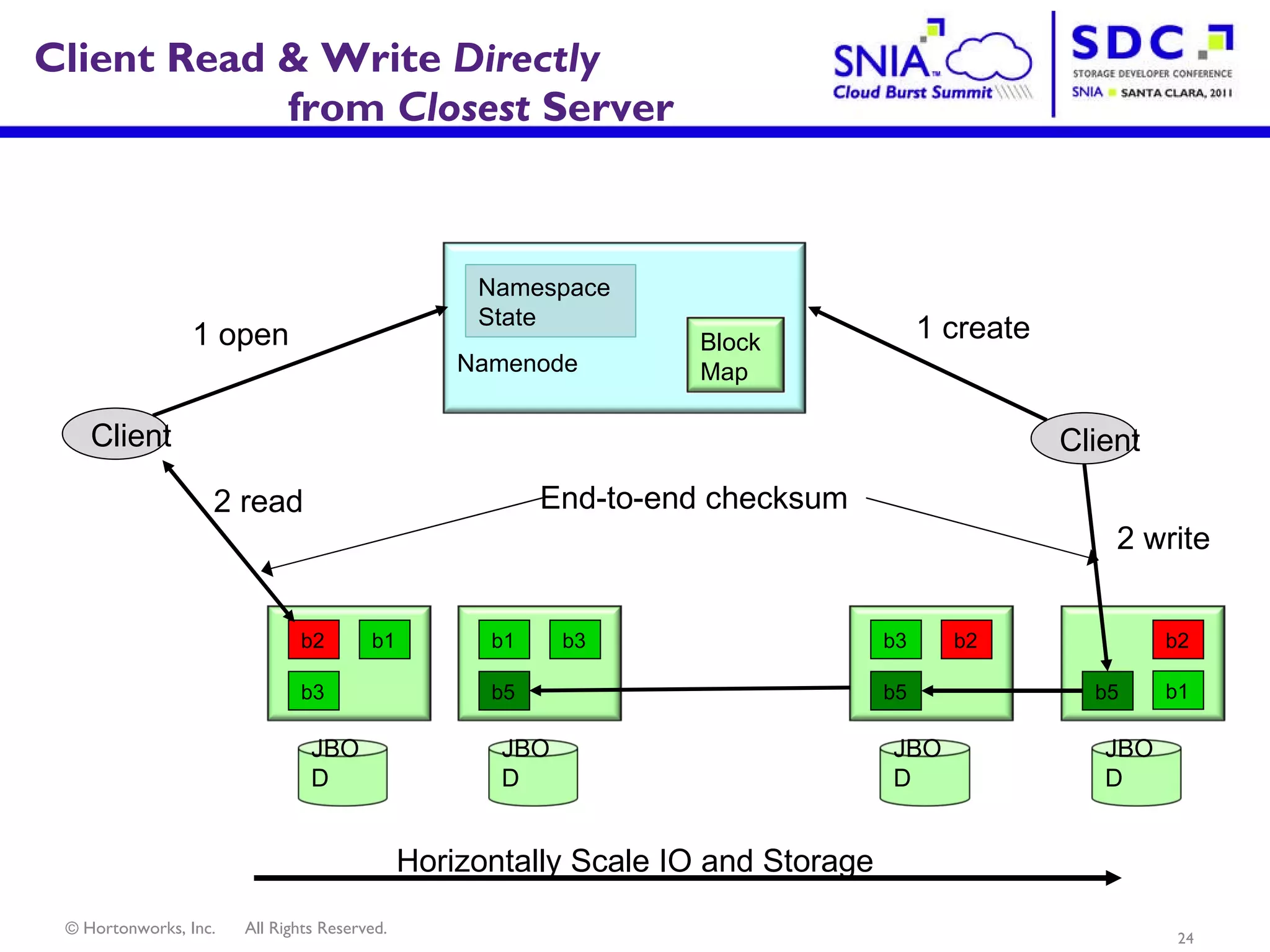

- Apache Hadoop is an open-source software framework for distributed storage and processing of large datasets across clusters of computers. It allows for the reliable storage of petabytes of data and large-scale computations across commodity hardware.

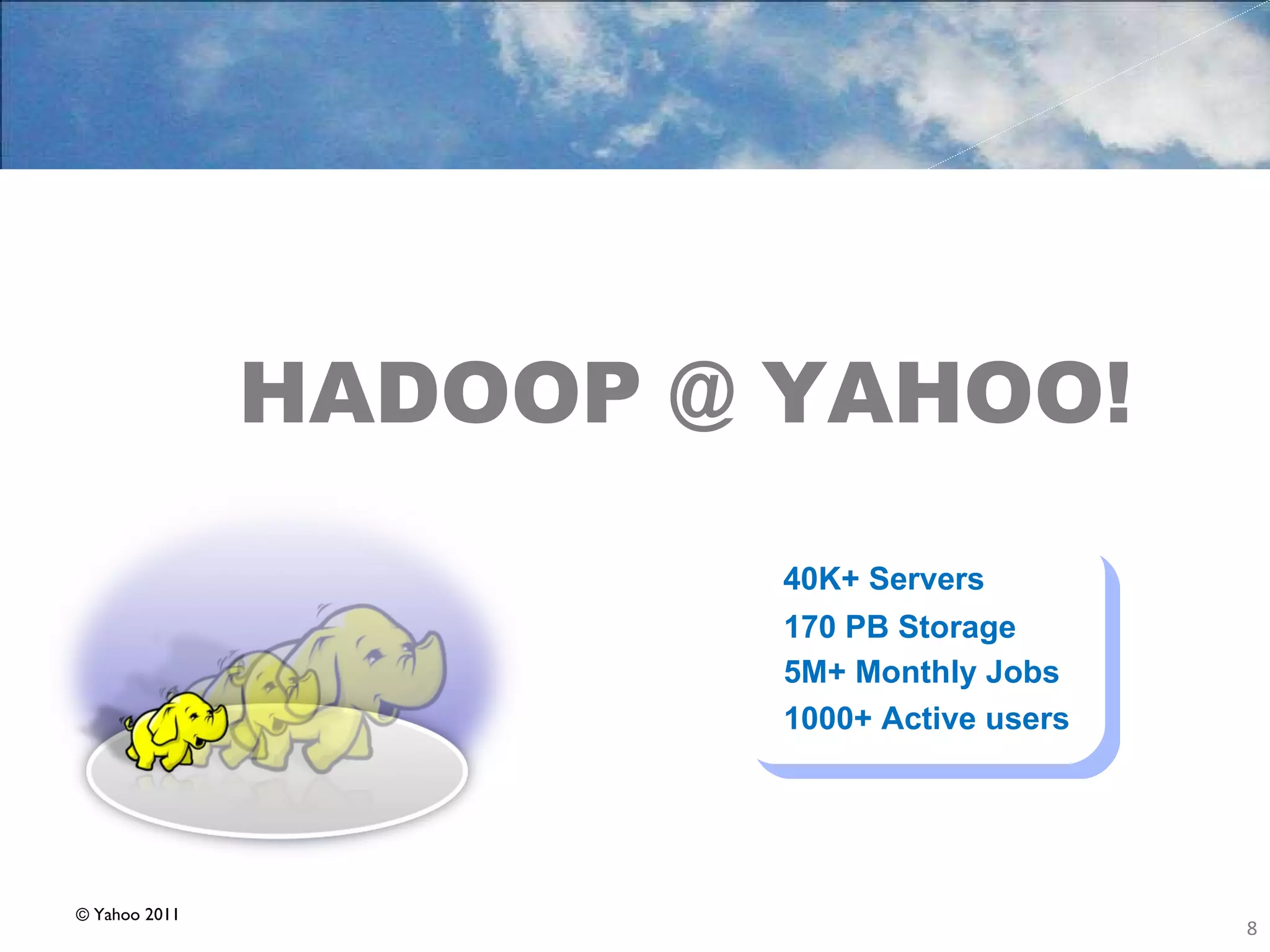

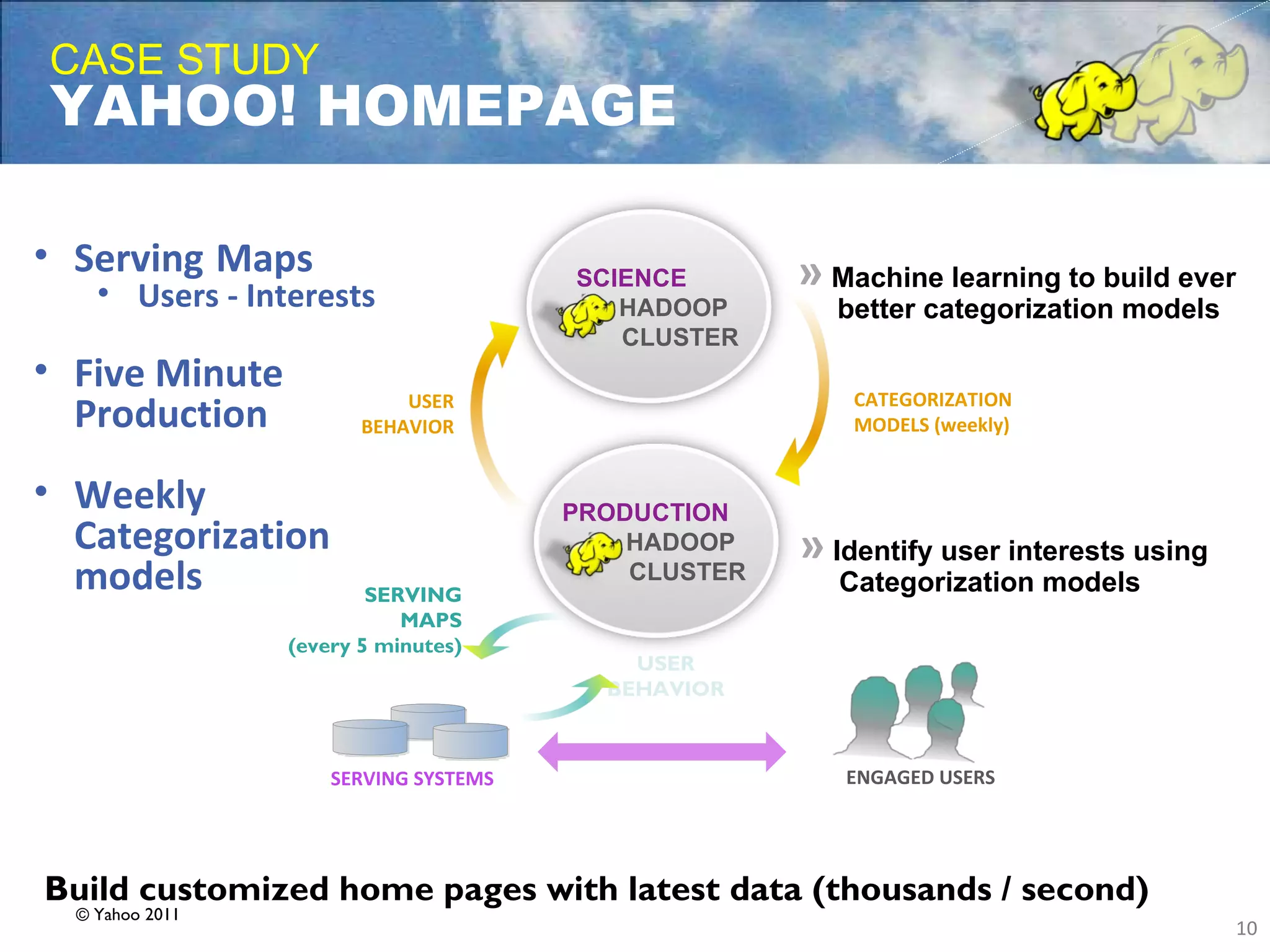

- Apache Hadoop is used widely by internet companies to analyze web server logs, power search engines, and gain insights from large amounts of social and user data. It is also used for machine learning, data mining, and processing audio, video, and text data.

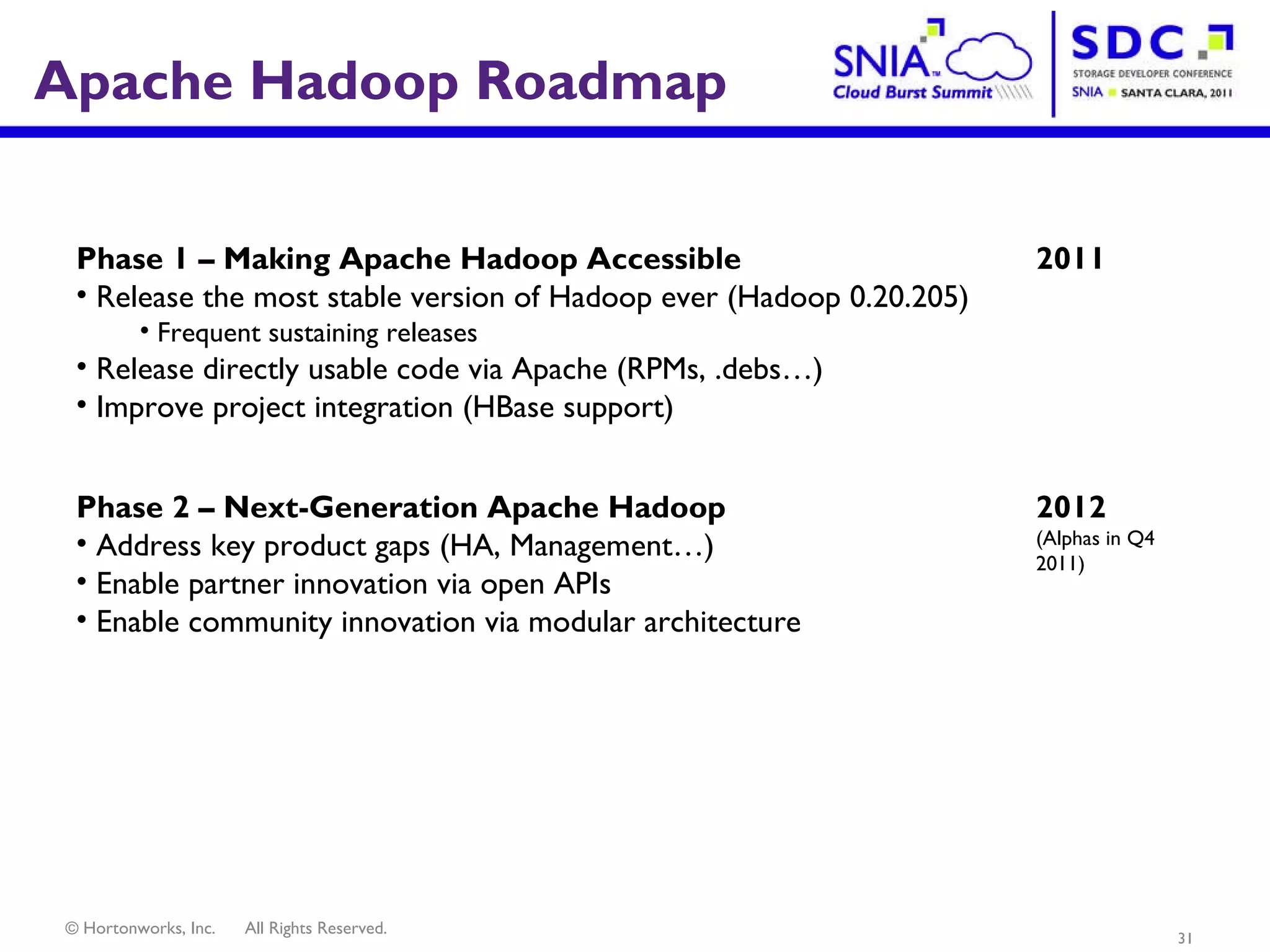

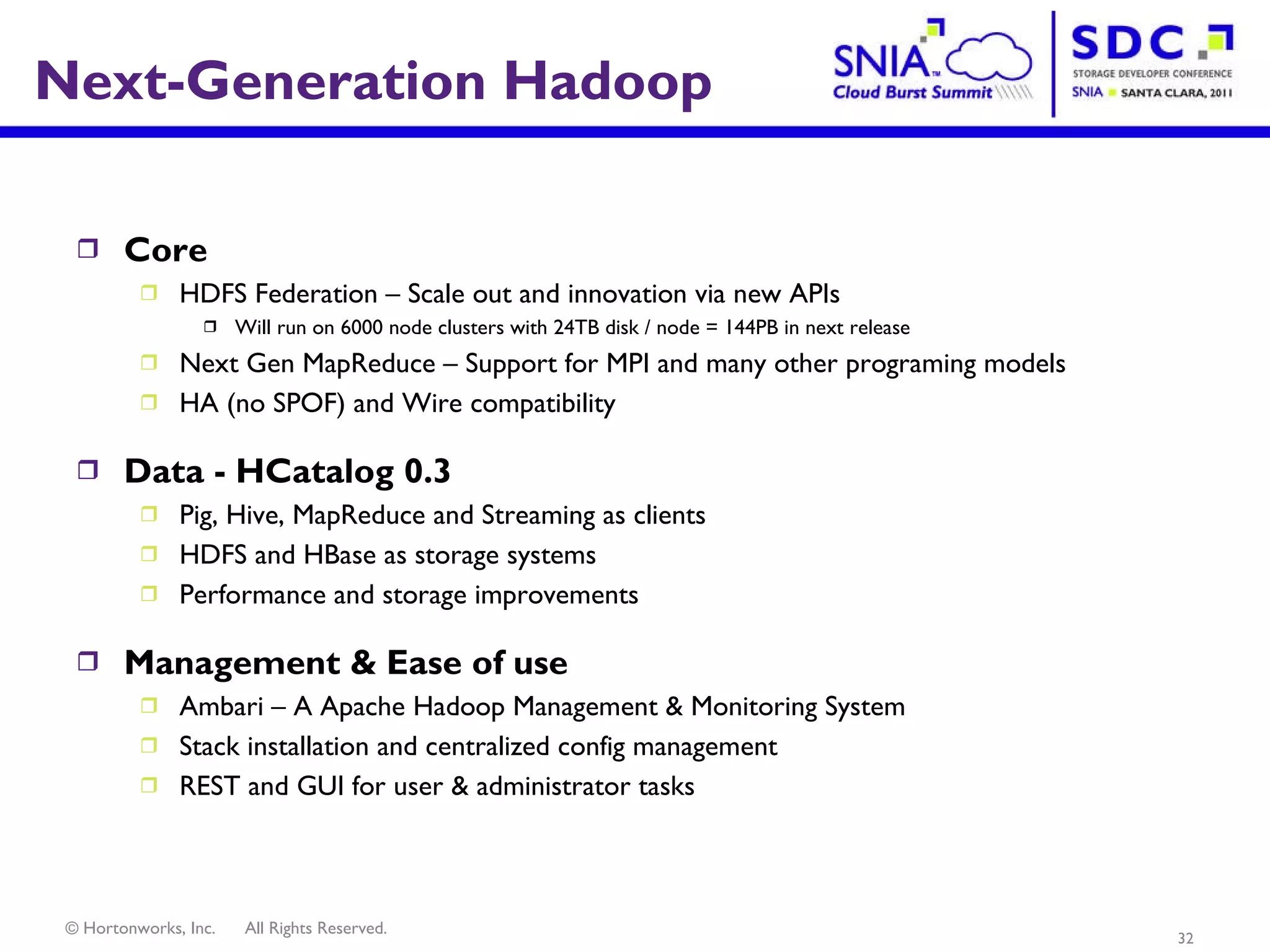

- The future of Apache Hadoop includes making it more accessible and easy to use for enterprises, addressing gaps like high availability and management, and enabling partners and the community to build on it through open APIs and a modular architecture.