Drupal for government

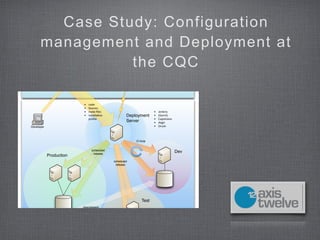

A brief case study by David Stuart of axistwelve.com on configuration management in government at the Care Quality Commission.

Recommended

How to successfully migrate to bazel from maven or gradle

When your code base and dependency graph become big, you should consider moving to Bazel as your build tool. It's both extremely fast and highly accurate. You'll need to think through 5 key points in order to achieve a successful migration.

How to successfully migrate to Bazel from Maven or Gradle - Riga Dev Days

At Wix we decided to switch to the Bazel build tool. The result was a dramatic improvement in performance and accuracy.

As Wix Backend grew exponentially to more than 700 micro-services, it became obvious that our build times on Maven were slowing us down. We decided to switch to the Bazel build tool while harnessing the “remote build execution” feature. The result was a dramatic improvement in the performance and accuracy of builds.

In this talk, I will share with you how to achieve a successful migration to Bazel from Maven or Gradle, focusing on 5 important areas where you have to think about and decide on the right approach for you, ranging from choosing the right build unit granularity to remote caching best practices.

I will also describe and demonstrate some of the available tools in the ecosystem that help with the migration and make everyday work easier.

How to successfully migrate to Bazel from Maven or Gradle - JeeConf

Bazel is both fast and accurate. There are 5 questions you should ask yourself before you start migrating.

Building scala with bazel

The document discusses using Bazel for building Scala projects. It begins with an overview of Bazel and how it uses small targets and rules to build code more incrementally and in parallel compared to tools like Maven and Gradle. It then covers the rules_scala plugin, which provides rules for compiling Scala code into JARs and running tests. Features of rules_scala like dependency management and support for multiple Scala versions are also summarized. Overall the document promotes Bazel and rules_scala as enabling significantly faster builds of large Scala codebases.

Puppet Camp Berlin 2015: Andrea Giardini | Configuration Management @ CERN: G...

In 2011, CERN decided to start using Puppet as its main tool for development, machine configuration and provisioning, as a replacement for Quattor.

Since then the infrastructure has changed a lot: the "Agile Infrastructure" project has evolved into a series of tools and software that currently allow more than 10,000 nodes to be configured and provisioned following custom definitions.

Foreman, Git, OpenStack and our homemade librarian Jens are only a few of the tools that will be described during the talk, which aims to give an overview of the current workflow for the machine lifecycle at CERN.

This talk will cover how Puppet allows us to deal with several hundred installations a day and, at the same time, provide highly customizable machine configurations for service owners.

Modern Infrastructure from Scratch with Puppet

This document provides an overview of how to get started with Puppet to automate infrastructure from scratch. It defines the key components of the infrastructure including virtualization with Vagrant, version control with Git, configuration management with Puppet, test and deployment automation with Jenkins, log aggregation with ELK, and monitoring with Sensu. It describes modeling the infrastructure with roles, profiles, classes and modules in Puppet and using Hiera for data abstraction. It also demonstrates setting up the infrastructure with links to running services.

Getting to push_button_deploys

A rundown of the tools and procedures that I have implemented at Moovweb to increase the velocity of software deployment.

Ruby and Rails Packaging to Production

This document discusses packaging Ruby and Rails applications for production. It covers using system packages versus gems, configuration management tools like Chef and Puppet, creating Debian packages, packaging gems, build servers, pain points like outdated Rubygems packages, and ideas for deeper Bundler integration and packaging gems by default. Overall it presents strategies for deploying Ruby applications as system packages for production servers.

Scaling your jenkins master with docker

This document discusses scaling a Jenkins master using Docker. It describes how the company Wyplay was using a single Jenkins master to manage over 500 jobs, which led to performance and reliability issues. It then explains how Wyplay set up multiple Docker containers running Jenkins masters to address these issues. It provides details on installing and configuring Jenkins in Docker containers, managing plugins, backups, and monitoring across multiple containerized masters. Finally, it discusses future directions like using orchestration tools and programmatically configuring Jenkins systems.

Cloud-Native Sling

Kubernetes is quickly becoming the de facto deployment platform for container runtimes. Sling provides a quick out-of-the-box experience using the Starter jar, but this kind of setup is not always easy to deploy in containers.

In this talk we will present how a Sling application can be deployed on a Kubernetes platform, exploring various patterns such as scaling out, centralised logging and monitoring, content distribution and persistence.

After this talk participants will have a better understanding of how Sling can be molded into a cloud-native application without sacrificing the features that make Sling a strong development platform.

Node.js Build, Deploy and Scale Webinar

Topics covered in this webinar:

Automating builds directly from GitHub

Scaling processes horizontally and vertically

Working with the Nginx load balancer

Managing Node.js processes with Docker containers

Microservices deployment and Docker orchestration

Puppet Camp Paris 2015: Continuous Integration of Puppet Code (Intermediate)

Puppet Camp Paris 2015: Continuous Integration of Puppet Code (Intermediate) - François Gouteroux, D2SI

Continuous Infrastructure: Modern Puppet for the Jenkins Project - PuppetConf...

This document summarizes Tyler Croy's presentation on managing the Jenkins infrastructure using Puppet. It describes how the infrastructure evolved from an unmanaged setup at Sun/Oracle to using masterless Puppet and eventually Puppet Enterprise. Key aspects covered include managing services, hardware, code layout, testing, and deployment process. Special thanks are given to Puppet Labs for their support of the project.

Getting started with puppet and vagrant (1)

The document discusses using Puppet and Vagrant together to create a test environment for infrastructure configuration. Vagrant allows setting up and provisioning virtual machines quickly, while Puppet configures the desired state of systems. The demo project uses Vagrant to launch a CentOS virtual machine and Puppet to configure it based on roles like webserver or database.

Deployment Patterns in the Ruby on Rails World

This document discusses different deployment patterns for Ruby on Rails applications. It covers using Git for version control and continuous integration for running tests on code commits. Different packaging and deployment options are presented like RPMs, Debian packages, RubyGems, and exporting artifacts. Configuration management tools can then be used to deploy packages to servers and configure applications.

Puppet Camp Düsseldorf 2014: Continuously Deliver Your Puppet Code with Jenki...

Continuously Deliver Your Puppet Code with Jenkins, r10k and Git (Intermediate) - Toni Schmidbauer, IT Solutions at Spardat GmbH given at Puppet Camp Düsseldorf 2014

Killer R10K Workflow - PuppetConf 2014

The document describes the evolution of a workflow for managing thousands of Puppet modules using R10K and related tools. It started with all modules and code in a single monolithic repository, which led to long test cycles and all-or-nothing deployments. Introducing R10K and the Puppetfile allowed each module to be in its own repository and specify dependencies, enabling faster targeted testing and deployments. Additional tools like Reaktor were created to automate releases and notifications. The optimized workflow provides dynamic environments, simplified development, and easy production deployments.

Deploying Drupal using Capistrano

This document discusses using Capistrano to deploy Drupal applications. It covers prerequisites, installing Capistrano, configuring deploy stages and servers, and customizing the deploy process. Drupal-specific tasks are added to backup the database, import configurations, clear caches, and set the site offline/online during deployment. The deploy flow is modified to run these tasks before and after Capistrano's default deploy steps. Jenkins can be configured to run the Capistrano deployment scripts for continuous integration.

Docker to the Rescue of an Ops Team

Slides from my DockerCon EU 2017 Talk.

Find the abstract below:

"In this talk, we'll discover how Docker comes to the rescue of the Ops Team, while rebuilding from scratch our monitoring infrastructure. We'll start by quickly describing the challenges, then focus on why and how using Docker saved the project. From fixing dependency and isolation issues and implementing rolling upgrades and hot addition of new features, to building a completely modular, scalable and resilient infrastructure, we'll talk about why CI/CD workflows, Docker tooling and Docker Swarm were the key to success."

JUC 2015 Pipeline Scaling

The document summarizes a presentation given by Damien Coraboeuf at the 2015 Jenkins World Tour in London on scaling Jenkins pipelines. The presentation discussed using the Seed plugin and pipeline libraries to define pipelines as code stored with source branches. This allows for self-service configuration of pipelines, security, simplicity, and extensibility across many similar pipelines in a Jenkins environment.

Capistrano and Jenkins (Hudson) in practice in Java web projects

Capistrano and Jenkins can be used together to automate the build, deployment, and management of Java web applications on clusters of servers. Capistrano allows deploying code to multiple servers and managing services, while Jenkins provides continuous integration by automatically building, testing, and deploying code changes to different environments like development, testing, and production. When a build succeeds in Jenkins, it can trigger Capistrano tasks to deploy the new code to servers and restart services. This achieves automated and versioned software releases across server clusters.

Puppet Camp Atlanta 2014: r10k Puppet Workflow

The document discusses r10k, a Puppet workflow tool that helps manage Puppet code, modules, and data across different environments using Git and the Puppetfile. It recommends configuring r10k to store each environment as a Git branch, with a Puppetfile to define Forge modules and custom code and data in separate directories. r10k can then sync code and deploy changes to environments on the Puppet master automatically based on the Git configuration. This simplifies promotion of code, modules, and data through development, test, and production environments.

NODE NYC

Discover PM2, an open source process manager for NodeJS (https://github.com/Unitech/pm2), and Keymetrics, the monitoring solution.

Docker at Digital Ocean

This document discusses best practices for configuring Docker containers for Ruby on Rails applications hosted on DigitalOcean. It recommends using environment variables, loaded from files by tools like Figaro or Dotenv, for database credentials and other config, avoiding secrets in Dockerfiles, bundling gems before building images, and running the app command in the container.

Live deployment, ci, drupal

1. The document discusses setting up a continuous integration workflow for Drupal projects using tools like Jenkins, Drush, and Vagrant.

2. It identifies problems with current development practices like code being merged without testing and different environments between dev and production.

3. The workflow proposed uses scripts to automate rebuilding development and production environments from source control, running tests, and deploying code.

Advanced Git Techniques: Subtrees, Grafting, and Other Fun Stuff

Your team has adopted Git, and is happily coding along. But is that all? Can you do more with it? You bet! Join the always-animated Nicola Paolucci to learn advanced techniques for grafting multiple repositories, managing project dependencies with git subtree, splitting commits, and finding the best merge strategy for your staging servers. If you've ever wondered how to collate the histories of different projects, or how to split a sub-directory into its own project without destroying its history, this session is for you.

CIbox - OpenSource solution for making your #devops better

This document describes an old and new development workflow for code reviews and continuous integration. The old workflow involved directly committing code to a shared master branch and deploying to a development server, while the new workflow uses feature branches, pull requests, and local virtual environments for development. It also introduces CIBox, an open source project that provides tools and automation to implement the new workflow, including provisioning a CI server and setting up initial project files.

Drupal contrib module maintaining

This document discusses Drupal's project management tools and resources for module maintainers, including automated testing, documentation, issue tracking, and community support. It highlights how some popular modules grew large developer communities that fixed over 90% of critical bugs through these resources. The document encourages contributors to write tests before committing code and review patches through the issue queue. It also lists projects needing maintenance help and provides contact information.

Enterprise search in_drupal_pub

This document provides an introduction to using Apache Solr for enterprise search on a website. It discusses the configuration options for Solr, including solrconfig.xml and schema.xml. It also summarizes the Apache Solr module that comes with Drupal, which provides search indexing, filters, and hooks out of the box. Additional Solr modules are mentioned, including Views and Attachments integrations, as well as a multiserver module. The document aims to give an overview of Solr and how it can be implemented with Drupal.

Viewers also liked

Netpay Presentation

NetPay offers various online payment solutions including credit card processing, ACH, direct debit, cash payments, and mobile payments. It also facilitates payouts to affiliates, partners, and suppliers through debit cards, wire transfers, and check clearing. NetPay authenticates each transaction through its proprietary risk assessment platform to identify fraudulent transactions and reduce chargebacks.

Fitness trends in the American market

The document summarizes the main characteristics of the gym market in the United States. In 2010 there were around 30,000 gyms with some 45 million members. The most popular activities are the use of resistance machines, treadmills and free weights. Most gyms offer personal training, exercise classes and weight training. Gym customers tend to be people with high incomes and a university education. The market has grown in terms…

Similar to Drupal for government

Continuous Integration/Deployment with Docker and Jenkins

“Continuous Integration doesn’t get rid of bugs, but it does make them dramatically easier to find and remove” M. Fowler

Jenkins and Docker are cool technologies. Here's how they serve in a continuous-integration-based process and how they could be used to deliver new versions of the same software.

The slides present the whole process along with real code snippets.

Webinar: Creating an Effective Docker Build Pipeline for Java Apps

It's easy to make mistakes when Dockerizing your Java applications. In this webinar, Alexei Ledenev (Chief Researcher at Codefresh) shared his experience on how to craft the perfect Java-Docker build flow. He explained best practices and common pitfalls, then demonstrated how to create a build pipeline that consistently produces small, efficient, and secure Docker images. View the webinar recording and summary here: https://codefresh.io/blog/webinar-creating-efficient-docker-build-pipeline-java-apps/

Leveraging Docker for Hadoop build automation and Big Data stack provisioning

Apache Bigtop as an open source Hadoop distribution, focuses on developing packaging, testing and deployment solutions that help infrastructure engineers to build up their own customized big data platform as easy as possible. However, packages deployed in production require a solid CI testing framework to ensure its quality. Numbers of Hadoop component must be ensured to work perfectly together as well. In this presentation, we'll talk about how Bigtop deliver its containerized CI framework which can be directly replicated by Bigtop users. The core revolution here are the newly developed Docker Provisioner that leveraged Docker for Hadoop deployment and Docker Sandbox for developer to quickly start a big data stack. The content of this talk includes the containerized CI framework, technical detail of Docker Provisioner and Docker Sandbox, a hierarchy of docker images we designed, and several components we developed such as Bigtop Toolchain to achieve build automation.

Leveraging docker for hadoop build automation and big data stack provisioning

https://dataworkssummit.com/san-jose-2017/sessions/leveraging-docker-for-hadoop-build-automation-and-big-data-stack-provisioning/

Continuous Integration Testing in Django

Continuous Integration is like having a robot that cleans up after you: it installs your dependencies, builds your project, run your tests, and reports back to you. This presentation outlines two methods for CI: Travis and Jenkins.

Docker to the Rescue of an Ops Team

In this talk, we'll discover how Docker comes to the rescue of the Ops Team, while rebuilding from scratch our monitoring infrastructure. We'll start by quickly describing the challenges, to focus on why and how using docker saved the project. From fixing dependencies and isolation issues, implementing rolling upgrades and new features hot addition, to building a completely modular, scalable and resilient infrastructure, we'll talk about why CI/CD workflows, docker tooling and Docker Swarm were the key to success.

Drools, jBPM OptaPlanner presentation

The document outlines Mark Proctor's journey working with Drools, jBPM and OptaPlanner over 20 years, including the evolution of the products from early Drools in 2000 to the current focus on cloud-native applications using Quarkus, GraalVM and Kubernetes. It also discusses recent efforts under the Submarine project to develop domain-specific services for decisions, processes, events and optimizations using a canonical executable model and polyglot capabilities. The engineering team for Drools and jBPM has quadrupled in size since 2011 to support these initiatives.

Drupal 8 DevOps . Profile and SQL flows.

How to automate your Drupal 8 team

What types of development flow should you select for best results

DCSF 19 Building Your Development Pipeline

Oliver Pomeroy, Docker & Laura Tacho, Cloudbees

Enterprises often want to provide automation and standardisation on top of their container platform, using a pipeline to build and deploy their containerized applications. However this opens up new challenges; Do I have to build a new CI/CD Stack? Can I build my CI/CD pipeline with Kubernetes orchestration? What should my build agents look like? How do I integrate my pipeline into my enterprise container registry? In this session full of examples and how-to's, Olly and Laura will guide you through common situations and decisions related to your pipelines. We'll cover building minimal images, scanning and signing images, and give examples on how to enforce compliance standards and best practices across your teams.

Deploying software at Scale

This document discusses deploying software at scale through automation. It advocates treating infrastructure as code and using version control, continuous integration, and packaging tools. The key steps are to automate deployments, make them reproducible, and deploy changes frequently and consistently through a pipeline that checks code, runs tests, builds packages, and deploys to testing and production environments. This allows deploying changes safely and quickly while improving collaboration between developers and operations teams.

The Docker "Gauntlet" - Introduction, Ecosystem, Deployment, Orchestration

This document summarizes Docker's growth over 15 months, including its community size, downloads, projects on GitHub, enterprise support offerings, and the Docker platform which includes the Docker Engine, Docker Hub, and partnerships. It also provides overviews of key Docker technologies like libcontainer, libchan, libswarm, and how images work in Docker.

What's new in Docker - InfraKit - Docker Meetup Berlin 2016

This document provides an overview of Docker and its products and initiatives:

1. Docker provides tools for container isolation using Linux kernel features like namespaces and cgroups. It also utilizes image layers for packaging applications.

2. Docker's products focus on the developer experience through tools like Docker for Mac/Windows, as well as orchestration with Swarm mode and services in Docker 1.12.

3. For operations, Docker provides tools to integrate with load balancers, templates, and other infrastructure through products like Docker Universal Control Plane and Docker Cloud. Docker is building tools to program infrastructure as code.

PaaSTA: Running applications at Yelp

PaaSTA, Yelp's platform as a service (PaaS) built on top of open source tools, provides tooling for developers to quickly turn their microservice into a monitored, highly available application spanning multiple data centers and cloud regions. Nathan Handler outlines the technologies that power PaaSTA and discusses how Yelp uses PaaSTA to empower developers and solve key problems.

Video: https://youtu.be/vISUXKeoqXM

Using Docker EE to Scale Operational Intelligence at Splunk

With more than 14,000 customers in 110+ countries, Splunk is the market leader in analyzing machine data to deliver operational intelligence for security, IT and the business. Our rapid growth as a company meant that our Infrastructure Engineering Team, responsible for all the common tooling, build and test systems and frameworks utilized by the Splunk engineers, was bogged down with a sprawl of virtual machines and physical servers that were becoming incredibly difficult to manage. And as our customer’s demand for data has grown, testing at the scale of petabytes/day has become our new normal. We needed a reliable and scalable “Test Lab” for functional and performance testing.

With Docker Enterprise Edition, our engineers are able to create small test stacks on their laptop just as easily as creating multi-petabyte stacks in our Test Lab. Support for Windows, Role Based Access Control and having support for both the orchestration platform and the container engine were key in deciding to go with Docker over other solutions.

In this talk, we will cover the architecture, tooling, and frameworks we built to manage our workloads, which have grown to run on over 600 bare-metal servers, with tens of thousands of containers being created every day. We will share the lessons learned from running at scale. Lastly, we will demonstrate how we use Splunk to monitor and manage Docker Enterprise Edition.

Jazoon12 355 aleksandra_gavrilovska-1

This document discusses continuous integration for iOS projects. It describes the iOS app distribution process and practices of continuous integration like automating builds and testing. It provides an overview of using Jenkins to configure Mac slaves and jobs for iOS builds. It also describes Netcetera's custom build system using Rake tasks to build, test, package and deploy iOS apps. Benefits include control over the build process and project state while improvements could include automating certificate installation and code quality checks.

Scala, docker and testing, oh my! mario camou

Testing is important for any system you write and at eBay it is no different. We have a number of complex Scala and Akka based applications with a large number of external dependencies. One of the challenges of testing this kind of application is replicating the complete system across all your environments: development, different flavors of testing (unit, functional, integration, capacity and acceptance) and production. This is especially true in the case of integration and capacity testing where there are a multitude of ways to manage system complexity. Wouldn’t it be nice to define the testing system architecture in one place that we can reuse in all our tests? It turns out we can do exactly that using Docker. In this talk, we will first look at how to take advantage of Docker for integration testing your Scala application. After that we will explore how this has helped us reduce the duration and complexity of our tests.

DevOps Workflow: A Tutorial on Linux Containers

In this deck from the Stanford HPC Conference, Christian Kniep from Docker, Inc. gives a tutorial on linux containers.

"This tutorial provides a detailed overview of the components needed to run containerized applications and explores how distributed HPC applications can be tackled. We’ll explain the concept of Linux Containers and describe the bits and pieces participants will explore following step-by-step examples.

The workshop will introduce the predominant forms of orchestration in the industry; what problems they solve and how to approach the problem.

Attendees will explore the benefits and drawbacks of orchestrators first hand with their own small exemplary stack deployments.

Finally the workshop will introduce how HPC and Big Data workloads can be tackled on-top of these service-oriented clusters."

Watch the video: https://youtu.be/LJinZpCTyk0

Learn more: http://www.docker.com/

and

http://hpcadvisorycouncil.com

Sign up for our insideHPC Newsletter: http://insidehpc.com/newsletter

[Devopsdays2021] Roll Your Product with Kaizen Culture![[Devopsdays2021] Roll Your Product with Kaizen Culture](data:image/gif;base64,R0lGODlhAQABAIAAAAAAAP///yH5BAEAAAAALAAAAAABAAEAAAIBRAA7)

![[Devopsdays2021] Roll Your Product with Kaizen Culture](data:image/gif;base64,R0lGODlhAQABAIAAAAAAAP///yH5BAEAAAAALAAAAAABAAEAAAIBRAA7)

This document discusses how the author's team at Rakuten implemented DevOps practices including containerization and test automation to improve their development and deployment processes. Some key points:

1) Previously, testing and deploying took a long time which increased lead times.

2) They decided to focus first on containerizing their web applications using Kubernetes and automating UI tests.

3) These changes helped reduce testing time from 20 hours to 5 minutes and sped up deployments. It also improved productivity by allowing more bottom-up projects and deeper understanding of their product.

4) The synergy between containerization and test automation helped optimize their development workflow and significantly cut down on lead times.

The Future of Security and Productivity in Our Newly Remote World

Andy has made mistakes. He's seen even more. And in this talk he details the best and the worst of the container and Kubernetes security problems he's experienced, exploited, and remediated.

This talk details low level exploitable issues with container and Kubernetes deployments. We focus on lessons learned, and show attendees how to ensure that they do not fall victim to avoidable attacks.

See how to bypass security controls and exploit insecure defaults in this technical appraisal of the container and cluster security landscape.

Using Rancher and Docker with RightScale at Industrie IT

Many early Docker users are also now looking at clustering solutions such as Rancher. Industrie IT is using Docker, Rancher, and RightScale to help clients build digital applications using continuous integration (CI) and continuous delivery (CD) practices.

Drupal for government

- 1. Case Study: Configuration management and Deployment at the CQC. [Diagram: code repository, Internet, deployment server, developer, CI loop; scheduled releases to Dev, Test and Production; downstream dump of DB (post release).]

- 2. Agenda
  - Introduction
  - Configuration management with Puppet
  - Deployment

- 3. Introduction
  - The Care Quality Commission regulates, inspects and reviews all adult social care services in England
  - Drupal 6 build with Apache Solr geolocal search
  - The public can look up local services (GP, hospital, etc.)
  - Agile development methodology
  - Approx. 15 million hits per month and growing
  - Tasked with registering all GPs this year
  - Deployment and configuration management were a manual process

- 4. Introduction (cont.)
  - CQC engaged Axis12 to automate its configuration management and deployment process
  - Technologies used:
    - Red Hat ESX virtuals
    - Git
    - Puppet
    - Capistrano
    - Gerrit
    - Jenkins

- 5. Configuration Management with Puppet

      class drupal::drush {
        # Download the drush tarball once; "creates" skips the exec if the file exists.
        exec { "download-drush":
          cwd     => "/root",
          command => "/usr/bin/wget http://ftp.drupal.org/files/projects/drush-All-Versions-2.1.tar.gz",
          creates => "/root/drush-All-Versions-2.1.tar.gz",
        }

        # Unpack drush into the Drupal modules directory after the download.
        exec { "install-drush":
          cwd     => "/var/www/drupal/sites/all/modules",
          command => "/bin/tar xvzf /root/drush-All-Versions-2.1.tar.gz",
          creates => "/var/www/drupal/sites/all/modules/drush",
          require => [ Exec["download-drush"], File["/var/www/drupal/sites/all/modules"] ],
        }

        # A path given to "ensure" makes /usr/local/bin/drush a symlink to the drush script.
        file { "/usr/local/bin/drush":
          ensure => "/var/www/drupal/sites/all/modules/drush/drush",
        }
      }

- 6. Deployment

- 7. Questions?
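
The Puppet class on slide 5 requires `File["/var/www/drupal/sites/all/modules"]`, a resource the slide itself does not declare, so it has to exist somewhere else in the catalog. The following is only a sketch of how that might look. The class name `drupal::modules_dir` and the node name `web01.example.org` are hypothetical and do not come from the deck.

```puppet
# Hypothetical companion class (not from the slides): declares the directory
# that drupal::drush refers to in its "require" list.
class drupal::modules_dir {
  # Puppet does not create parent directories, so /var/www/drupal/sites/all
  # is assumed to exist already (for example, from the Drupal code checkout).
  file { "/var/www/drupal/sites/all/modules":
    ensure => directory,
  }
}

# Hypothetical node definition: applies both classes to one web server.
node "web01.example.org" {
  include drupal::modules_dir
  include drupal::drush
}
```

Because the `install-drush` exec lists the directory in `require`, Puppet manages the directory before unpacking the tarball, regardless of the order of the `include` lines.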
