Machine learning concepts and techniques are summarized in three paragraphs. Key points include:

Learning allows a system to perform tasks more efficiently over time by modifying its representations based on experience. The major learning paradigms are supervised learning from labeled examples, unsupervised learning without labels (for example, clustering), and reinforcement learning from feedback in the form of rewards.

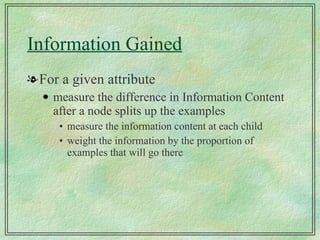

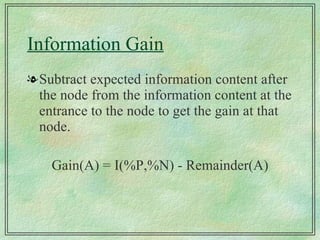

Decision trees are a common inductive learning approach: they extrapolate patterns from training examples in order to classify new examples. A tree is built top-down by selecting, at each node, the attribute that best splits the examples into homogeneous groups, which is the attribute with the highest information gain.
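As a rough illustration of the attribute-selection step, the sketch below computes information gain as the entropy of the class labels minus the weighted entropy of the groups produced by a split. The function names (`entropy`, `information_gain`, `best_attribute`) and the toy weather-style data are hypothetical and chosen only for this example; the source does not specify an implementation.

```python
import math
from collections import Counter


def entropy(labels):
    """Shannon entropy, in bits, of a list of class labels."""
    total = len(labels)
    return -sum((n / total) * math.log2(n / total)
                for n in Counter(labels).values())


def information_gain(examples, attribute, target):
    """Entropy of the target minus the weighted entropy after splitting."""
    base = entropy([ex[target] for ex in examples])
    # Group the target labels by the value each example has for `attribute`.
    groups = {}
    for ex in examples:
        groups.setdefault(ex[attribute], []).append(ex[target])
    remainder = sum(len(g) / len(examples) * entropy(g)
                    for g in groups.values())
    return base - remainder


def best_attribute(examples, attributes, target):
    """Pick the attribute with the highest information gain."""
    return max(attributes,
               key=lambda a: information_gain(examples, a, target))


# Hypothetical toy data: "outlook" separates the classes perfectly,
# while "windy" carries no information about "play".
examples = [
    {"outlook": "sunny", "windy": "no", "play": "no"},
    {"outlook": "sunny", "windy": "yes", "play": "no"},
    {"outlook": "rainy", "windy": "no", "play": "yes"},
    {"outlook": "rainy", "windy": "yes", "play": "yes"},
]

chosen = best_attribute(examples, ["outlook", "windy"], "play")
```

A full tree builder would apply `best_attribute` recursively to each group until the groups are homogeneous or no attributes remain. On this toy data the split on `outlook` yields pure groups, so it is the attribute selected at the root.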

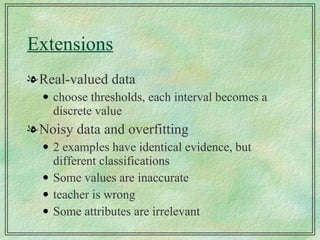

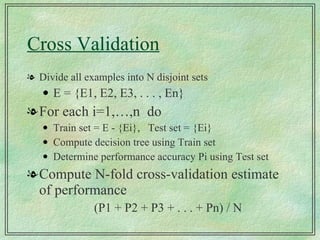

Decision trees can be evaluated on their predictive accuracy and pruned to avoid overfitting. Rules can be extracted from the paths of a tree. Parameters are set using