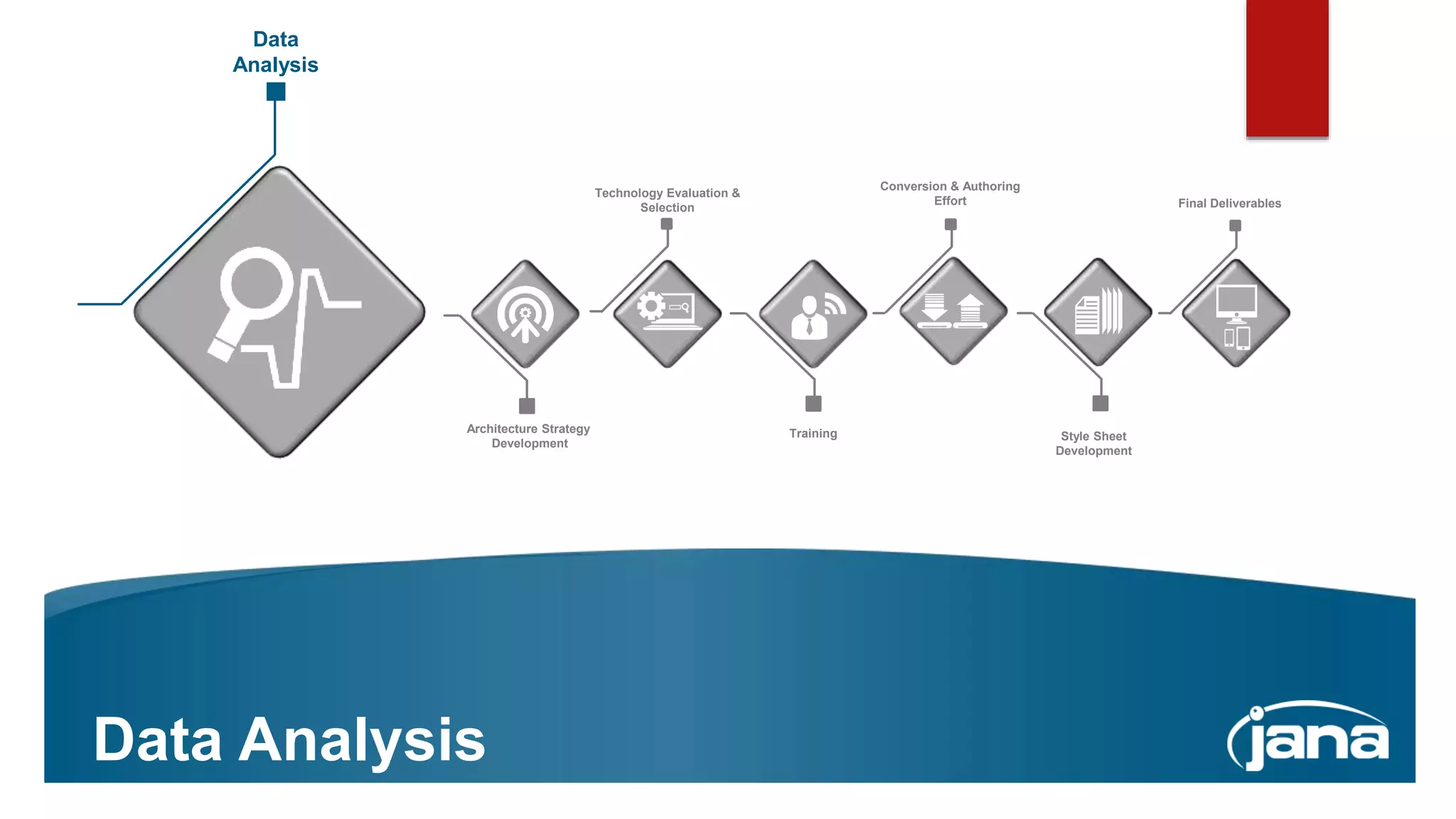

The document outlines a company's expertise in XML-based authoring and conversion, focusing on DITA implementation and structured data methodologies. It covers team roles, workflows, project management, and key considerations for successful DITA integration. It also provides contact details for experts in the field and highlights their extensive industry experience.