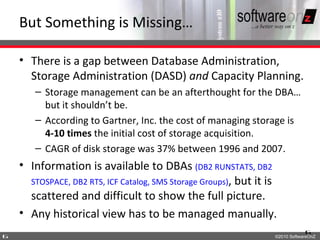

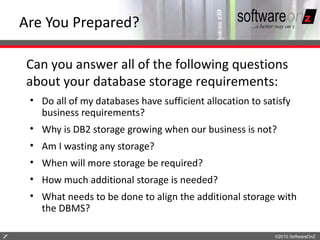

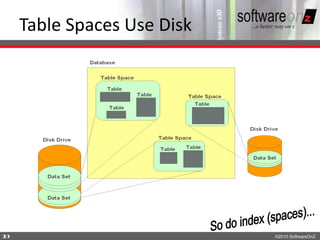

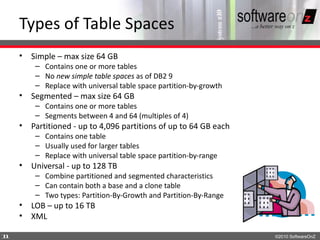

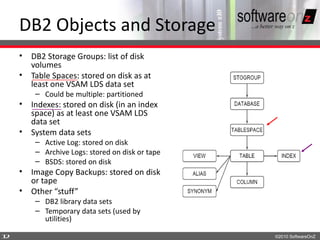

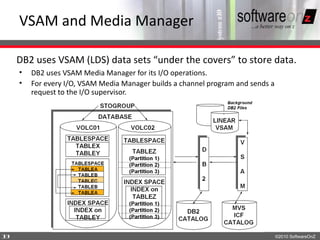

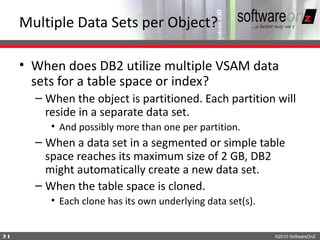

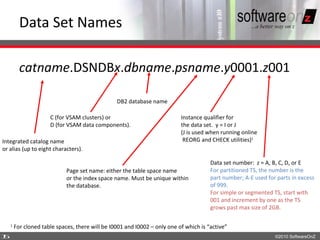

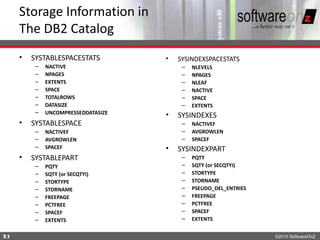

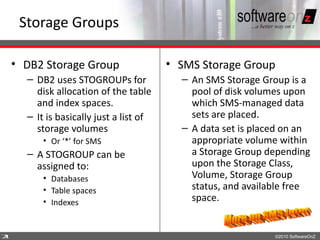

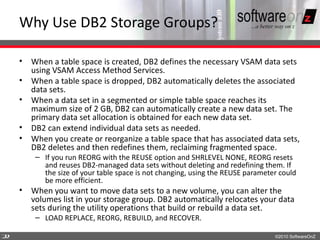

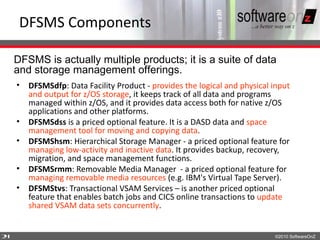

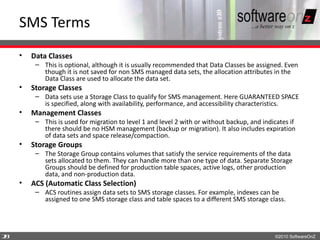

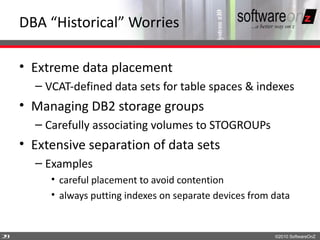

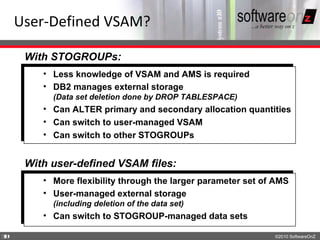

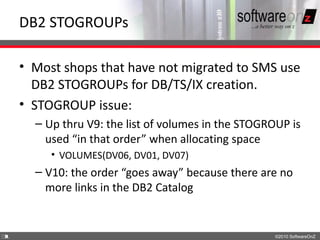

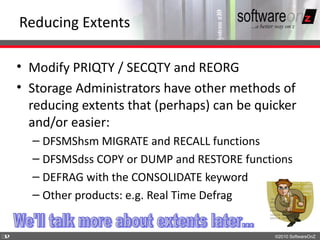

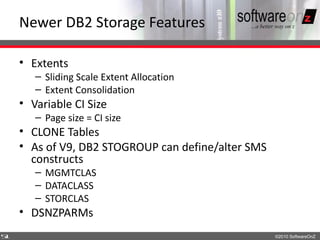

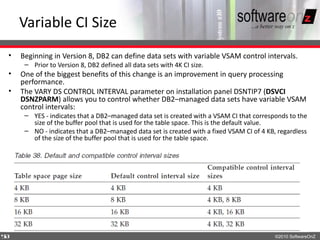

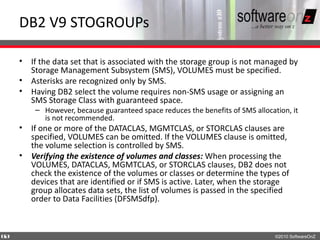

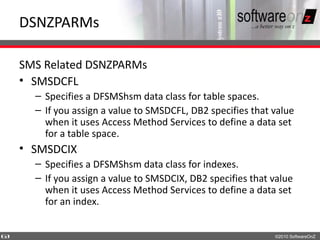

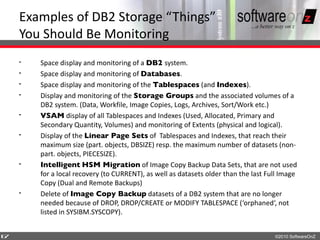

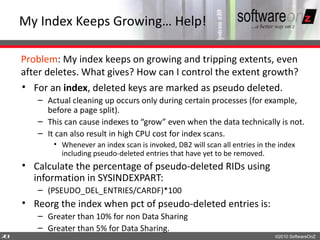

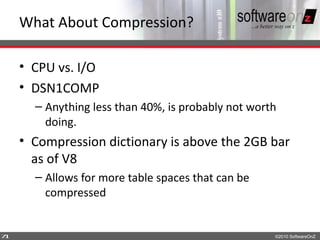

This document discusses the relationship between DB2 and storage management. It describes how DB2 stores table spaces, indexes, and other objects on disk as VSAM data sets, how DB2 interacts with DFSMS to manage those data sets, and how DB2 storage groups and SMS can simplify storage administration for DB2 objects. Although DB2 provides its own storage management features, a gap remains between database administration and storage administration, which tools can help address.