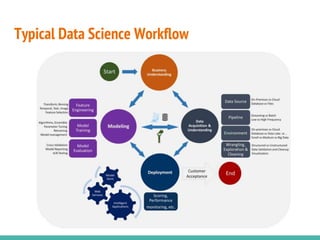

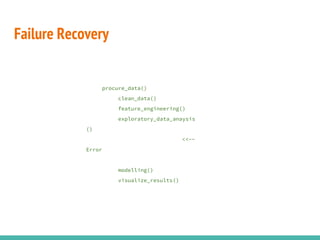

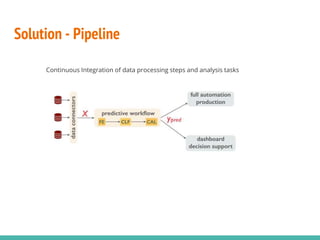

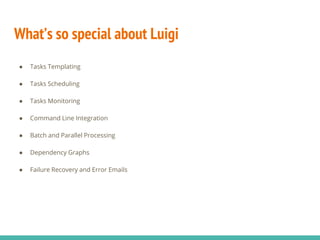

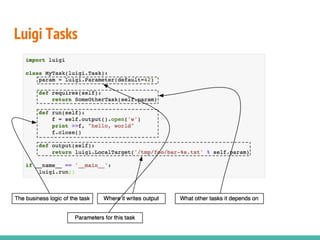

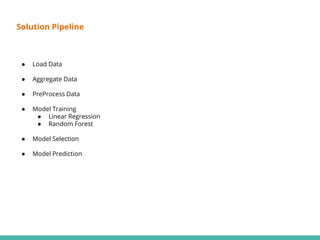

This document describes how to build data science pipelines in Python with Luigi. It covers the typical data science workflow, the challenges of that workflow, and how Luigi pipelines help address them. It presents the Luigi features most useful for data science pipelines: task templating, scheduling, monitoring, failure recovery, and support for batch and parallel processing. It concludes with a demonstration Luigi pipeline that predicts the performance score of mobile game users.