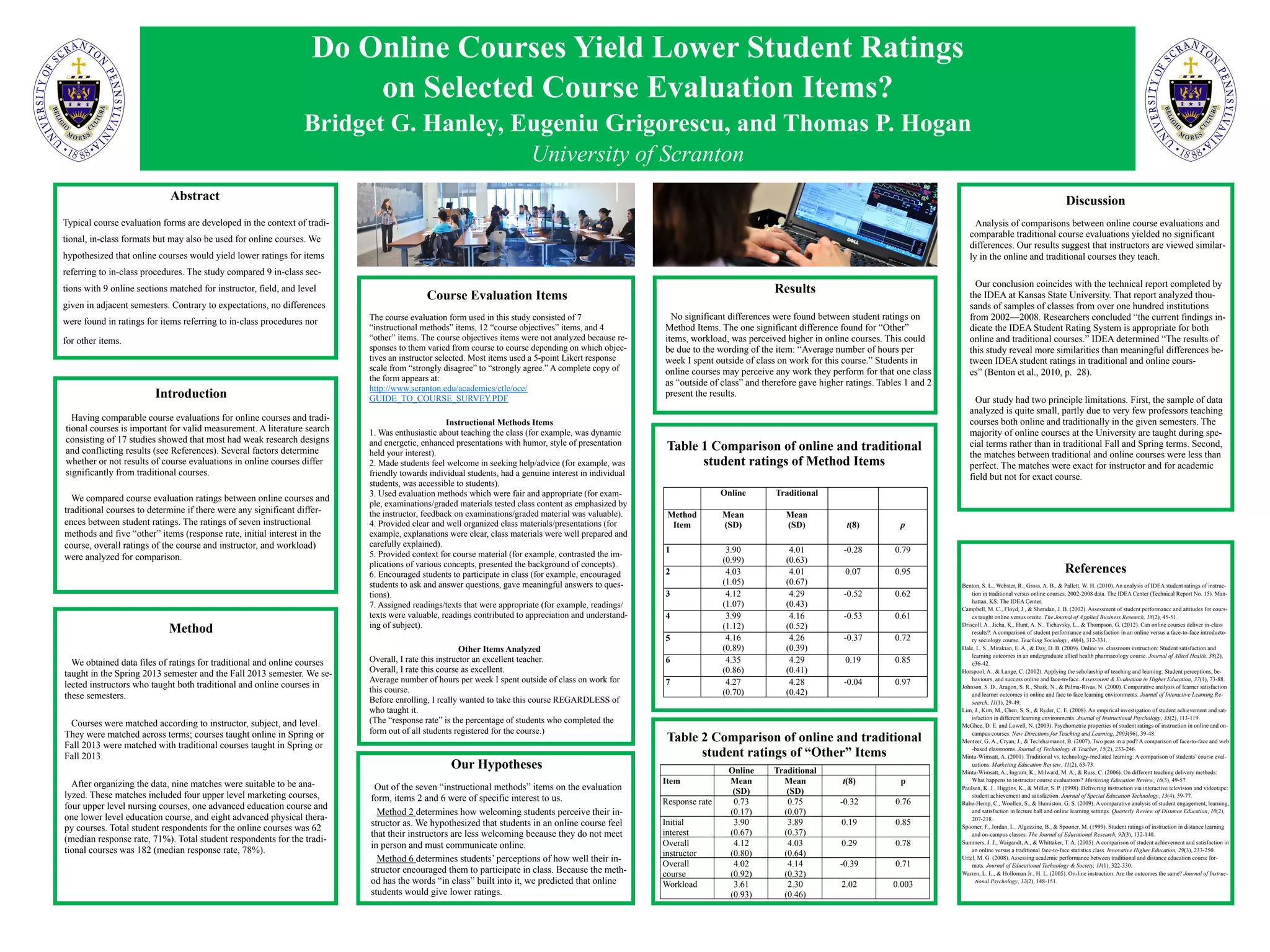

The document compares course evaluation ratings between online and traditional courses. Contrary to expectations, the study found no significant differences in ratings for most items, including those that refer to in-class procedures. The only significant difference was that students in online courses perceived a higher workload than students in traditional courses, possibly because online students consider any work for the class to be "outside of class." Overall, the results suggest that students view instructors similarly in online and traditional courses.