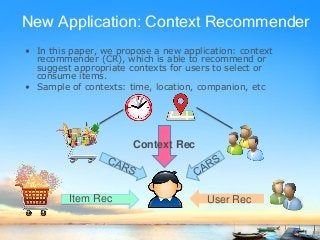

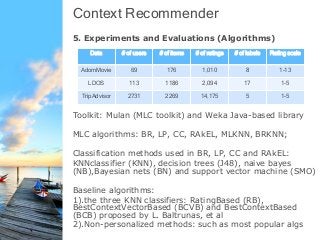

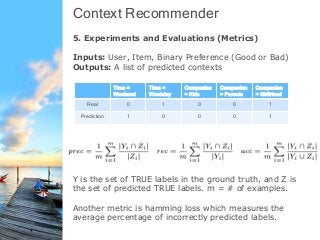

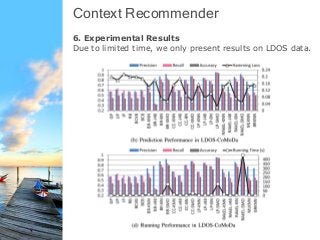

This document proposes a new type of recommender system, the context recommender, which recommends appropriate contexts (e.g., time, location, companion) in which users can consume items. It discusses how context recommenders differ from traditional and context-aware recommenders, and presents a framework for context recommenders, including algorithms that use multi-label classification to predict contexts directly. Experiments comparing these algorithms on several datasets find that personalized algorithms outperform non-personalized ones, and that certain multi-label classification algorithms, such as label powerset with support vector machines, achieve the best performance.
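To make the label powerset idea concrete, here is a minimal sketch in plain Python. It is not the authors' implementation. Label powerset treats each distinct set of context labels as a single class, so any multi-class classifier can then predict a whole context set at once. The summary above reports support vector machines as the base classifier; this sketch swaps in a simple 1-nearest-neighbour rule so it runs without external libraries. The feature vectors, context labels, and function names are all hypothetical.

```python
def to_powerset_classes(label_sets):
    """Label powerset transform: map each distinct label set to one class id."""
    class_of = {}
    y = []
    for labels in label_sets:
        key = frozenset(labels)
        if key not in class_of:
            class_of[key] = len(class_of)  # assign the next unused class id
        y.append(class_of[key])
    labels_of = {cid: key for key, cid in class_of.items()}
    return y, labels_of


def predict_1nn(train_X, train_y, x):
    """Stand-in multi-class classifier (assumption): 1-nearest neighbour."""
    def sq_dist(a, b):
        return sum((ai - bi) ** 2 for ai, bi in zip(a, b))
    nearest = min(range(len(train_X)), key=lambda i: sq_dist(train_X[i], x))
    return train_y[nearest]


def recommend_contexts(train_X, label_sets, x):
    """Predict a full set of contexts for the feature vector x."""
    y, labels_of = to_powerset_classes(label_sets)
    return labels_of[predict_1nn(train_X, y, x)]


# Hypothetical toy data: each row is a (user, item) feature vector,
# and each label set holds the contexts in which the item was consumed.
X = [(1.0, 0.0), (0.9, 0.1), (0.0, 1.0), (0.1, 0.9)]
contexts = [
    {"weekend", "cinema"},
    {"weekend", "cinema"},
    {"weekday", "home"},
    {"weekday", "home", "family"},
]

y, labels_of = to_powerset_classes(contexts)
predicted = recommend_contexts(X, contexts, (0.2, 0.8))
print(sorted(predicted))  # ['family', 'home', 'weekday']
```

Because every predicted class maps back to a label set seen in training, label powerset can only recommend context combinations that already occur in the data. That keeps the recommended combinations consistent with observed usage, but it cannot produce new ones.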

![Intro – Type of Recommendations

• [Item Recommendations]

• [User Recommendations]](https://image.slidesharecdn.com/slidewic2014context-140805210546-phpapp01/85/WI-2014-Context-Recommendation-Using-Multi-label-Classification-3-320.jpg)

![References

• Context Recommendations

[1] Baltrunas, Linas, Marius Kaminskas, Francesco Ricci, Lior Rokach, Bracha Shapira, and Karl-Heinz Luke. "Best usage context prediction for music tracks." In Proceedings of the 2nd Workshop on Context Aware Recommender Systems. 2010.

[2] Zheng, Yong, Bamshad Mobasher, and Robin Burke. "Context Recommendation Using Multi-label Classification." In Proceedings of the 13th IEEE/WIC/ACM International Conference on Web Intelligence. 2014.

• Multi-label Classifications

[1] Tsoumakas, Grigorios, and Ioannis Katakis. "Multi-label classification: An overview." International Journal of Data Warehousing and Mining (IJDWM) 3, no. 3 (2007): 1-13.

[2] Tsoumakas, Grigorios, Ioannis Katakis, and Ioannis Vlahavas. "Mining multi-label data." In Data mining and knowledge discovery handbook, pp. 667-685. Springer US, 2010.](https://image.slidesharecdn.com/slidewic2014context-140805210546-phpapp01/85/WI-2014-Context-Recommendation-Using-Multi-label-Classification-21-320.jpg)

![[WI 2014]Context Recommendation Using Multi-label Classification](https://image.slidesharecdn.com/slidewic2014context-140805210546-phpapp01/85/WI-2014-Context-Recommendation-Using-Multi-label-Classification-23-320.jpg)