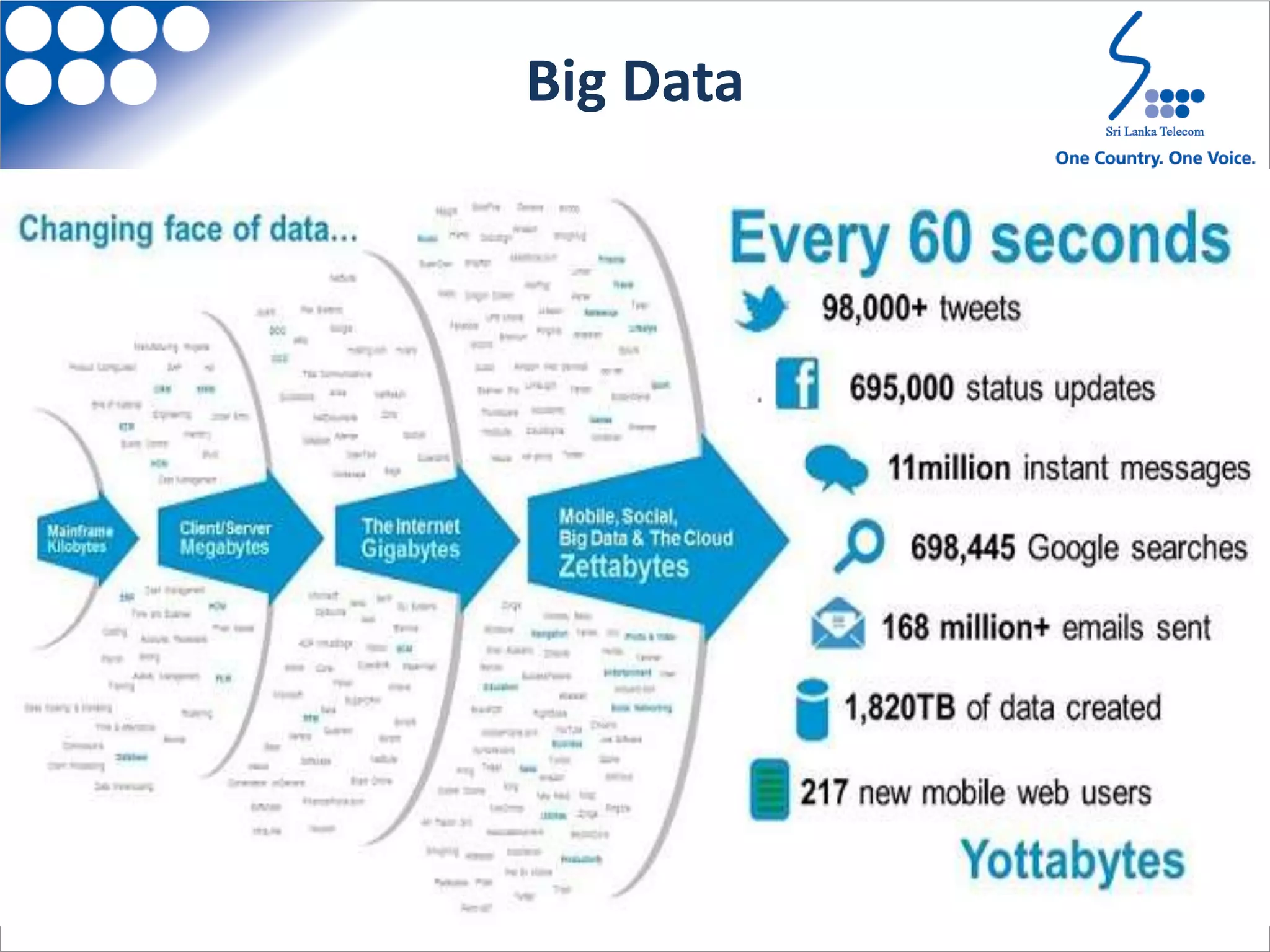

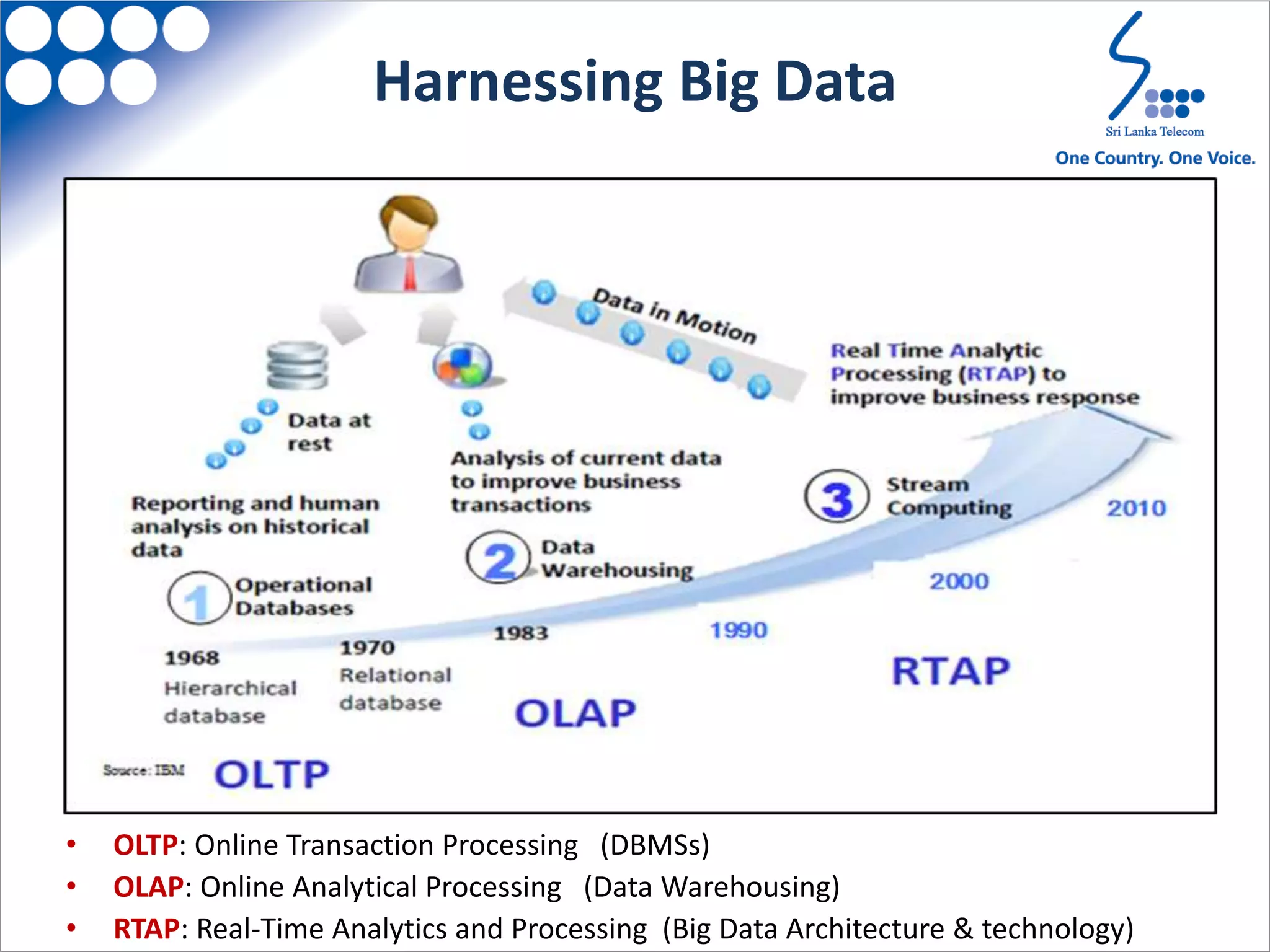

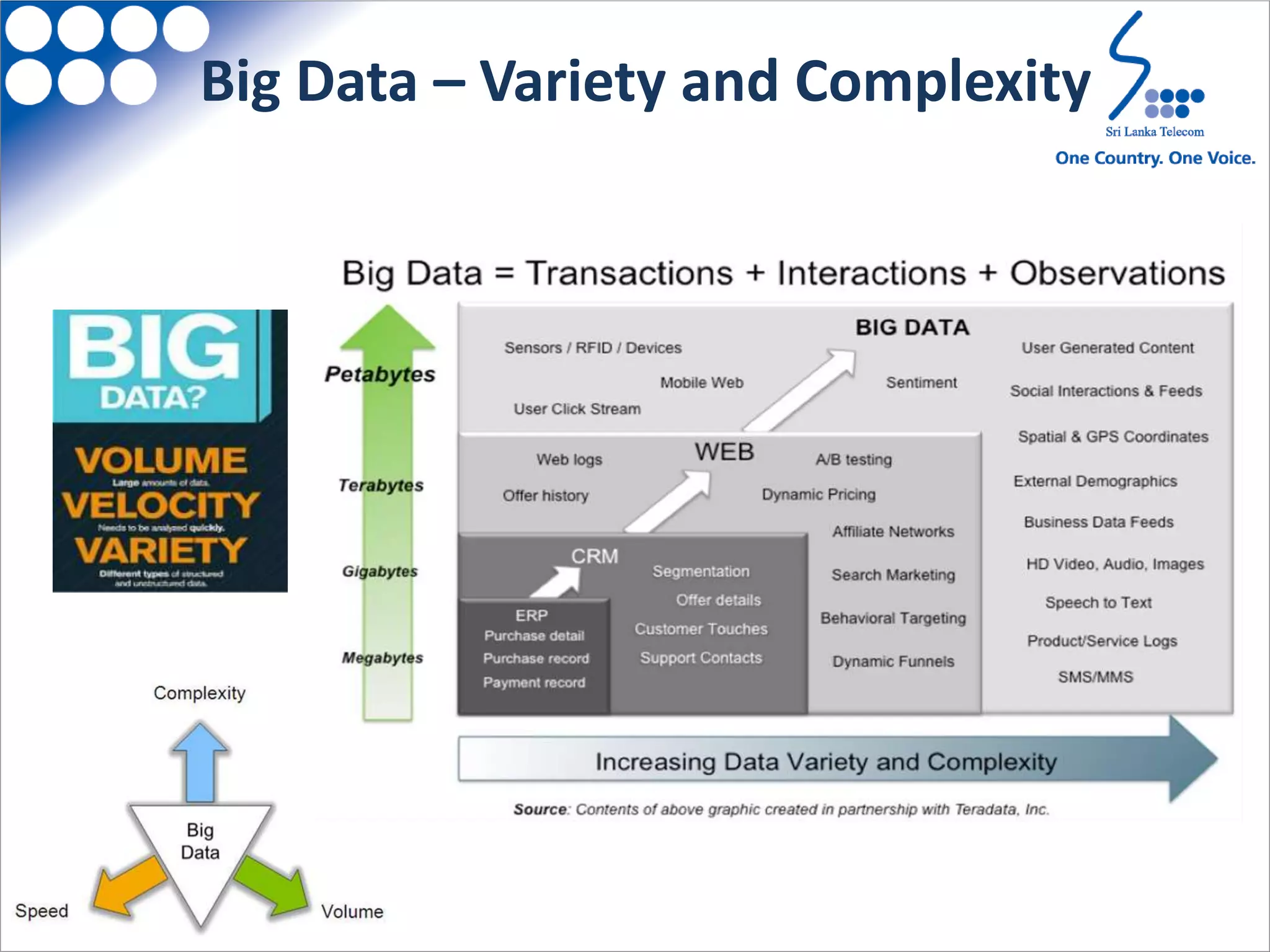

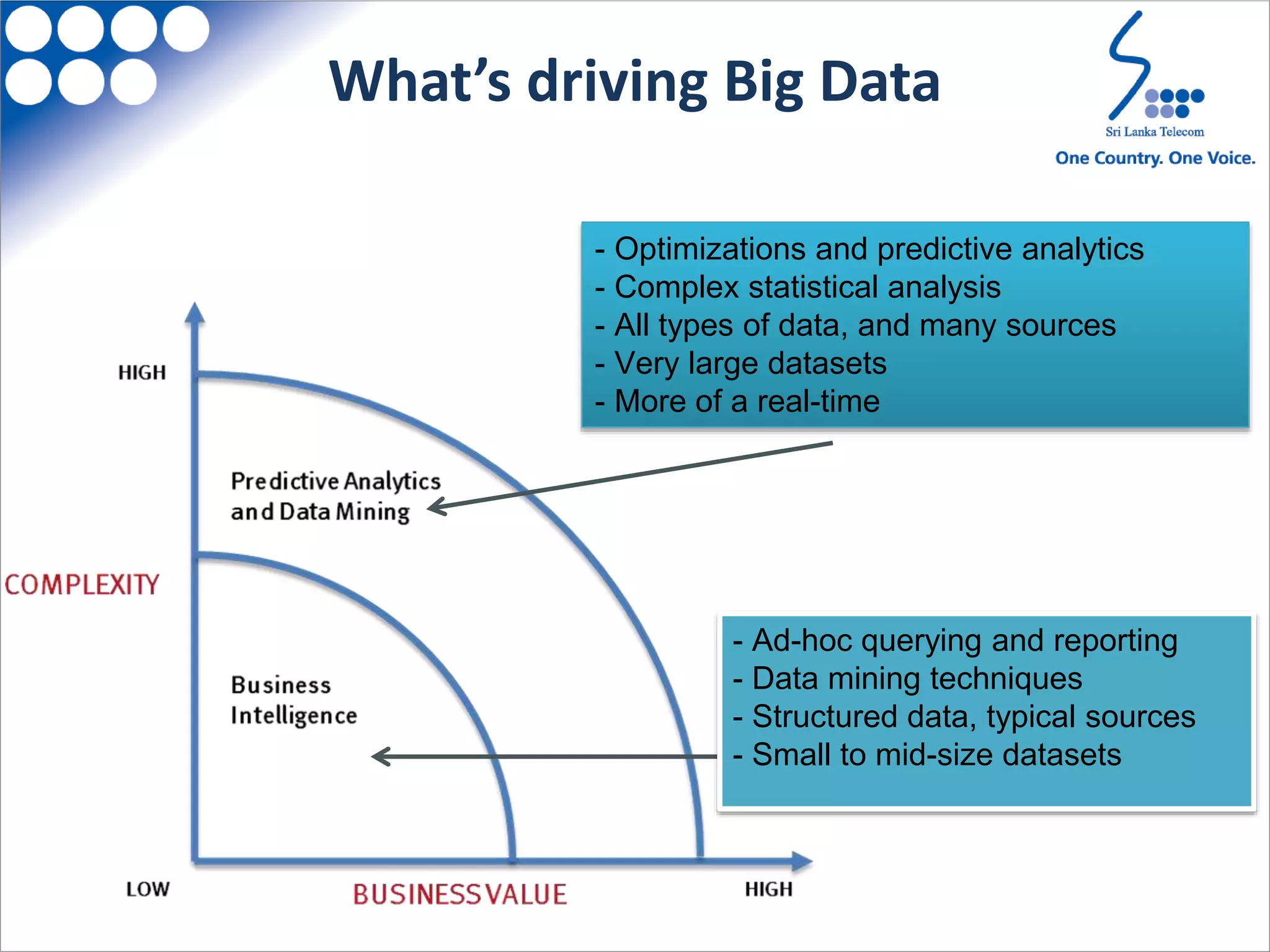

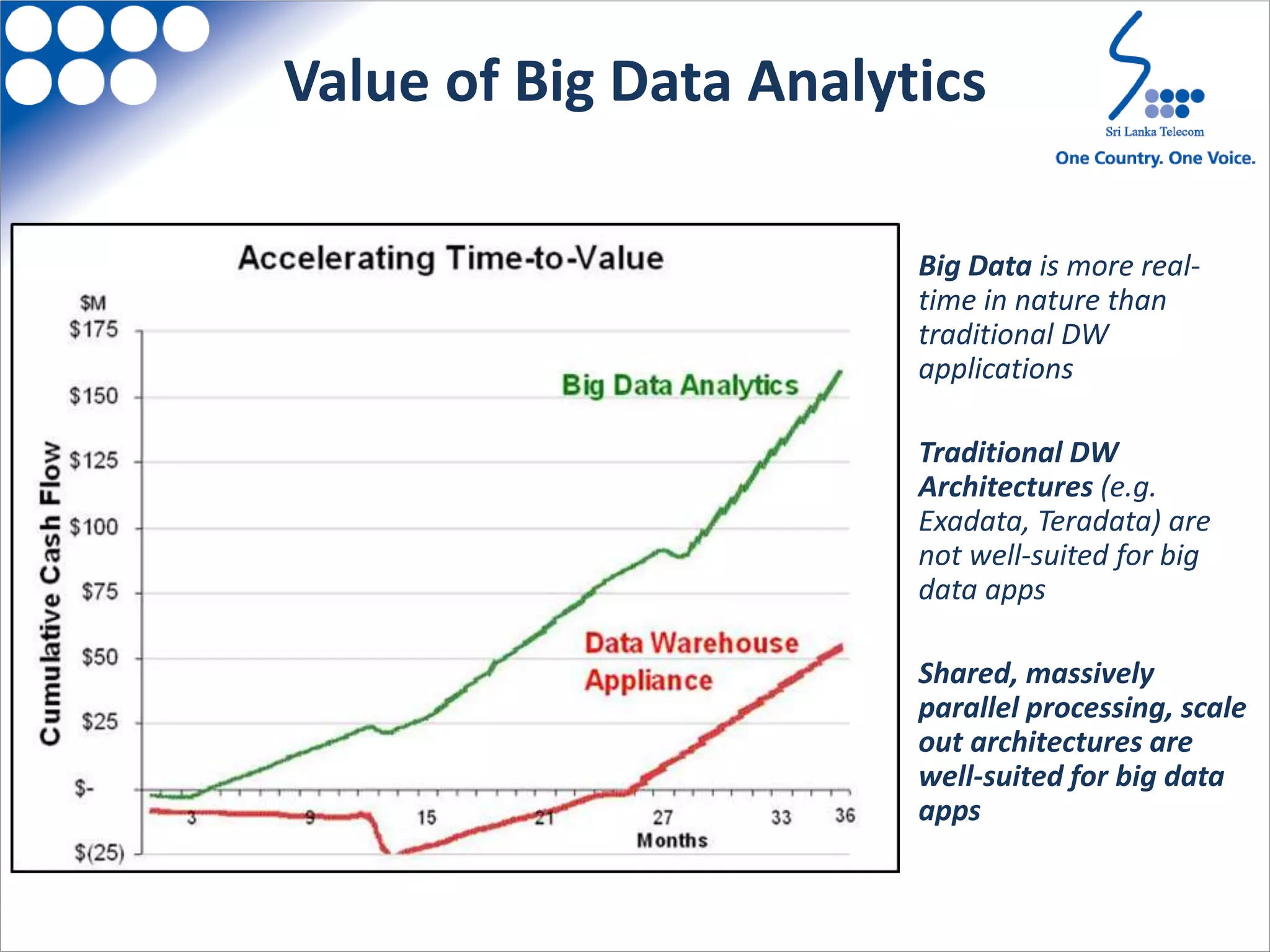

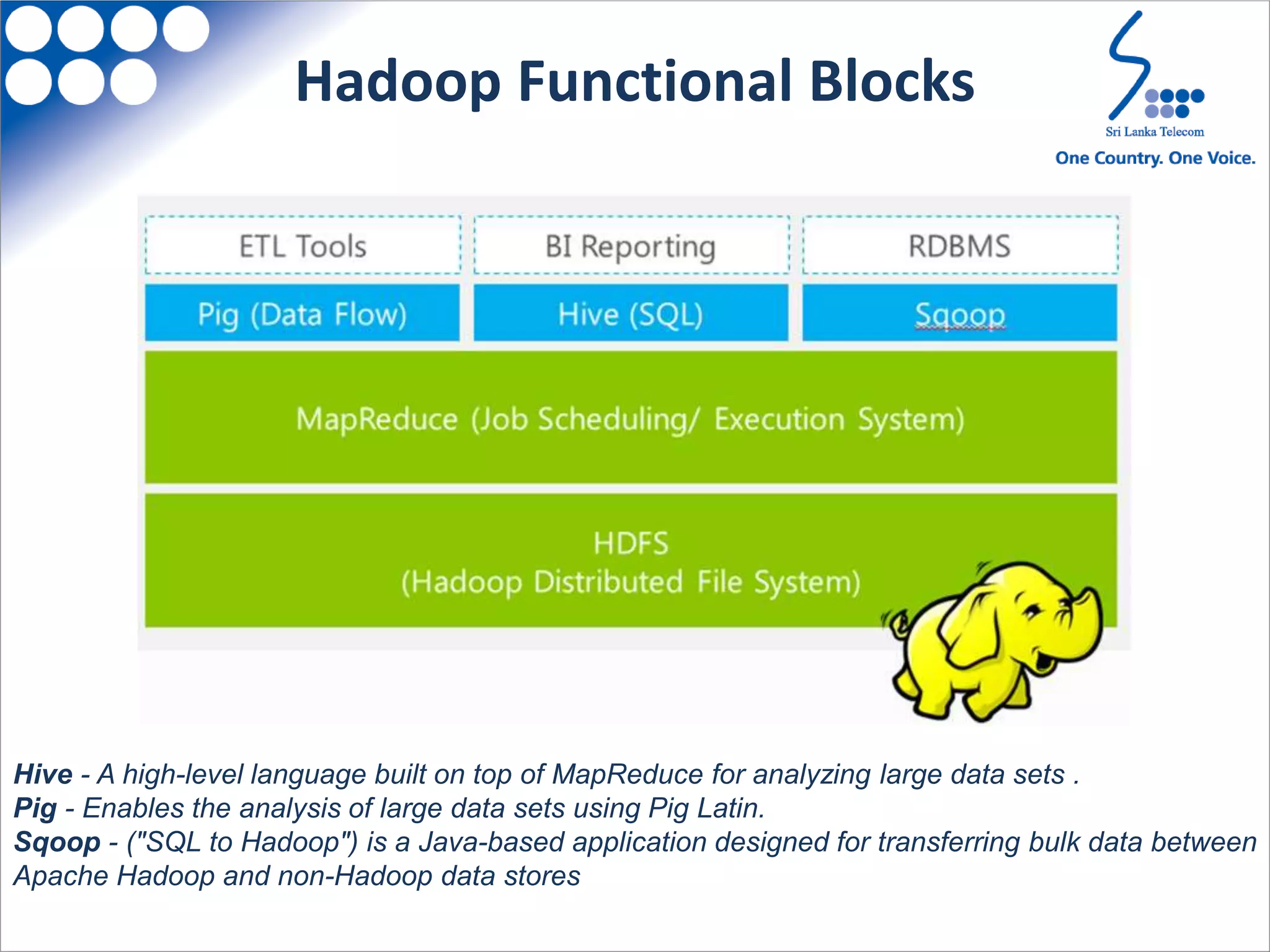

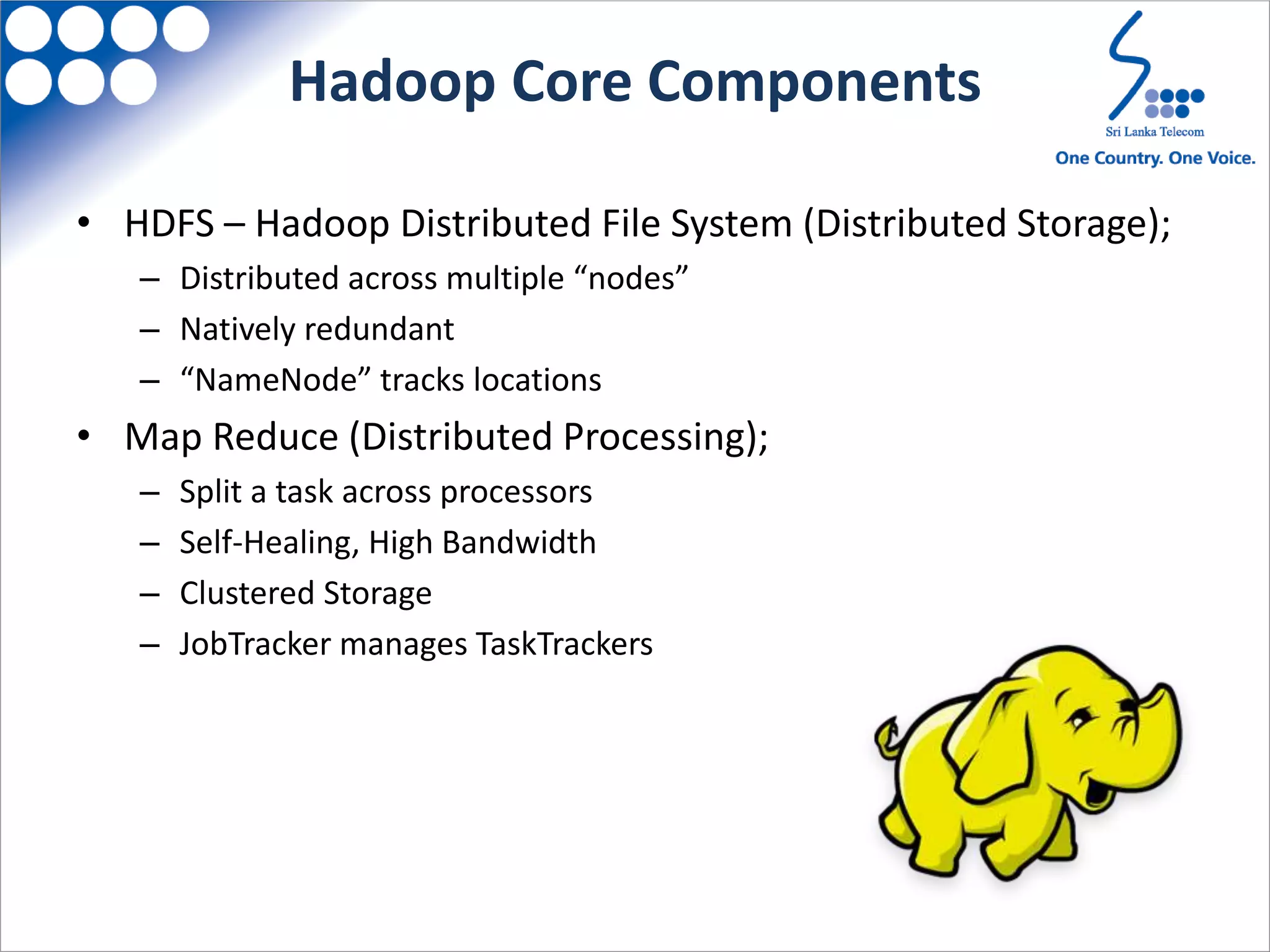

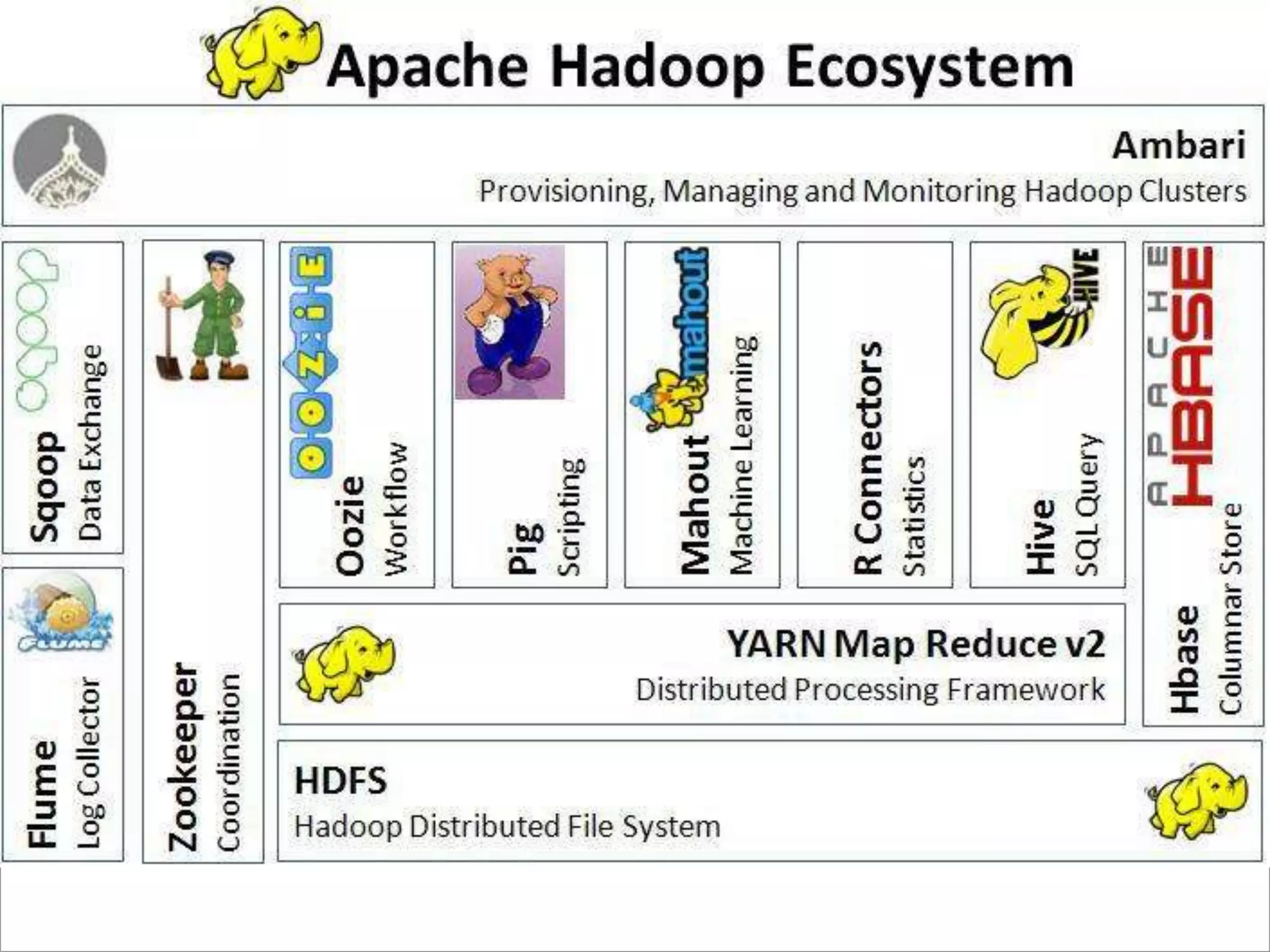

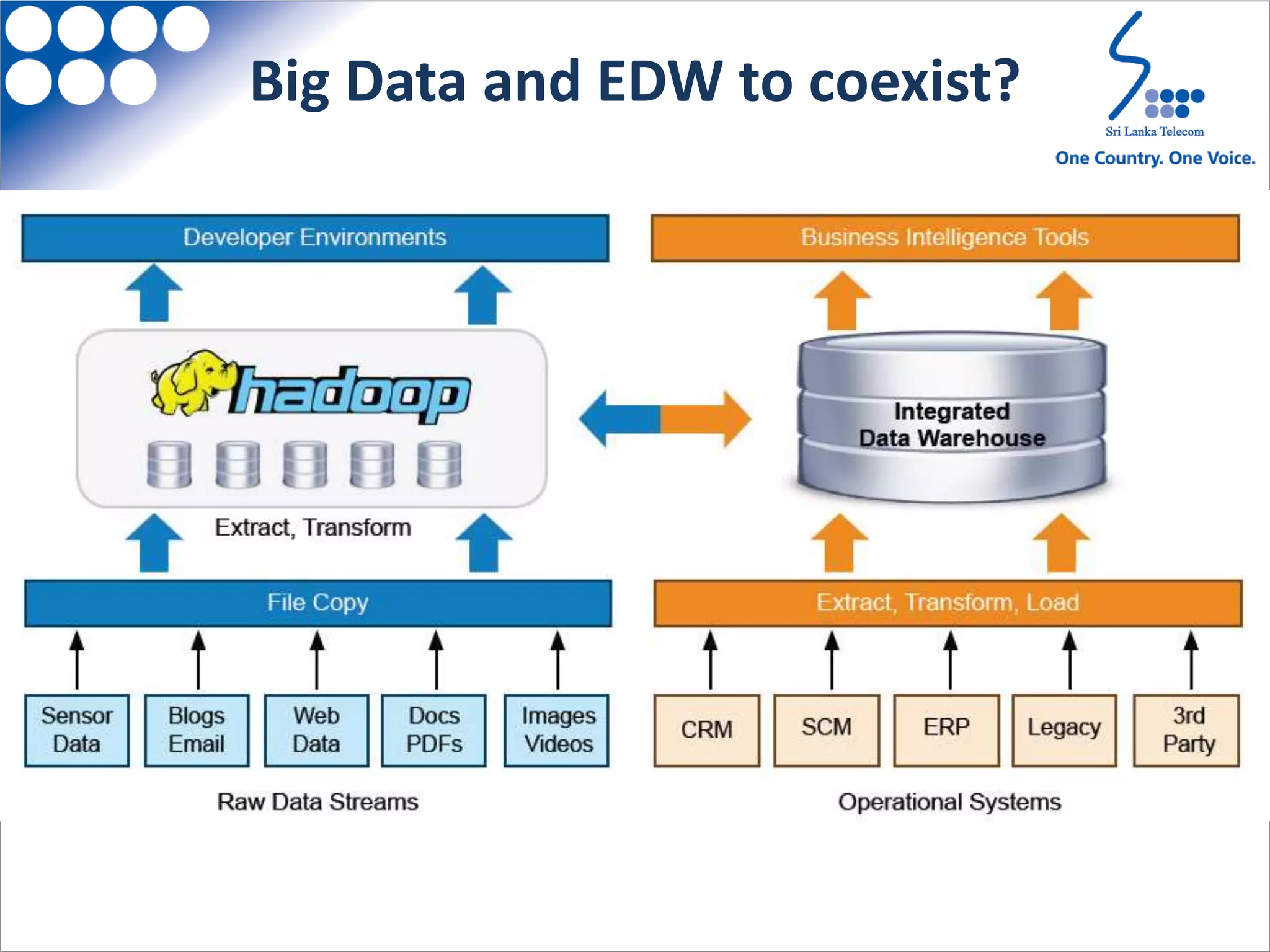

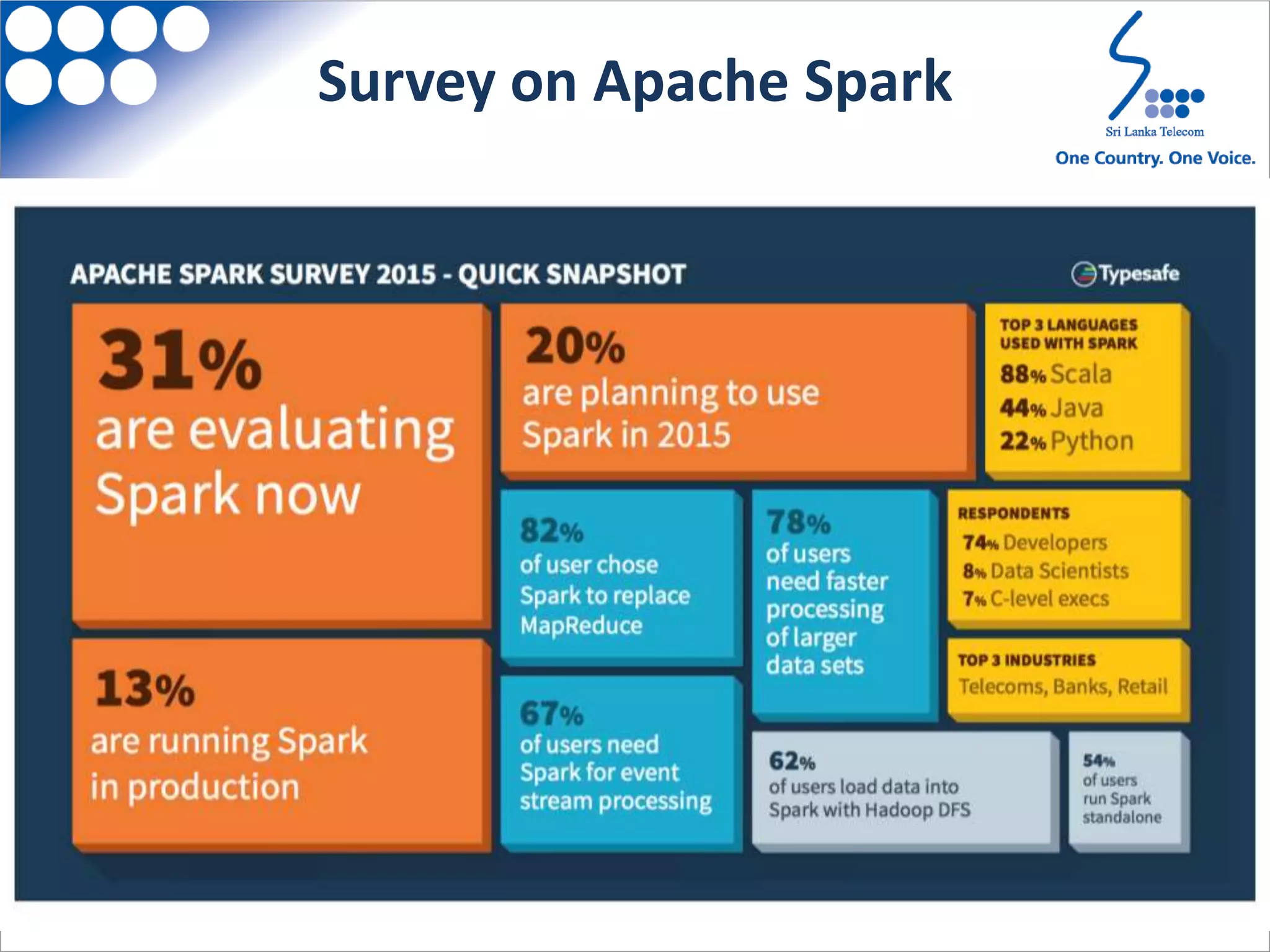

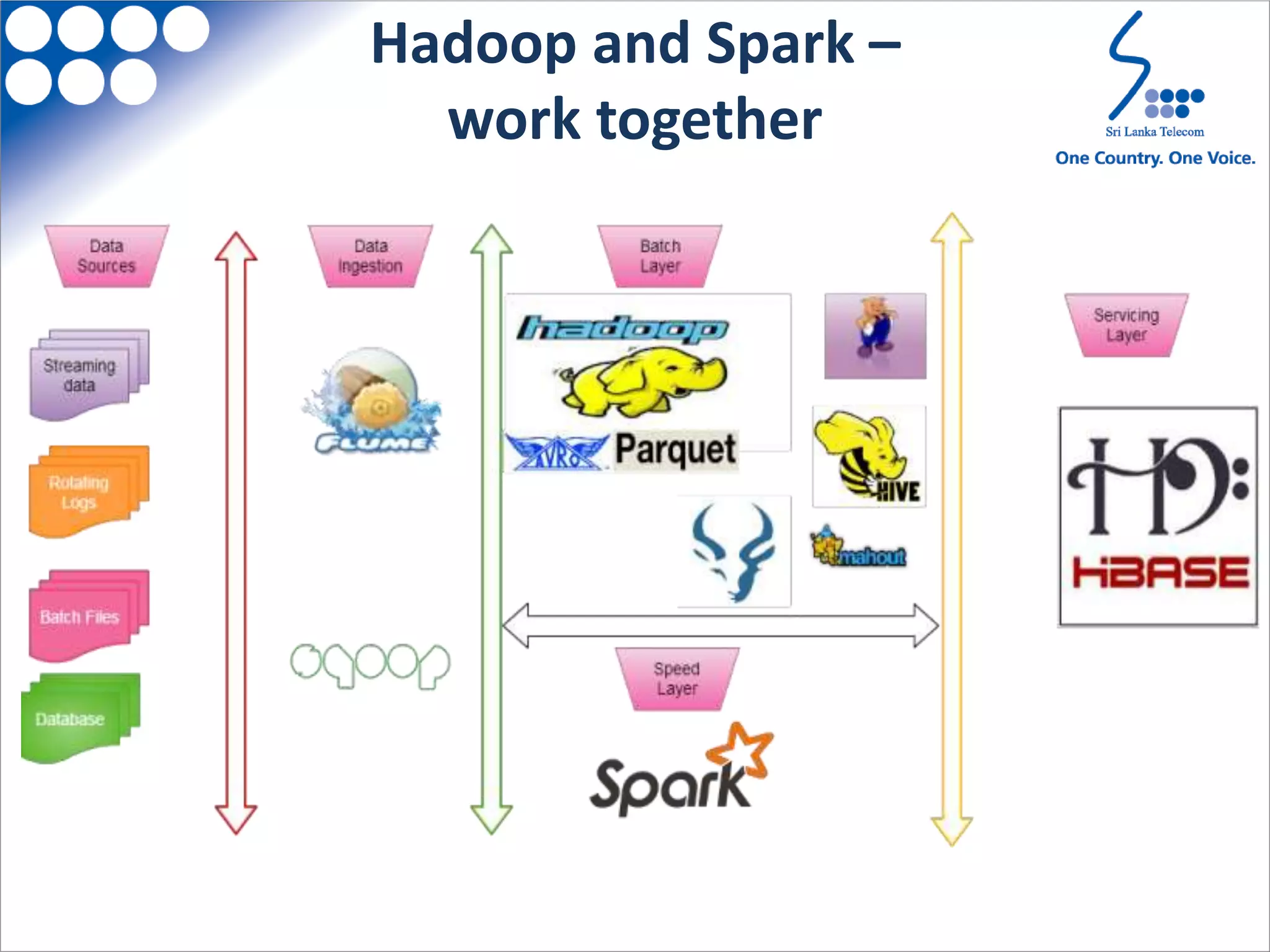

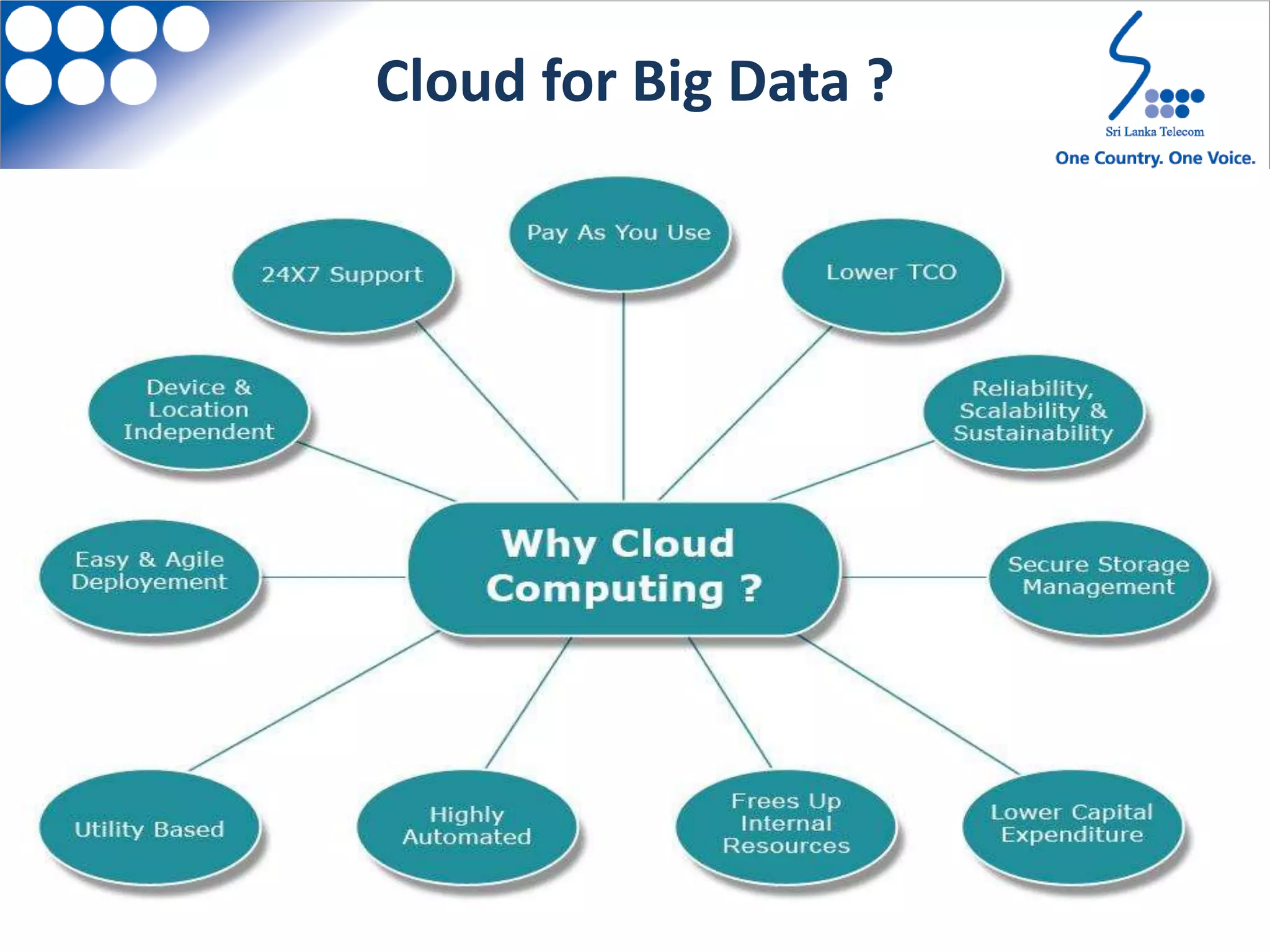

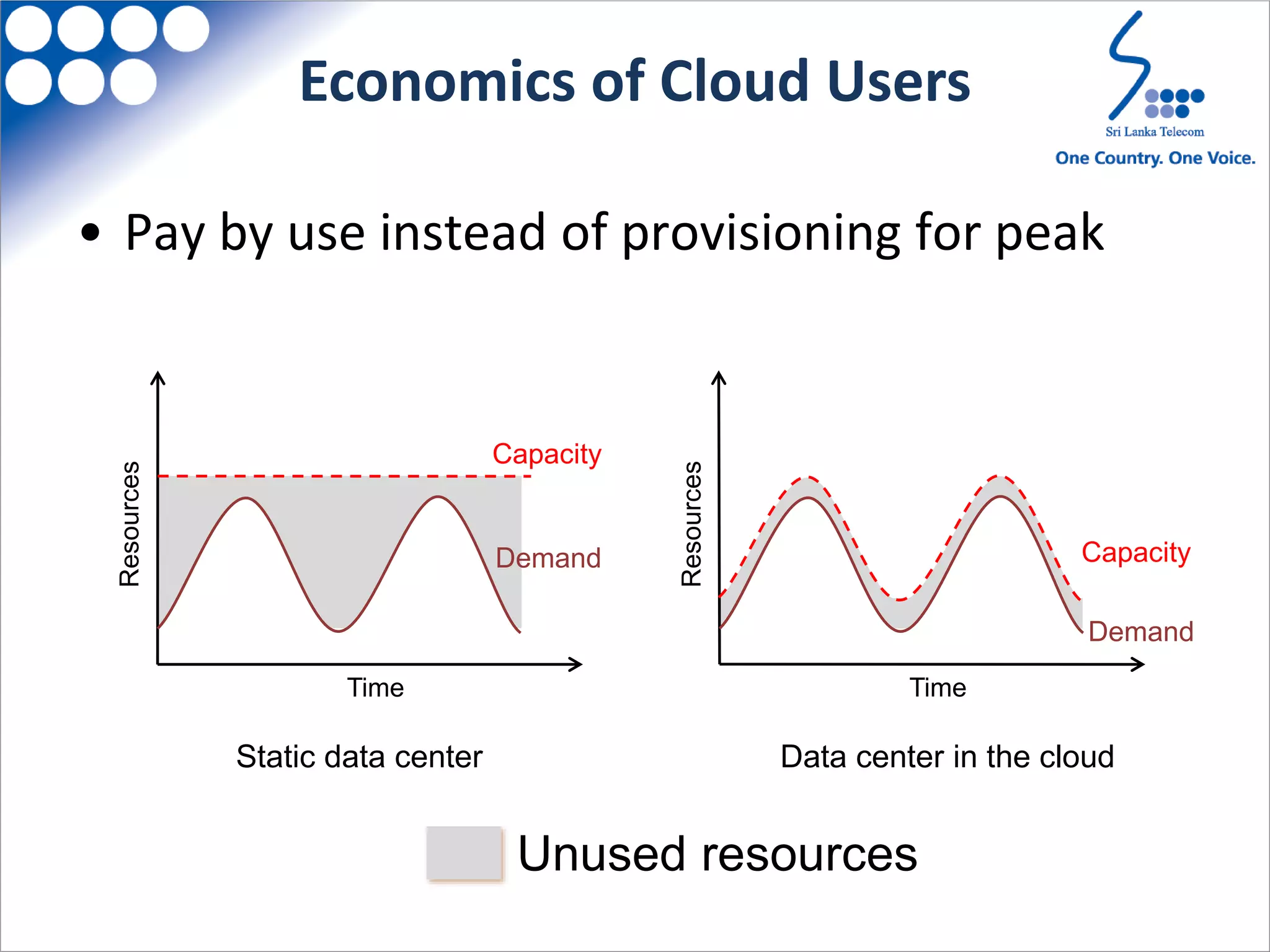

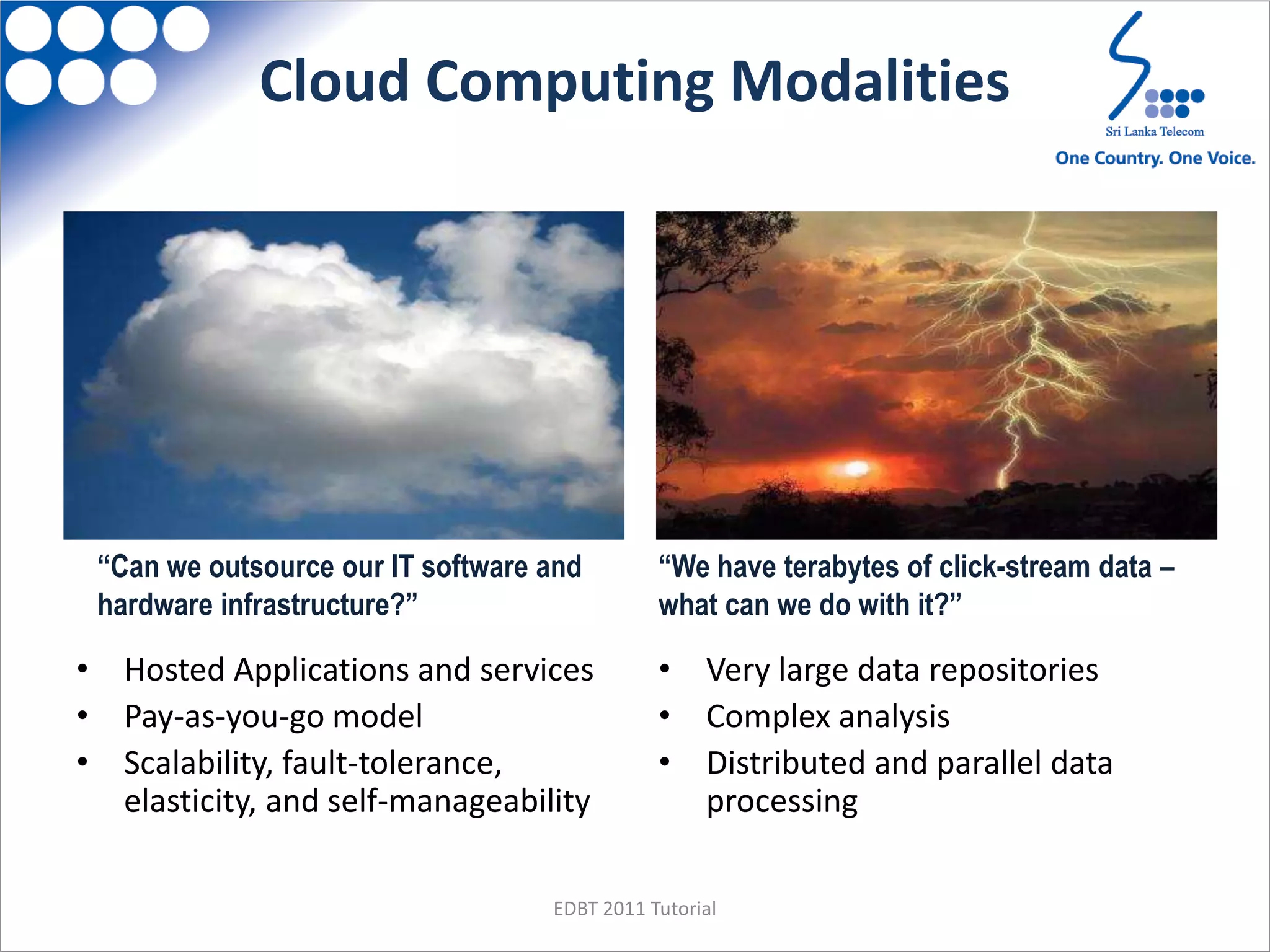

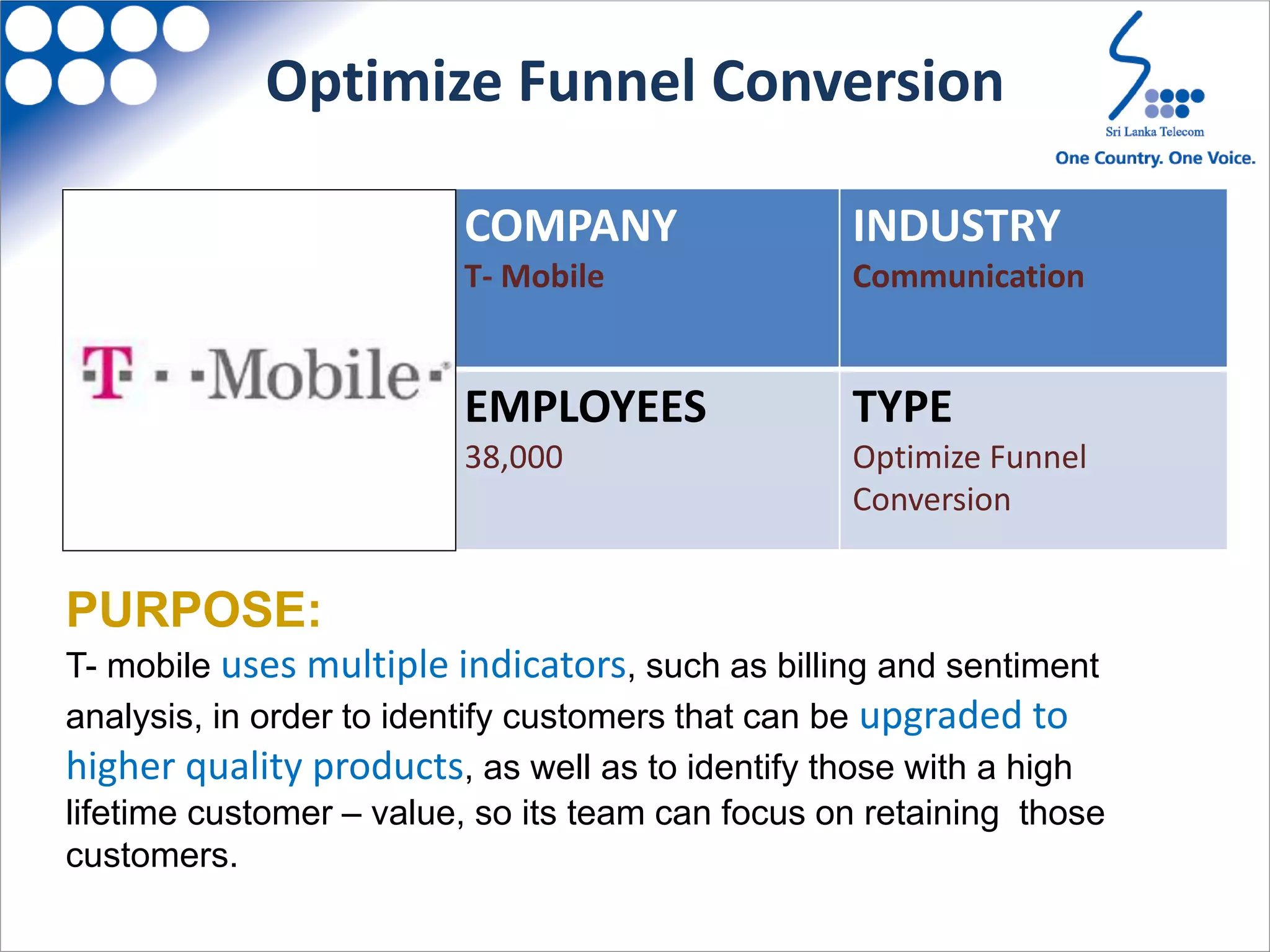

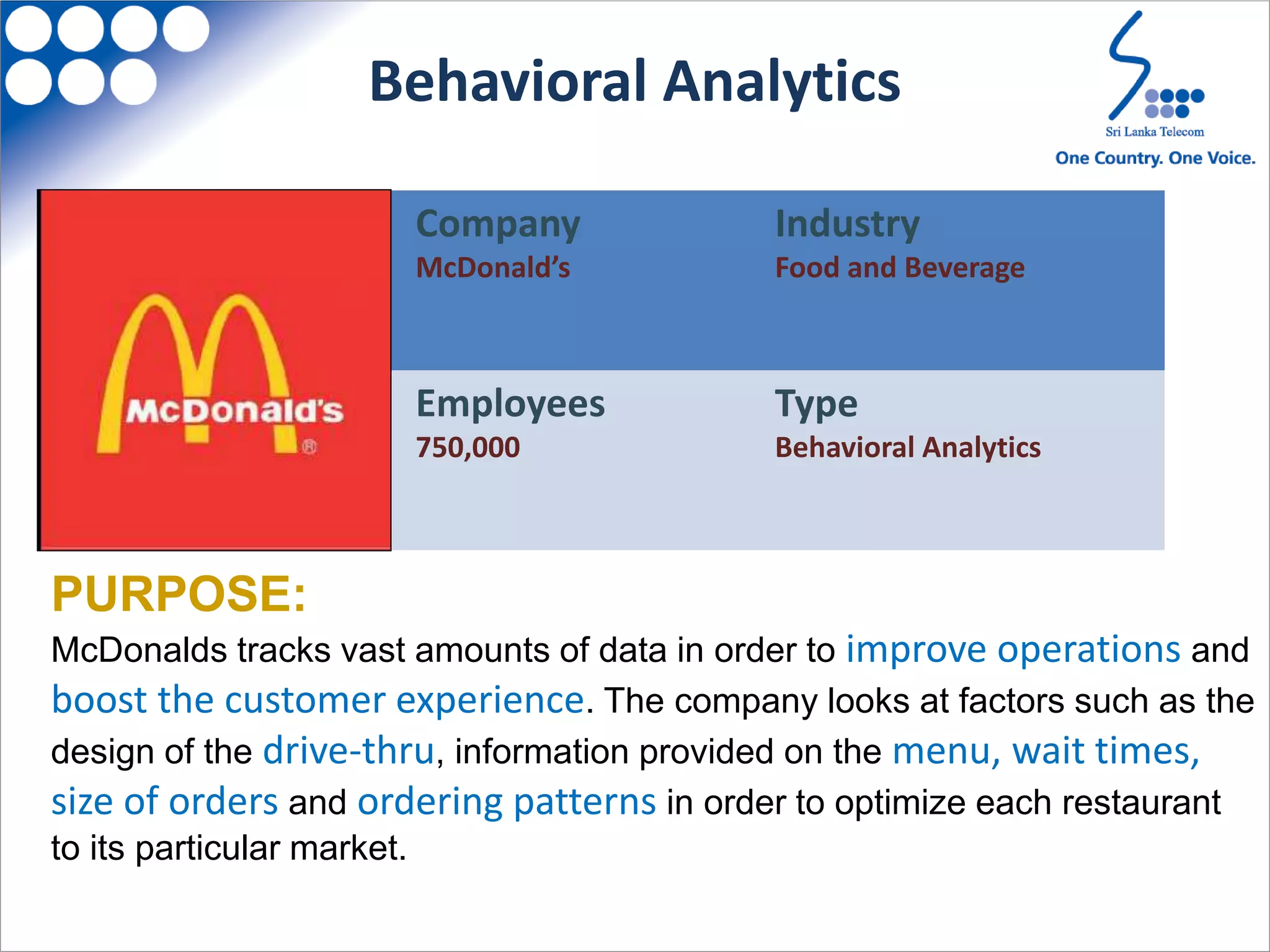

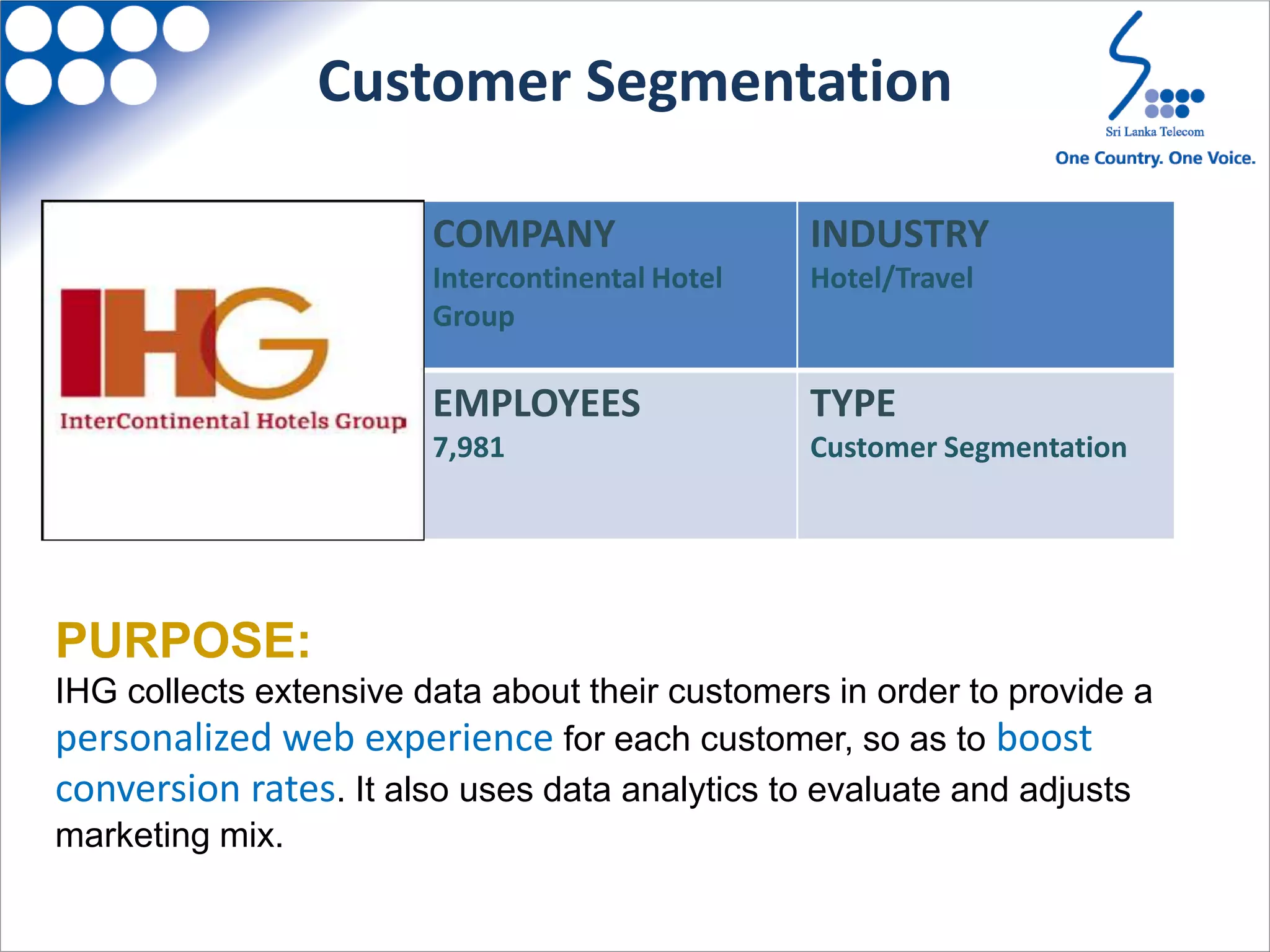

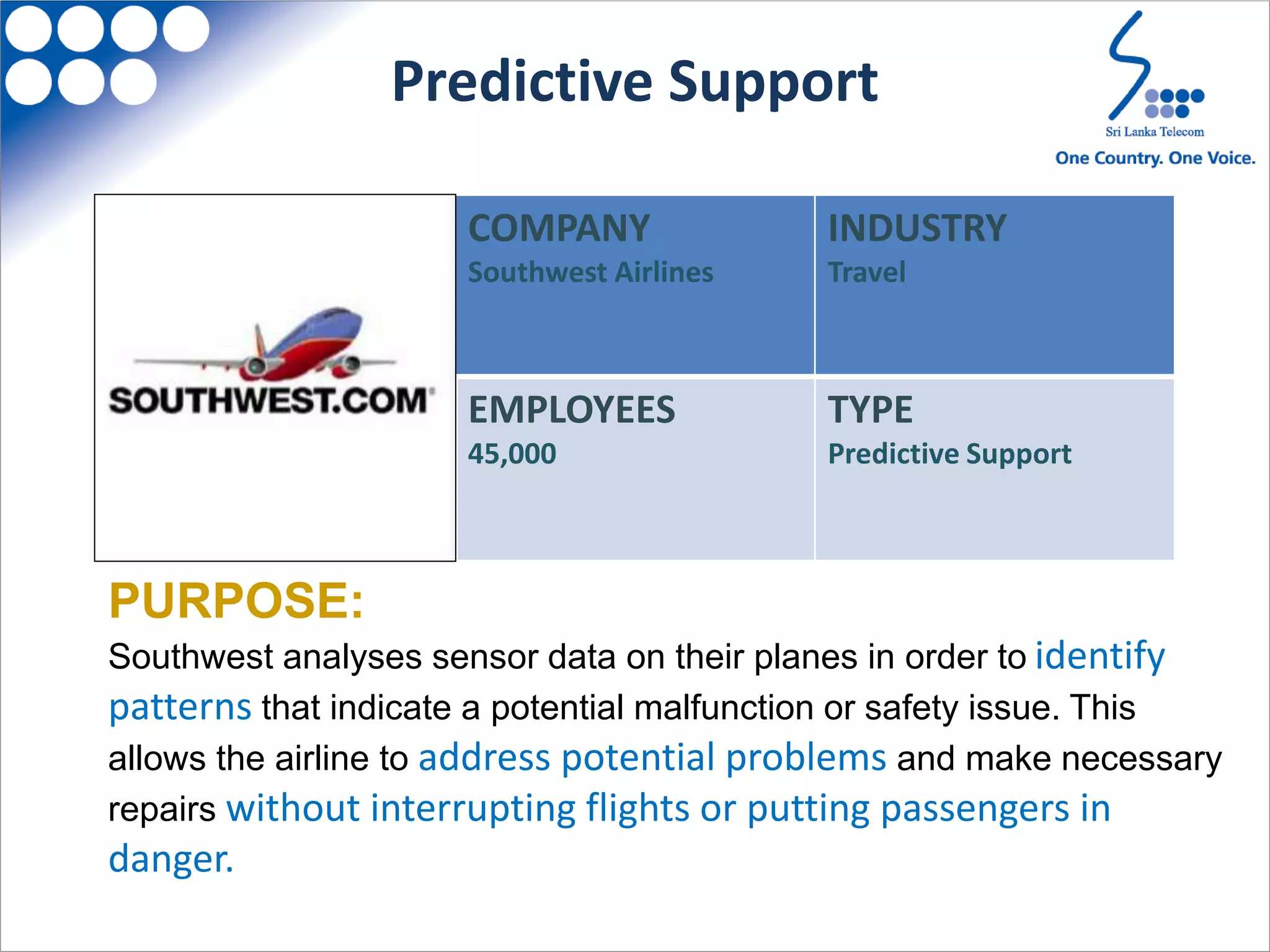

According to the author, cloud-based big data solutions are the future. Big data analytics and cloud computing both offer organizations valuable insights, innovations, and revenue opportunities, while also improving agility and efficiency and reducing costs. Hadoop is commonly used for big data, but Spark is emerging as a more flexible alternative. The cloud is becoming a popular option for big data because of its elastic infrastructure and the ability to process data close to its source. Common big data use cases include sales funnel optimization, behavioral analytics, customer segmentation, predictive maintenance, and market analysis.