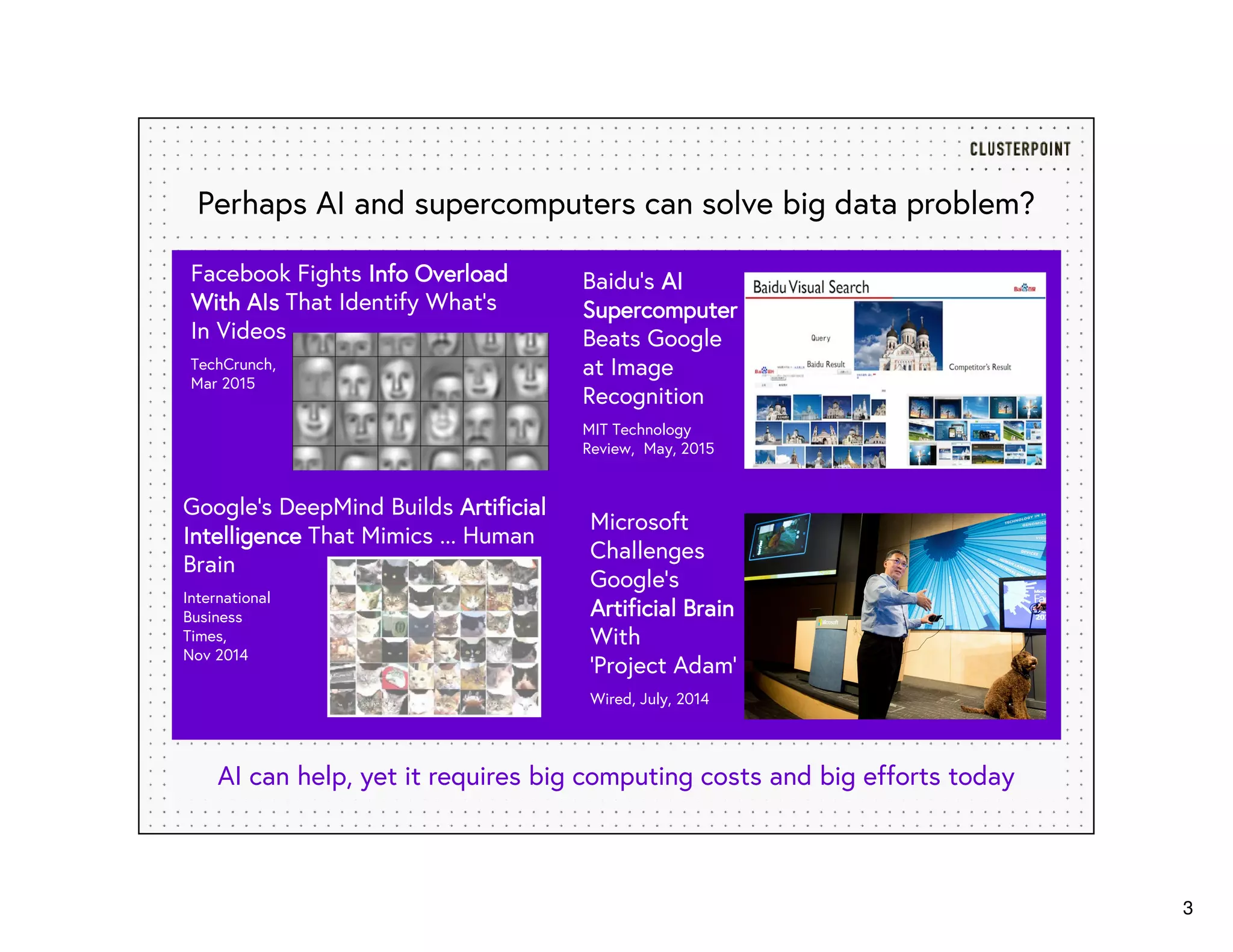

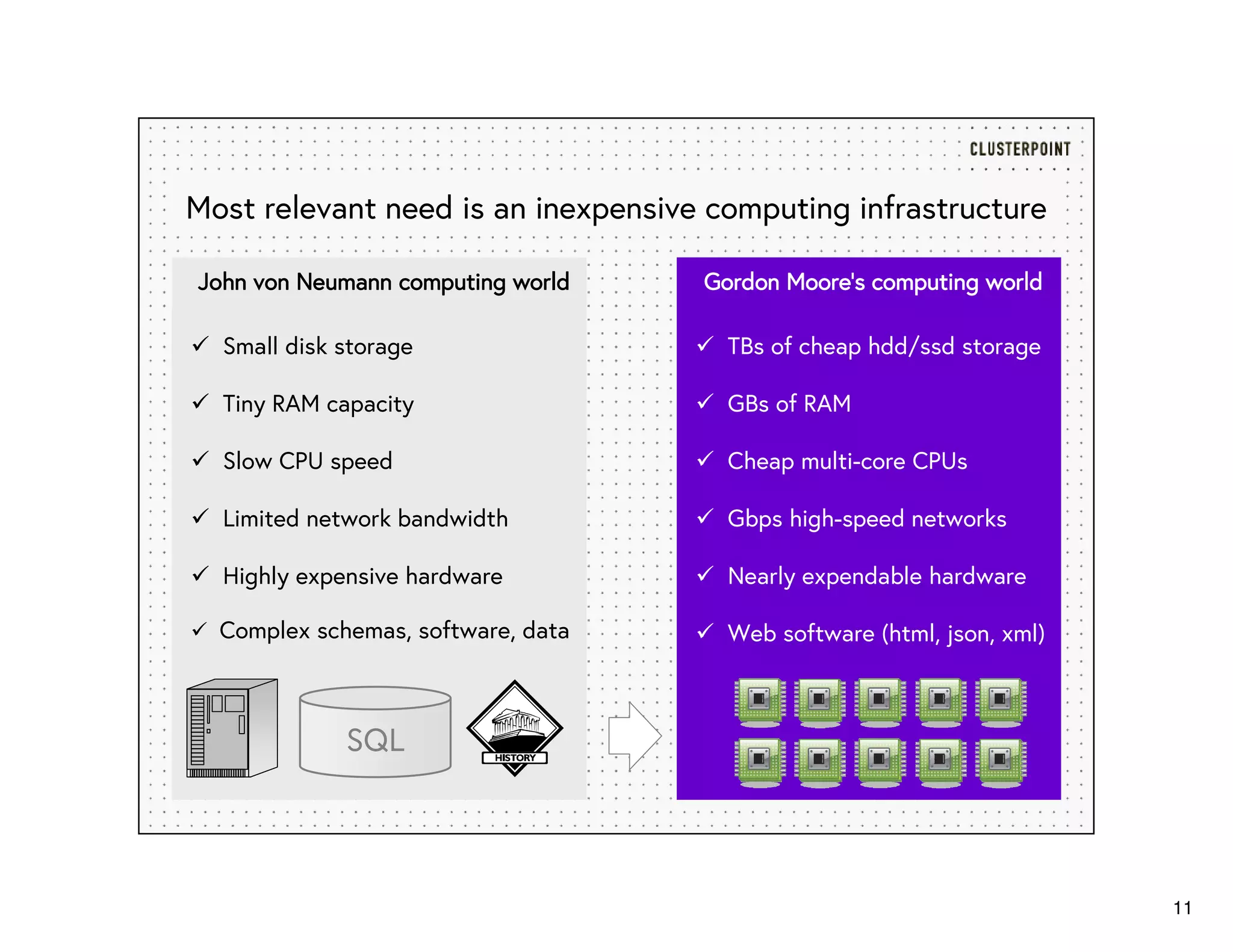

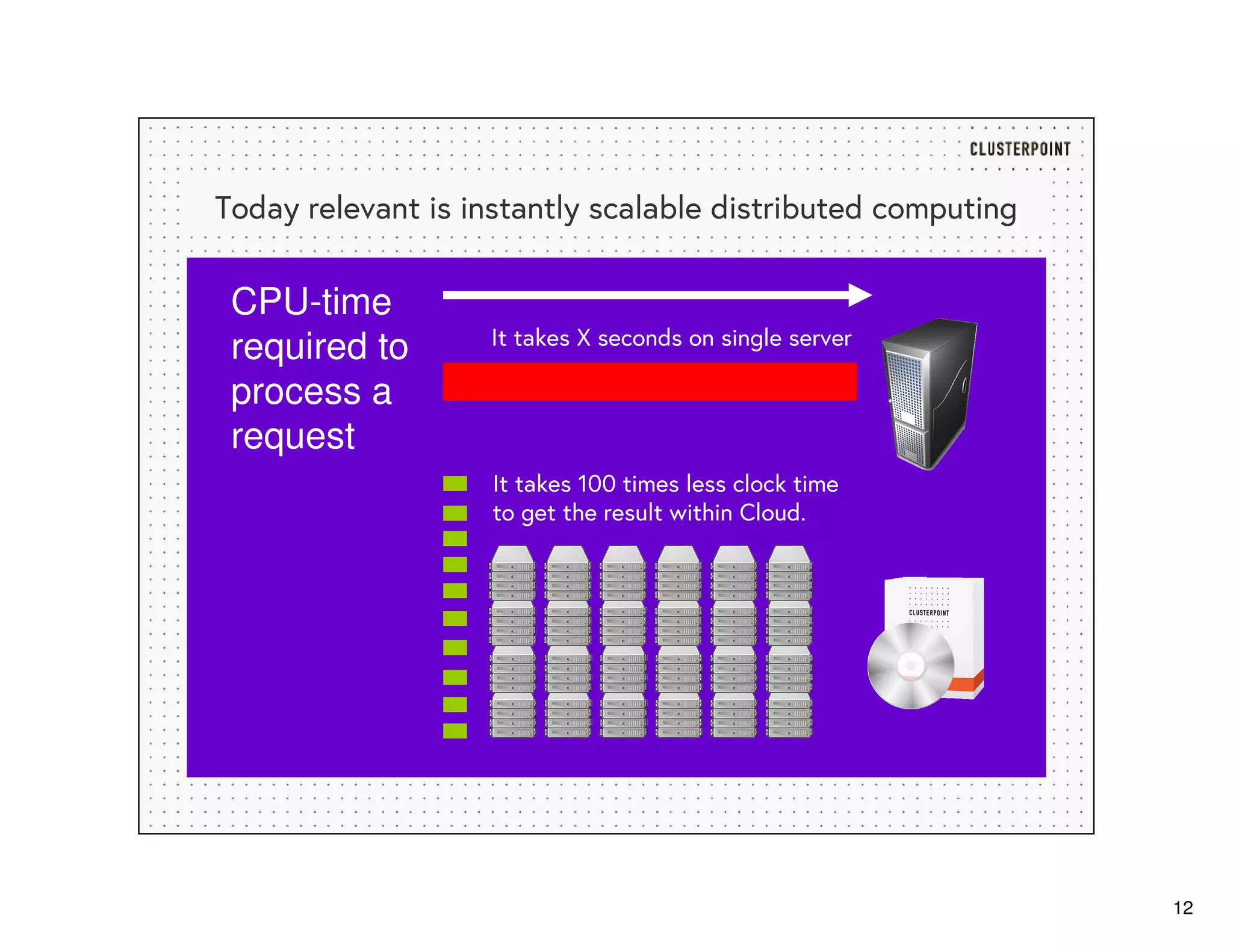

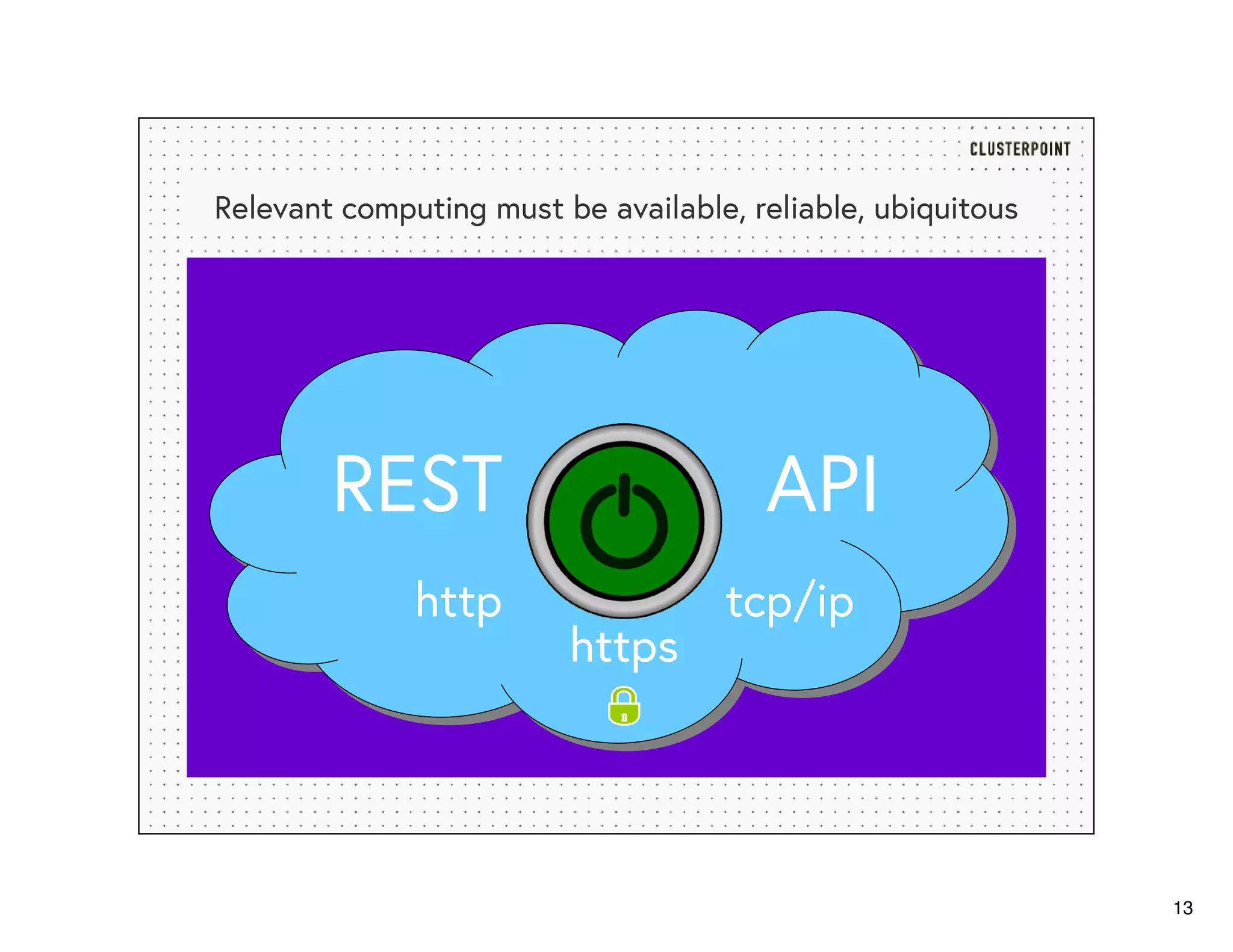

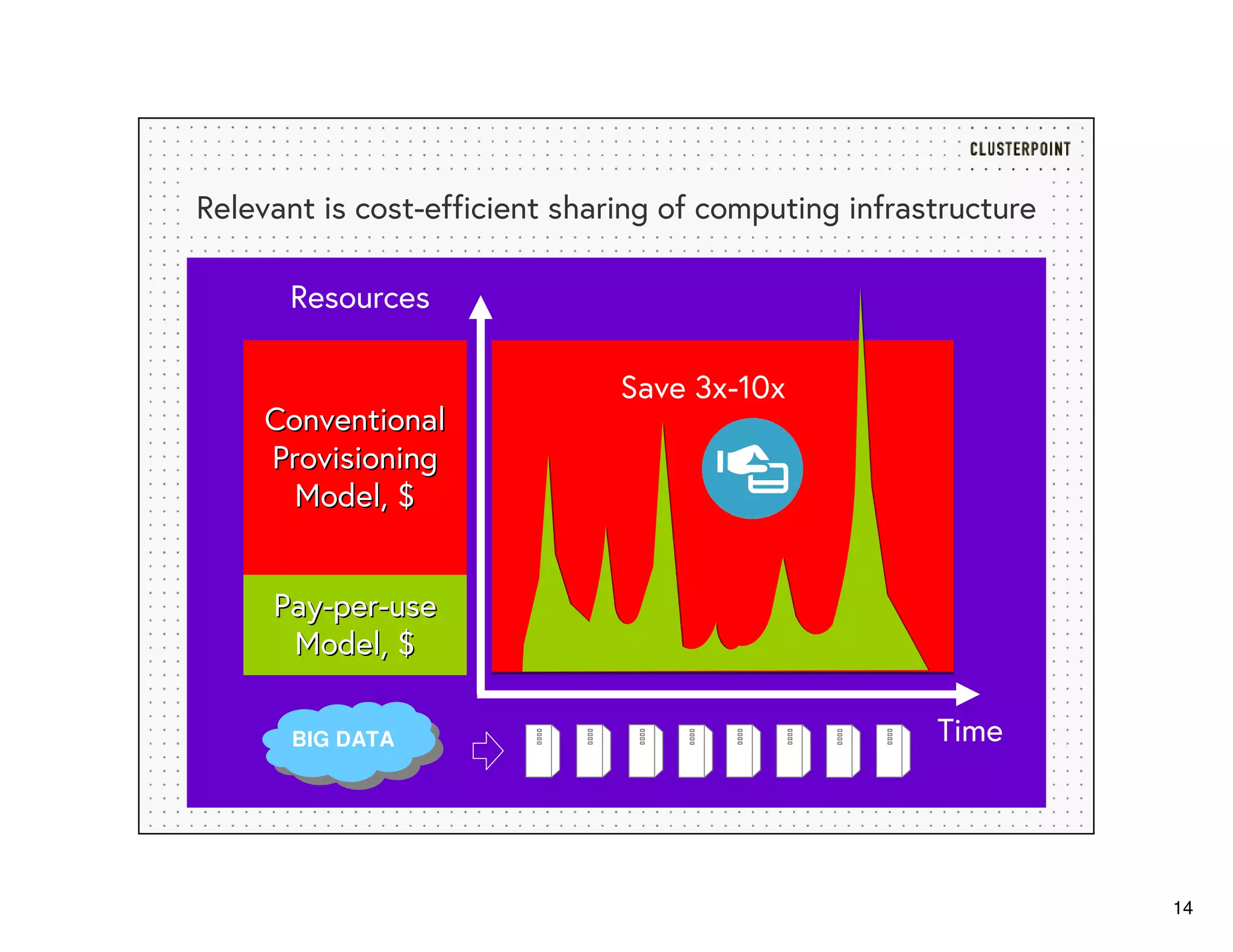

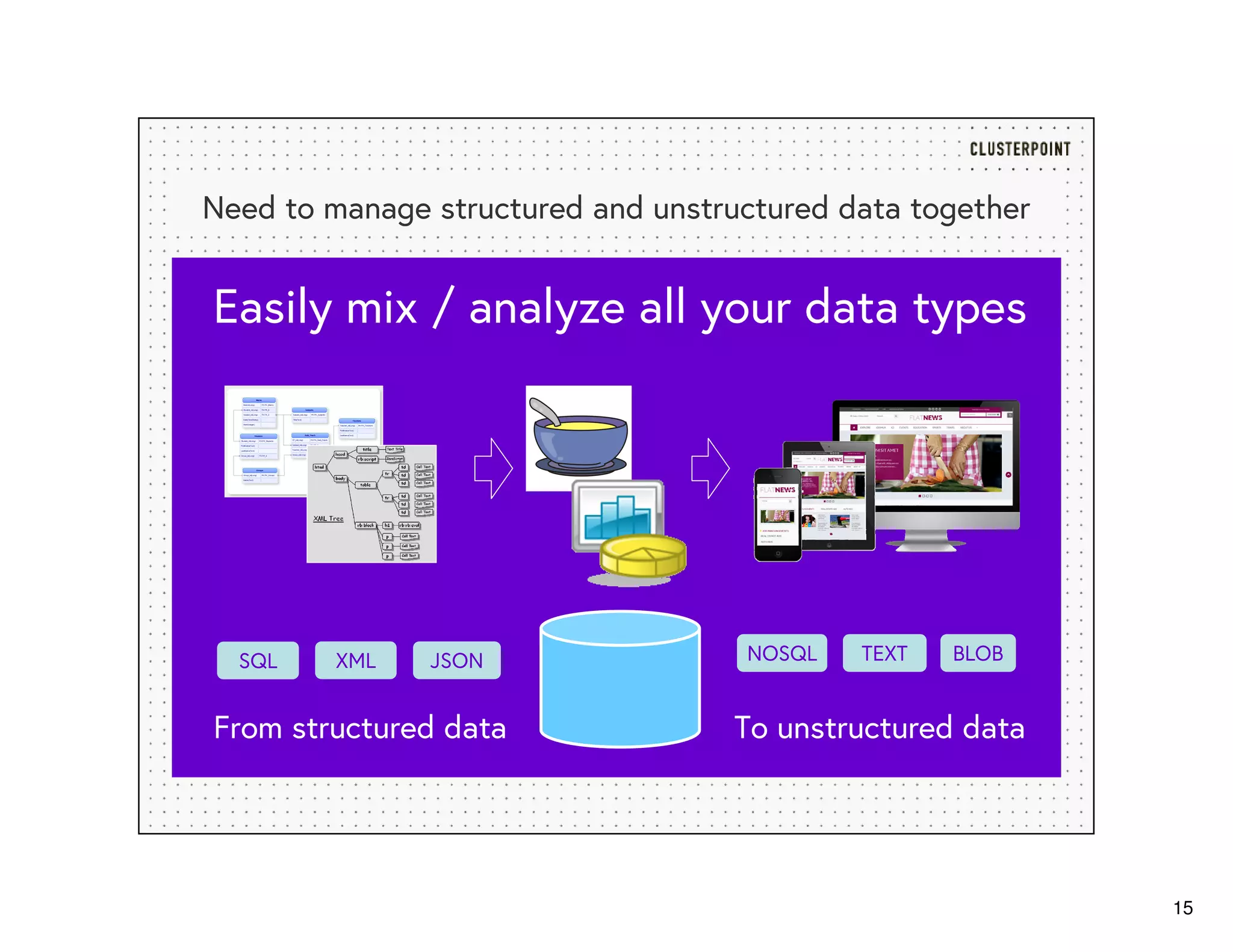

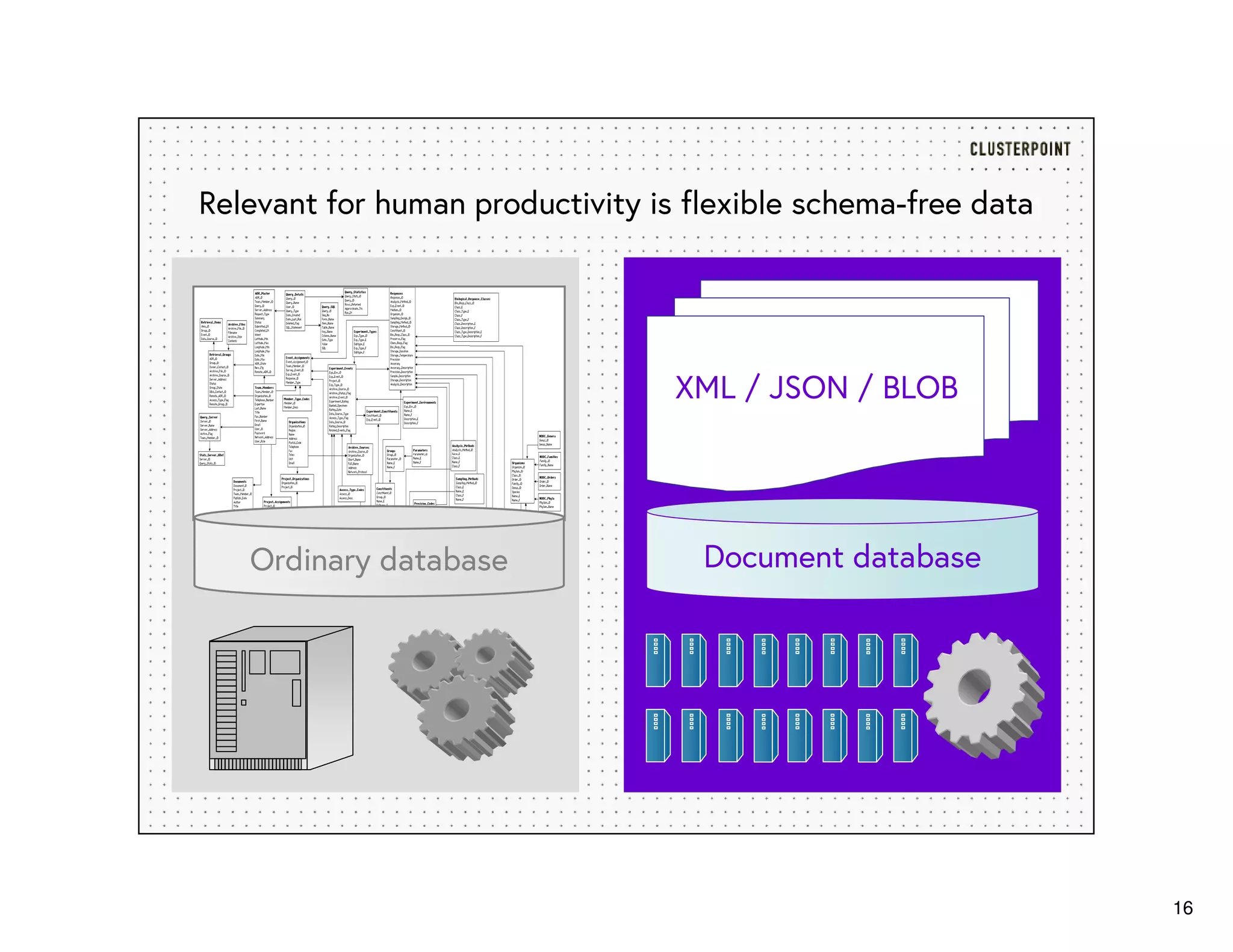

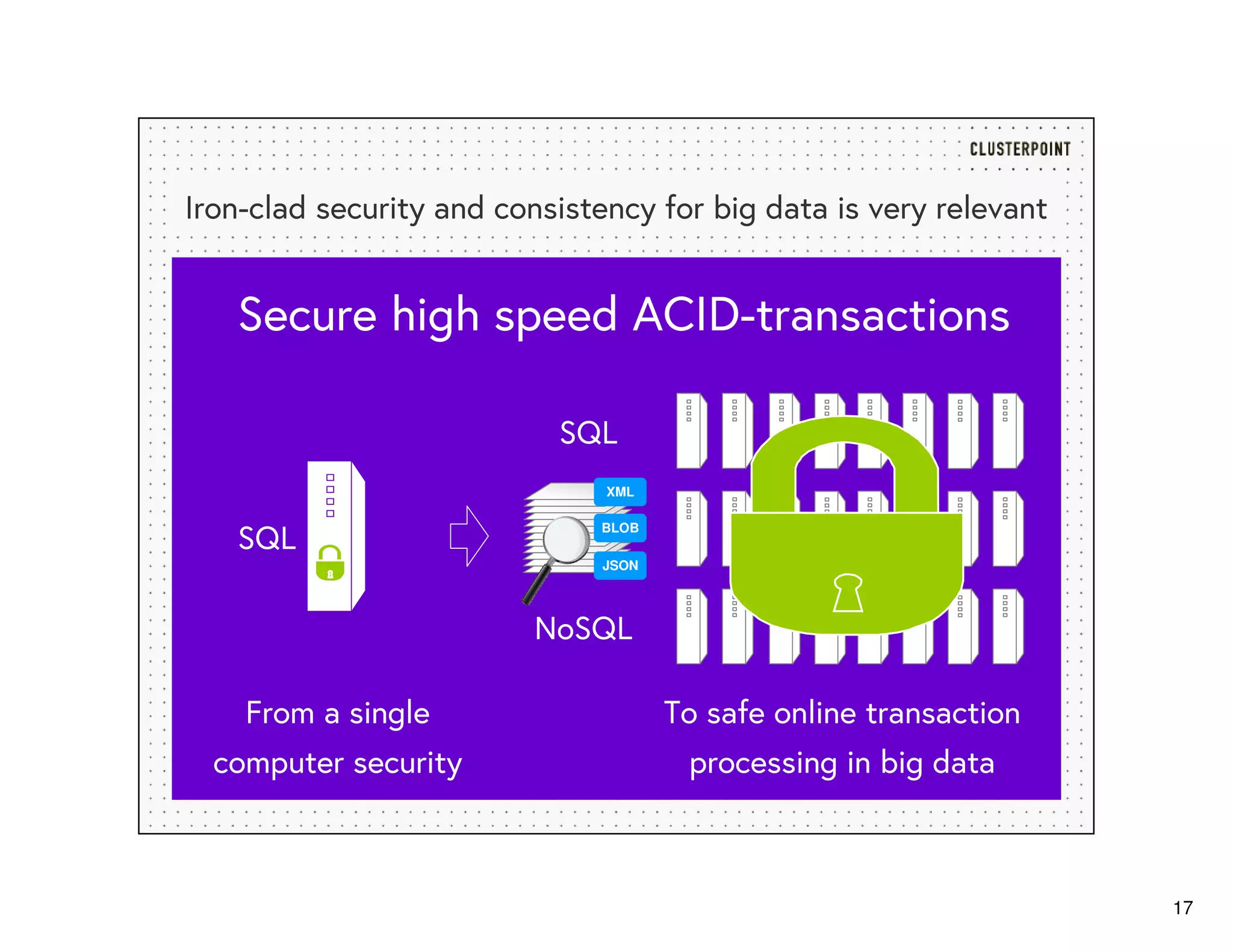

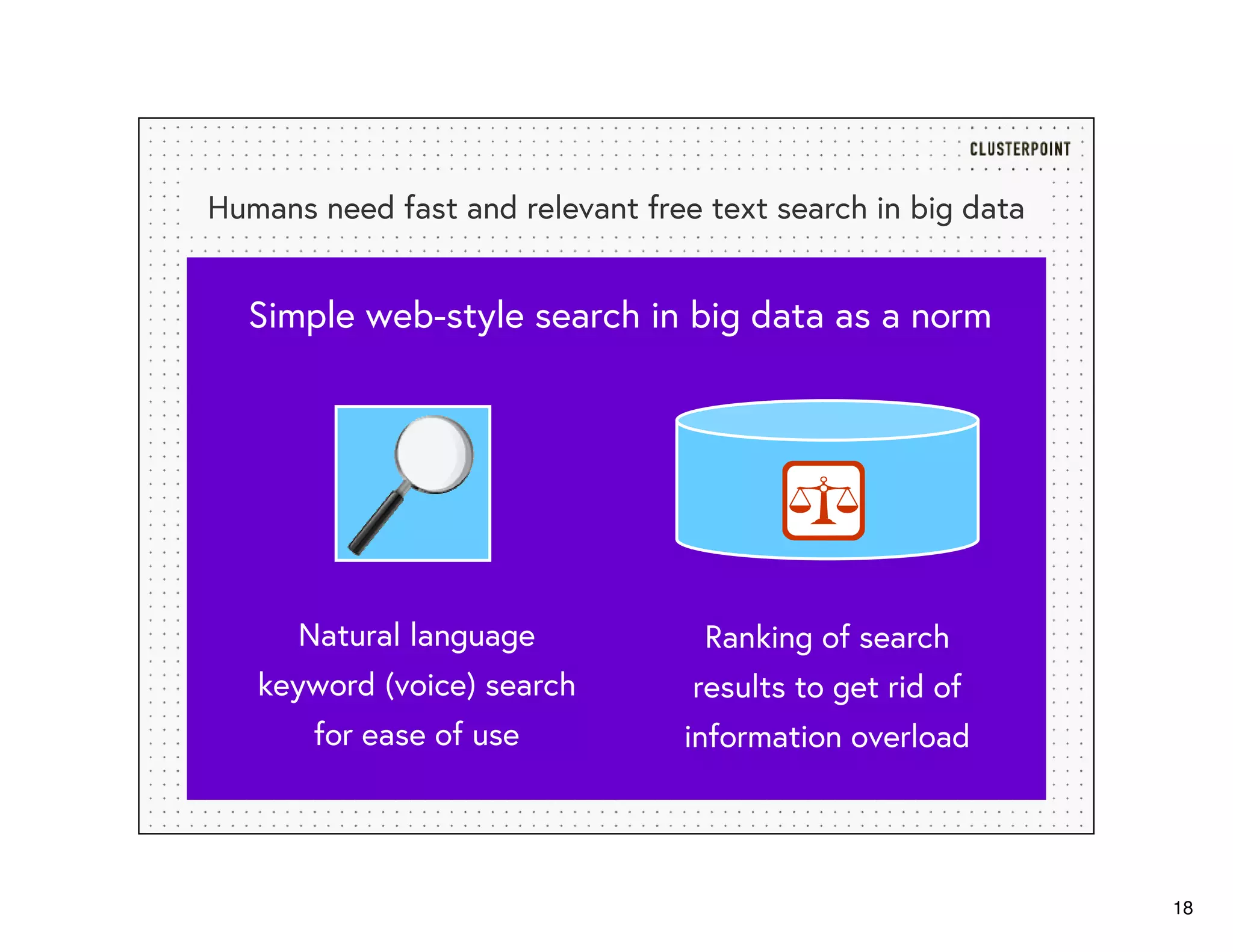

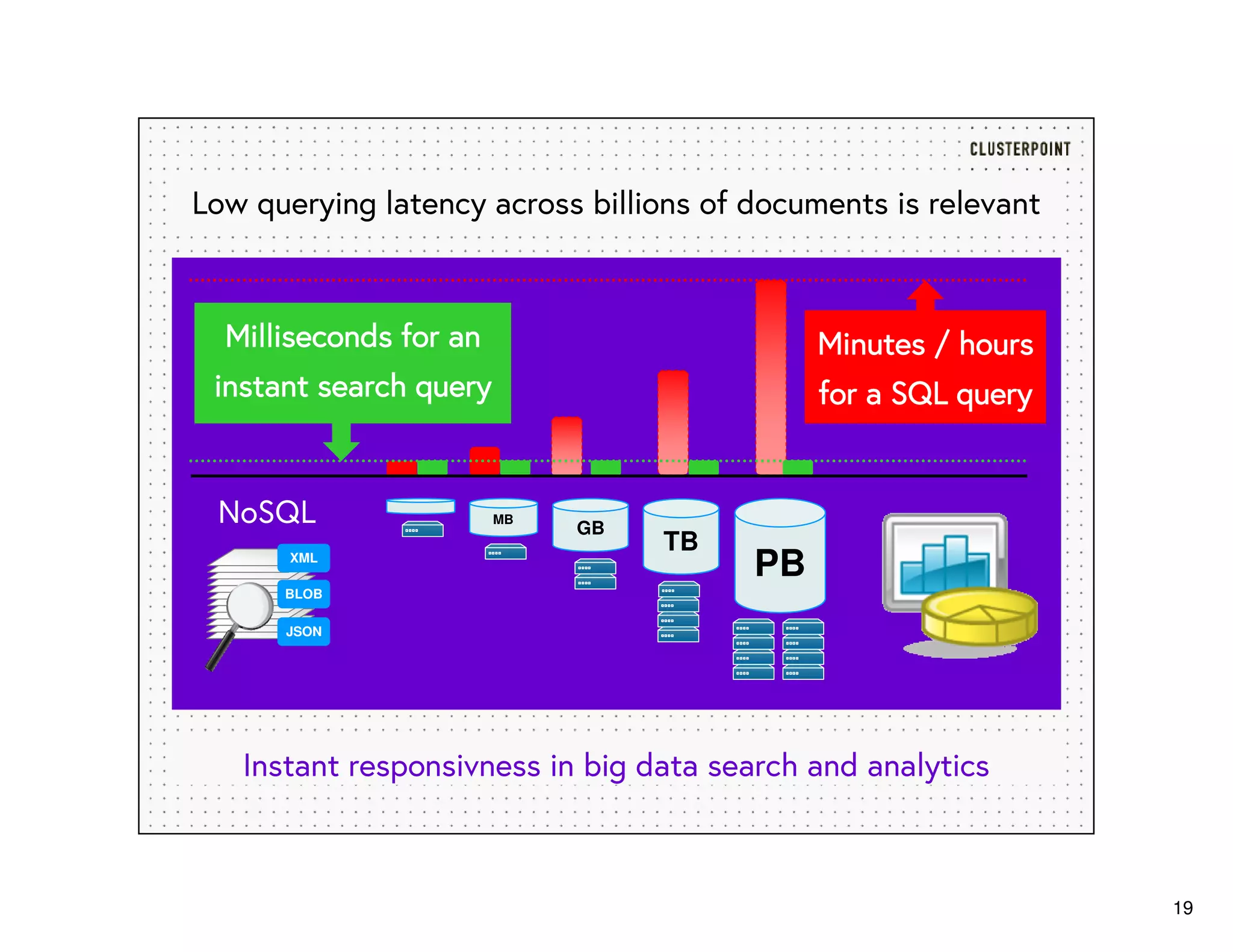

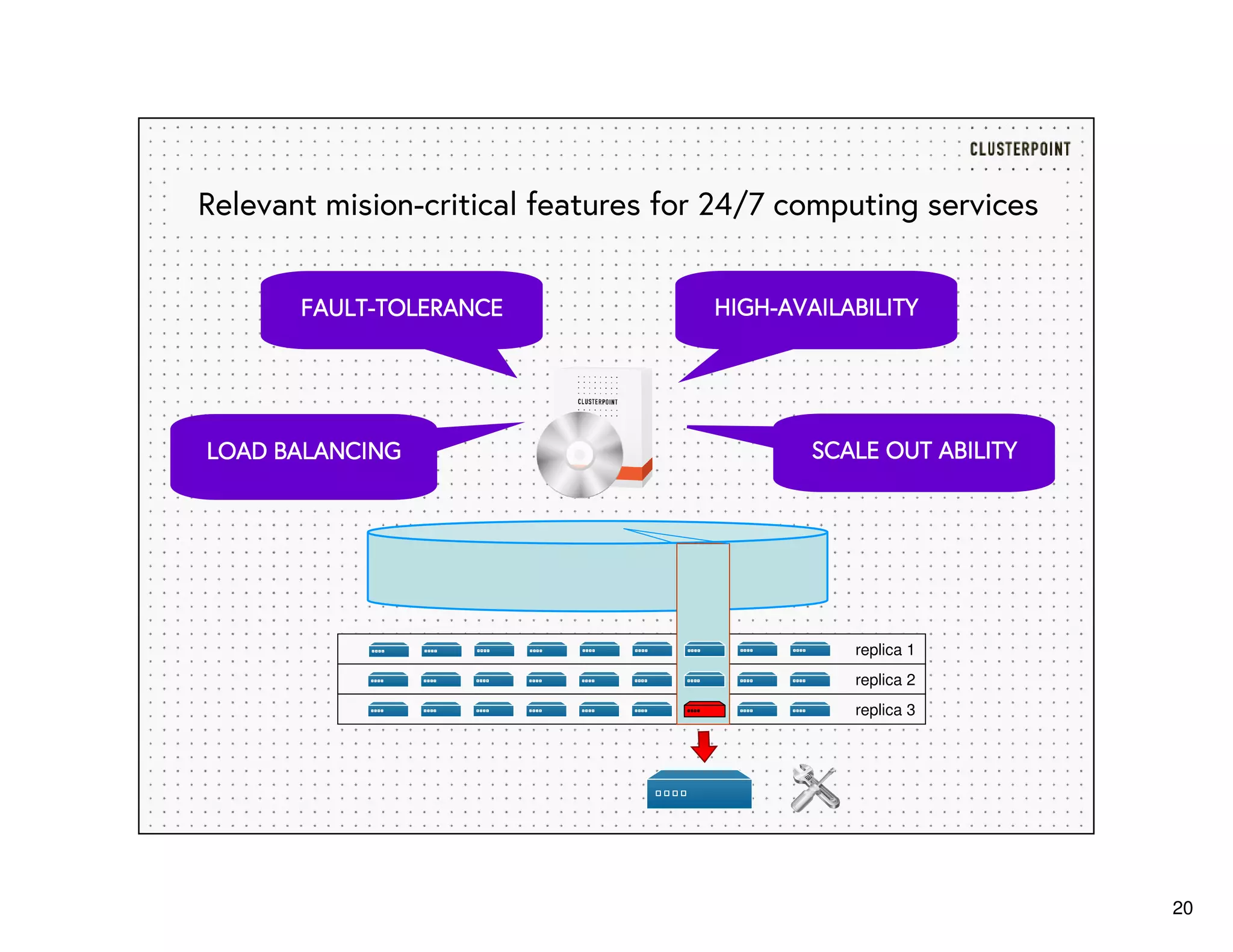

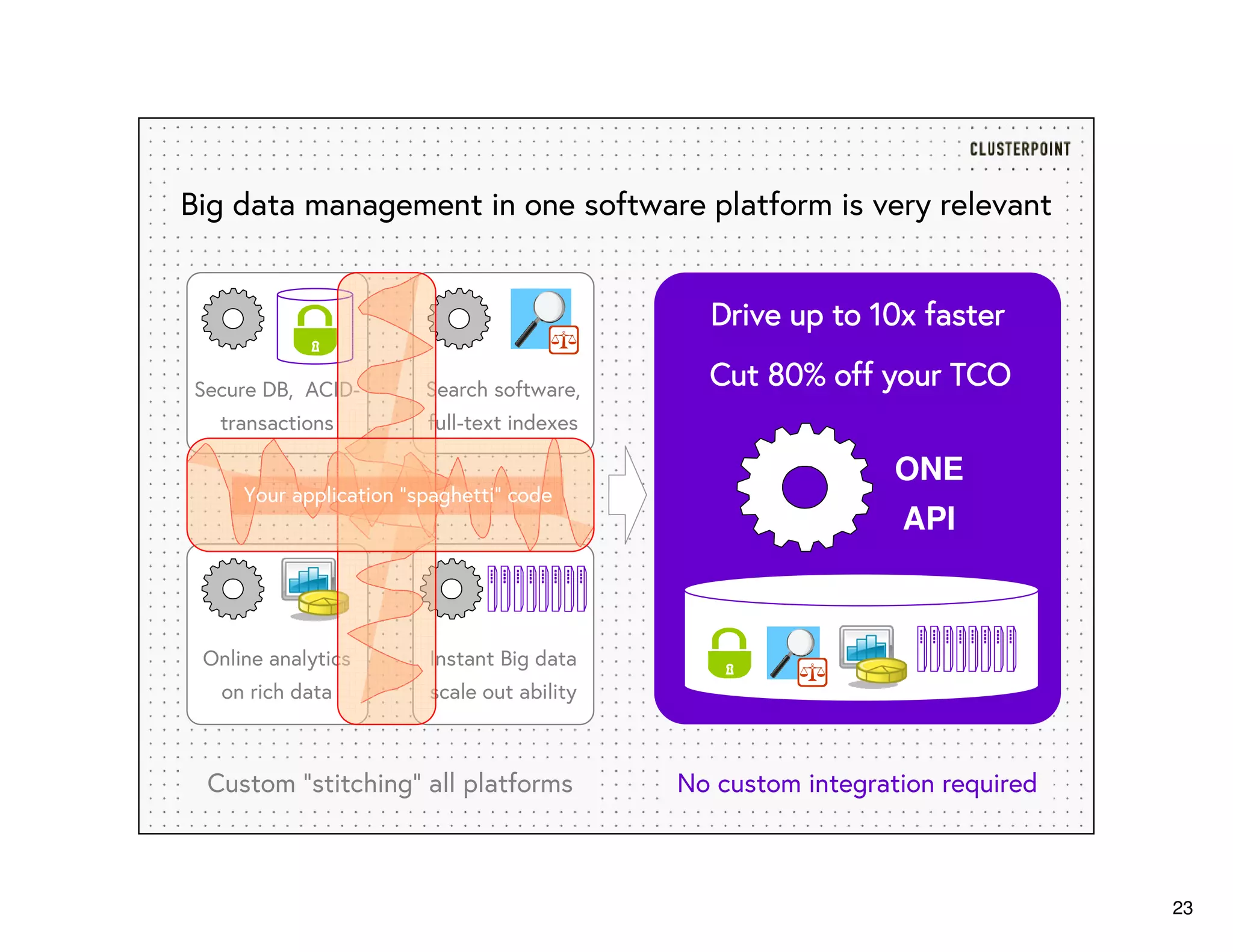

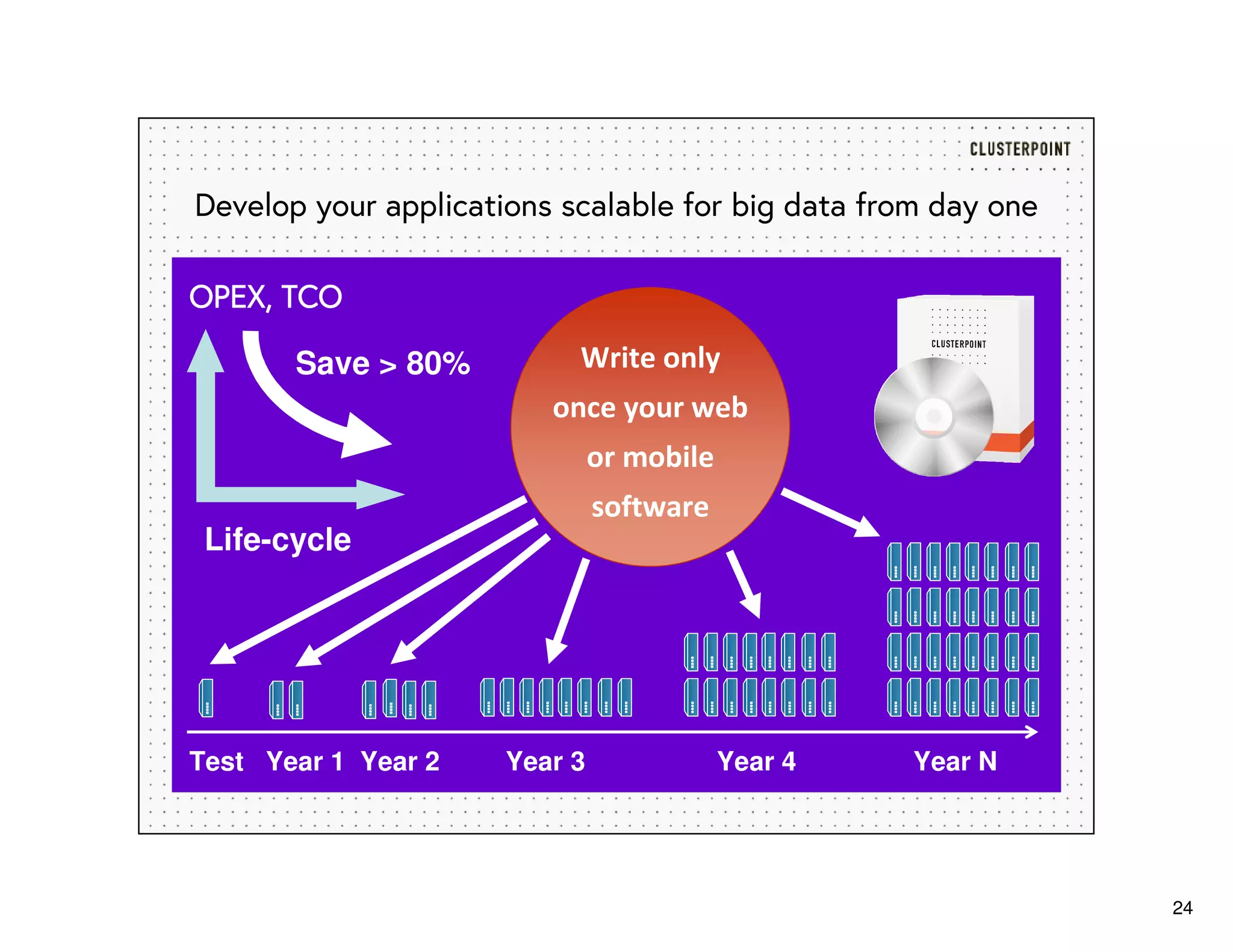

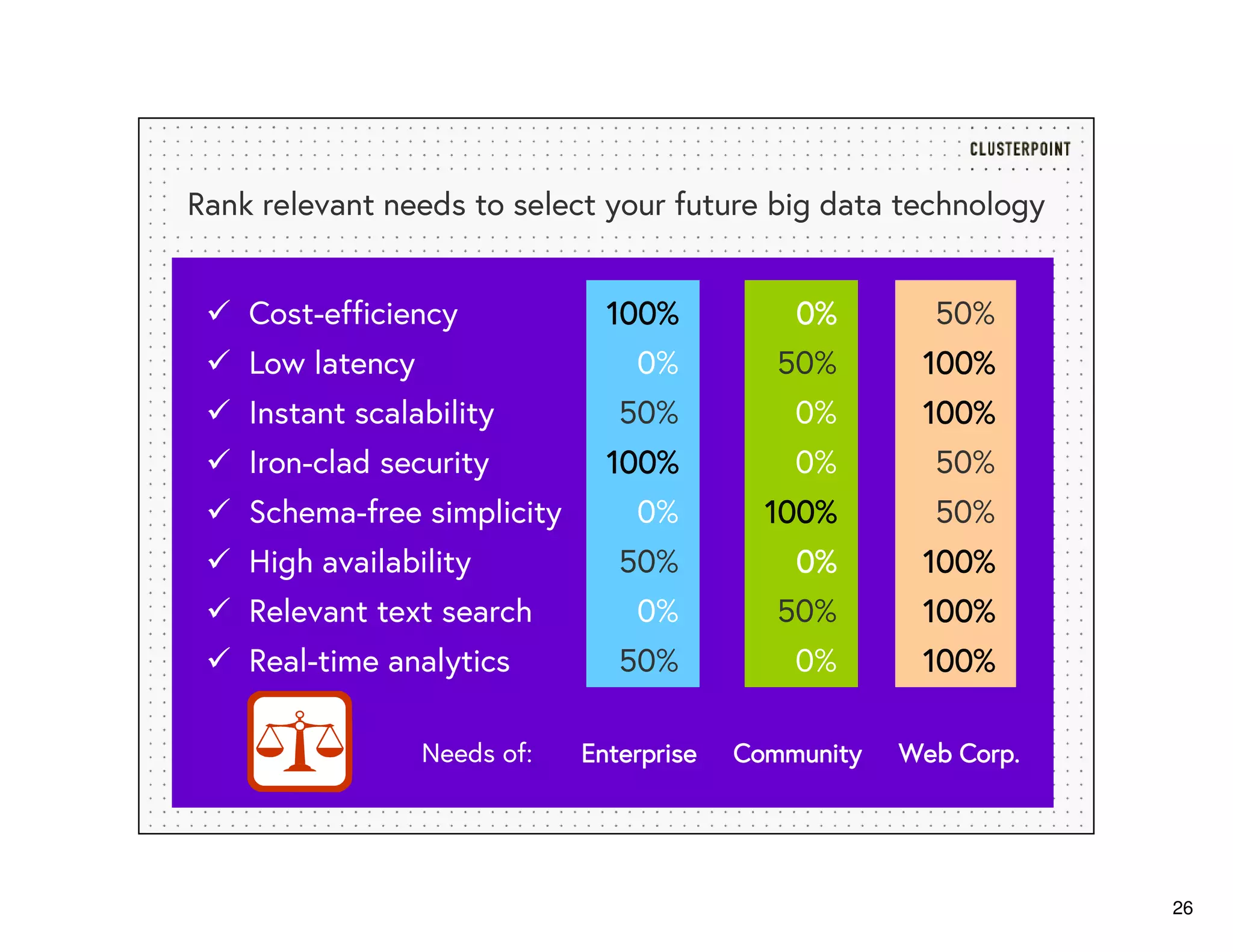

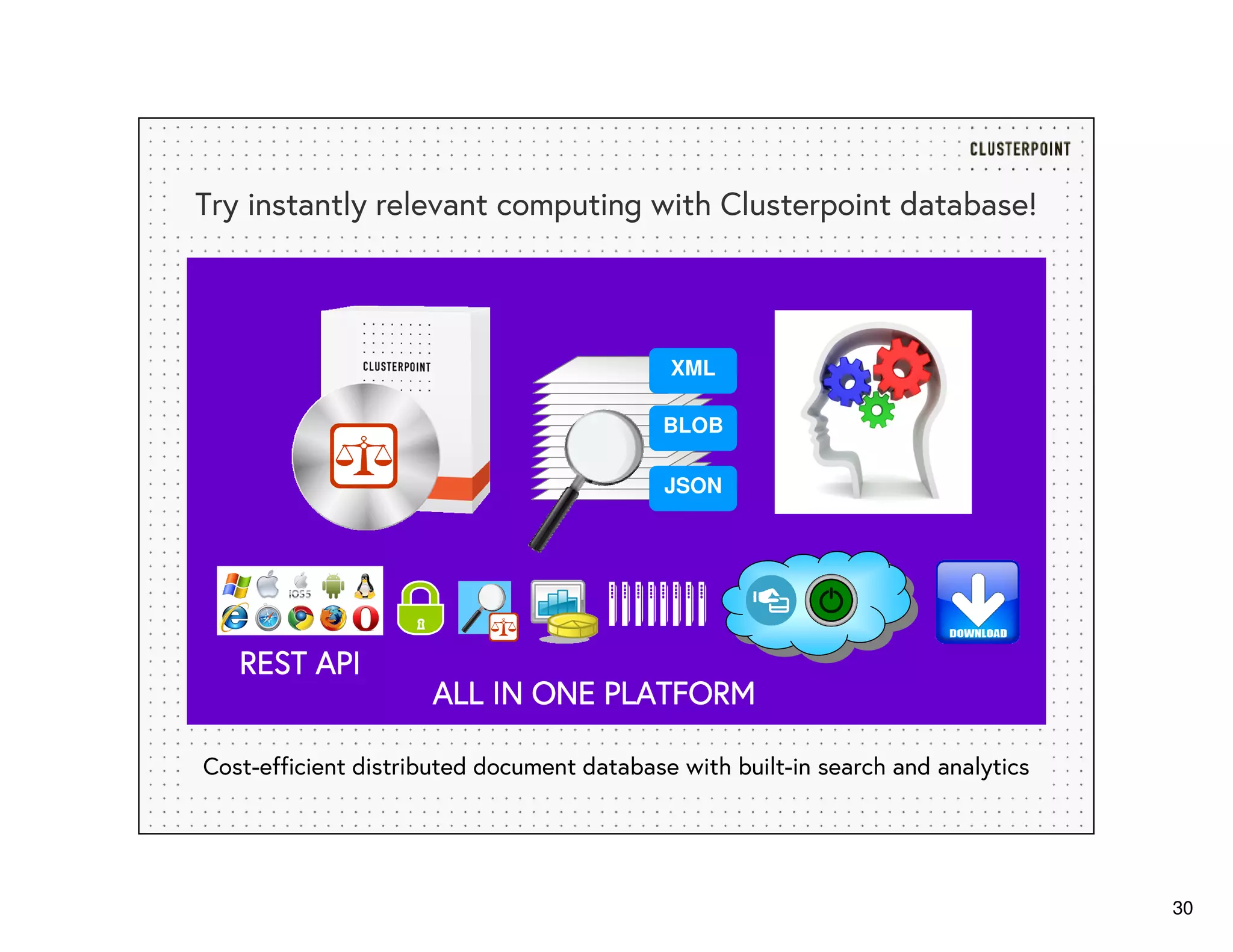

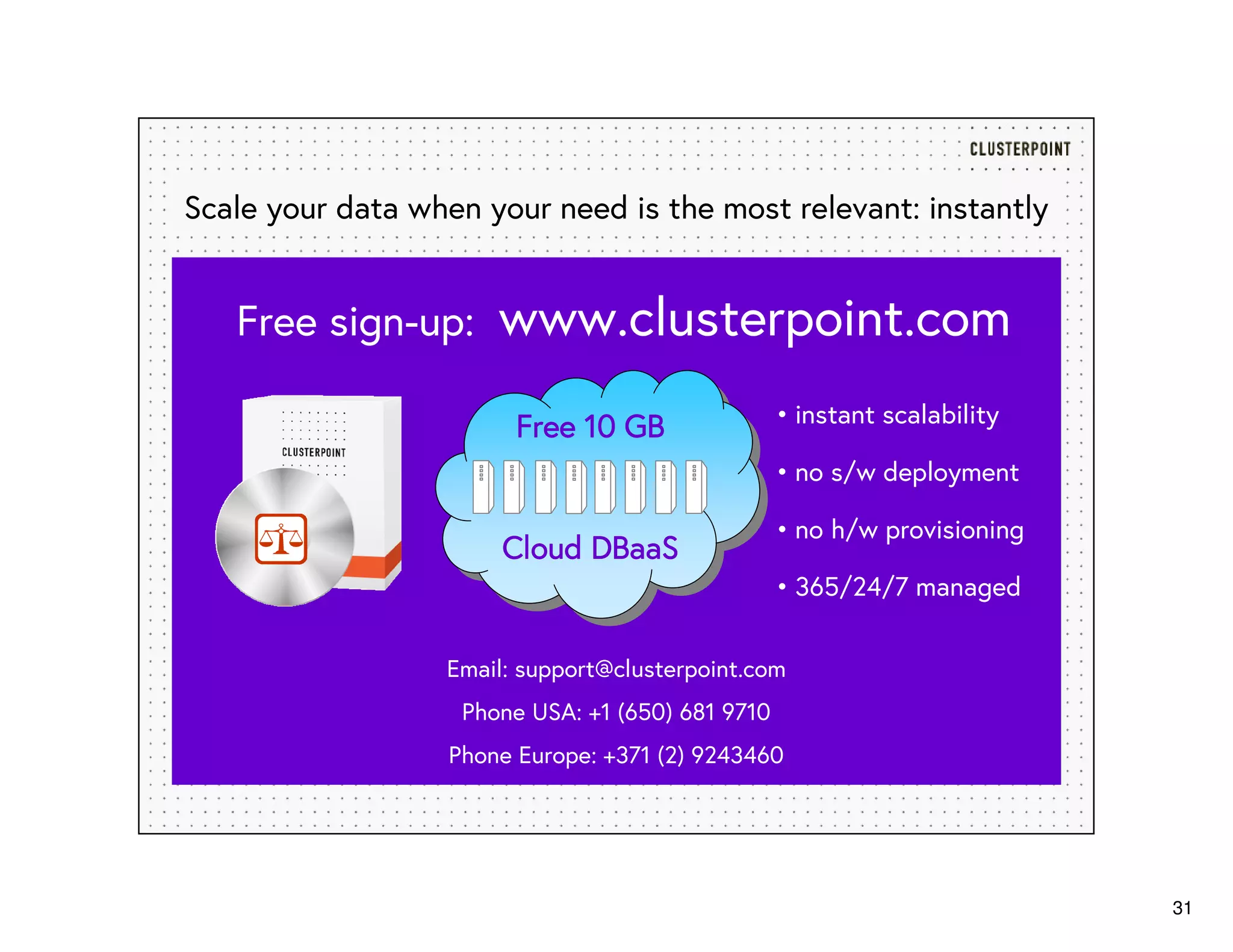

The document discusses the future of big data and the need for instantly relevant computing, emphasizing effective management of both structured and unstructured data. It highlights technologies that support real-time analytics and the role of relevance ranking in combating information overload. It also outlines how Clusterpoint's database software provides cost-efficient, scalable solutions tailored to business needs.