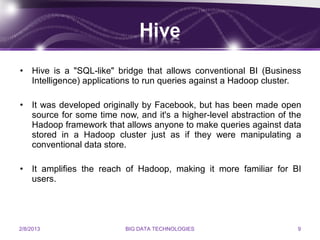

This document discusses technologies used for big data. It describes big data as massive volumes of structured and unstructured data that are difficult to process with traditional databases. It then lists and describes 10 technologies: column-oriented databases, schema-less databases, MapReduce, Hadoop, Hive, Pig, WibiData, Platfora, storage technologies, and SkyTree. Together, these technologies make it possible to process and analyze large datasets.