The document discusses several strategies and Amazon Web Services (AWS) features for building fault-tolerant applications, including:

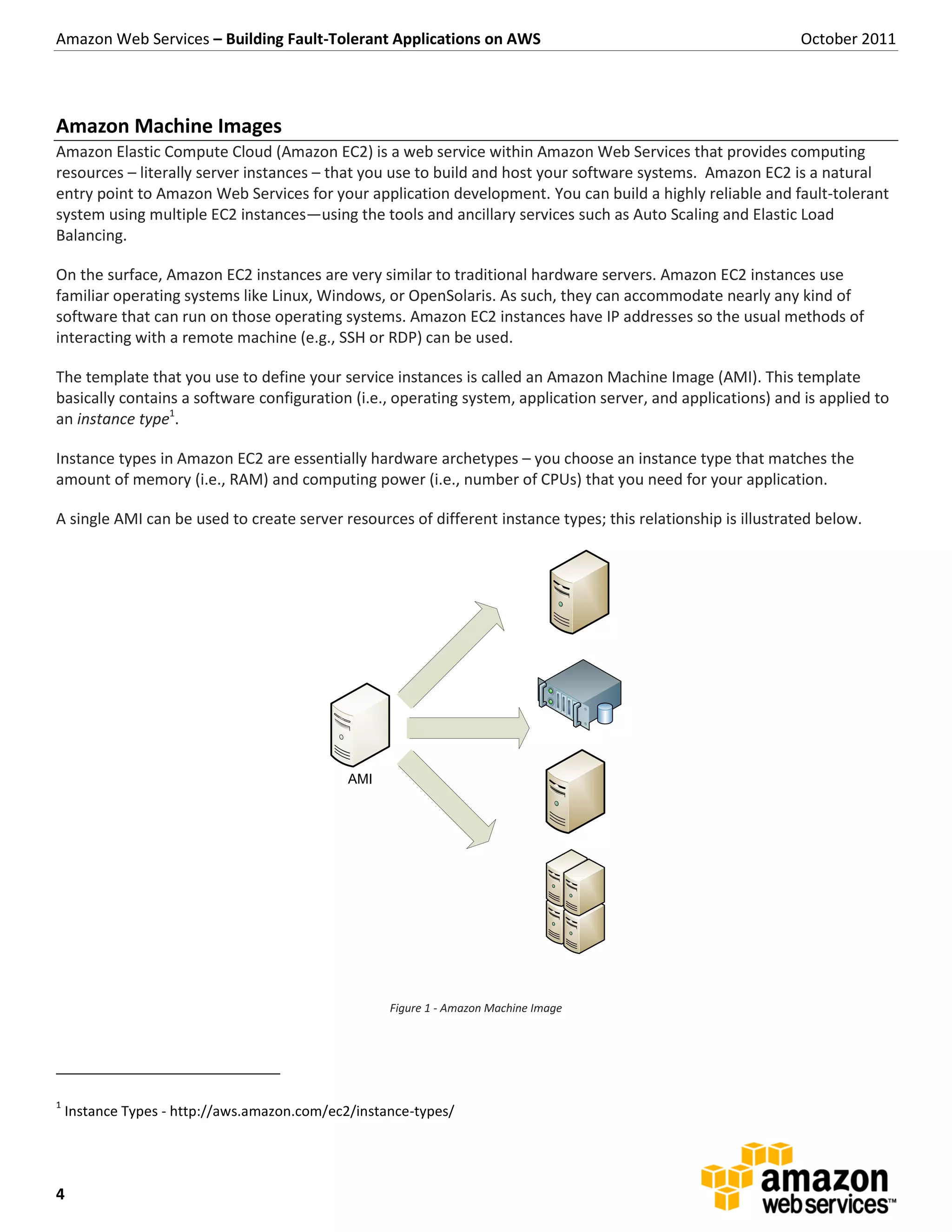

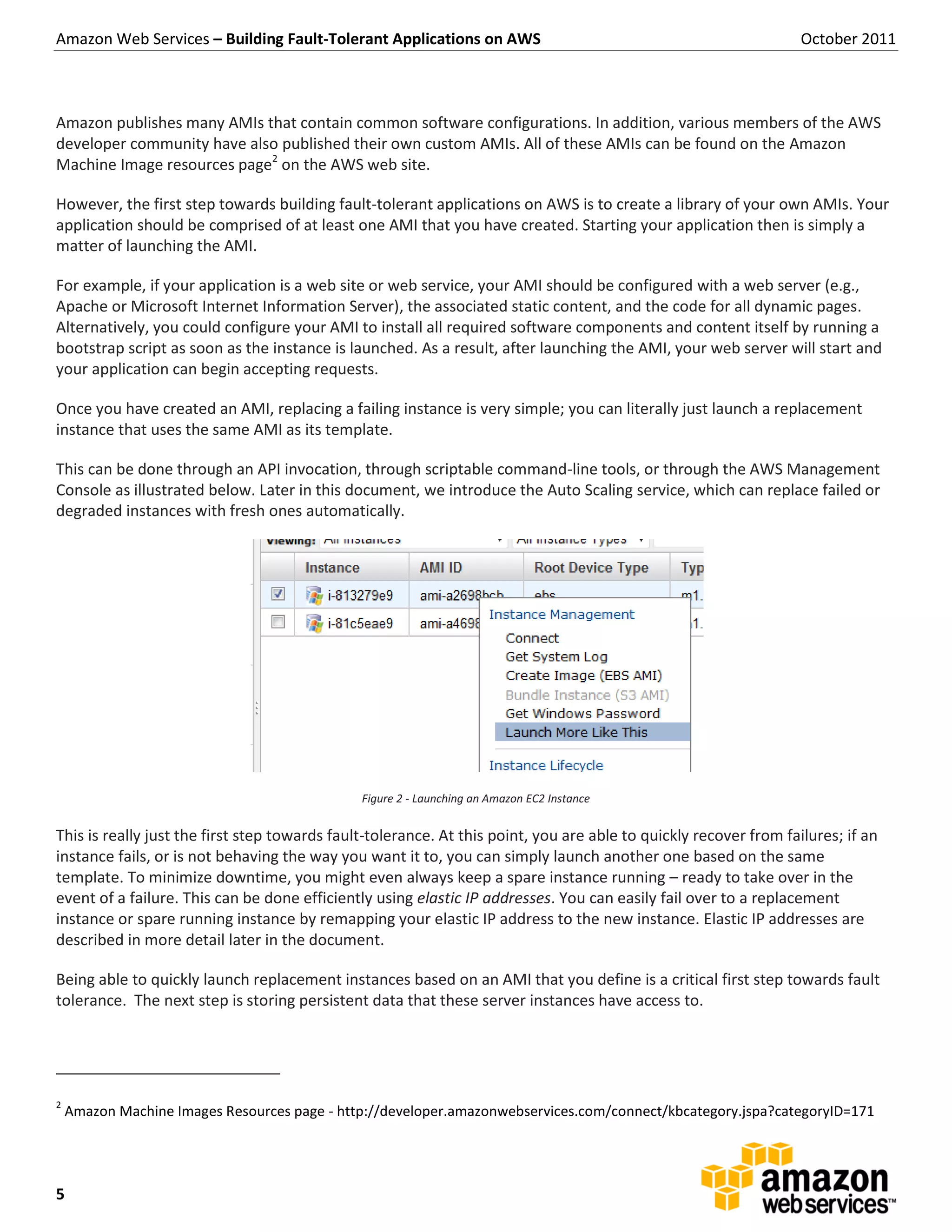

1) Using Amazon Machine Images (AMIs) to quickly launch replacement instances if one fails

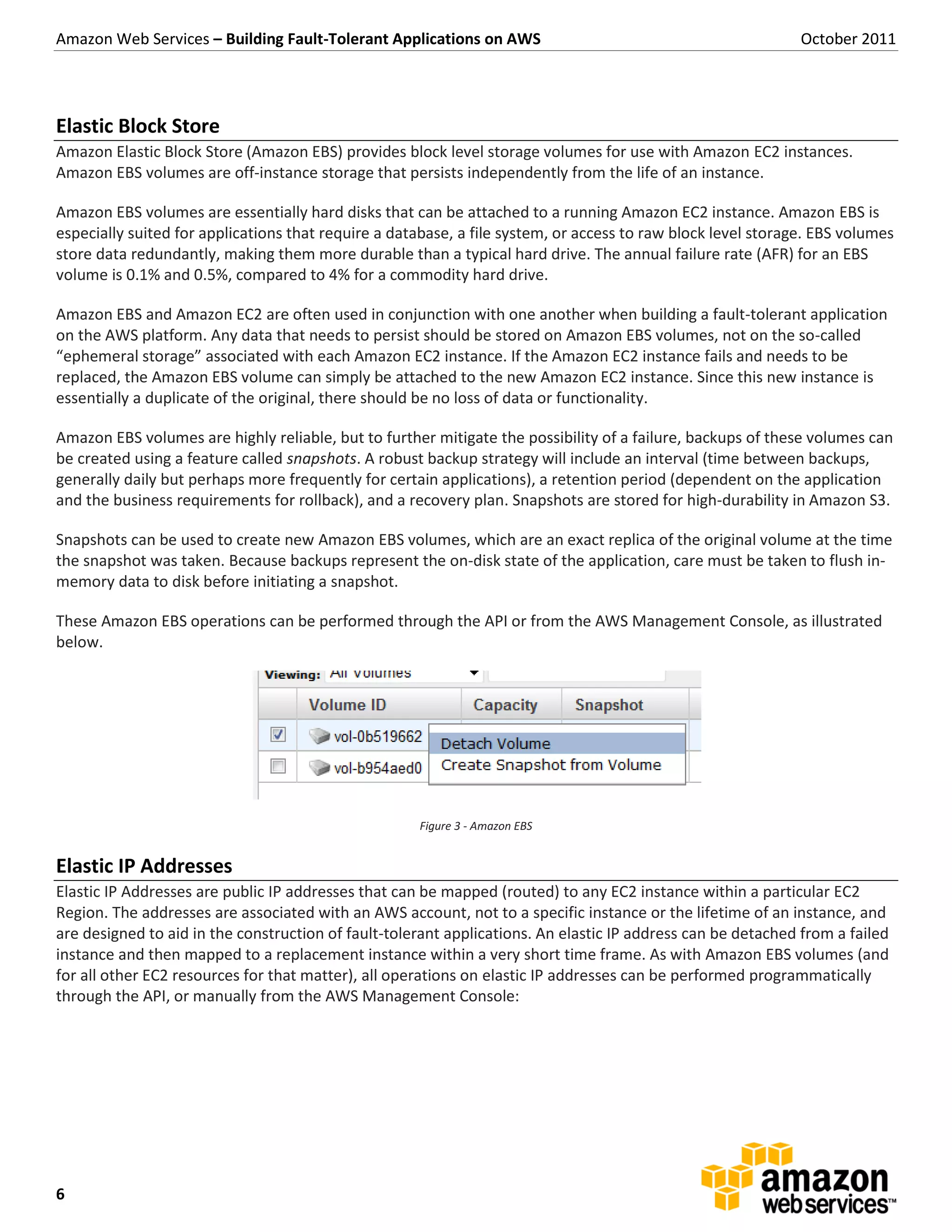

2) Storing persistent data on Amazon Elastic Block Store (EBS) volumes that can be attached to new instances

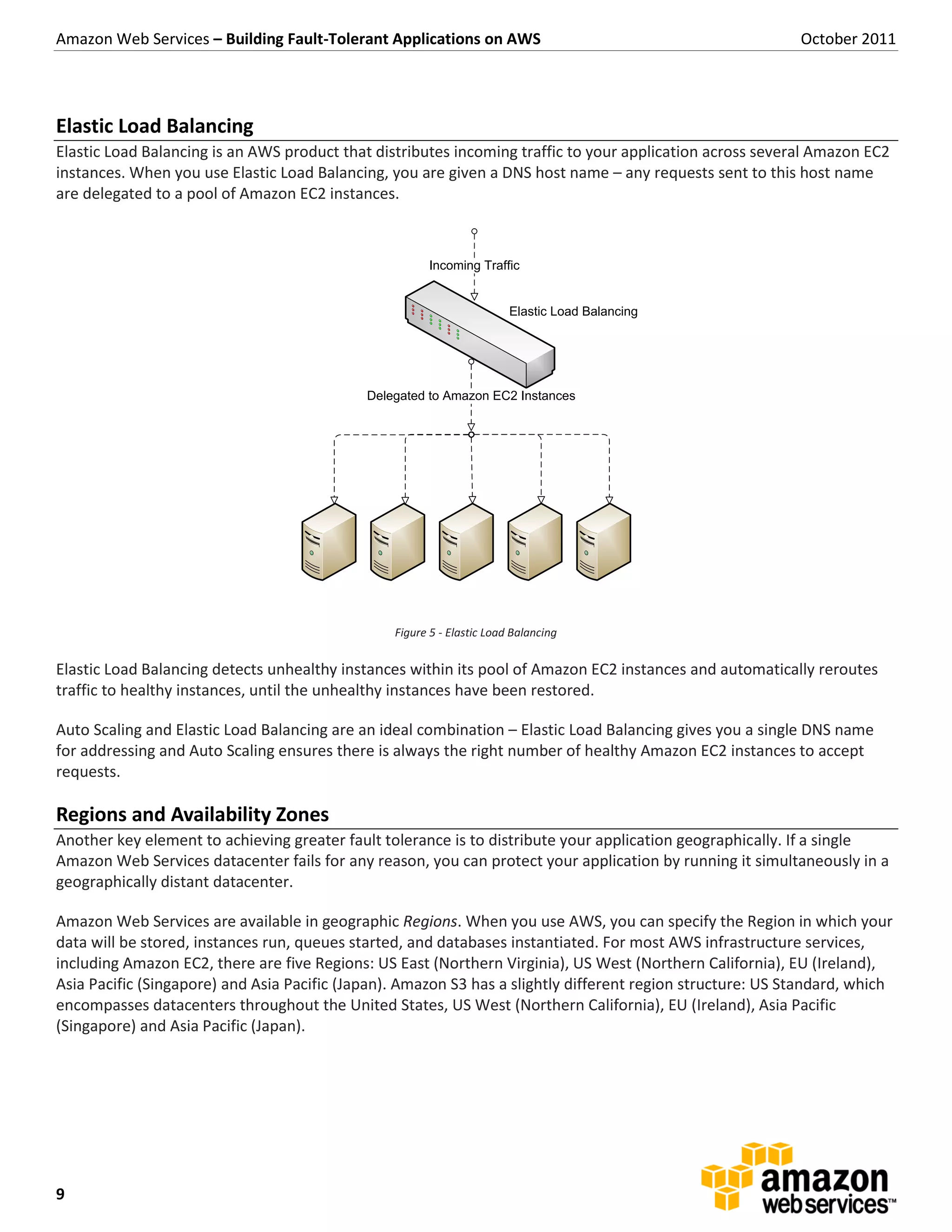

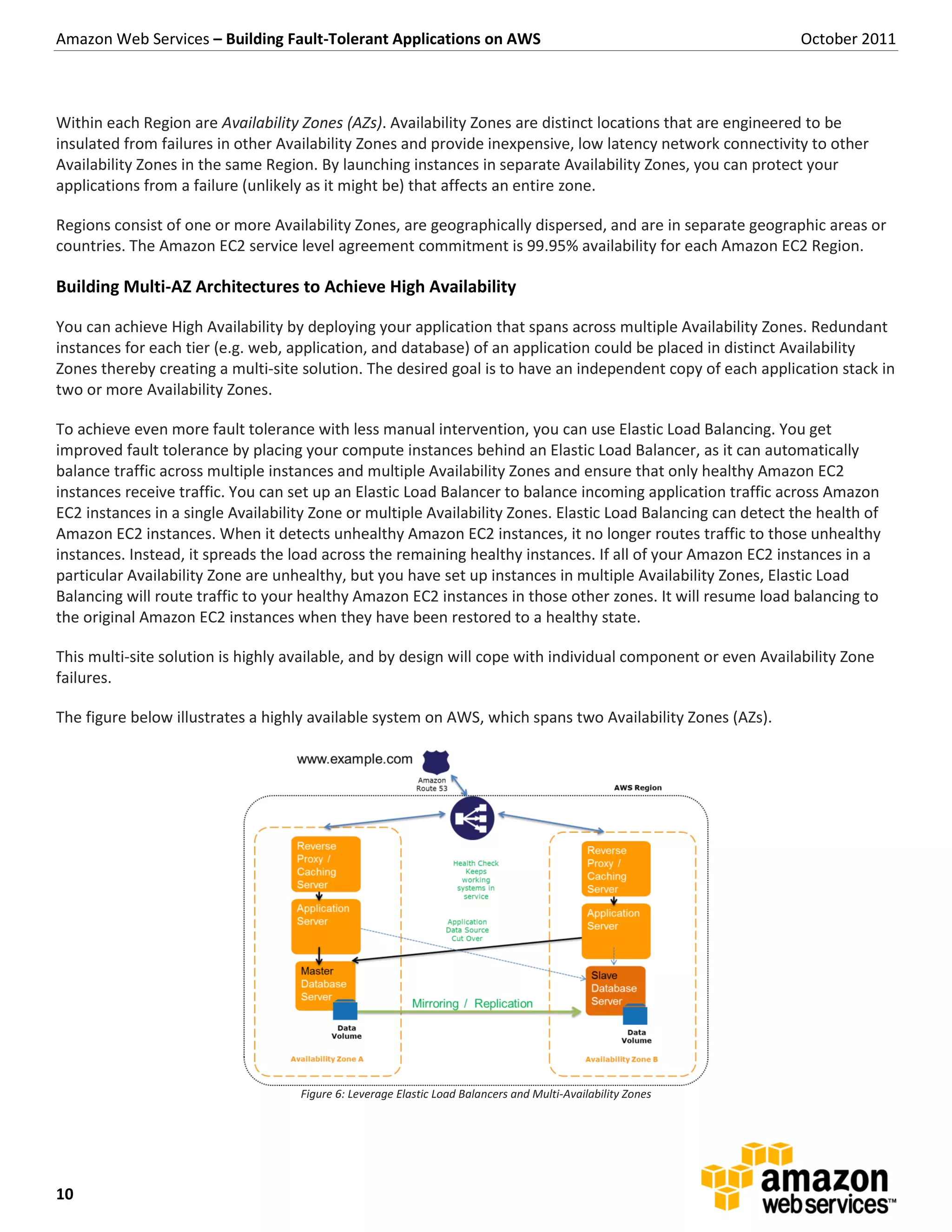

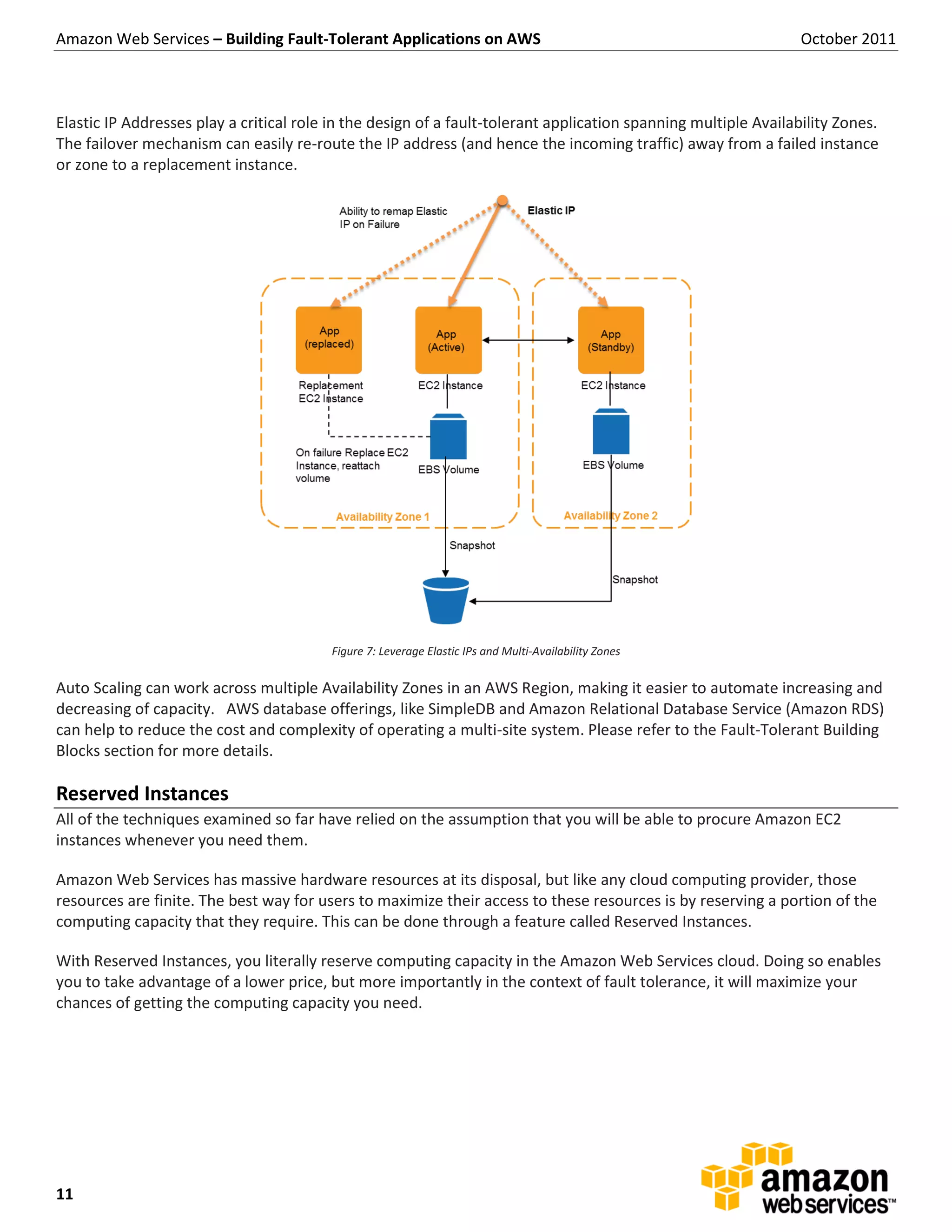

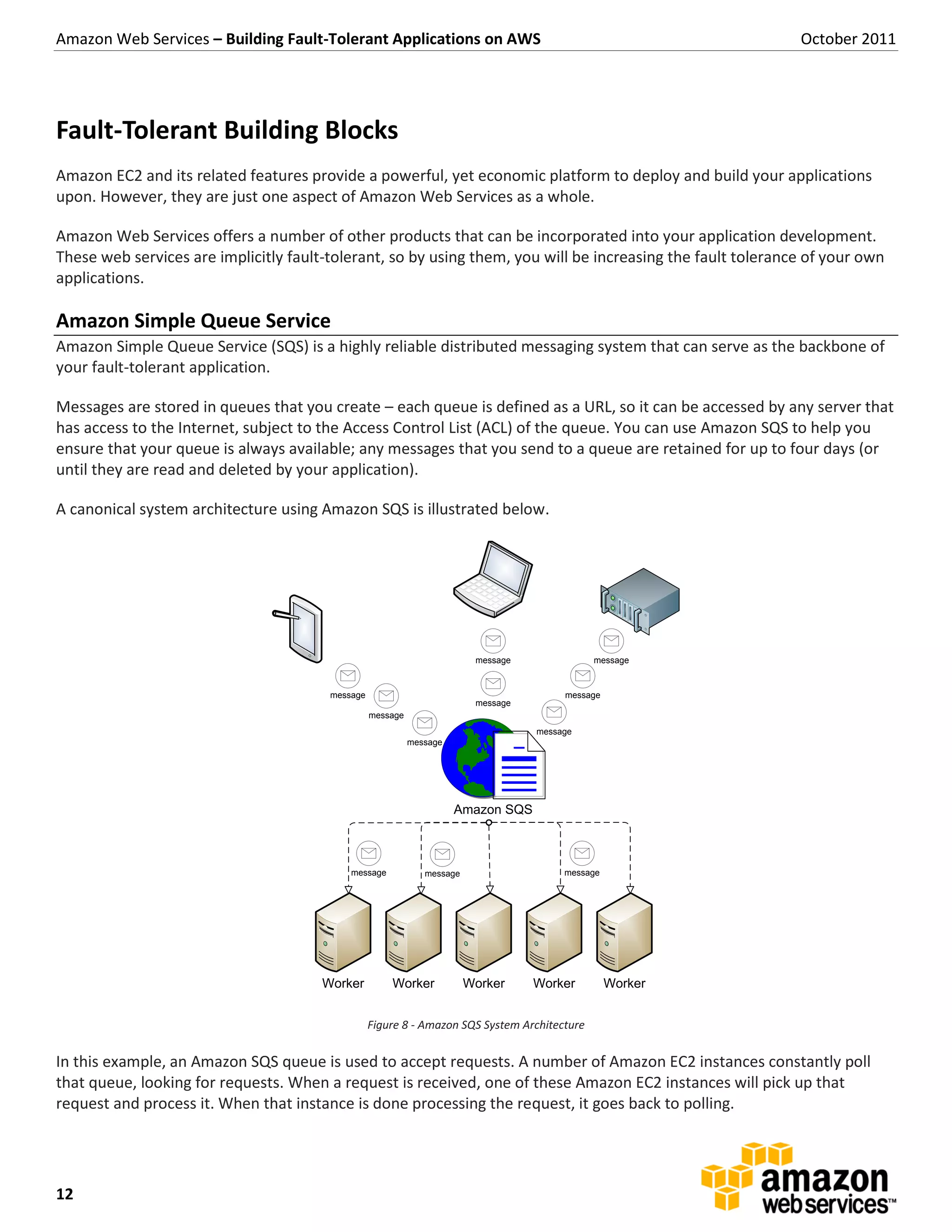

3) Using Elastic Load Balancing to distribute traffic across healthy instances and Auto Scaling to replace failed instances automatically

4) Leveraging Regions and Availability Zones to distribute an application across multiple distinct geographic locations for redundancy
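
To make the interplay of these four strategies concrete, the following is a minimal toy simulation in plain Python. It does not call AWS, and every name in it (`Instance`, `Volume`, `Fleet`, `reconcile`, `route`, the `ami-example` and `zone-a` strings) is hypothetical and chosen only for illustration. It sketches the general idea under simple assumptions: replacements are launched from one fixed image, traffic goes only to healthy instances, new instances are placed in the least-populated zone, and persistent data lives on a volume that is re-attached by hand.

```python
from dataclasses import dataclass, field
from itertools import count
from typing import Optional

# Toy model only: none of these names are AWS APIs.
_ids = count(1)


@dataclass
class Instance:
    instance_id: str
    image_id: str          # stands in for the AMI the instance was launched from
    zone: str              # stands in for an Availability Zone
    healthy: bool = True


@dataclass
class Volume:
    """Stands in for an EBS volume: its data outlives any single instance."""
    data: dict = field(default_factory=dict)
    attached_to: Optional[str] = None


@dataclass
class Fleet:
    image_id: str
    zones: list
    desired: int
    instances: list = field(default_factory=list)

    def __post_init__(self):
        self._next = 0     # round-robin cursor for route()
        self.reconcile()   # launch the initial set of instances

    def reconcile(self):
        """Auto Scaling stand-in: drop failed instances, then launch
        replacements from the same image in the least-populated zone."""
        self.instances = [i for i in self.instances if i.healthy]
        while len(self.instances) < self.desired:
            zone = min(
                self.zones,
                key=lambda z: sum(i.zone == z for i in self.instances),
            )
            new_id = f"i-{next(_ids):04d}"
            self.instances.append(Instance(new_id, self.image_id, zone))

    def route(self):
        """Load balancer stand-in: round-robin over healthy instances only."""
        healthy = [i for i in self.instances if i.healthy]
        if not healthy:
            raise RuntimeError("no healthy instances available")
        chosen = healthy[self._next % len(healthy)]
        self._next += 1
        return chosen


# Usage: fail one instance, keep serving, replace it, re-attach the data.
fleet = Fleet(image_id="ami-example", zones=["zone-a", "zone-b"], desired=4)
volume = Volume(data={"orders": 42}, attached_to=fleet.instances[0].instance_id)

fleet.instances[0].healthy = False          # simulate an instance failure
serving = fleet.route()                     # traffic still reaches a healthy instance
fleet.reconcile()                           # failed instance is replaced
volume.attached_to = fleet.instances[-1].instance_id  # data follows the replacement
print(serving.healthy, len(fleet.instances), volume.data)
```

In a real deployment these roles are filled by managed services rather than application code; the sketch only shows why the pieces complement each other: the image makes replacement fast, the volume keeps data independent of the instance, the load balancer hides the failure from clients, and spreading across zones limits how much a single location failure can remove.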