Apache Deep Learning 201

In this talk I will discuss and show examples of using Apache Hadoop, Apache Hive, Apache MXNet, Apache OpenNLP, Apache NiFi and Apache Spark for deep learning applications. This is the follow-up to last year's Apache Deep Learning 101, which was presented at DataWorks Summit and ApacheCon. I will walk through using Apache MXNet pre-built models, MXNet's new Model Server with Apache NiFi, executing MXNet with Apache NiFi, and running Apache MXNet on edge nodes using Python and Apache MiNiFi.

This talk is geared towards data engineers interested in the basics of deep learning with open source Apache tools in a big data environment. I will walk through source code examples available on GitHub and run the code live on an Apache Hadoop / YARN / Apache Spark cluster. This will be an introduction to executing deep learning pipelines in an Apache big data environment.

My talk at DataWorks Summit Sydney was listed in the top 7: https://hortonworks.com/blog/7-sessions-dataworks-summit-sydney-see/ I also speak at and run Future of Data Princeton, and have spoken at Oracle Code NYC.

https://www.slideshare.net/oom65/hadoop-security-architecture?next_slideshow=1
https://community.hortonworks.com/articles/83100/deep-learning-iot-workflows-with-raspberry-pi-mqtt.html
https://community.hortonworks.com/articles/146704/edge-analytics-with-nvidia-jetson-tx1-running-apac.html
https://dzone.com/refcardz/introduction-to-tensorflow

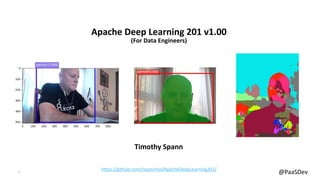

- 1. 1 @PaaSDev Apache Deep Learning 201 v1.00 (For Data Engineers) Timothy Spann https://github.com/tspannhw/ApacheDeepLearning201/

- 2. 2 @PaaSDev Disclaimer • This is my personal integration and use of Apache software, not any company's vision. • This document may contain product features and technology directions that are under development, may be under development in the future, or may ultimately not be developed. These are Tim's ideas only. • Technical feasibility, market demand, user feedback, and the Apache Software Foundation community development process can all affect timing and final delivery. • This document's description of these features and technology directions does not represent a contractual commitment, promise or obligation from Hortonworks to deliver these features in any generally available product. • Product features and technology directions are subject to change, and must not be included in contracts, purchase orders, or sales agreements of any kind. • Since this document contains an outline of general product development plans, customers should not rely upon it when making a purchase decision.

- 3. 3 @PaaSDev There are some who call him... DZone Zone Leader and Big Data MVB; Princeton Future of Data Meetup https://github.com/tspannhw https://community.hortonworks.com/users/9304/tspann.html https://dzone.com/users/297029/bunkertor.html https://www.meetup.com/futureofdata-princeton/

- 5. 5 @PaaSDev Hadoop {Submarine} Project: Running deep learning workloads on YARN, Tim Spann (Cloudera)

- 8. 8 @PaaSDev IoT Edge Processing with Apache MiNiFi and Multiple Deep Learning Libraries

- 9. 9 @PaaSDev Deep Learning for Big Data Engineers Multiple users, frameworks, languages, devices, data sources & clusters BIG DATA ENGINEER • Experience in ETL • Coding skills in Scala, Python, Java • Experience with Apache Hadoop • Knowledge of database query languages such as SQL • Knowledge of Hadoop tools such as Hive, or Pig • Expert in ETL (Eating, Ties and Laziness) • Social Media Maven • Deep SME in Buzzwords • No Coding Skills • Interest in Pig and Falcon CAT AI • Will Drive your Car • Will Fix Your Code • Will Beat You At Q-Bert • Will Not Be Discussed Today • Will Not Finish This Talk For Me, This Time http://gluon.mxnet.io/chapter01_crashcourse/preface.html

- 12. 12 @PaaSDev Why Apache NiFi? • Guaranteed delivery • Data buffering - Backpressure - Pressure release • Prioritized queuing • Flow specific QoS - Latency vs. throughput - Loss tolerance • Data provenance • Supports push and pull models • Hundreds of processors • Visual command and control • Over sixty sources • Flow templates • Pluggable/multi-role security • Designed for extension • Clustering • Version Control
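
To make "backpressure" and "prioritized queuing" from the slide above concrete, here is a toy sketch in plain Python. It is not how Apache NiFi implements its connection queues; the class name, the `max_items` threshold and the "lower number means higher priority" rule are all assumptions made for illustration.

```python
import heapq
import itertools


class BoundedPriorityQueue:
    """Toy connection queue: bounded size (backpressure) plus priority ordering."""

    def __init__(self, max_items):
        self.max_items = max_items          # hypothetical backpressure threshold
        self._heap = []
        self._counter = itertools.count()   # tie-breaker: FIFO order within a priority

    def offer(self, priority, item):
        """Accept the item, or return False when the queue is full."""
        if len(self._heap) >= self.max_items:
            return False                    # upstream should slow down or retry later
        heapq.heappush(self._heap, (priority, next(self._counter), item))
        return True

    def poll(self):
        """Remove and return the item with the lowest priority number, or None."""
        if not self._heap:
            return None
        return heapq.heappop(self._heap)[2]


queue = BoundedPriorityQueue(max_items=2)
accepted = [
    queue.offer(5, "log line"),
    queue.offer(1, "sensor alert"),
    queue.offer(3, "image"),                # rejected: the queue is already at its limit
]
first_out = queue.poll()                    # the alert leaves first despite arriving second
```

The point of the sketch is that a full queue pushes back on the producer rather than dropping data silently, and that ordering on the way out is decided by priority rather than arrival time.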

- 13. 13 @PaaSDev Aggregate all the Data! Sensors, Drones, logs, Geo-location devices Photos, Images, Results from running predictions on Pre-trained models. Collect: Bring Together

- 14. 14 @PaaSDev Mediate point-to-point and Bi-directional data flows Delivering data reliably to and from Apache HBase, Druid, Apache Phoenix, Apache Hive, HDFS, Slack and Email. Conduct: Mediate the Data Flow

- 15. 15 @PaaSDev Orchestrate, parse, merge, aggregate, filter, join, transform, fork, query, sort, dissect, store, enrich with weather, location, sentiment analysis, image analysis, object detection, image recognition, … Curate: Gain Insights

- 16. 16 @PaaSDev • Cloud ready • Python, C++, Scala, R, Julia, Matlab, MXNet.js and Perl Support • Experienced team (XGBoost) • AWS, Microsoft, NVIDIA, Baidu, Intel • Apache Incubator Project • Run distributed on YARN and Spark • In my early tests, faster than TensorFlow. (Try this yourself) • Runs on Raspberry Pi, NVIDIA Jetson TX1 and other constrained devices https://mxnet.incubator.apache.org/how_to/cloud.html https://github.com/apache/incubator-mxnet/tree/1.3.1/example https://gluon-cv.mxnet.io/api/model_zoo.html

- 17. 17 @PaaSDev • Great documentation • Crash Course • Gluon (Open API), GluonCV, GluonNLP • Keras (One API Many Runtime Options) • Great Python Interaction • Open Source Model Server Available • ONNX (Open Neural Network Exchange Format) Support for AI Models • Now in Version 1.3.1 • Rich Model Zoo! • TensorBoard compatible http://mxnet.incubator.apache.org/ http://gluon.mxnet.io/ https://onnx.ai/ pip3.6 install -U keras-mxnet https://gluon-nlp.mxnet.io/ pip3.6 install --pre --upgrade mxnet pip3.6 install gluonnlp

- 18. 18 @PaaSDev • Apache MXNet Running in Apache Zeppelin Notebooks • Apache MXNet Running on YARN 3.1 In Hadoop 3.1 In Dockerized Containers • Apache MXNet Running on YARN Apache NiFi Integration with Apache Hadoop Options https://community.hortonworks.com/articles/176789/apache-deep-learning-101-using-apache-mxnet-in-apa.html https://community.hortonworks.com/articles/174399/apache-deep-learning-101-using-apache-mxnet-on-apa.html https://www.slideshare.net/Hadoop_Summit/deep-learning-on-yarn-running-distributed-tensorflow-etc-on-hadoop-cluster-v3

- 19. 19 @PaaSDev Apache MXNet GluonCV Zoo https://gluon-cv.mxnet.io/model_zoo/classification.html • ResNet152_v2 • MobileNetV2_0.25 • VGG19_bn • SqueezeNet1.1 • DenseNet201 • Darknet53 • InceptionV3 • CIFAR_ResNeXt29_16x64 • yolo3_darknet53_voc • ssd_512_mobilenet1.0_coco • faster_rcnn_resnet101_v1d_coco • yolo3_darknet53_coco • FCN model on PASCAL VOC

- 20. 20 @PaaSDev Object Detection: GluonCV YOLO v3 and Apache NiFi https://community.hortonworks.com/articles/222367/using-apache-nifi-with-apache-mxnet-gluoncv-for-yo.html
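
One step in a detector-to-NiFi flow is turning raw detections into JSON that downstream processors can route and store. The sketch below shows one way to do that in plain Python. It assumes the detector output has already been converted to ordinary lists of class ids, scores and `[xmin, ymin, xmax, ymax]` boxes, and that padded entries carry a class id of `-1`. The function name, field names and `min_score` threshold are hypothetical, not taken from the linked article.

```python
import json


def detections_to_records(class_ids, scores, boxes, class_names, min_score=0.5):
    """Keep confident detections and shape them as JSON-friendly dicts."""
    records = []
    for class_id, score, box in zip(class_ids, scores, boxes):
        # Skip padding rows (assumed class id -1) and low-confidence hits.
        if class_id < 0 or score < min_score:
            continue
        records.append({
            "label": class_names[int(class_id)],
            "score": round(float(score), 4),
            "box": [float(v) for v in box],
        })
    return records


# Made-up values standing in for one frame of detector output.
class_names = ["person", "cat", "dog"]
class_ids = [1, 0, -1]
scores = [0.92, 0.31, -1.0]
boxes = [[10, 20, 110, 220], [5, 5, 50, 50], [-1, -1, -1, -1]]

records = detections_to_records(class_ids, scores, boxes, class_names)
payload = json.dumps(records)   # this string is what a script would hand to NiFi
```

Emitting one flat JSON array per image keeps the Python side simple and leaves splitting, filtering and routing to the flow.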

- 21. 21 @PaaSDev Object Detection: Faster RCNN with GluonCV net = gcv.model_zoo.get_model('faster_rcnn_resnet50_v1b_voc', pretrained=True) Faster RCNN model trained on Pascal VOC dataset with ResNet-50 backbone https://gluon-cv.mxnet.io/api/model_zoo.html

- 22. 22 @PaaSDev Instance Segmentation: Mask RCNN with GluonCV net = model_zoo.get_model('mask_rcnn_resnet50_v1b_coco', pretrained=True) Mask RCNN model trained on COCO dataset with ResNet-50 backbone https://gluon-cv.mxnet.io/build/examples_instance/demo_mask_rcnn.html https://arxiv.org/abs/1703.06870 https://github.com/matterport/Mask_RCNN

- 23. 23 @PaaSDev Semantic Segmentation: DeepLabV3 with GluonCV model = gluoncv.model_zoo.get_model('deeplab_resnet101_ade', pretrained=True) GluonCV DeepLabV3 model on ADE20K dataset https://gluon-cv.mxnet.io/build/examples_segmentation/demo_deeplab.html run1.sh demo_deeplab_webcam.py http://groups.csail.mit.edu/vision/datasets/ADE20K/ https://arxiv.org/abs/1706.05587 https://www.cityscapes-dataset.com/ This one is a bit slower.

- 24. 24 @PaaSDev Semantic Segmentation: Fully Convolutional Networks model = gluoncv.model_zoo.get_model('fcn_resnet101_voc', pretrained=True) GluonCV FCN model on PASCAL VOC dataset https://gluon-cv.mxnet.io/build/examples_segmentation/demo_fcn.html run1.sh demo_fcn_webcam.py https://people.eecs.berkeley.edu/~jonlong/long_shelhamer_fcn.pdf

- 25. 25 @PaaSDev Apache MXNet Model Server from Apache NiFi https://community.hortonworks.com/articles/223916/posting-images-with-apache-nifi-17-and-a-custom-pr.html
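
Posting an image to a model server comes down to an HTTP POST with the image bytes as the body, which is what a NiFi flow would do on your behalf. A minimal sketch of that request using only the Python standard library follows. The host, port, `/predictions/<model>` path, model name and content type are assumptions for illustration; check the documentation for the Model Server version you run, since the endpoint layout has changed between releases.

```python
import urllib.request


def build_prediction_request(image_bytes, host="localhost", port=8080,
                             model_name="squeezenet"):
    """Build (but do not send) a POST carrying raw image bytes."""
    # Assumed endpoint layout; adjust to match your server's API.
    url = f"http://{host}:{port}/predictions/{model_name}"
    return urllib.request.Request(
        url,
        data=image_bytes,
        method="POST",
        headers={"Content-Type": "application/octet-stream"},
    )


# Placeholder bytes; a real caller would read a JPEG file from disk.
request = build_prediction_request(b"\xff\xd8fake-jpeg-bytes")

# urllib.request.urlopen(request) would send it and return the server's
# JSON response; it is left out so the sketch runs without a server.
```

Building the request separately from sending it makes the call easy to inspect and to mirror in a NiFi HTTP processor configuration.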

- 26. 26 @PaaSDev Apache MXNet Native Processor for Apache NiFi This is a beta, community release by me using the new beta Java API for Apache MXNet. https://github.com/tspannhw/nifi-mxnetinference-processor https://community.hortonworks.com/articles/229215/apache-nifi-processor-for-apache-mxnet-ssd-single.html https://www.youtube.com/watch?v=Q4dSGPvqXSA

- 27. 27 @PaaSDev Edge Intelligence with Apache NiFi Subproject - MiNiFi Key Features • Guaranteed delivery • Data buffering ‒ Backpressure ‒ Pressure release • Prioritized queuing • Flow specific QoS ‒ Latency vs. throughput ‒ Loss tolerance • Data provenance • Recovery / recording a rolling log of fine-grained history • Designed for extension • Java or C++ Agent Different from Apache NiFi • Design and Deploy • Warm re-deploys

- 28. 28 @PaaSDev Apache MXNet Running on Edge Nodes (MiNiFi) https://community.hortonworks.com/articles/83100/deep-learning-iot-workflows-with-raspberry-pi-mqtt.html https://github.com/tspannhw/OpenSourceComputerVision https://github.com/tspannhw/ApacheDeepLearning101 https://github.com/tspannhw/mxnet-for-iot

- 29. 29 @PaaSDev Multiple IoT Devices with Apache NiFi and Apache MXNet https://community.hortonworks.com/articles/203638/ingesting-multiple-iot-devices-with-apache-nifi-17.html

- 30. 30 @PaaSDev Using Apache MXNet on The Edge with Sensors and Intel Movidius (MiNiFi) https://community.hortonworks.com/articles/176932/apache-deep-learning-101-using-apache-mxnet-on-the.html https://community.hortonworks.com/articles/146704/edge-analytics-with-nvidia-jetson-tx1-running-apac.html

- 31. 31 @PaaSDev Storage Platform: HDFS in Apache Hadoop 3.1 Compute & GPU Platform: YARN in Apache Hadoop 3.1 HBase 2.0 Security & Governance: Atlas 1.0, Ranger 1.0, Knox 1.0 Hive 3.0 Spark 2.3 Phoenix 0.8 Operations: Ambari 2.7 Open Source Hadoop 3.1

- 32. 32 @PaaSDev Apache MXNet on Apache YARN 3.1 Native No Spark yarn jar /usr/hdp/current/hadoop-yarn-client/hadoop-yarn-applications-distributedshell.jar -jar /usr/hdp/current/hadoop-yarn-client/hadoop-yarn-applications-distributedshell.jar -shell_command python3.6 -shell_args "/opt/demo/analyzex.py /opt/images/cat.jpg" -container_resources memory-mb=512,vcores=1 Uses: Python Any
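
The distributed shell command on this slide is easier to get right when assembled as an argument list, so the quoted `-shell_args` value survives as a single argument. The sketch below does that in Python using the jar path, flags and script paths from the slide. The helper name `build_yarn_command` and its parameters are hypothetical, and the jar path will differ outside an HDP install.

```python
import shlex

# Jar location as shown on the slide (HDP layout); adjust for your cluster.
DSHELL_JAR = ("/usr/hdp/current/hadoop-yarn-client/"
              "hadoop-yarn-applications-distributedshell.jar")


def build_yarn_command(script, image_path, memory_mb=512, vcores=1):
    """Return the distributed shell invocation as an argument list."""
    return [
        "yarn", "jar", DSHELL_JAR,
        "-jar", DSHELL_JAR,
        "-shell_command", "python3.6",
        # Script and image path travel together as one argument.
        "-shell_args", f"{script} {image_path}",
        "-container_resources", f"memory-mb={memory_mb},vcores={vcores}",
    ]


command = build_yarn_command("/opt/demo/analyzex.py", "/opt/images/cat.jpg")

# Shell-safe rendering for logging or copy and paste.
printable = " ".join(shlex.quote(part) for part in command)

# On a node with the YARN client installed, subprocess.run(command, check=True)
# would submit the job; it is omitted so the sketch runs anywhere.
```

Passing a list to `subprocess.run` avoids shell quoting problems altogether, and `printable` gives a string that is safe to paste into a terminal.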

- 33. 33 @PaaSDev Apache MXNet on Apache YARN 3.1 Native No Spark https://community.hortonworks.com/content/kbentry/222242/running-apache-mxnet-deep-learning-on-yarn-31-hdp.html https://github.com/tspannhw/ApacheDeepLearning101/blob/master/analyzehdfs.py

- 34. 34 @PaaSDev Apache MXNet on YARN 3.2 in Docker Using “Submarine” https://github.com/apache/hadoop/tree/trunk/hadoop-yarn-project/hadoop-yarn/hadoop-yarn-applications/hadoop-yarn-submarine yarn jar hadoop-yarn-applications-submarine-<version>.jar job run --name xyz-job-001 --docker_image <your docker image> --input_path hdfs://default/dataset/cifar-10-data --checkpoint_path hdfs://default/tmp/cifar-10-jobdir --num_workers 1 --worker_resources memory=8G,vcores=2,gpu=2 --worker_launch_cmd "shell for Apache MXNet" Wangda Tan (wangda@apache.org) Hadoop {Submarine} Project: Running deep learning workloads on YARN https://issues.apache.org/jira/browse/YARN-8135

Editor's Notes

- Monitor time. Follow-ups and Q/A at end. Defer additional questions to later; we are short on time. Ingest: multiple options, different types of data (RDBMS, streams, files). HDF, Sqoop, Flume, Kafka. Streaming. Script vs UI + Mgmt. Data movement tool. Streamlined.

- Kafka: reads events in memory and writes them to a distributed log.

- Adam Gibson DL4J/Skymind has spoken at my meetup Deep Learning A Practitioner’s Approach – I consulted with them on the Spark/Hadoop chapter.

- https://github.com/USCDataScience/dl4j-kerasimport-examples/tree/master/dl4j-import-example Also: https://github.com/adatao/tensorspark https://arimo.com/machine-learning/deep-learning/2016/arimo-distributed-tensorflow-on-spark/ https://caffe2.ai/docs/AI-Camera-demo-android

- TALK TRACK Apache MiNiFi is a subproject of Apache NiFi. It is designed to solve the difficulties of managing and transmitting data feeds to and from the source of origin, enabling edge intelligence to adjust dataflow behavior with bi-directional communication, out to the last mile of digital signal. It has a very small and lightweight footprint*, and generates the same level of data provenance as NiFi, which is vital to edge analytics and IoAT (Internet of Any Thing). It's a little bit different from NiFi in that it is not a real-time command and control interface; in fact, the agent, unlike NiFi, doesn't have a built-in UI at all. MiNiFi is designed for design and deploy situations and for "warm re-deploys". HDF 2.0 supports the Java version of the MiNiFi agent, and a C++ version is coming soon as well.

- You need to holistically manage all the data in all places, then begin to move our platform into place