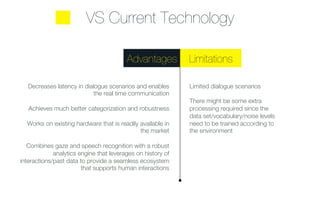

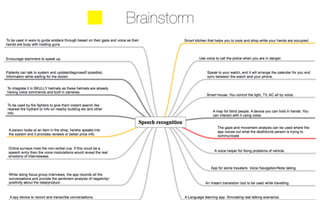

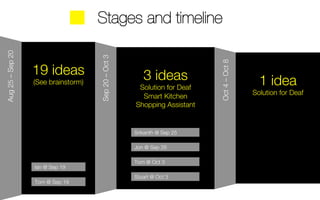

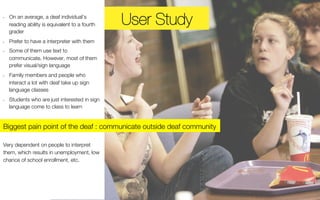

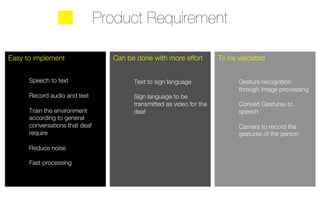

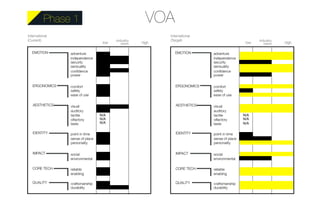

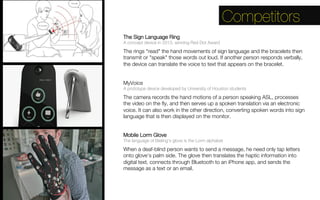

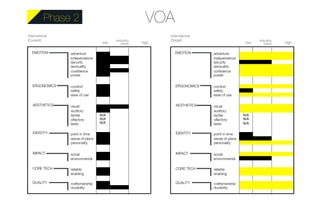

The document discusses a team's project to develop speech recognition technology that helps the deaf and hard-of-hearing community communicate. It outlines three phases: Phase 1 covers speech-to-text transcription, Phase 2 adds translation of speech into sign language, and Phase 3 aims to enable two-way conversation between deaf and hearing individuals by translating between sign language and spoken language. The team identifies stakeholders, potential use cases, and competitors' products, and lists next steps for establishing the technical feasibility and refining the design of the phases, especially given the complexity of bidirectional translation.