Fostering Diversity (of thinking) in Science

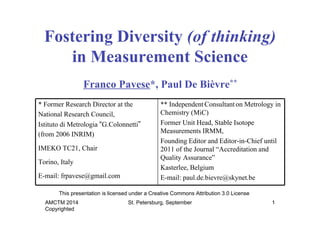

- 1. Fostering Diversity (of thinking) in Measurement Science. Franco Pavese*, Paul De Bièvre**. * Former Research Director at the National Research Council, Istituto di Metrologia “G. Colonnetti” (from 2006 INRIM), Torino, Italy; Chair, IMEKO TC21. E-mail: frpavese@gmail.com. ** Independent Consultant on Metrology in Chemistry (MiC), Kasterlee, Belgium; former Unit Head, Stable Isotope Measurements, IRMM; Founding Editor and Editor-in-Chief until 2011 of the journal “Accreditation and Quality Assurance”. E-mail: paul.de.bievre@skynet.be. AMCTM 2014, St. Petersburg, September. Copyrighted; this presentation is licensed under a Creative Commons Attribution 3.0 License.

- 2. Prologue. In many fields of science we observe increasing symptoms of a trend: the prevalence of single-path thinking, maybe fostered by either the anxiety to take a decision or by the intention to attempt to ‘force’ a conclusion.

- 3. Truth: the gnoseological* dilemma. “Five fundamental aspects can be attributed to ‘truth’ … by correspondence, by revelation (disclosure), by conformity to a rule, by consistency (coherence), by benefit. They are not reciprocally alternative, are diverse and not-reducible to each other.” [N. Abbagnano, Dictionary of Philosophy] “Consistency is indifferent to truth. One can be entirely consistent and still be entirely wrong” [Steven G. Vick] (* gnoseological: “of the philosophy of knowledge and the human faculties for learning”.)

- 4. Truth: the gnoseological dilemma. The history of thinking shows that, in the search for ‘truth’, general principles are typically subject to contrasting positions, leading to irresolvable criticism. “Reason alone is incapable of resolving the various philosophical problems” [D. Hume] Actually, it is impossible to demonstrate any position, including “relativism” or similar categories (“Relativism is the traditional epithet applied to pragmatism by realists” [R. Rorty]).

- 5. Truth ⇒ Certainty. The epistemological dilemma. Modern science, basically founded on empiricism as opposed to metaphysics, is usually considered exempt from the previous weakness. Considering doubt a shortcoming, scientific reasoning aims at reaching, if not truth, at least certainties, and many scientists tend to believe that this goal can be fulfilled in their field. However, let us listen to Francis Bacon: “If we begin with certainties, we shall end in doubts; but if we begin with doubts, and are patient with them, we shall end with certainties.” [Sir Francis Bacon 1605] (… still an optimistic viewpoint …)

- 6. Truth ⇒ Certainty ⇒ Objectivity. The illusion of objective knowledge. As philosophers have warned, however, the previous belief simply arises from the illusion that science can attain objectivity as a consequence of being based on information drawn from the observation of natural phenomena, taken as ‘facts’. Fact: “A thing that is known or proven to be true” [Oxford Dictionary]; “A piece of information presented as having objective reality” [Merriam-Webster Dictionary]. Objectivity and the cause-effect-cause chain are the pillars of single-path scientific reasoning.

- 7. Truth ⇒ Certainty ⇒ Objectivity. The illusion of objective knowledge. Should these pillars stand firm, the theories developed for systematically interlocking the empirical experience would, similarly, consist of a single building block, with the occasional addition of ancillary building blocks accommodating specific new knowledge (a static vision). “Verification” [L. Wittgenstein] would become unnecessary … “Falsification” [K. Popper] a paradox … the road toward the next “Scientific revolution” [T. Kuhn] impossible.

- 8. Truth ⇒ Certainty ⇒ Objectivity. Remedy 1: Uncertainty (& Imprecision). Confronted with the evidence, long available and reconfirmed every day, that the previous scenario does not apply, the concept of ‘uncertainty’ came in. However, strictly speaking, it applies only if the object of the observations (the ‘measurand’ in measurement science) is the same. Hence the issue is not fully resolved: the problem is shifted to another concept, the uniqueness of the measurand, a concept of non-random nature, leading to imprecision. “Concerning non-precise data, uncertainty is called imprecision … is not of stochastic nature … can be modelled by the so-called non-precise numbers” [R. Viertl, EOLSS UNESCO Encyclopedia]

- 9. Certainty ⇒ Uncertainty ⇒ Chance. Remedy 2: Decision. Confronted with the evidence of diverse results of observations, modern science’s way out was to introduce the concept of ‘chance’, replacing ‘certainty’. This was done with the illusion of reaching firmer conclusions by establishing a hierarchy in measurement results (e.g. based on the frequency of occurrence), in order to take a ‘decision’ (i.e. to choose among various measurement results). The concept of chance initiated the framework of ‘probability’, but later expanded into several other streams, e.g. possibility, fuzzy, cause-effect, interval, non-parametric, … reasoning frames, depending on the type of information available or on the approach to it. “With the idol of certainty (including that of degrees of imperfect certainty or probability) there falls one of the defences of obscurantism which bar the way of scientific advance.” [K. Popper]

- 10. Chance ⇒ (Prediction) ⇒ Decision. The illusions of chance – 1. The ultimate common goal of any branch of science is to communicate measurement results and perform robust prediction. In the probability frame, any decision strategy requires the choice of an expected value as well as of the limits of the dispersion interval of the observations. The choice of the expected value (‘expectation’: “a strong belief that something will happen or be the case” [from Oxford Dictionary]) is not unequivocal, since several location parameters are offered by probability theory, with a ‘true value’ still standing in the shade, deviations from which are called ‘errors’.
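The remark that the choice of an expected value is not unequivocal can be illustrated with a minimal sketch. The observations below are invented for illustration and are not taken from the presentation; the sketch only uses Python's standard `statistics` module to show that three common location parameters need not agree for the same data.

```python
import statistics

# Hypothetical, invented observations (one value lies far from the rest).
observations = [9.7, 9.9, 9.9, 10.0, 10.1, 10.2, 13.0]

# Three location parameters offered by probability theory and statistics.
location = {
    "mean": statistics.mean(observations),
    "median": statistics.median(observations),
    "mode": statistics.mode(observations),
}

for name, value in location.items():
    print(f"{name}: {value:.3f}")
```

Each of the three numbers is a defensible 'expected value' for this sample; selecting one of them is itself a decision of the kind the slide describes.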

- 11. Chance ⇒ (Prediction) ⇒ Decision. The illusions of chance – 2. As to data dispersion, most theoretical frameworks tend to lack general reasons for bounding a probability distribution, whose tails thus extend without limit to infinity. However, without a limit, no decision is possible; and the wider the limit, the less meaningful a decision is. Stating a limit becomes itself a decision, assumed to fit the intended use of the data. The terms used in this frame clearly indicate the difficulty and the meaning that is applicable in this context: ‘confidence level’ (confidence: “the feeling or belief that one can have faith in or rely on someone or something” [from Oxford Dictionary]), or ‘degree of belief’ (belief: “trust, faith, or confidence in (someone or something)” or “an acceptance that something exists or is true, especially one without proof” [ibidem]).
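As a hedged illustration of why stating a limit is itself a decision, the sketch below assumes a standard normal distribution (an assumption made here for simplicity, not a model prescribed by the presentation) and computes the half-width of the symmetric interval for several confidence levels. The function name `coverage_half_width` is hypothetical; `NormalDist.inv_cdf` is part of Python's standard `statistics` module (Python 3.8 or later).

```python
from statistics import NormalDist

# Assumed model: standard normal distribution, whose tails are unbounded.
standard_normal = NormalDist(mu=0.0, sigma=1.0)


def coverage_half_width(confidence_level: float) -> float:
    """Return k such that [-k, +k] contains the given probability mass."""
    if not 0.0 < confidence_level < 1.0:
        # A level of exactly 1 would require an infinitely wide interval.
        raise ValueError("confidence level must lie strictly between 0 and 1")
    upper_tail = (1.0 - confidence_level) / 2.0
    return standard_normal.inv_cdf(1.0 - upper_tail)


# The limit is not given by the distribution; it follows from the chosen level.
for level in (0.68, 0.95, 0.99, 0.999):
    print(f"level {level:.3f} -> +/- {coverage_half_width(level):.2f} sigma")
```

The half-width grows without bound as the chosen level approaches 1 (for a level of 0.95 it is about 1.96 standard deviations), so the interval is fixed only once a level has been chosen for the intended use of the data.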

- 12. Chance ⇒ (Prediction) ⇒ Decision. The illusions of chance – 3. As to data dispersion, alternatively, one can believe in using truncated (finite tail-width) distributions. However, reasons for truncation are generally supported by uncertain information. In rare cases truncation may be justified by theory, e.g. a bound at zero, itself not normally reachable exactly (experimental limit of detection). Stating limits again becomes itself a decision, also in this case assumed to be fit for the intended use of the data.

- 13. Uncertainty ⇒ Chance ⇒ Decision ⇒ Risk. Remedy 3: Risk. But … what about ‘decision’? When (objective) reasoning is replaced by choice, a decision can only be based on • a priori assumptions (for hypotheses), or • inter-subjectively accepted conventions (predictive, for subsequent action). However, hypotheses cannot be proved, and inter-subjective agreements are strictly relative to a community and to a given period of time. The loss of certainty resulted in the loss of uniqueness of decisions, and the concept of ‘risk’ emerged as a remedy.

- 14. Uncertainty ⇒ Chance ⇒ Decision ⇒ Risk. The illusions of risk – 1. Any parameter chosen to represent a set of observations becomes ‘uncertain’, not because it must be expressed with a dispersion attribute associated with an expected value, but because the choice of both parameters is the result of decisions, and a decision cannot be ‘exact’ (unequivocal). Any decision is fuzzy. The use of risk does not alleviate the issue: if a decision cannot be exact, the risk cannot be null.

- 15. Uncertainty ⇒ Chance ⇒ Decision ⇒ Risk. The illusions of risk – 2. In other words: • Associating a ‘risk’ with a decision, a recently popular issue, does not add any real benefit with respect to the fundamental issue. • Risk is zero only for certainty, so zero risk is unreachable. “The relations between probability and experience are also still in need of clarification. In investigating this problem we shall discover what will at first seem an almost insuperable objection to my methodological views. For although probability statements play such a vitally important role in empirical science, they turn out to be in principle impervious to strict falsification.” [K. Popper 1936]

- 16. The failure of remedies: deeper origin – 1. Chance is a bright prescription for working on symptoms of the disease, but is not a therapy for its deep origin, subjectivity. In fact, the very origin of the problem is related to our knowledge interface: the human being. It is customary to make a distinction between the ‘outside’ and the ‘inside’ of the observer, i.e. between the ‘real world’ and the ‘mind’. Note: we are not fostering here a vision of the world as a ‘dream’. There are solid arguments for conceiving a structured and reasonably stable reality outside us (objectivity of the “true value”).

- 17. The failure of remedies: deeper origin – 2. This distinction is one of the reasons that has generated, for at least a couple of centuries, a dichotomy between ‘exact sciences’ and other branches, often called ‘soft sciences’, like psychology, sociology, economics … For ‘soft’ science we are ready to admit that the objects of observations tend to be dissimilar, because every human individual is dissimilar from any other. In ‘exact’ science we do not usually readily admit that the human interface between our ‘mind’ and the ‘real world’ is a factor of influence affecting our knowledge. Mathematics stays in between, not being based on observations but on an ‘exact’ construction of concepts based on the thinking mechanisms in our mind.

- 18. Consequences. • All of the above should suggest that scientists be humble when contending about methods for expressing experimental knowledge, apart from gross mistakes (“blunders”). • Unlike in the theoretical context, experience can only be shared to a certain degree, leading, at best, to a shared decision. The association of a ‘risk’ with a decision does not add any real benefit with respect to the fundamental issue. • One cannot expect a single decision to be valid in all cases, i.e. without exceptions. Risk is zero only for certainty, so zero risk is unreachable. • Similarly, no single frame of reasoning leading to a specific type of decision can be expected to be valid in all cases.

- 19. Diversity: a resource. • Also in science, ‘diversity’ is not always a synonym of ‘confusion’, a popular term used to contrast it; rather, it is an invaluable additional resource leading to better understanding. • Should this be the case, diversity becomes richness, deserving a higher degree of confidence that we are pointing to the correct answers (but, obviously, “nothing that has been or will be said makes it a process of evolution toward anything” [T. Kuhn]). • This fact is already well understood in experimental science, where the main way to detect systematic effects is to diversify the experimental methods and procedures used. Why not accept it also in reasoning?

- 20. Sparse examples of exclusive choices in measurement science: the Guide to the Expression of Uncertainty in Measurement (GUM) choosing a single framework, with the ‘error approach’ discontinued in favour of an ‘uncertainty approach’; the GUM choosing, for its future edition, a single approach, ‘Bayesian’, replacing the ‘frequentist’ parts; the proposed change of the International System of Units (SI), with “fundamental constants” replacing ‘physical states or conditions’ in the definitions of the base units; the single ‘official’ set of “recommended values” used for the numerical values of quantities (fundamental constants, atomic masses, differences in scales, …); the claimed permanent validity of numerical value stipulations; the traditional exclusive classification of errors/effects into random and systematic, with the concept of “correction” associated with the latter; …

- 21. General conclusions. • At its origin, the indicated trend might be due to a wrong assignment given to a relevant Commission or Task Group, with the request of a single ‘consensus’ outcome instead of a rationally compounded digest of the best available information/knowledge. • However, the consequence risks being politics (needing decisions) leaking into science (seeking understanding), a trend that carries the danger of threatening scientific integrity.