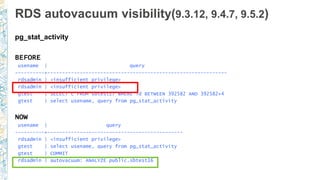

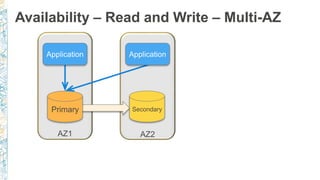

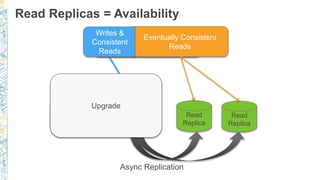

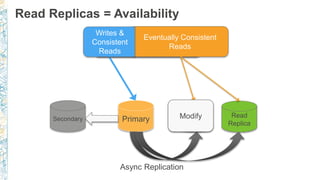

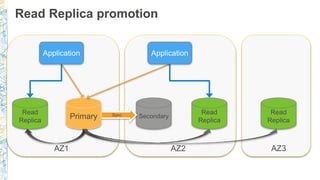

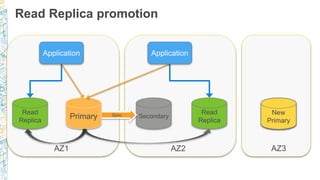

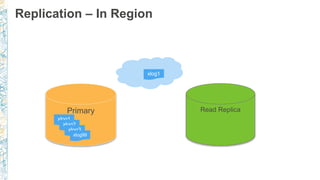

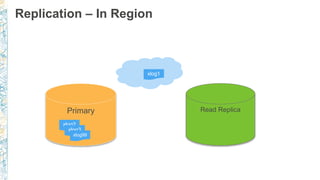

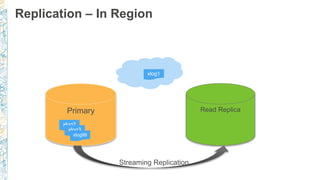

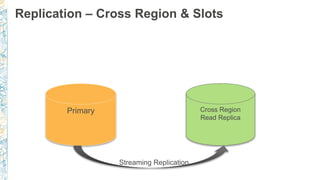

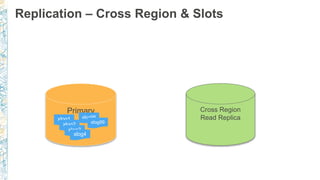

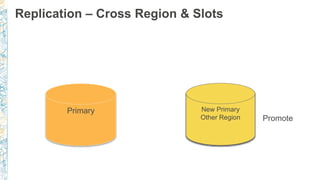

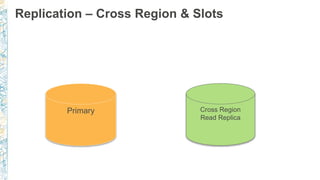

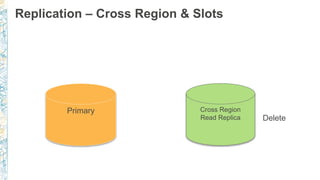

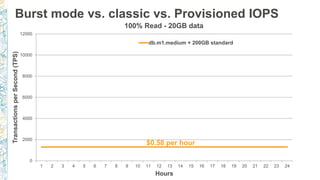

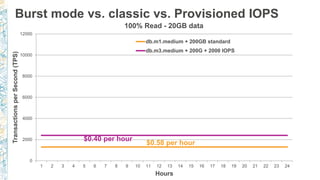

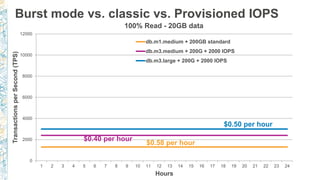

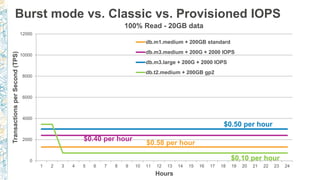

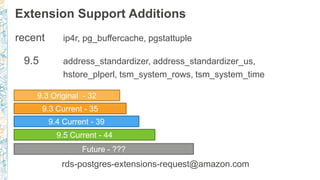

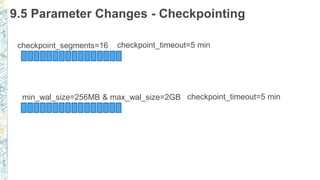

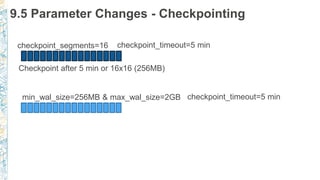

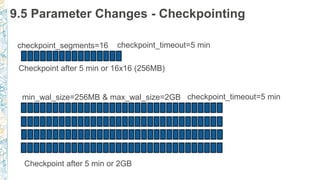

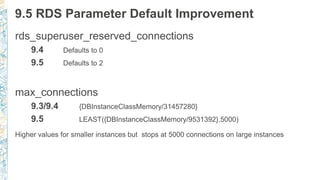

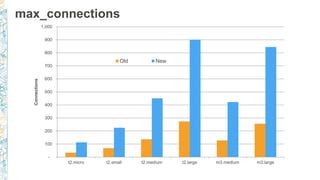

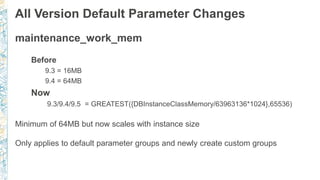

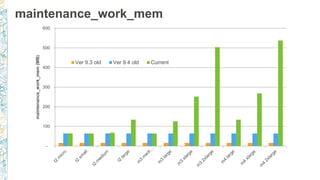

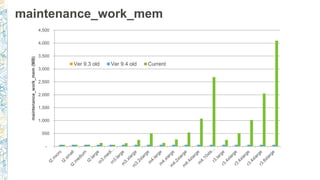

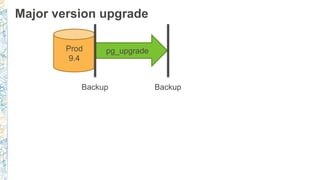

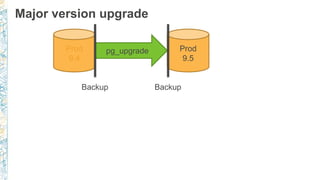

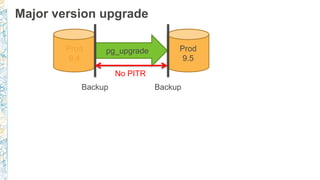

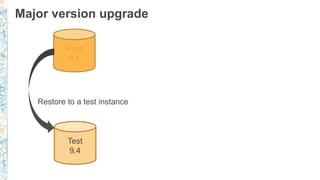

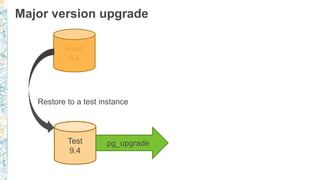

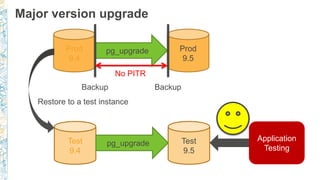

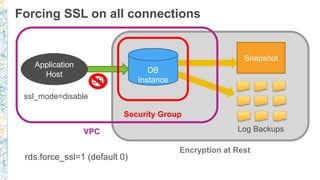

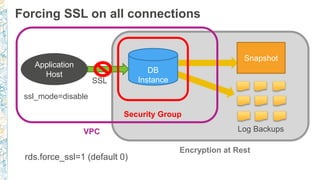

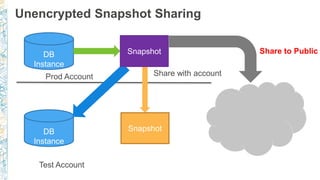

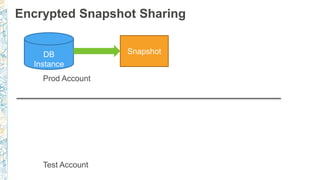

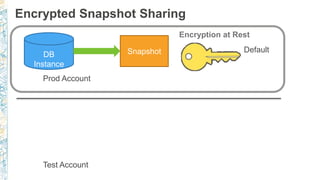

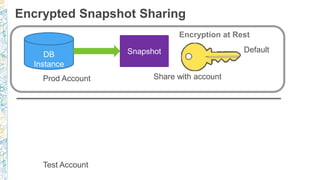

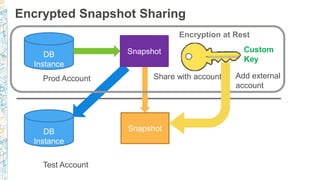

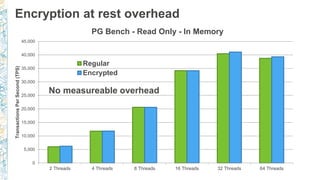

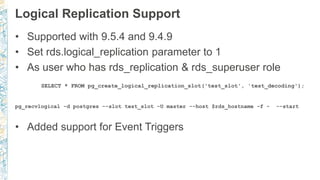

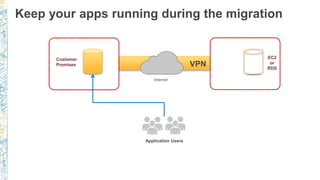

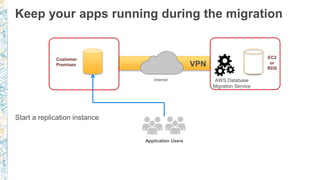

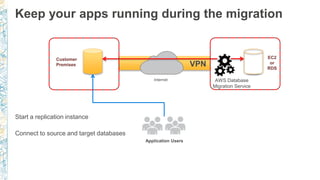

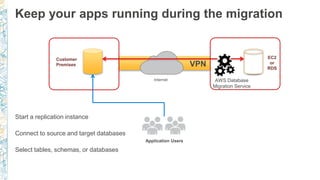

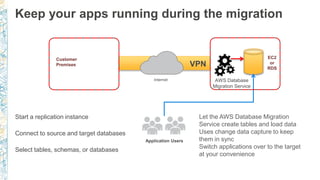

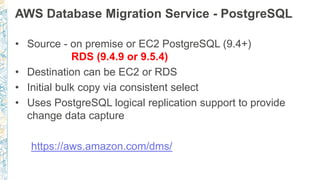

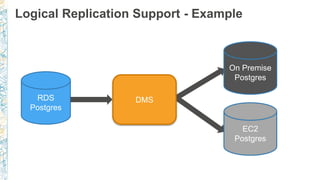

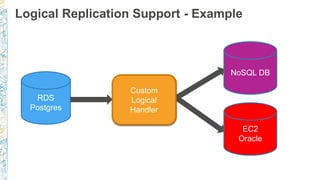

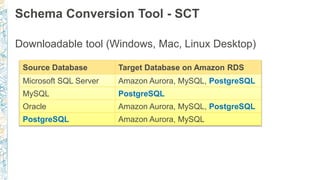

This presentation covers new features and enhancements in Amazon RDS for PostgreSQL, focusing on version 9.5: parameter changes, extension support, and logical replication. It highlights improvements to maintenance parameters, connection limits, and encryption, and outlines best practices for major version upgrades and replication strategies. It also covers using AWS Database Migration Service to migrate databases while keeping them operational during the transition.

![RDS autovacuum logging (9.4.5+)](https://image.slidesharecdn.com/amazonrdspostgresqlnewfeaturesandlessonslearneddallas2016finalexploded-160930164725/85/Amazon-RDS-for-PostgreSQL-Postgres-Open-2016-New-Features-and-Lessons-Learned-72-320.jpg)

log_autovacuum_min_duration = 5000 (i.e. 5 secs)

rds.force_autovacuum_logging_level = LOG

…[14638]:ERROR: canceling autovacuum task

…[14638]:CONTEXT: automatic vacuum of table "postgres.public.pgbench_tellers"

…[14638]:LOG: skipping vacuum of "pgbench_branches" --- lock not available

http://docs.aws.amazon.com/AmazonRDS/latest/UserGuide/Appendix.PostgreSQL.CommonDBATasks.html#Appendix.PostgreSQL.CommonDBATasks.Autovacuum
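
Once autovacuum logging is enabled, messages like the ones on this slide can be scanned for tables whose vacuum was canceled or skipped. The following is a minimal Python sketch, not part of the original slides: the function name `summarize_autovacuum` and the regular expressions are hypothetical, written only against the three message shapes shown above, and the sample input reuses the slide's truncated log lines as-is.

```python
import re

# Patterns for the three message shapes shown on the slide (assumed, not exhaustive).
CANCEL_RE = re.compile(r'ERROR:\s+canceling autovacuum task')
CONTEXT_RE = re.compile(r'CONTEXT:\s+automatic vacuum of table "([^"]+)"')
SKIP_RE = re.compile(r'LOG:\s+skipping vacuum of "([^"]+)" --- lock not available')


def summarize_autovacuum(lines):
    """Return (canceled_tables, skipped_tables) found in an iterable of log lines.

    Simplification: a CONTEXT line is paired with the most recent unmatched
    "canceling autovacuum task" line; process IDs are not compared.
    """
    canceled, skipped = [], []
    pending_cancel = False
    for line in lines:
        if CANCEL_RE.search(line):
            pending_cancel = True
            continue
        match = CONTEXT_RE.search(line)
        if match and pending_cancel:
            canceled.append(match.group(1))
            pending_cancel = False
            continue
        match = SKIP_RE.search(line)
        if match:
            skipped.append(match.group(1))
    return canceled, skipped


# Sample input: the log lines from the slide, with their elided prefixes kept.
sample = [
    '…[14638]:ERROR: canceling autovacuum task',
    '…[14638]:CONTEXT: automatic vacuum of table "postgres.public.pgbench_tellers"',
    '…[14638]:LOG: skipping vacuum of "pgbench_branches" --- lock not available',
]
canceled, skipped = summarize_autovacuum(sample)
print("canceled:", canceled)
print("skipped:", skipped)
```

Tables that appear repeatedly in either list are candidates for a closer look at lock contention, since both messages indicate that autovacuum could not complete its work on the table.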