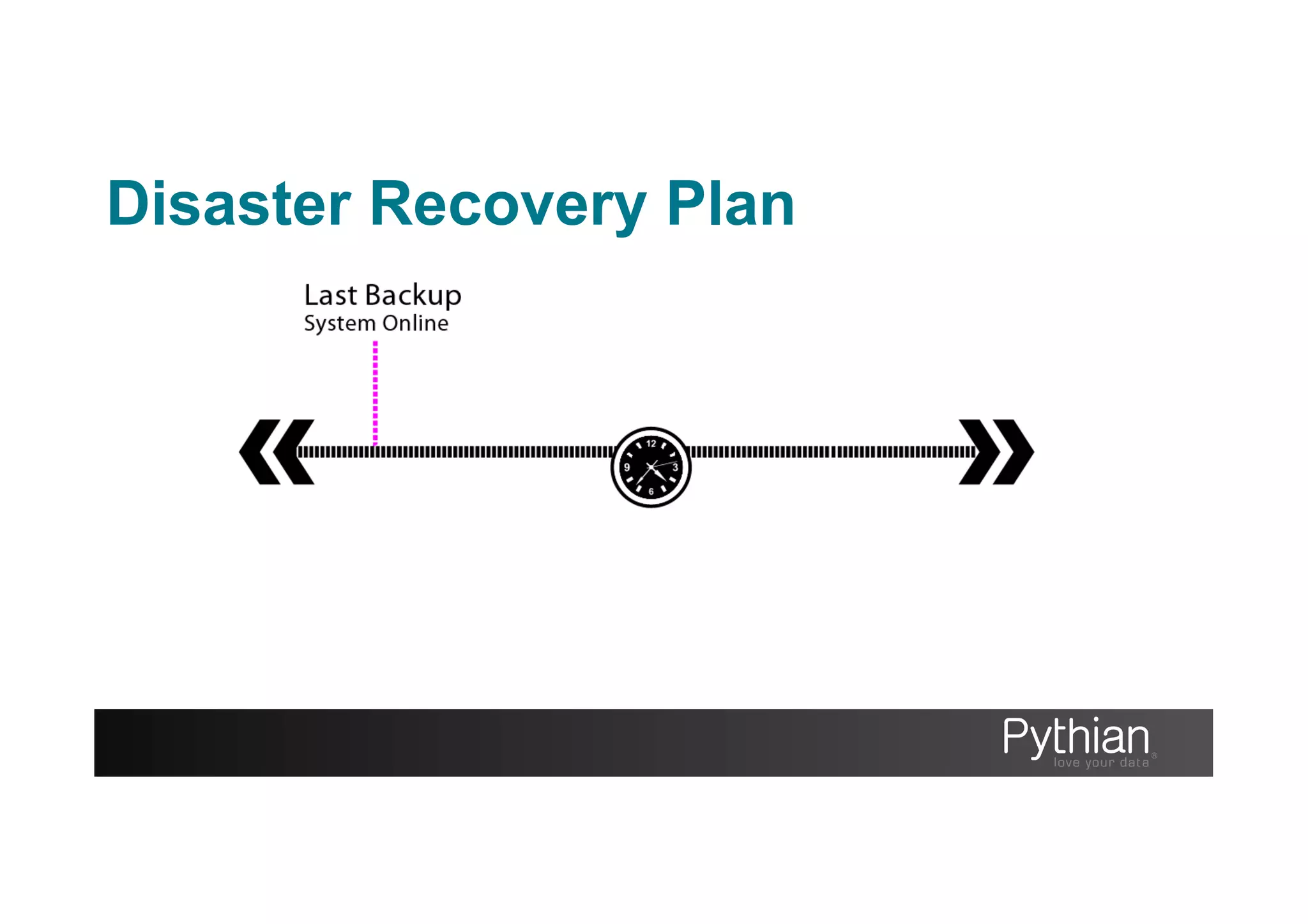

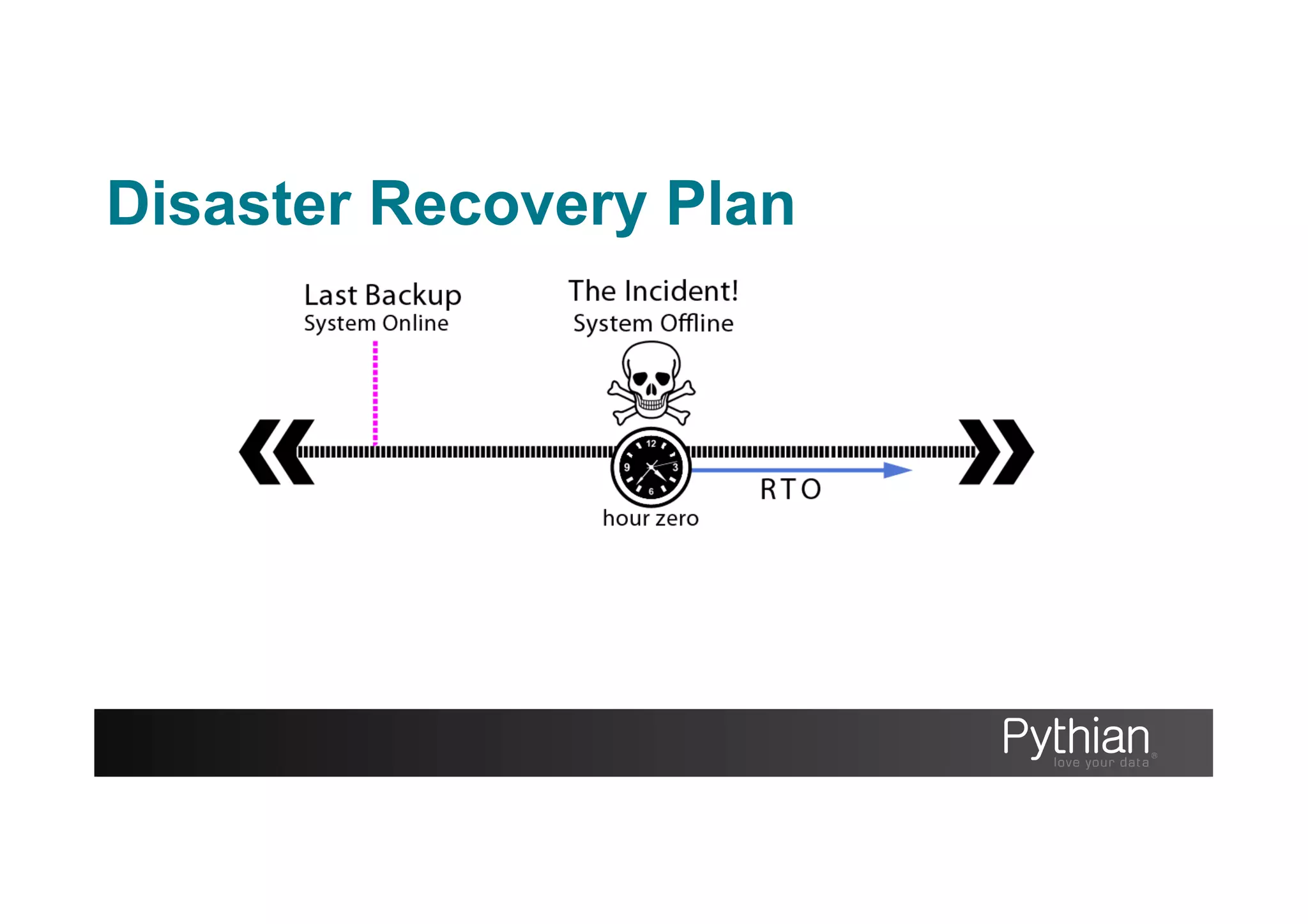

The document discusses the importance of backups for database management, outlining strategies for disaster recovery, different backup types (logical, physical, hot, warm, cold), and tools available for MySQL. It emphasizes the necessity of a well-documented disaster recovery plan, addressing recovery time and point objectives, and lists various backup tools such as mysqldump, mydumper, and Percona XtraBackup. Additionally, it covers techniques for ensuring data reliability and operational continuity, underscoring the importance of regular testing and monitoring of backups.

![mysqldump

The command line utility to create logical dumps of your schema, database

objects and data Good solution for small to medium datasets (0G>20G)

Pros

• Packaged with MySQL

• Broad compatibility (engines)

• Flexible use with pipelines

(gzip, sed, awk, pv, ssh, nc)

• No locking in --single-

transaction with innodb only

tables

Cons

• Single threaded

• Locking by default

• Can be hard to troubleshoot

errors (syntax error on line

14917212938)

• Slow to reload data

• Be wary of foreign keys and

triggers when restoring.

Type: Logical Heat: Hot[innodb only]:Warm[myisam] Impact: Medium Speed: Slow](https://image.slidesharecdn.com/backuptodaysavestomorrow-130501035205-phpapp02/75/A-Backup-Today-Saves-Tomorrow-30-2048.jpg)

![Tips

RTFM

There’s much more to see and test then what I’ve shown

--where = “id between 10000 and 20000”

--single-transaction

Check the time

Time your backup and recovery durations this will allow you to set expectations if you need to use the

backups. The time and pv unix programs will help you

time mysqldump > backup.sql

real 0m0.108s

user 0m0.003s

sys 0m0.006s

pv backup.sql | mysql -u user -p

101MB 0:00:39 [5.6MB/s]

[===> ] 13% ETA 0:03:12

mysqldump](https://image.slidesharecdn.com/backuptodaysavestomorrow-130501035205-phpapp02/75/A-Backup-Today-Saves-Tomorrow-33-2048.jpg)

![Build from source;

[moore@bkphost ~]# wget https://launchpad.net/mydumper/0.5/0.5.2/+download/mydumper-0.5.2.tar.gz

[moore@bkphost ~]# cmake .

-- The CXX compiler identification is GNU

…

-- Build files have been written to: ~/mydumper-0.5.2

[moore@bkphost ~]# make

Scanning dependencies of target mydumper

[ 20%] Building C object CMakeFiles/mydumper.dir/

…

[ 80%] Built target mydumper

Scanning dependencies of target myloader

[100%] Built target myloader

[moore@bkphost ~]# mydumper --help

…

Dependencies

(MySQL, GLib, ZLib, PCRE)

mydumper](https://image.slidesharecdn.com/backuptodaysavestomorrow-130501035205-phpapp02/75/A-Backup-Today-Saves-Tomorrow-35-2048.jpg)

![mydumper

Example of mydumper & myloader

Dump data

[moore@bkphost ~]# mydumper -h localhost –u bkpuser –p secret --database world --outputdir /backup_dir --verbose 3

** Message: Connected to a MySQL server

** Message: Started dump at: 2013-04-15 12:22:48

** Message: Thread 1 connected using MySQL connection ID 4

** Message: Thread 1 dumping data for `world`.`City`

** Message: Thread 1 shutting down

** Message: Non-InnoDB dump complete, unlocking tables

** Message: Finished dump at: 2013-04-15 12:22:54

Restore data

[moore@bkphost ~]# myloader -h localhost –u bkpuser –p secret --database world –d /backup_dir --verbose 3

** Message: n threads created

** Message: Creating table `world`.`City`

** Message: Thread 1 restoring `world`.`City` part 0

** Message: Thread 1 shutting down](https://image.slidesharecdn.com/backuptodaysavestomorrow-130501035205-phpapp02/75/A-Backup-Today-Saves-Tomorrow-36-2048.jpg)

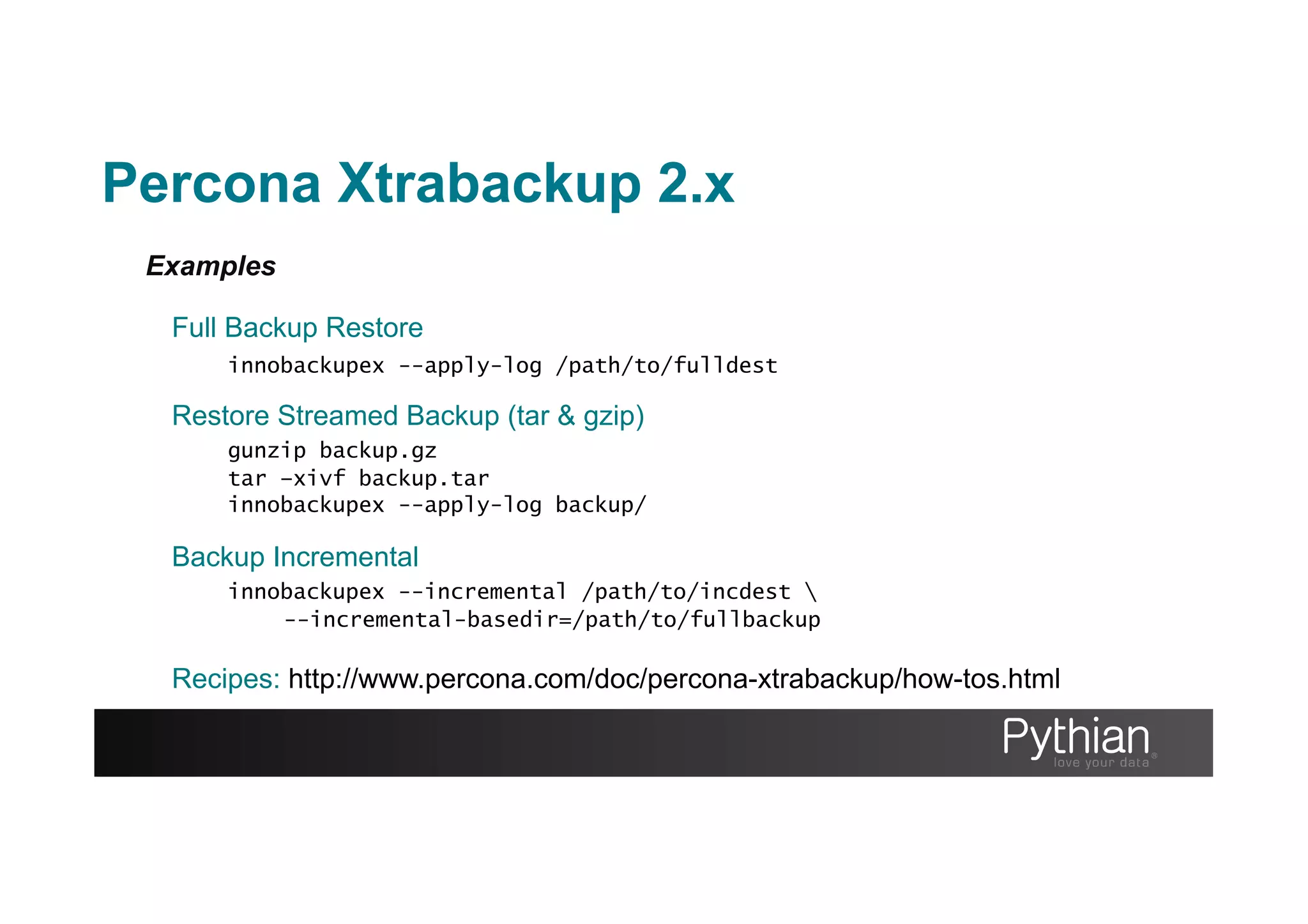

![More Examples

Optimizing the restore phase

innobackupex --use-memory=2G --apply-log /path/to/backup

Backups with pigz

innobackupex --stream=[xbstream|tar] . | [pigz|gzip] > backup.gz

Backup Incremental

innobackupex --incremental /path/to/incdest

--incremental-basedir=/path/to/fullbackup

Recipes: http://www.percona.com/doc/percona-xtrabackup/how-tos.html

Many, many more options…

Percona Xtrabackup 2.x](https://image.slidesharecdn.com/backuptodaysavestomorrow-130501035205-phpapp02/75/A-Backup-Today-Saves-Tomorrow-41-2048.jpg)