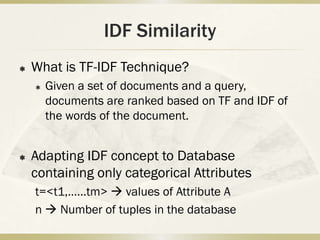

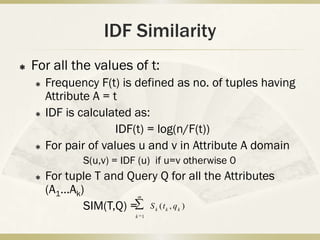

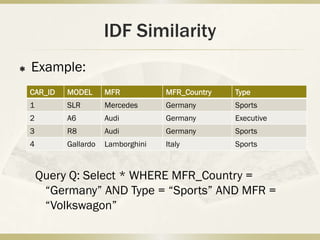

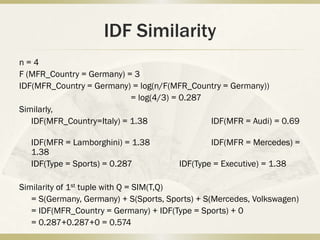

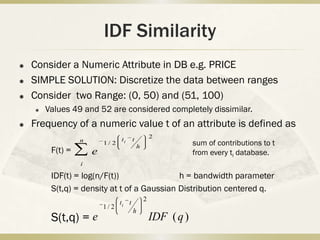

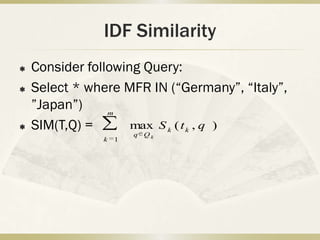

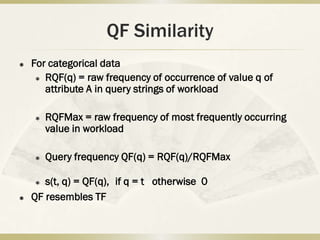

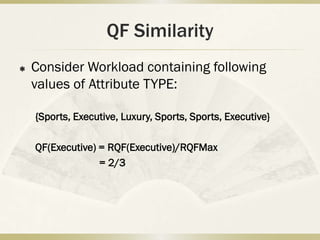

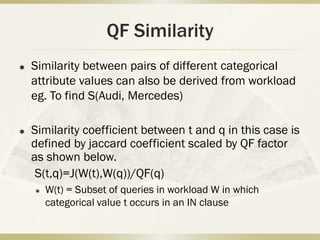

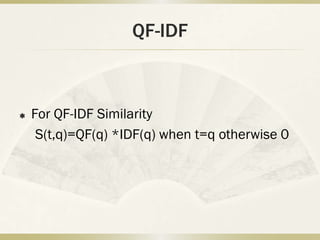

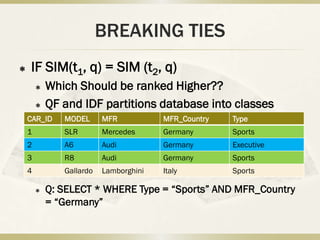

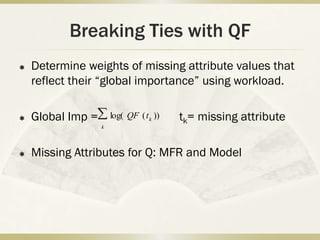

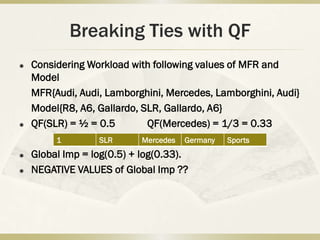

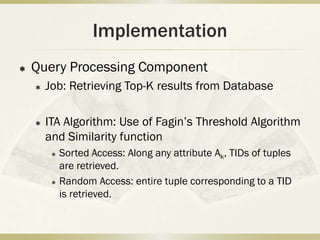

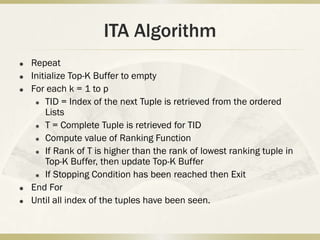

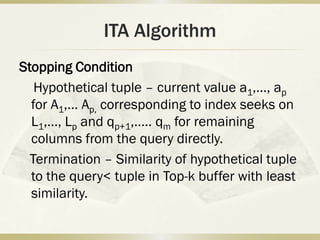

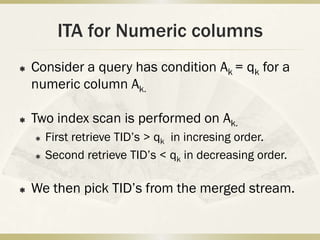

This document proposes techniques for automatically ranking results from database queries. It extends TF-IDF models from information retrieval to databases by developing IDF and QF similarity measures. IDF similarity adapts inverse document frequency to databases by calculating frequency of attribute values. QF similarity leverages query frequency from workload logs. An index-based threshold algorithm is used to efficiently retrieve top-K results by sorting on attribute values.