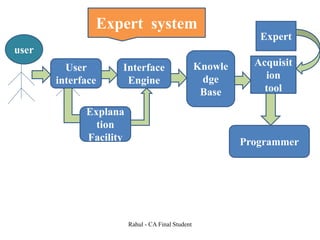

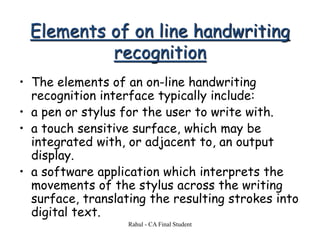

The document traces the history and development of artificial intelligence, from the field's origins in 1956 to modern applications. It covers foundational areas of AI research, including knowledge representation, machine learning, natural language processing, computer vision, and robotics, as well as the challenges of achieving human-level artificial general intelligence. It also gives examples of notable AI systems in game playing, speech recognition, and expert systems.