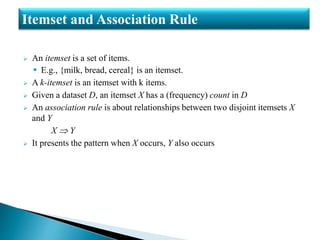

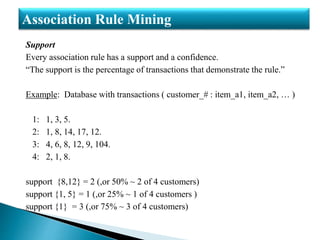

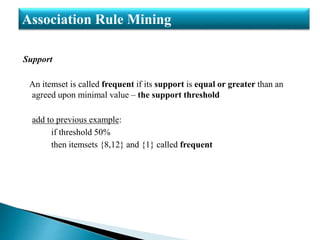

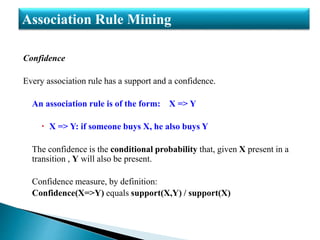

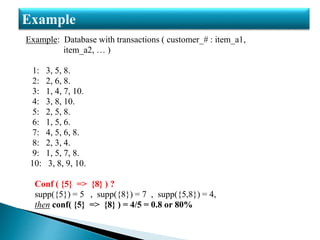

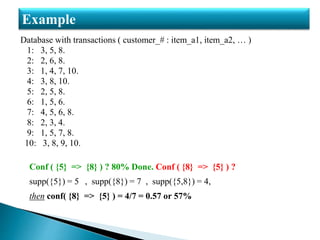

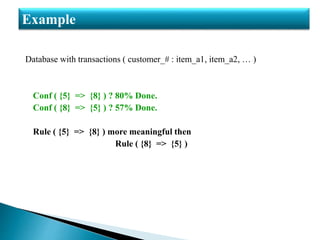

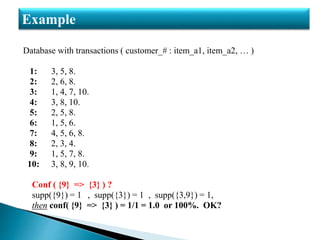

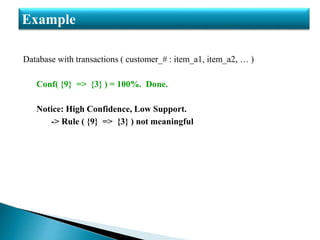

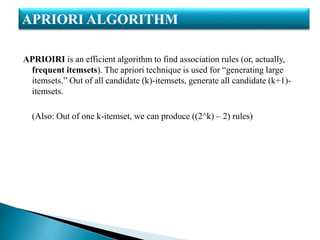

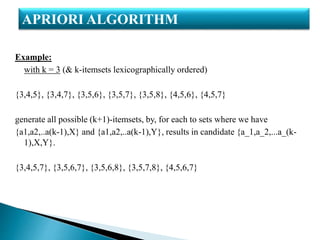

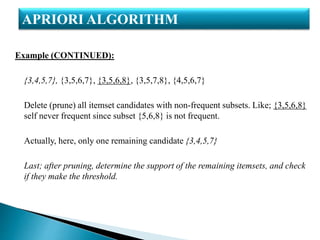

The document covers association rule mining and the Apriori algorithm. It defines the key concepts of association rule mining: frequent itemsets, support, confidence, and association rules. It then explains the steps the Apriori algorithm uses to generate frequent itemsets and rules: candidate generation, pruning candidates that contain an infrequent subset, and counting support. An example transaction database is used to show how support and confidence are calculated for rules and to illustrate the Apriori algorithm.
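
The concepts summarized above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the document's own worked example: the `TRANSACTIONS` data is a hypothetical toy database, and the function names `support`, `confidence`, and `apriori` are chosen here for illustration. Support is taken as the fraction of transactions containing an itemset, and the confidence of a rule A -> C as support(A and C together) divided by support(A).

```python
from itertools import combinations

# Hypothetical toy transaction database (an assumption, not the document's data).
TRANSACTIONS = [
    {"bread", "milk"},
    {"bread", "diapers", "beer", "eggs"},
    {"milk", "diapers", "beer", "cola"},
    {"bread", "milk", "diapers", "beer"},
    {"bread", "milk", "diapers", "cola"},
]


def support(itemset, transactions):
    """Fraction of transactions that contain every item in itemset."""
    itemset = frozenset(itemset)
    return sum(itemset <= t for t in transactions) / len(transactions)


def confidence(antecedent, consequent, transactions):
    """Confidence of the rule antecedent -> consequent."""
    a = frozenset(antecedent)
    both = a | frozenset(consequent)
    return support(both, transactions) / support(a, transactions)


def apriori(transactions, min_support):
    """Return a dict mapping each frequent itemset (frozenset) to its support."""
    items = {item for t in transactions for item in t}
    candidates = {frozenset([i]) for i in items}
    frequent = {}
    k = 1
    while candidates:
        # Support counting: keep candidates that meet the threshold.
        level = {}
        for c in candidates:
            s = support(c, transactions)
            if s >= min_support:
                level[c] = s
        frequent.update(level)
        # Candidate generation: join frequent k-itemsets into (k+1)-itemsets.
        k += 1
        joined = {a | b for a in level for b in level if len(a | b) == k}
        # Pruning: drop any candidate with an infrequent (k-1)-subset.
        candidates = {
            c for c in joined
            if all(frozenset(s) in level for s in combinations(c, k - 1))
        }
    return frequent


if __name__ == "__main__":
    for itemset, s in sorted(apriori(TRANSACTIONS, 0.6).items(),
                             key=lambda kv: (len(kv[0]), sorted(kv[0]))):
        print(sorted(itemset), round(s, 2))
    print("conf(diapers -> beer):",
          round(confidence({"diapers"}, {"beer"}, TRANSACTIONS), 2))
```

With a minimum support of 0.6 on this toy data, the candidate {bread, milk, diapers} survives pruning because all of its 2-item subsets are frequent, but it is then rejected at the support-counting step. This is why Apriori needs both steps: pruning only rules out candidates cheaply, and the database scan makes the final decision.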