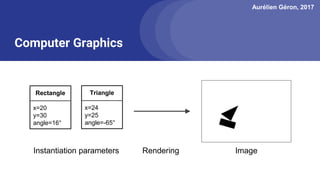

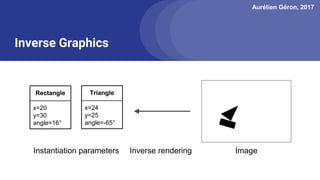

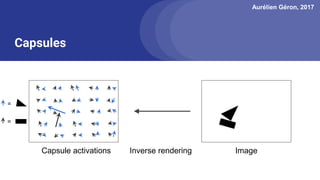

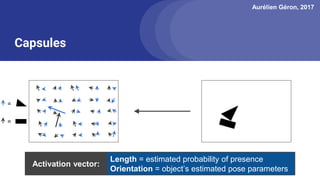

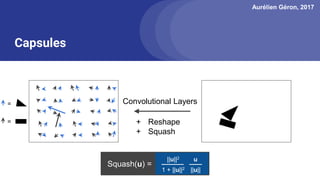

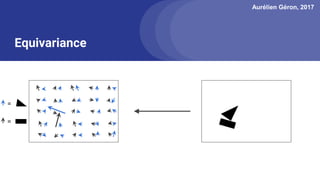

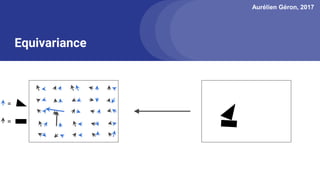

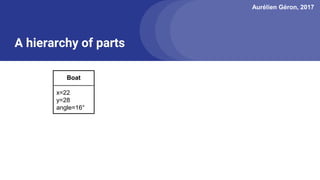

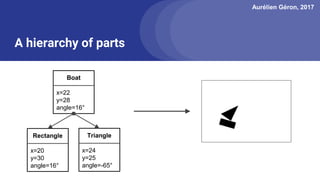

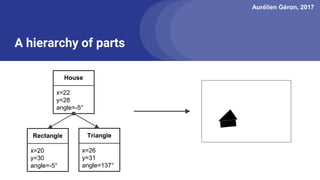

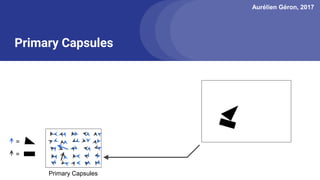

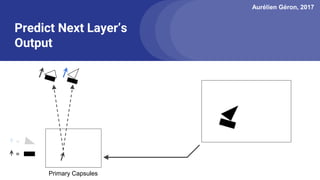

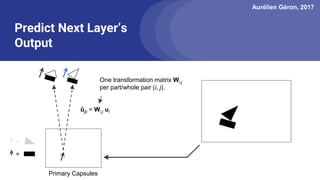

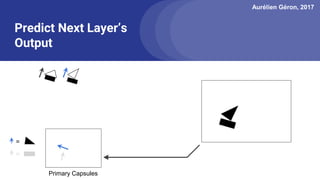

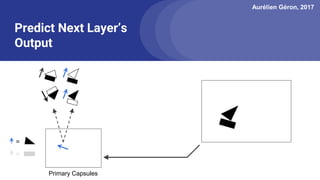

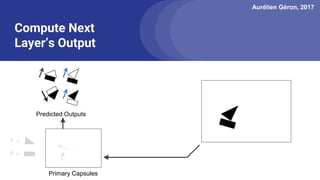

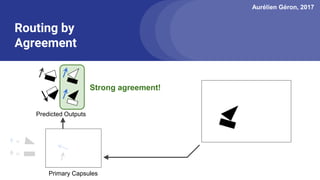

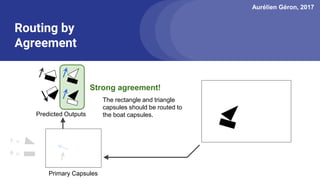

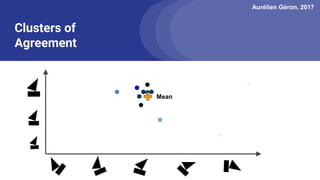

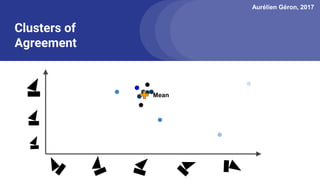

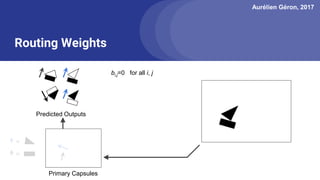

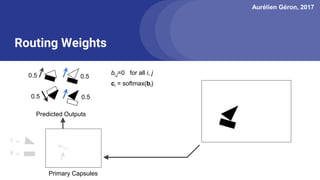

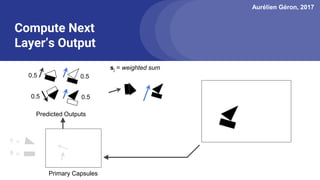

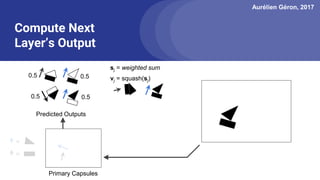

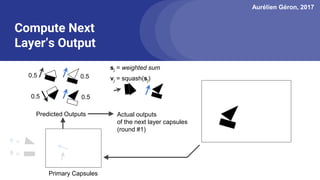

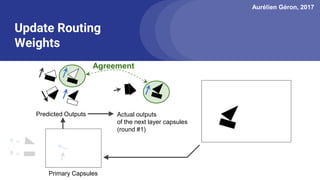

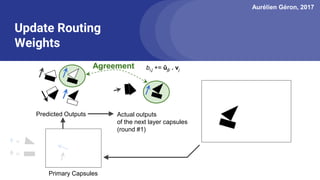

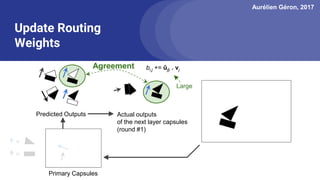

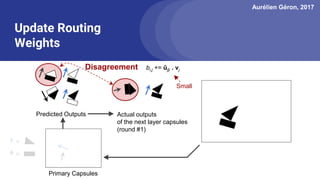

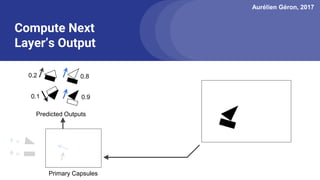

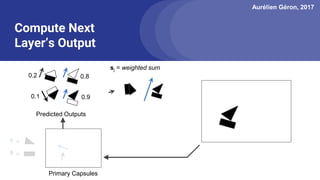

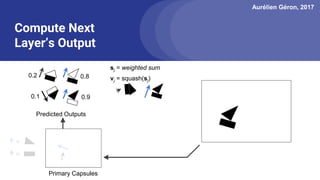

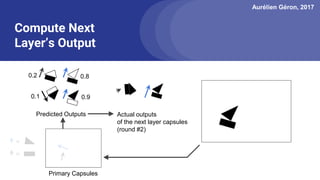

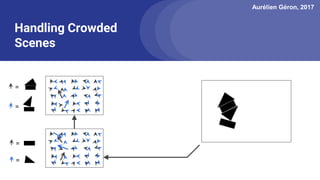

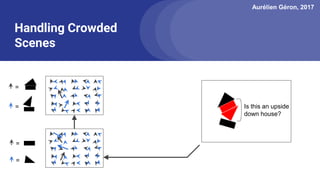

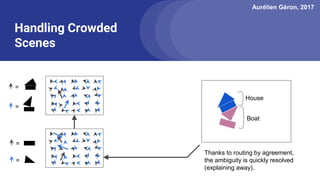

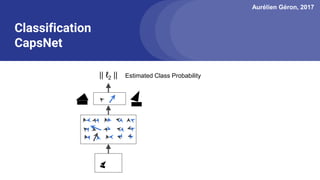

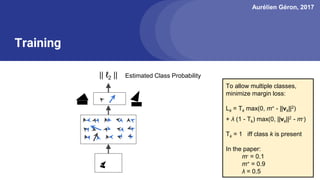

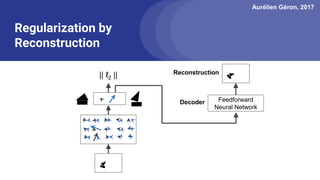

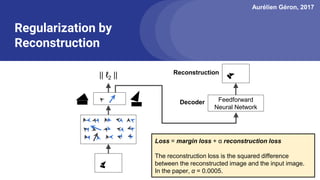

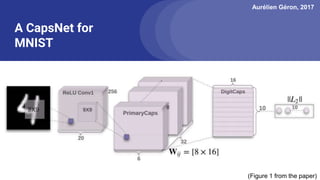

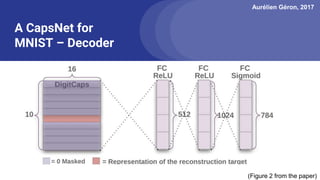

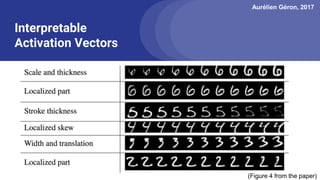

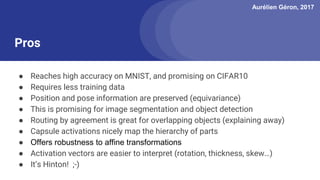

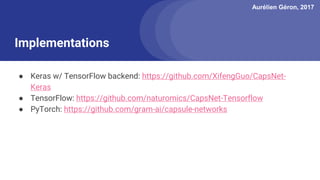

The document discusses capsule networks, focusing on their architecture and functionality: dynamic routing, capsule activations, and the handling of perspective transformations. It highlights their advantages, such as high accuracy on MNIST and robustness to affine transformations, and it addresses limitations such as slow training and difficulty separating objects that sit very close together. It also links to various implementations and other resources on capsule networks.
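
As a rough illustration of the dynamic routing mentioned above, here is a minimal NumPy sketch. It assumes the slides refer to the routing-by-agreement procedure commonly used with capsule networks. The function names (`squash`, `dynamic_routing`), the argument `u_hat`, its shape, and the default of three iterations are illustrative assumptions, not taken from the slides or from any of the linked implementations.

```python
import numpy as np


def squash(s, axis=-1, eps=1e-8):
    """Shrink a vector's length into [0, 1) while keeping its direction."""
    sq_norm = np.sum(s * s, axis=axis, keepdims=True)
    return (sq_norm / (1.0 + sq_norm)) * s / np.sqrt(sq_norm + eps)


def softmax(x, axis):
    """Numerically stable softmax along one axis."""
    x = x - np.max(x, axis=axis, keepdims=True)
    e = np.exp(x)
    return e / np.sum(e, axis=axis, keepdims=True)


def dynamic_routing(u_hat, num_iters=3):
    """Routing-by-agreement sketch.

    u_hat: prediction vectors with the assumed shape
           (num_in_capsules, num_out_capsules, out_dim).
    Returns the output capsule vectors v (num_out_capsules, out_dim)
    and the coupling coefficients c (num_in_capsules, num_out_capsules).
    """
    num_in, num_out, _ = u_hat.shape
    b = np.zeros((num_in, num_out))  # routing logits, start uniform
    for _ in range(num_iters):
        # Each input capsule distributes its vote across the output capsules.
        c = softmax(b, axis=1)
        # Weighted sum of the predictions for each output capsule.
        s = np.sum(c[:, :, None] * u_hat, axis=0)
        v = squash(s)
        # Raise the logit where a prediction agrees with the output (dot product).
        b = b + np.sum(u_hat * v[None, :, :], axis=-1)
    return v, c


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    u_hat = rng.normal(size=(6, 3, 4))  # 6 input capsules, 3 output capsules, 4-D
    v, c = dynamic_routing(u_hat)
    print(v.shape, c.shape)
```

The length of each row of `v` can be read as the activation of that output capsule, and each row of `c` sums to 1, so an input capsule's vote is shared among the output capsules rather than duplicated.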