Enabling Condor in LHC Computing Grid

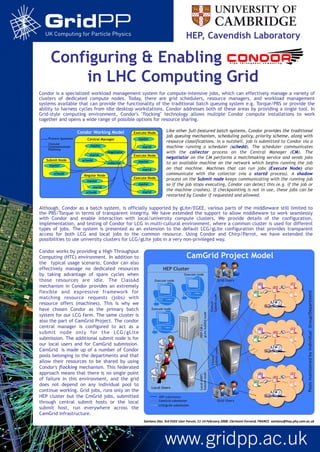

HEP, Cavendish Laboratory

Configuring & Enabling Condor in LHC Computing Grid

Condor is a specialized workload management system for compute-intensive jobs that can effectively manage a variety of clusters of dedicated compute nodes. Today, grid schedulers, resource managers, and workload management systems are available that either provide the functionality of a traditional batch queuing system (e.g. Torque/PBS) or harness cycles from idle desktop workstations. Condor addresses both areas with a single tool. In a Grid-style computing environment, Condor's "flocking" technology allows multiple Condor installations to work together and opens a wide range of options for resource sharing.

[Figure: Condor Working Model. Central Manager (master, collector, negotiator, schedd, startd); Submit Node (master, schedd); Execute Nodes (master, startd); Regular Node (master, schedd, startd). Legend: process spawned; ClassAd communication pathway.]

Like other full-featured batch systems, Condor provides the traditional job queuing mechanism, scheduling policy, and priority scheme, along with resource classifications. In a nutshell, a job is submitted to Condor via a machine running a scheduler (schedd). The scheduler communicates with the collector process on the Central Manager (CM). The negotiator on the CM performs a matchmaking service and sends each job to an available machine on the network, which then begins running it. Machines that can run jobs (Execute Nodes) also communicate with the collector, via a startd process. A shadow process on the Submit Node keeps communicating with the running job, so Condor can detect when the job stops executing (e.g. if the job or the machine crashes). If checkpointing is not in use, such jobs can be restarted by Condor if requested and allowed.
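The matchmaking step described above can be illustrated with a toy model. This is a minimal sketch, not Condor's actual implementation: the class names `MachineAd`, `JobAd`, and `Collector`, the `memory_mb` attribute, and the first-fit policy are all assumptions made for illustration. Real ClassAds are attribute lists with requirement expressions on both the job and the machine side.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class MachineAd:
    """Hypothetical stand-in for the ad a startd sends to the collector."""
    name: str
    memory_mb: int
    busy: bool = False


@dataclass
class JobAd:
    """Hypothetical stand-in for the ad a schedd holds for a queued job."""
    job_id: int
    requirements: Callable[[MachineAd], bool]
    matched_to: Optional[str] = None


@dataclass
class Collector:
    """Holds the machine ads reported by execute nodes."""
    machines: list = field(default_factory=list)

    def advertise(self, ad: MachineAd) -> None:
        self.machines.append(ad)


def negotiate(collector: Collector, queue: list) -> list:
    """One simplified negotiation cycle: give each unmatched job the first
    idle machine that satisfies the job's requirements (first fit)."""
    matches = []
    for job in queue:
        if job.matched_to is not None:
            continue
        for machine in collector.machines:
            if not machine.busy and job.requirements(machine):
                machine.busy = True
                job.matched_to = machine.name
                matches.append((job.job_id, machine.name))
                break
    return matches


collector = Collector()
collector.advertise(MachineAd("node01", memory_mb=2048))
collector.advertise(MachineAd("node02", memory_mb=8192))

queue = [
    JobAd(1, requirements=lambda m: m.memory_mb >= 4096),
    JobAd(2, requirements=lambda m: m.memory_mb >= 1024),
    JobAd(3, requirements=lambda m: m.memory_mb >= 1024),
]

matches = negotiate(collector, queue)
print(matches)
```

In this sketch the third job stays unmatched because both machines are already busy; in a real pool it would simply wait in the queue for a later negotiation cycle.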
Although Condor is officially supported as a batch system by gLite/EGEE, various parts of the middleware are still limited to PBS/Torque in terms of transparent integration. We have extended the support to allow the middleware to work seamlessly with Condor and to enable interaction with local/university compute clusters. We provide details of the configuration, implementation, and testing of Condor for LCG in a multi-cultural environment, where a common cluster is used for different types of jobs. The system is presented as an extension to the default LCG/gLite configuration that provides transparent access for both LCG and local jobs to the common resource. Using Condor and Chirp/Parrot, we have extended the possibilities to use university clusters for LCG/gLite jobs in a non-privileged way.

[Figure: CamGrid Project Model. Pools maintained by individual groups/departments; HEP cluster with execute nodes; HEP CM + gLite submit node; local (HEP) submit node; central submit nodes; local users and grid users. Legend: HEP submission; CamGrid submission; LCG/gLite submission.]

Condor works by providing a High Throughput Computing (HTC) environment. In addition to the typical usage scenario, Condor can also effectively manage non-dedicated resources by taking advantage of spare cycles when those resources are idle. The ClassAd mechanism in Condor provides an extremely flexible and expressive framework for matching resource requests (jobs) with resource offers (machines). This is why we have chosen Condor as the primary batch system for our LCG farm. The same cluster is also part of the CamGrid project. The Condor central manager is configured to act as a submit node only for LCG/gLite submission. The additional submit node is for our local users and for CamGrid submission. CamGrid is made up of a number of Condor pools that belong to individual departments and that allow their resources to be shared through Condor's flocking mechanism.
This federated approach means that there is no single point of failure in this environment, and the grid does not depend on any individual pool to continue working. Grid jobs run only on the HEP cluster, but CamGrid jobs, submitted through the central submit hosts or the local submit host, run everywhere across the CamGrid infrastructure.

Santanu Das. 3rd EGEE User Forum, 11-14 February 2008, Clermont-Ferrand, France. santanu@hep.phy.cam.ac.uk
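The placement rule stated in the poster (grid jobs stay on the HEP cluster, CamGrid jobs may run in any pool) can be sketched as a small routing function. This is an illustrative model only, not Condor configuration: the pool names `DeptA` and `DeptB`, the slot counts, and the "home pool first, then other pools in order" policy are assumptions.

```python
from typing import Dict, List, Optional

HOME_POOL = "HEP"  # the only pool name taken from the text


def candidate_pools(job_type: str, pools: List[str]) -> List[str]:
    """Return the pools a job may use, in the order they are tried."""
    if job_type == "grid":
        # LCG/gLite jobs are confined to the HEP cluster.
        return [HOME_POOL]
    if job_type == "camgrid":
        # CamGrid jobs try the home pool first, then flock to the others.
        return [HOME_POOL] + [p for p in pools if p != HOME_POOL]
    raise ValueError(f"unknown job type: {job_type}")


def place(job_type: str, free_slots: Dict[str, int]) -> Optional[str]:
    """Claim one free slot in the first eligible pool, or return None."""
    for pool in candidate_pools(job_type, list(free_slots)):
        if free_slots.get(pool, 0) > 0:
            free_slots[pool] -= 1
            return pool
    return None


# Hypothetical pools and free-slot counts.
free_slots = {"HEP": 1, "DeptA": 1, "DeptB": 0}
placements = [place(t, free_slots) for t in ("grid", "grid", "camgrid", "camgrid")]
print(placements)
```

Here the second grid job is left waiting once the HEP slot is taken, while the first CamGrid job overflows into another pool, which mirrors the asymmetry between the two job types described above.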