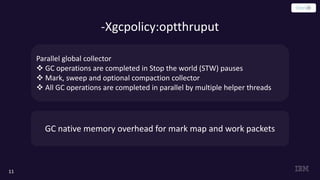

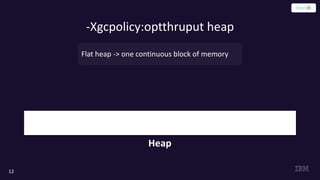

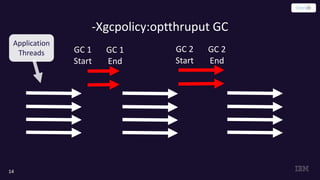

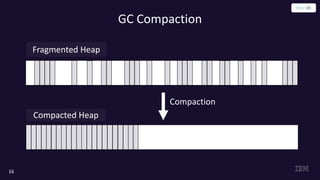

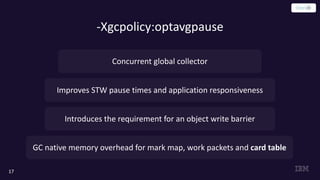

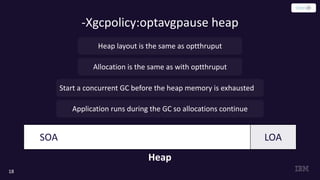

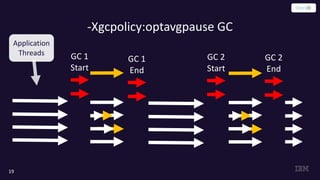

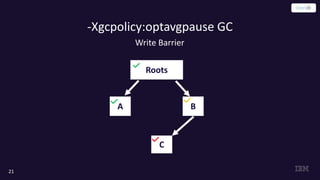

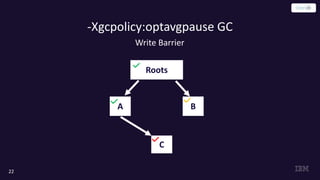

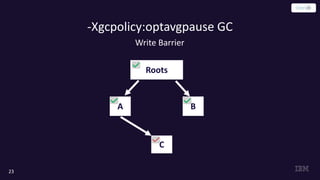

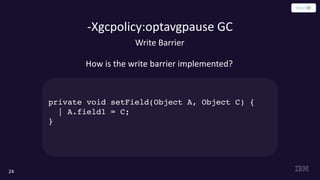

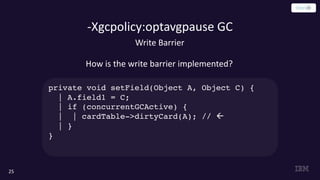

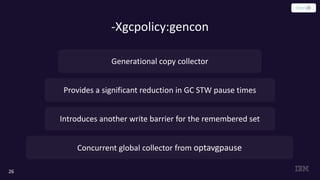

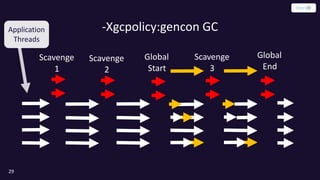

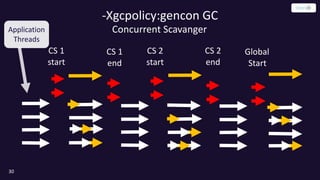

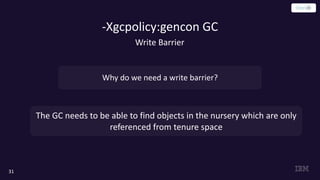

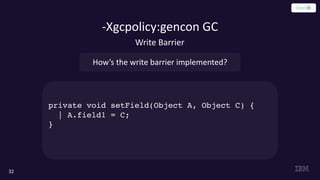

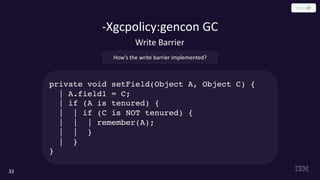

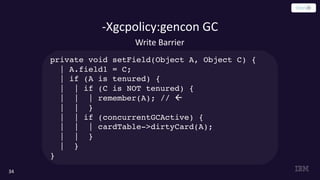

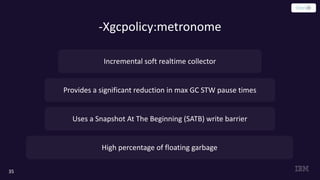

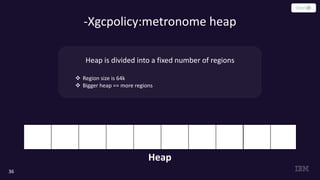

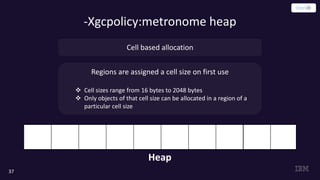

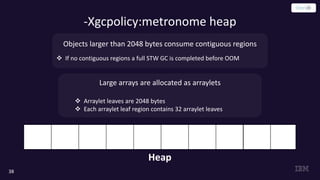

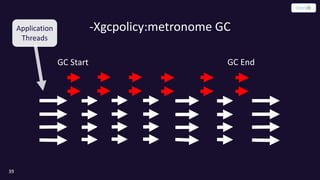

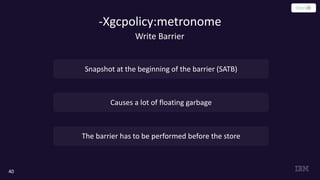

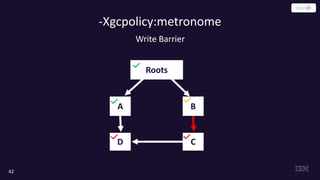

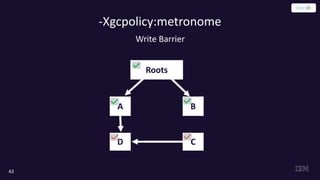

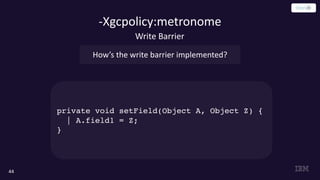

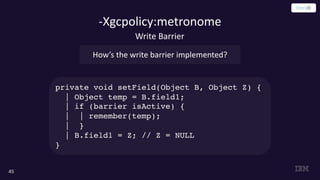

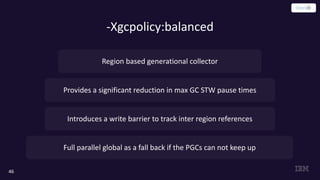

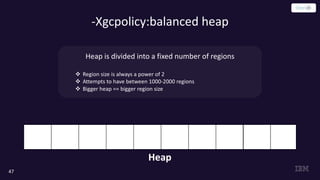

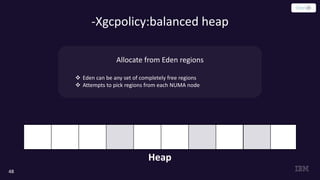

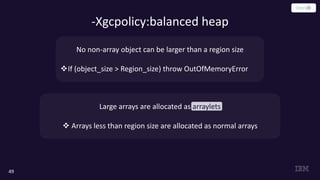

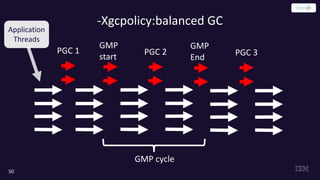

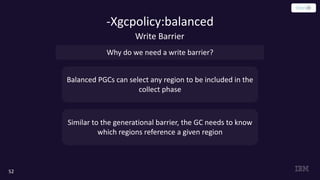

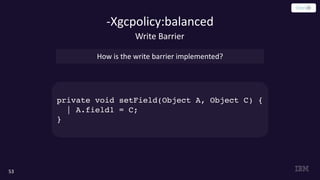

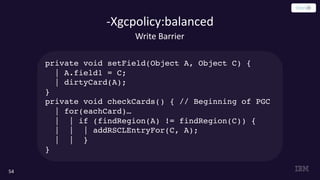

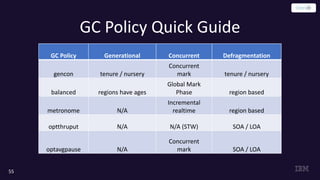

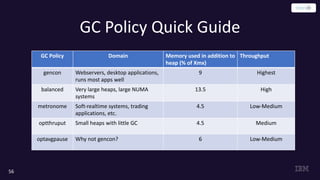

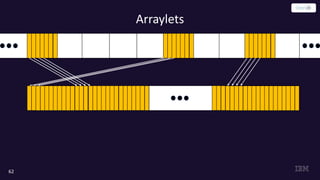

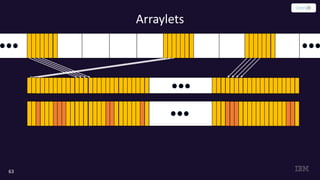

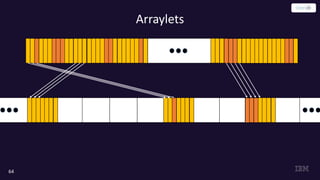

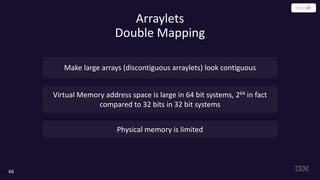

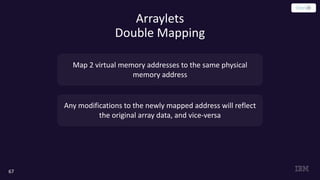

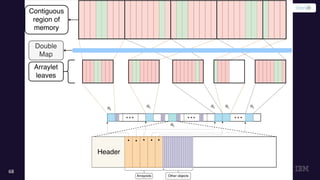

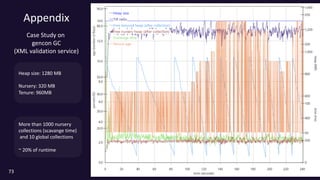

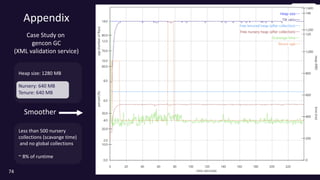

This document summarizes key aspects of garbage collection in Java. It begins with an introduction to garbage collection and an overview of OpenJ9, an open-source JVM project. It then discusses the garbage collection policies in OpenJ9, including optthruput, optavgpause, gencon, balanced, and metronome. For each policy, it describes the heap layout, the garbage collection process, and the use of write barriers. It also covers double mapping, which improves large-array performance in some policies. Throughout, it provides code examples and diagrams to illustrate the concepts.

![References: [1] R. Jones et al., "The Garbage Collection Handbook", Chapman & Hall/CRC, 2012](https://image.slidesharecdn.com/bragaslides-200218135421/85/Demystifying-Garbage-Collection-in-Java-75-320.jpg)