PRML 2.3

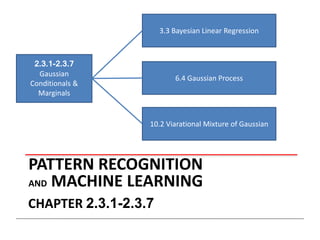

- 1. PATTERN RECOGNITION AND MACHINE LEARNING, CHAPTER 2.3.1-2.3.7: 2.3.1-2.3.7 Gaussian Conditionals & Marginals; 3.3 Bayesian Linear Regression; 6.4 Gaussian Processes; 10.2 Variational Mixture of Gaussians

- 3. Marginal

- 4. Partitioned Conditionals and Marginals

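- 6. Partitioned Conditionals and Marginals. For $x = (x_a, x_b)$ with $p(x) = \mathcal{N}(x \mid \mu, \Sigma)$ partitioned accordingly. Conditional: $p(x_a \mid x_b) = \mathcal{N}(x_a \mid \mu_{a|b}, \Sigma_{a|b})$ with $\mu_{a|b} = \mu_a + \Sigma_{ab}\Sigma_{bb}^{-1}(x_b - \mu_b)$ and $\Sigma_{a|b} = \Sigma_{aa} - \Sigma_{ab}\Sigma_{bb}^{-1}\Sigma_{ba}$. Marginal: $p(x_a) = \mathcal{N}(x_a \mid \mu_a, \Sigma_{aa})$.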
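
The partitioned results from PRML 2.3.1-2.3.2 can be sketched in pure Python for the simplest case, where both blocks $x_a$ and $x_b$ are scalars. The function name `conditional_and_marginal` and the example numbers are illustrative assumptions, not taken from the slides.

```python
def conditional_and_marginal(mu_a, mu_b, s_aa, s_ab, s_bb, x_b):
    """Bivariate Gaussian with mean (mu_a, mu_b) and covariance
    [[s_aa, s_ab], [s_ab, s_bb]].

    Returns (cond_mean, cond_var, marg_mean, marg_var) describing
    p(x_a | x_b) and p(x_a).
    """
    # Conditional: shift the mean by the regression of x_a on x_b,
    # and shrink the variance by the explained part.
    cond_mean = mu_a + s_ab / s_bb * (x_b - mu_b)
    cond_var = s_aa - s_ab * s_ab / s_bb
    # Marginal: simply read off the corresponding block.
    return cond_mean, cond_var, mu_a, s_aa


# Hypothetical example values
cond_mean, cond_var, marg_mean, marg_var = conditional_and_marginal(
    mu_a=0.0, mu_b=1.0, s_aa=2.0, s_ab=0.5, s_bb=1.0, x_b=3.0
)
```

Observing $x_b$ never increases the variance of $x_a$: the conditional variance is at most the marginal variance, with equality only when the two components are uncorrelated.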

- 7. To Bayesian Linear Regression & Gaussian Process

- 8. To Bayesian Linear Regression & Gaussian Process

- 9. Bayes’ Theorem for Gaussian Variables (1)

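- 10. Bayes’ Theorem for Gaussian Variables (2) Given $p(x) = \mathcal{N}(x \mid \mu, \Lambda^{-1})$ and $p(y \mid x) = \mathcal{N}(y \mid Ax + b, L^{-1})$, we have $p(y) = \mathcal{N}(y \mid A\mu + b, L^{-1} + A\Lambda^{-1}A^T)$ and $p(x \mid y) = \mathcal{N}(x \mid \Sigma\{A^T L (y - b) + \Lambda\mu\}, \Sigma)$, where $\Sigma = (\Lambda + A^T L A)^{-1}$.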
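
A minimal sketch of the linear-Gaussian result from PRML 2.3.3, restricted to scalars so that precisions are plain numbers. The function name `linear_gaussian` and the example values are assumptions made for illustration.

```python
def linear_gaussian(mu, lam, A, b, L, y):
    """Scalar linear-Gaussian model:
        p(x)     = N(x | mu, 1/lam)
        p(y | x) = N(y | A*x + b, 1/L)
    Returns (marg_mean, marg_var, post_mean, post_var) for p(y) and p(x | y).
    """
    # Marginal p(y): noise variance plus prior variance pushed through A.
    marg_mean = A * mu + b
    marg_var = 1.0 / L + A * A / lam
    # Posterior p(x | y): precisions add, means are precision-weighted.
    post_var = 1.0 / (lam + A * L * A)
    post_mean = post_var * (A * L * (y - b) + lam * mu)
    return marg_mean, marg_var, post_mean, post_var


# Hypothetical example values
marg_mean, marg_var, post_mean, post_var = linear_gaussian(
    mu=0.0, lam=1.0, A=2.0, b=1.0, L=4.0, y=3.0
)
```

The posterior agrees with what the partitioned-conditional formulas give when applied to the joint distribution of $(x, y)$, which is a useful sanity check.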

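- 11. Maximum Likelihood for the Gaussian (1) Given i.i.d. data $X = (x_1, \ldots, x_N)^T$, the log likelihood function is given by $\ln p(X \mid \mu, \Sigma) = -\frac{ND}{2}\ln(2\pi) - \frac{N}{2}\ln|\Sigma| - \frac{1}{2}\sum_{n=1}^{N}(x_n - \mu)^T \Sigma^{-1}(x_n - \mu)$. Sufficient statistics: $\sum_{n=1}^{N} x_n$ and $\sum_{n=1}^{N} x_n x_n^T$.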

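- 12. Maximum Likelihood for the Gaussian (2) Set the derivative of the log likelihood function to zero, $\frac{\partial}{\partial \mu}\ln p(X \mid \mu, \Sigma) = \sum_{n=1}^{N}\Sigma^{-1}(x_n - \mu) = 0$, and solve to obtain $\mu_{ML} = \frac{1}{N}\sum_{n=1}^{N} x_n$. Similarly, $\Sigma_{ML} = \frac{1}{N}\sum_{n=1}^{N}(x_n - \mu_{ML})(x_n - \mu_{ML})^T$.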

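- 13. Maximum Likelihood for the Gaussian (3) Under the true distribution, $\mathbb{E}[\mu_{ML}] = \mu$ and $\mathbb{E}[\Sigma_{ML}] = \frac{N-1}{N}\Sigma$. Hence define $\tilde{\Sigma} = \frac{1}{N-1}\sum_{n=1}^{N}(x_n - \mu_{ML})(x_n - \mu_{ML})^T$. When the dataset is small, the bias in the ML covariance estimate is larger.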

- 14. Maximum Likelihood for the Gaussian (4)
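
To make the bias of the ML variance concrete, here is a small sketch for the univariate case (the slides treat the multivariate case; the scalar restriction, the function name `gaussian_ml`, and the toy data are assumptions made to keep the example dependency-free).

```python
def gaussian_ml(xs):
    """Maximum likelihood estimates for a univariate Gaussian.

    Returns (mu_ml, var_ml, var_unbiased). Requires len(xs) >= 2.
    """
    n = len(xs)
    mu_ml = sum(xs) / n
    # ML variance divides by N and underestimates the true variance
    # by a factor (N - 1) / N on average.
    var_ml = sum((x - mu_ml) ** 2 for x in xs) / n
    # Rescaling by N / (N - 1) removes the bias.
    var_unbiased = var_ml * n / (n - 1)
    return mu_ml, var_ml, var_unbiased


# Hypothetical toy data
mu_ml, var_ml, var_unbiased = gaussian_ml([1.0, 2.0, 3.0, 4.0])
```

With only four points the two variance estimates differ by a factor of 4/3; the gap shrinks as N grows.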

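- 15. Sequential Estimation. Contribution of the Nth data point, $x_N$: $\mu_{ML}^{(N)} = \mu_{ML}^{(N-1)} + \frac{1}{N}\left(x_N - \mu_{ML}^{(N-1)}\right)$, where $\mu_{ML}^{(N-1)}$ is the old estimate, $\frac{1}{N}$ is the correction weight, and $x_N - \mu_{ML}^{(N-1)}$ is the correction given $x_N$.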
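
The sequential update can be sketched in a few lines: each new point nudges the old estimate by a correction weighted by 1/N. The function name `sequential_mean` and the toy data are illustrative assumptions.

```python
def sequential_mean(xs):
    """Running ML estimate of the mean, one data point at a time."""
    mu = 0.0
    for n, x in enumerate(xs, start=1):
        # new estimate = old estimate + (1/N) * (x_N - old estimate)
        mu += (x - mu) / n
    return mu


# Hypothetical toy data
running = sequential_mean([1.0, 2.0, 3.0, 4.0])
batch = sum([1.0, 2.0, 3.0, 4.0]) / 4
```

The running estimate matches the batch mean after every point has been seen, but it never needs to store the data.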

- 16. We all know Newton's method

- 17. The Robbins-Monro Algorithm. Assume we are given samples from p(z, μ), one at a time.

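- 18. The Robbins-Monro Algorithm. Successive estimates $\mu^{(N)}$ are then given by $\mu^{(N)} = \mu^{(N-1)} - a_{N-1}\, z(\mu^{(N-1)})$, where $z(\mu^{(N-1)})$ is an observation of z when μ takes the value $\mu^{(N-1)}$. Conditions on $a_N$ for convergence: $\lim_{N\to\infty} a_N = 0$, $\sum_{N=1}^{\infty} a_N = \infty$, $\sum_{N=1}^{\infty} a_N^2 < \infty$.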

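- 19. Robbins-Monro for Maximum Likelihood. Example: estimate the mean of a Gaussian. Here $z = -\frac{\partial}{\partial \mu_{ML}} \ln p(x \mid \mu_{ML}, \sigma^2) = -\frac{1}{\sigma^2}(x - \mu_{ML})$, so the distribution of z is Gaussian with mean $-(\mu - \mu_{ML})/\sigma^2$. For the Robbins-Monro update equation, $a_N = \sigma^2/N$.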

- 20. Go back to the online update of $\mu_{ML}^{(N)}$
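
A minimal sketch of Robbins-Monro applied to the Gaussian mean, assuming the sign convention in which z is the negative gradient of the log likelihood and the step size for the n-th observation is σ²/n. The function name `robbins_monro_mean`, the seed, and the synthetic data are all assumptions for illustration.

```python
import random


def robbins_monro_mean(samples, sigma2):
    """Robbins-Monro root finding for the ML mean of a Gaussian
    with known variance sigma2."""
    mu = 0.0
    for n, x in enumerate(samples, start=1):
        # Noisy observation of the regression function at the current mu:
        # negative derivative of ln N(x | mu, sigma2) with respect to mu.
        z = -(x - mu) / sigma2
        # Step size sigma2 / n satisfies the convergence conditions.
        a = sigma2 / n
        mu = mu - a * z
    return mu


random.seed(0)
data = [random.gauss(1.5, 2.0) for _ in range(1000)]  # synthetic data
estimate = robbins_monro_mean(data, sigma2=4.0)
batch_mean = sum(data) / len(data)
```

With this step size the σ² factors cancel and each step reduces to the sequential update of the mean, so `estimate` agrees with `batch_mean` up to floating-point rounding.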

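- 21. Bayesian Inference for the Gaussian (1) Assume $\sigma^2$ is known. Given i.i.d. data $x = \{x_1, \ldots, x_N\}$, the likelihood function for μ is given by $p(x \mid \mu) = \prod_{n=1}^{N} p(x_n \mid \mu) = \frac{1}{(2\pi\sigma^2)^{N/2}} \exp\left\{-\frac{1}{2\sigma^2}\sum_{n=1}^{N}(x_n - \mu)^2\right\}$.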

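- 22. Bayesian Inference for the Gaussian (2) Combined with a Gaussian prior over μ, $p(\mu) = \mathcal{N}(\mu \mid \mu_0, \sigma_0^2)$, this gives the posterior $p(\mu \mid x) \propto p(x \mid \mu)\,p(\mu)$. Completing the square over μ, we see that $p(\mu \mid x) = \mathcal{N}(\mu \mid \mu_N, \sigma_N^2)$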

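- 23. Bayesian Inference for the Gaussian (3) … where $\mu_N = \frac{\sigma^2}{N\sigma_0^2 + \sigma^2}\mu_0 + \frac{N\sigma_0^2}{N\sigma_0^2 + \sigma^2}\mu_{ML}$ and $\frac{1}{\sigma_N^2} = \frac{1}{\sigma_0^2} + \frac{N}{\sigma^2}$. Note: for $N = 0$, $\mu_N = \mu_0$ and $\sigma_N^2 = \sigma_0^2$; as $N \to \infty$, $\mu_N \to \mu_{ML}$ and $\sigma_N^2 \to 0$.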

- 24. Different from Kalman Filter

- 25. Bayesian Inference for the Gaussian (4) Example: for N = 0, 1, 2 and 10.
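
The posterior over μ with known variance can be sketched as follows. The function name `posterior_mean_known_variance`, the prior settings, and the toy data are assumptions chosen for illustration.

```python
def posterior_mean_known_variance(xs, sigma2, mu0, sigma0_2):
    """Posterior N(mu | mu_n, var_n) for the mean of a Gaussian with
    known variance sigma2 and prior N(mu | mu0, sigma0_2)."""
    n = len(xs)
    mu_ml = sum(xs) / n if n else 0.0  # unused when n == 0
    denom = n * sigma0_2 + sigma2
    # Posterior mean: a compromise between the prior mean and the ML mean.
    mu_n = sigma2 / denom * mu0 + n * sigma0_2 / denom * mu_ml
    # Posterior precision: prior precision plus N times data precision.
    var_n = 1.0 / (1.0 / sigma0_2 + n / sigma2)
    return mu_n, var_n


# Hypothetical example values
prior_only = posterior_mean_known_variance([], 1.0, 0.0, 1.0)
mu_n, var_n = posterior_mean_known_variance([1.0, 2.0, 3.0], 1.0, 0.0, 1.0)
```

With no data the function returns the prior unchanged; as more points arrive the posterior mean moves toward the ML mean and the variance shrinks.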

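- 26. Bayesian Inference for the Gaussian (5) Now assume μ is known. The likelihood function for $\lambda = 1/\sigma^2$ is given by $p(x \mid \lambda) = \prod_{n=1}^{N} \mathcal{N}(x_n \mid \mu, \lambda^{-1}) \propto \lambda^{N/2} \exp\left\{-\frac{\lambda}{2}\sum_{n=1}^{N}(x_n - \mu)^2\right\}$. This has a Gamma shape as a function of λ.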

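- 27. Bayesian Inference for the Gaussian (6) The Gamma distribution: $\mathrm{Gam}(\lambda \mid a, b) = \frac{1}{\Gamma(a)} b^a \lambda^{a-1} \exp(-b\lambda)$, with $\mathbb{E}[\lambda] = a/b$ and $\mathrm{var}[\lambda] = a/b^2$.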

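- 28. Bayesian Inference for the Gaussian (7) Now we combine a Gamma prior, $\mathrm{Gam}(\lambda \mid a_0, b_0)$, with the likelihood function for λ to obtain $p(\lambda \mid x) \propto \lambda^{a_0 - 1}\lambda^{N/2} \exp\left\{-b_0\lambda - \frac{\lambda}{2}\sum_{n=1}^{N}(x_n - \mu)^2\right\}$, which we recognize as $\mathrm{Gam}(\lambda \mid a_N, b_N)$ with $a_N = a_0 + \frac{N}{2}$ and $b_N = b_0 + \frac{1}{2}\sum_{n=1}^{N}(x_n - \mu)^2 = b_0 + \frac{N}{2}\sigma_{ML}^2$.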
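
The conjugate Gamma update for the precision is a two-line computation. In this sketch the function name `gamma_posterior`, the prior hyperparameters, and the toy data are illustrative assumptions.

```python
def gamma_posterior(xs, mu, a0, b0):
    """Posterior Gam(lambda | a_n, b_n) for the precision of a Gaussian
    with known mean mu and prior Gam(lambda | a0, b0)."""
    n = len(xs)
    # Each observation adds 1/2 to the shape ...
    a_n = a0 + n / 2.0
    # ... and half its squared deviation from the mean to the rate.
    b_n = b0 + 0.5 * sum((x - mu) ** 2 for x in xs)
    return a_n, b_n


# Hypothetical example values
a_n, b_n = gamma_posterior([1.0, 2.0, 3.0, 4.0], mu=2.5, a0=1.0, b0=1.0)
posterior_mean_precision = a_n / b_n  # E[lambda] = a / b
```

Reading the update this way, the prior behaves like $2a_0$ earlier pseudo-observations with total squared deviation $2b_0$.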

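- 29. Bayesian Inference for the Gaussian (8) If both μ and λ are unknown, the joint likelihood function is given by $p(x \mid \mu, \lambda) = \prod_{n=1}^{N}\left(\frac{\lambda}{2\pi}\right)^{1/2} \exp\left\{-\frac{\lambda}{2}(x_n - \mu)^2\right\} \propto \left[\lambda^{1/2}\exp\left(-\frac{\lambda\mu^2}{2}\right)\right]^N \exp\left\{\lambda\mu\sum_{n=1}^{N} x_n - \frac{\lambda}{2}\sum_{n=1}^{N} x_n^2\right\}$. We need a prior with the same functional dependence on μ and λ.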

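- 30. Bayesian Inference for the Gaussian (10) The Gaussian-gamma distribution: $p(\mu, \lambda) = \mathcal{N}(\mu \mid \mu_0, (\beta\lambda)^{-1})\,\mathrm{Gam}(\lambda \mid a, b)$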

- 31. Bayesian Inference for the Gaussian (11) The Gaussian-gamma distribution. In Bayesian inference, this density is updated based on the data.

- 32. Bayesian Inference for the Gaussian (11) Multivariate conjugate priors • μ unknown, Λ known: p(μ) Gaussian. • Λ unknown, μ known: p(Λ) Wishart. • μ and Λ unknown: p(μ, Λ) Gaussian-Wishart.

- 35. Student’s t-Distribution Robustness to outliers: Gaussian vs t-distribution.

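- 36. Student’s t-Distribution. $p(x \mid \mu, a, b) = \int_0^\infty \mathcal{N}(x \mid \mu, \tau^{-1})\,\mathrm{Gam}(\tau \mid a, b)\,d\tau = \mathrm{St}(x \mid \mu, \lambda, \nu) = \frac{\Gamma(\nu/2 + 1/2)}{\Gamma(\nu/2)}\left(\frac{\lambda}{\pi\nu}\right)^{1/2}\left[1 + \frac{\lambda(x - \mu)^2}{\nu}\right]^{-\nu/2 - 1/2}$, where $\lambda = a/b$ and $\nu = 2a$. Infinite mixture of Gaussians.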

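- 37. Student’s t-Distribution (1) The D-variate case: $\mathrm{St}(x \mid \mu, \Lambda, \nu) = \frac{\Gamma(D/2 + \nu/2)}{\Gamma(\nu/2)}\frac{|\Lambda|^{1/2}}{(\pi\nu)^{D/2}}\left[1 + \frac{\Delta^2}{\nu}\right]^{-D/2 - \nu/2}$, where $\Delta^2 = (x - \mu)^T \Lambda (x - \mu)$. Properties: $\mathbb{E}[x] = \mu$ if $\nu > 1$; $\mathrm{cov}[x] = \frac{\nu}{\nu - 2}\Lambda^{-1}$ if $\nu > 2$; $\mathrm{mode}[x] = \mu$.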

- 38. Student’s t-Distribution (2) If we use the inverse Wishart distribution as the prior, we also get a Student’s t-distribution. Source: "Gibbs sampling for fitting finite and infinite Gaussian mixture models" by Herman Kamper.
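
The robustness of the t-distribution comes from its heavy tails, which can be checked numerically with the univariate density St(x | μ, λ, ν). The sketch below uses only the standard library; the function names `student_t_pdf` and `gaussian_pdf` and the evaluation points are assumptions made for illustration.

```python
import math


def student_t_pdf(x, mu, lam, nu):
    """Univariate Student's t density St(x | mu, lam, nu)."""
    # Work in log space so that large nu does not overflow the Gamma function.
    log_norm = (math.lgamma(nu / 2 + 0.5) - math.lgamma(nu / 2)
                + 0.5 * math.log(lam / (math.pi * nu)))
    log_kernel = -(nu / 2 + 0.5) * math.log1p(lam * (x - mu) ** 2 / nu)
    return math.exp(log_norm + log_kernel)


def gaussian_pdf(x, mu, lam):
    """Univariate Gaussian density with mean mu and precision lam."""
    return math.sqrt(lam / (2 * math.pi)) * math.exp(-0.5 * lam * (x - mu) ** 2)


# nu = 1 gives the Cauchy distribution; very large nu approaches a Gaussian.
cauchy_peak = student_t_pdf(0.0, 0.0, 1.0, 1.0)
t_tail = student_t_pdf(5.0, 0.0, 1.0, 1.0)
g_tail = gaussian_pdf(5.0, 0.0, 1.0)
near_gauss = student_t_pdf(1.0, 0.0, 1.0, 1e6)
```

Five standard deviations from the mean, the ν = 1 density is several orders of magnitude larger than the Gaussian density, so a single outlier costs far less log likelihood under the t-distribution.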

- 39. An application on IGMM (1) How many Gaussian distributions are needed to approximate the data on the right?

- 40. An application on IGMM (2) Data Use 6 Gaussians! Let the data speak for itself.

- 41. An application on IGMM (3)

- 42. An application on IGMM (4)

- 43. Thanks

Editor's Notes

- The likelihood function depends on the data set only through the two quantities $\sum_n x_n$ and $\sum_n x_n x_n^T$, the sufficient statistics.

- Want a better explanation.

- The prior not only encodes prior knowledge; it also acts as a “memory” in the inference process. For example, suppose a professor publishes 0 papers this week and I publish 1. Looking at this week alone, I seem to be the better researcher, but nobody would reason that way. You have to consider the history: perhaps the professor has already published 1000 papers while I have only 2. What we did before is the prior, and it helps us reach a better judgement. However, if I keep publishing and the professor stops, then by the time I have written 2000 papers of the same quality it is more likely that I have surpassed him. In this process, the prior acts as a “memory”.