The Duet model


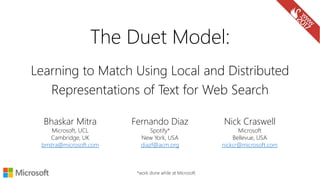

This document presents the Duet model for document ranking. The Duet model combines local and distributed representations of text to perform both exact and inexact matching of queries to documents. The local model operates on a term interaction matrix to model exact matches, while the distributed model projects text into an embedding space for inexact matching. Results show that the Duet model, which combines these approaches, outperforms models using only local or only distributed representations. The local model is more effective for queries containing rare terms, while the distributed model handles queries that need semantic matching and benefits from training on large datasets.


- 1. The Duet Model: Learning to Match Using Local and Distributed Representations of Text for Web Search
  - Nick Craswell, Microsoft, Bellevue, USA, nickcr@microsoft.com
  - Fernando Diaz, Spotify\*, New York, USA, diazf@acm.org
  - Bhaskar Mitra, Microsoft, UCL, Cambridge, UK, bmitra@microsoft.com
  - \*Work done while at Microsoft

- 2. The document ranking task
  - Given a query, rank documents according to relevance
  - The query text has few terms
  - The document representation can be long (e.g., body text) or short (e.g., title)
  - Figure labels: query, search engine w/ an index of retrievable items, ranked results

- 3. This paper is focused on ranking documents based on their long body text

- 4. Many DNN models for short text ranking: (Huang et al., 2013), (Severyn and Moschitti, 2015), (Shen et al., 2014), (Palangi et al., 2015), (Hu et al., 2014), (Tai et al., 2015)

- 5. But few for long document ranking… (Guo et al., 2016), (Salakhutdinov and Hinton, 2009)

- 6. Challenges in short vs. long text retrieval
  - Short-text
    - Vocabulary mismatch is a more serious problem
  - Long-text
    - Documents contain a mixture of many topics
    - Matches in different parts of the document are non-uniformly important
    - Term proximity is important

- 7. The “black swans” of Information Retrieval
  - The term black swan originally referred to impossible events. In 1697, Dutch explorers encountered black swans for the very first time in western Australia. Since then, the term has been used to refer to surprisingly rare events.
  - In IR, many query terms and intents are never observed in the training data
  - Exact matching is effective in making the IR model robust to rare events

- 8. Desiderata of document ranking
  - Exact matching
    - Important if the query term is rare / fresh
    - Frequency and positions of matches are good indicators of relevance
    - Term proximity is important
  - Inexact matching
    - Synonymy relationships: united states president ↔ Obama
    - Evidence for document aboutness: documents about Australia are likely to contain related terms like Sydney and koala
    - Proximity and position are important

- 9. Different text representations for matching
  - Local representation
    - Terms are considered distinct entities
    - Term representation is local (one-hot vectors)
    - Matching is exact (term-level)
  - Distributed representation
    - Represent text as dense vectors (embeddings)
    - Inexact matching in the embedding space
  - Figure panels: local (one-hot) representation, distributed representation

- 10. A tale of two queries
  - “pekarovic land company”
    - Hard to learn a good representation for the rare term pekarovic
    - But easy to estimate relevance based on patterns of exact matches
    - Proposal: learn a neural model to estimate relevance from patterns of exact matches
  - “what channel are the seahawks on today”
    - Target document likely contains ESPN or sky sports instead of channel
    - An embedding model can associate ESPN in the document with channel in the query
    - Proposal: learn embeddings of text and match query with document in the embedding space
  - The Duet architecture: use a neural network to model both functions and learn their parameters jointly

- 11. The Duet architecture
  - Linear combination of two models trained jointly on labelled query-document pairs
  - Local model operates on a lexical interaction matrix
  - Distributed model projects n-graph vectors of text into an embedding space and then estimates the match
  - Figure, local model: query text and doc text → query term vector and doc term vector → interaction matrix → fully connected layers for matching
  - Figure, distributed model: query text and doc text → query embedding and doc embedding → Hadamard product → fully connected layers for matching
  - Figure, output: sum of the two models

- 12. Local model

- 13. Local model: term interaction matrix

  $$X_{i,j} = \begin{cases} 1, & \text{if } q_i = d_j \\ 0, & \text{otherwise} \end{cases}$$

  In relevant documents:
  - Many matches, typically clustered
  - Matches localized early in the document
  - Matches for all query terms
  - In-order (phrasal) matches

- 14. Local model: estimating relevance
  - Convolve using a window of size $n_d \times 1$
  - Each window instance compares a query term w/ the whole document
  - Fully connected layers aggregate evidence across query terms and can model phrasal matches
  - Figure axis: ← document words →
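As an illustration of the interaction matrix on this slide, here is a minimal sketch in Python. The function name `interaction_matrix`, the whitespace tokenisation, and the example document are assumptions made for illustration; they are not taken from the slides.

```python
def interaction_matrix(query_terms, doc_terms):
    """Return X with X[i][j] = 1 if query term i equals document term j, else 0."""
    return [[1 if q == d else 0 for d in doc_terms] for q in query_terms]


# Assumed toy inputs: lowercase text split on whitespace.
query = "united states president".split()
doc = "the president of the united states".split()

X = interaction_matrix(query, doc)

# One row per query term, one column per document position.
for term, row in zip(query, X):
    print(f"{term:>10} {row}")
```

Each row shows where one query term matches exactly in the document, which is the kind of pattern (clustered, early, in-order matches) the slide describes.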

- 16. Distributed model: input representation
  - dogs → [ d, o, g, s, #d, do, og, gs, s#, #do, dog, ogs, gs#, #dog, dogs, ogs#, #dogs, dogs# ]
  - Only the 2K most popular n-graphs are considered for encoding
  - Figure: the words of the example text "dogs have owners cats have staff" are n-graph encoded and concatenated into a [words x channels] input, with channels = 2K

- 17. Distributed model: estimating relevance
  - Convolve over query and document terms
  - Match query with moving windows over document
  - Learn text embeddings specifically for the task
  - Matching happens in embedding space
  - Figure labels: query, document, convolution, pooling, query embedding, Hadamard product, fully connected
  - \* Network architecture slightly simplified for visualization; refer to the paper for exact details
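The n-graph expansion of "dogs" on this slide can be reproduced with a short Python sketch. The maximum length of 5 and the `#` word-boundary marker are inferred from the slide's example; the function names, the toy vocabulary, and the use of counts (rather than binary indicators) are assumptions for illustration only.

```python
def char_ngraphs(word, max_n=5):
    """List character n-graphs of a word, n = 1..max_n, with '#' as boundary marker."""
    marked = f"#{word}#"
    grams = []
    for n in range(1, max_n + 1):
        for start in range(len(marked) - n + 1):
            gram = marked[start:start + n]
            if gram != "#":  # a lone boundary marker carries no information
                grams.append(gram)
    return grams


def encode(words, vocab):
    """Encode words as a [words x channels] matrix of n-graph counts over vocab."""
    index = {gram: k for k, gram in enumerate(vocab)}
    rows = []
    for word in words:
        row = [0] * len(vocab)
        for gram in char_ngraphs(word):
            if gram in index:  # n-graphs outside the vocabulary are ignored
                row[index[gram]] += 1
        rows.append(row)
    return rows


print(char_ngraphs("dogs"))

# Hypothetical 4-entry vocabulary standing in for the 2K most popular n-graphs.
toy_vocab = ["s#", "do", "at", "zz"]
print(encode(["dogs", "cats"], toy_vocab))
```

In this sketch each word becomes one row and each vocabulary n-graph one channel, mirroring the [words x channels] layout named on the slide.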
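The slide names the Hadamard (element-wise) product as the operation that matches the query embedding against moving windows over the document. A minimal Python sketch of that single step follows; the embedding values, the dimensionality of 3, and the function names are made up for illustration, and the convolution, pooling, and fully connected layers of the actual network are not modelled here.

```python
def hadamard(u, v):
    """Element-wise product of two equal-length vectors."""
    if len(u) != len(v):
        raise ValueError("vectors must have the same length")
    return [a * b for a, b in zip(u, v)]


def match_features(query_emb, doc_window_embs):
    """Combine one query embedding with the embedding of each document window."""
    return [hadamard(query_emb, window) for window in doc_window_embs]


# Hypothetical embeddings: one query vector and two document-window vectors.
query_emb = [0.5, -1.0, 2.0]
doc_window_embs = [[1.0, 2.0, 0.5], [2.0, 1.0, -1.0]]

features = match_features(query_emb, doc_window_embs)
print(features)
```

In the architecture described on the slides, features like these would then be passed to fully connected layers that estimate the match.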

- 18. Putting the two models together…

- 19. The Duet model
  - Training sample: $Q, D^+, D_1^-, D_2^-, D_3^-, D_4^-$
  - $D^+$ = document rated Excellent or Good
  - $D^-$ = document rated 2 ratings worse than $D^+$
  - Optimize cross-entropy loss
  - Implemented using CNTK (GitHub link)
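The slide states only that a cross-entropy loss is optimized over samples with one positive and four negative documents. A plausible form, sketched below in Python, is a softmax over the five model scores with the positive document as the target class. That softmax form, the function name, and the example scores are assumptions, not details confirmed by the slides.

```python
import math


def softmax_cross_entropy(pos_score, neg_scores):
    """Negative log-probability of the positive document under a softmax over all scores."""
    scores = [pos_score] + list(neg_scores)
    # Subtract the maximum before exponentiating for numerical stability.
    top = max(scores)
    log_sum_exp = top + math.log(sum(math.exp(s - top) for s in scores))
    return log_sum_exp - pos_score


# Hypothetical model scores for (Q, D+) and for (Q, D-) with four negatives.
loss_uninformed = softmax_cross_entropy(0.0, [0.0, 0.0, 0.0, 0.0])
loss_good = softmax_cross_entropy(3.0, [0.0, 0.0, 0.0, 0.0])

print(loss_uninformed, loss_good)
```

When all five documents receive the same score the loss equals log 5, and it falls as the positive document is scored above the negatives.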

- 20. Data
  - Need large-scale training data (labels or clicks)
  - We use Bing human-labelled data for both train and test

- 21. Results. Key finding: Duet performs significantly better than local and distributed models trained individually

- 22. Random negatives vs. judged negatives. Key finding: training w/ judged bad documents as negatives is significantly better than w/ random negatives

- 23. Local vs. distributed model. Key finding: the local and distributed models perform better on different segments, but the combination is always better

- 24. Effect of training data volume. Key finding: a large quantity of training data is necessary for learning good representations, but is less impactful for training the local model

- 25. Term importance: local model
  - Only query terms have an impact
  - Earlier occurrences have a bigger impact
  - Figure: visualizing the impact of dropping terms on the model score, for the query united states president

- 26. Term importance: distributed model
  - Non-query terms (e.g., Obama and federal) have a positive impact on the score
  - Common words like ‘the’ and ‘of’ are probably good indicators of well-formedness of content
  - Figure: visualizing the impact of dropping terms on the model score, for the query united states president

- 27. Types of models. If we classify models by query-level performance, there is a clear clustering of lexical (local) and semantic (distributed) models

- 28. Duet on other IR tasks
  - Promising early results on TREC 2017 Complex Answer Retrieval (TREC-CAR)
  - Duet performs significantly better when trained on large data (~32 million samples)
  - (PAPER UNDER REVIEW)

- 29. Summary
  - Both exact and inexact matching are important for IR
  - Deep neural networks can be used to model both types of matching
  - The local model is more effective for queries containing rare terms
  - The distributed model benefits from training on large datasets
  - Combine the local and distributed models to achieve state-of-the-art performance
  - Get the model: https://github.com/bmitra-msft/NDRM/blob/master/notebooks/Duet.ipynb