The document discusses Asymmetric Logical Unit Access (ALUA) and its role in managing multi-path configurations in storage systems, particularly focusing on how it allows different access characteristics for various paths to a logical unit. It explains the complexities introduced by multiple ports in storage devices, highlights the necessity of multipathing for high availability and performance, and compares active/active versus active/passive storage systems in relation to ALUA. Additionally, it outlines standard command interfaces, the implementation and support of ALUA across various operating systems, and provides details on device mapper multipathing (dm-multipath) in Red Hat environments.

![Why multipathing & ALUA needed

When a device (LUN) is presented to the host through multiple ports (multiple paths), it adds complexity at the OS level. Getting the device to show up properly as a single (pseudo) device is one thing; having the OS understand its port characteristics is virtually impossible without breaking into the operating system code and writing a module that sits in the storage stack to tap into these features. This led to the development of multipathing, which provides high availability, performance and fault tolerance on the front-end (host) side.

On the back-end (storage) side, the same characteristics are provided by Active/Active storage arrays. An A/A storage array exposes multiple target ports to the host; in other words, the 'host' can access the 'unit' of storage from any of the ports available on the A/A storage array. That sounds great, but the host still has to determine which ports are optimized (direct path to the node owning the LUN) and which ports are non-optimized (indirect path to the node owning the LUN) in order to use the optimum storage access paths to the LUN. This led to the development of hardware-based device-specific modules and, subsequently, to the SCSI standard called 'ALUA'.

Overview of Mainstream Multipathing Software](https://image.slidesharecdn.com/alua-140923140830-phpapp01/75/ALUA-2-2048.jpg)

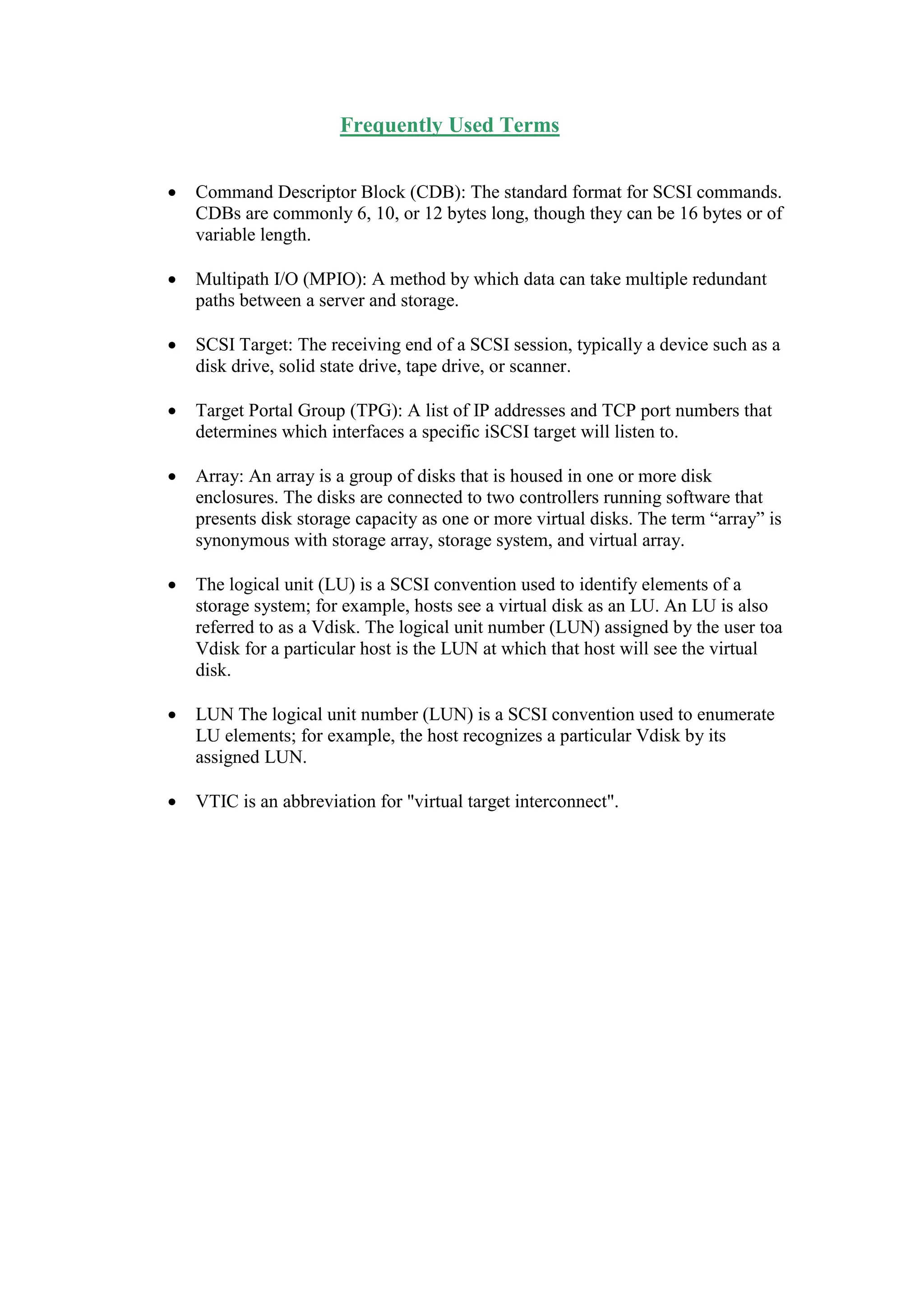

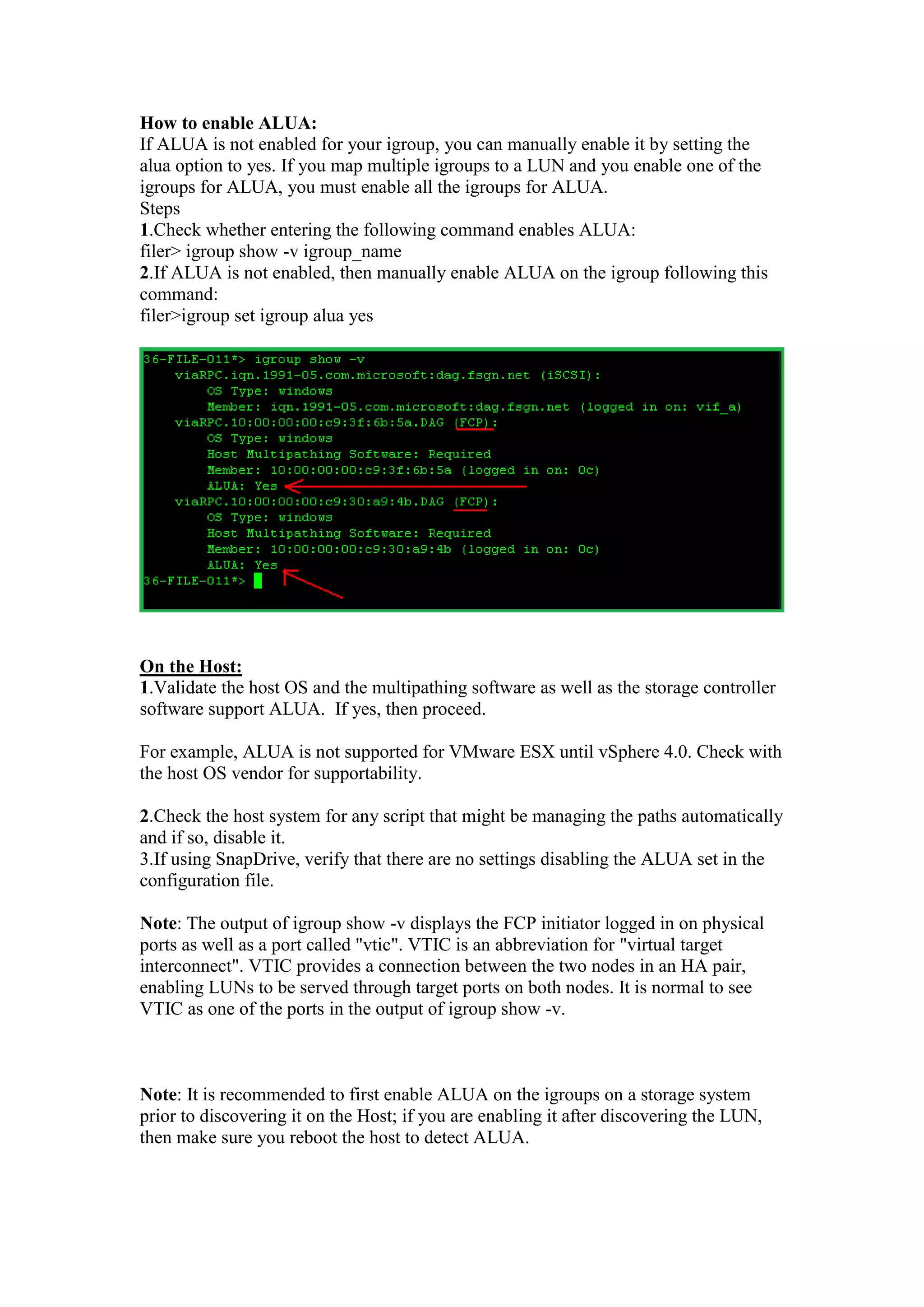

![The following is my own illustration of ALUA, for learning purposes only.

Figure 1

I/O for Logical Unit 'A' going directly to Node 'A', which owns the LUN.

TPG: Target Port Group

ALUA allows you to see any given LUN via both storage processors as active, but only one of these storage processors “owns” the LUN, and because of that there are optimized and non-optimized paths. The optimized paths are the ones with a direct path to the storage processor (Node A) that owns the LUN. The non-optimized paths connect to the storage processor that does not own the LUN and reach the owning storage processor indirectly, via an interconnect bus.](https://image.slidesharecdn.com/alua-140923140830-phpapp01/75/ALUA-4-2048.jpg)

![Which storage array types are candidates for ALUA?

Active/Active storage systems are the ideal candidates for ALUA. That means ALUA does not apply to Active/Passive storage systems. You might ask, why is that?

Let's look at the difference between Active/Active and Active/Passive storage systems.

Active/Passive Storage Systems:

With Active/Passive storage systems, one controller is assigned to a LUN as its primary controller (the owner of the LUN) and handles all the I/O requests to it. The other controller (or multiple other controllers, if available) acts as a standby controller. The standby controller of a LUN issues I/O requests to it only if the primary controller has failed. The key phrase here is 'primary controller has failed': at any given time only one controller is serving the LUN, so the question of a preferred controller does not arise.

Active/Active Storage Systems:

With Active/Active storage systems, multiple controllers can issue I/O requests to an individual LUN concurrently. Both controllers are active; there is no standby concept. In an Active/Active setup, each controller can have multiple ports. I/O requests reaching the storage system through ports of the preferred controller of a LUN are sent directly to the LUN. I/O requests arriving at the non-preferred controller of a LUN are first forwarded to the preferred controller of the LUN.

Therefore, a storage device that implements ALUA must have at least two target port groups: the first is the direct/optimized TPG [Controller A] and the second is the indirect/non-optimized TPG [Controller B].

Active/Active Storage Systems further divide into two categories:

Asymmetrical Active/Active (AAA) storage systems:

With these types of arrays, one controller is assigned to each LUN as a preferred controller. Each controller can have multiple ports; our example above showed 2 ports per controller. I/O requests reaching the storage system through ports of the preferred controller of a LUN are sent directly to the LUN. I/O requests arriving at the non-preferred controller of a LUN are first forwarded to the preferred controller of the LUN. These arrays are also called Asymmetric Logical Unit Access (ALUA) compliant devices.

Multipathing software can query ALUA-compliant arrays so that it load balances only between paths connected to the preferred controller and uses the paths to the non-preferred controller for automatic path failover if all of the paths to the preferred controller fail.](https://image.slidesharecdn.com/alua-140923140830-phpapp01/75/ALUA-5-2048.jpg)
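The path selection rule described above can be sketched in a few lines of Python. This is only an illustration of the idea, not how any particular multipathing driver is implemented; the path names (`A1`, `B1`, ...) and the dictionary layout are made up for the example.

```python
from itertools import cycle

def usable_paths(paths):
    """Pick the paths to load balance over.

    Optimized paths (to the preferred controller) are used when at least one
    is up; otherwise we fail over to the non-optimized paths.
    """
    up = [p for p in paths if p["up"]]
    optimized = [p for p in up if p["state"] == "optimized"]
    if optimized:
        return optimized
    return [p for p in up if p["state"] == "non-optimized"]

def names(paths):
    return [p["name"] for p in paths]

# Hypothetical layout: two ports on controller A (owner), two on controller B.
paths = [
    {"name": "A1", "state": "optimized", "up": True},
    {"name": "A2", "state": "optimized", "up": True},
    {"name": "B1", "state": "non-optimized", "up": True},
    {"name": "B2", "state": "non-optimized", "up": True},
]

# Normal operation: round-robin over the optimized paths only.
normal = names(usable_paths(paths))
rr = cycle(normal)
first_four = [next(rr) for _ in range(4)]

# Simulate losing every path to the preferred controller.
for p in paths:
    if p["state"] == "optimized":
        p["up"] = False
failover = names(usable_paths(paths))

print(normal, first_four, failover)
```

In normal operation only `A1` and `A2` carry I/O; `B1` and `B2` are used only once both optimized paths are down.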

![ Symmetrical Active/Active (SAA) storage systems:

These types of arrays do not have a primary or preferred controller per LUN. I/O

requests can be issued over all paths mapped to a LUN. Some models of the HP

StorageWorks XP Disk Array family are symmetrical active/active arrays.

Device specific Multi-pathing solutions available from different vendors:

1. LVM PVlinks

2. PVLinks

3. Symantec DMP

4. HP StorageWorks SecurePath®

5. EMC PowerPath®

Microsoft Windows Server 2008 introduced native MPIO with a feature that uses ALUA for path selection. Hence, if you are running Windows Server 2008, you don't have to worry about installing a vendor-specific DSM for Active/Active storage systems that support ALUA; native MPIO can handle this for you. This has been made possible by the standardization of ALUA in the SCSI-3 specification.

Similarly, many OS vendors now provide ALUA support in their native multipathing software. The following is a rough timeline of when various vendors adopted ALUA.

ALUA adoption timeline

Note: With ALUA standardization, mainstream operating systems with built-in native multipathing now support ALUA natively on Active/Active arrays, without having to install hardware-vendor-provided device-specific plug-ins.](https://image.slidesharecdn.com/alua-140923140830-phpapp01/75/ALUA-6-2048.jpg)

![Storage Vendor Plug-ins No longer required

Asymmetric Logical Unit Access (ALUA) does not need a plug-in to work with the native multipathing that comes out of the box with mainstream operating systems. As ALUA support has been widely adopted and delivered in the host-side OS, no special storage plug-ins are required, which means volume-manager-based and array-based plug-ins are becoming less dominant, or unneeded, for Active/Active storage arrays.

ALUA devices can operate in two modes: implicit and/or explicit.

Implicit ALUA: With the implicit ALUA style, the host multipathing software can monitor the path states but cannot change them, either automatically or manually. Of the active paths, a path may be specified as preferred (optimized) or as non-preferred (non-optimized). If there are active preferred paths, only these paths receive commands, and the commands are load balanced evenly across them. If there are no active preferred paths, the active non-preferred paths are used in a round-robin fashion. If there are no active non-preferred paths either, the LUN cannot be accessed until the controller activates its standby paths.

Explicit ALUA: The device allows the host to set the state of a target port group using the SET TARGET PORT GROUPS command. In implicit ALUA, by contrast, the target device itself manages the device's target port group states.

1st Stage: Discovery

TARGET PORT GROUPS SUPPORT [TPGS]:

SCSI logical units with asymmetric logical unit access may be identified using the

INQUIRY command. The value in the target port group support (TPGS) field

indicates whether or not the logical unit supports asymmetric logical unit access and if

so whether implicit or explicit management is supported. The asymmetric access

states supported by a logical unit may be determined by the REPORT TARGET

PORT GROUPS command parameter data.

2nd Stage: Report Access States

REPORT TARGET PORT GROUPS [RTPG]:

The REPORT TARGET PORT GROUPS command requests that the device server

send target port group information to the application client. This command shall be

supported by logical units that report in the standard INQUIRY data that they support

asymmetric logical unit access (i.e., return a non-zero value in the TPGS field).](https://image.slidesharecdn.com/alua-140923140830-phpapp01/75/ALUA-7-2048.jpg)
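The two discovery stages above can be illustrated by decoding the raw bytes a host would get back. The sketch below is not taken from the slides: it assumes the SPC-3 layout as I understand it (TPGS in bits 5-4 of byte 5 of the standard INQUIRY data; REPORT TARGET PORT GROUPS data as a 4-byte length followed by 8-byte group descriptors, each followed by 4-byte target port descriptors). The sample buffers are hand-built for the example, not captured from a real array.

```python
import struct

# TPGS field values (assumed per SPC-3).
TPGS_MODES = {
    0b00: "not supported",
    0b01: "implicit only",
    0b10: "explicit only",
    0b11: "implicit and explicit",
}

# Asymmetric access state codes (assumed per SPC-3).
ACCESS_STATES = {
    0x0: "active/optimized",
    0x1: "active/non-optimized",
    0x2: "standby",
    0x3: "unavailable",
    0xF: "transitioning",
}

def decode_tpgs(inquiry):
    """Stage 1: read the TPGS field from standard INQUIRY data."""
    if len(inquiry) < 6:
        raise ValueError("need at least 6 bytes of standard INQUIRY data")
    return TPGS_MODES[(inquiry[5] >> 4) & 0b11]

def parse_rtpg(data):
    """Stage 2: walk the target port group descriptors in RTPG data."""
    (length,) = struct.unpack_from(">I", data, 0)
    end = min(4 + length, len(data))
    groups = []
    offset = 4
    while offset + 8 <= end:
        first = data[offset]
        (group_id,) = struct.unpack_from(">H", data, offset + 2)
        port_count = data[offset + 7]
        # Each target port descriptor is 4 bytes; the relative target
        # port identifier sits in its last two bytes.
        ports = [
            struct.unpack_from(">H", data, offset + 8 + 4 * i + 2)[0]
            for i in range(port_count)
        ]
        groups.append({
            "group": group_id,
            "preferred": bool(first & 0x80),
            "state": ACCESS_STATES.get(first & 0x0F, "reserved"),
            "ports": ports,
        })
        offset += 8 + 4 * port_count
    return groups

# Hand-built samples (hypothetical data).
inquiry = bytes([0x00, 0x00, 0x05, 0x02, 0x1F, 0x30])
rtpg = bytes.fromhex(
    "00000018"                    # 24 bytes of descriptors follow
    "800f000100000001" "00000001" # TPG 1: preferred, active/optimized, port 1
    "010f000200000001" "00000002" # TPG 2: active/non-optimized, port 2
)

print(decode_tpgs(inquiry))
for group in parse_rtpg(rtpg):
    print(group)
```

A non-zero TPGS value in stage 1 is what tells the host it is worth issuing REPORT TARGET PORT GROUPS in stage 2.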

![What is DM-Multipath [Redhat]?

Device mapper multipathing (DM-Multipath) allows you to configure multiple I/O paths between server nodes and storage arrays into a single device. Without DM-Multipath, each path from a server node to a storage controller is treated by the system as a separate device, even when the I/O path connects the same server node to the same storage controller. DM-Multipath provides a way of organizing the I/O paths logically, by creating a single multipath device on top of the underlying devices.

What do you mean by I/O paths?

I/O paths are physical SAN connections that can include separate cables, switches,

and controllers. Multipathing aggregates the I/O paths, creating a new device that

consists of the aggregated paths.

If any element of an I/O path (the cable, switch, or controller) fails, DM-Multipath

switches to an alternate path.

Let's list all the components that could fail in a typical I/O path between server and storage.

Points of possible failure:

1. FC-HBA/iSCSI-HBA/NIC

2. FC/Ethernet cable

3. SAN switch

4. Array controller/array controller port

With DM-Multipath configured, a failure at any of these points will cause DM-

Multipath to switch to the alternate I/O path.

Can I use LVM on top of Multipath device?

Yes. After creating multipath devices, you can use the multipath device names just as you would use a physical device name when creating an LVM physical volume. For example, if /dev/mapper/mpathn [n=device number] is the name of a multipath device, the following command will mark /dev/mapper/mpathn as a physical volume.

pvcreate /dev/mapper/mpathn

For example: In our case, we have one 'multipath' device available, named 'mpath53'.

[root@redhat /]# cd /dev/mapper/

[root@redhat mapper]# ll

total 0

crw------- 1 root root 10, 60 Sep 19 14:25 control

brw-rw---- 1 root disk 253, 0 Sep 20 13:30 mpath53](https://image.slidesharecdn.com/alua-140923140830-phpapp01/75/ALUA-9-2048.jpg)

![Now, using pvcreate we can create a physical volume to be used for LVM purpose:

[root@redhat mapper]# pvcreate /dev/mapper/mpath53

Writing physical volume data to disk "/dev/mpath/mpath53"

Physical volume "/dev/mpath/mpath53" successfully created

[root@redhat mapper]#

Next step: Once we have one or more physical volumes, we can create a volume group on top of them using the vgcreate command. In our case, we have created just one physical volume with pvcreate. The following command creates a volume group on top of that physical volume; on top of the volume group we can then create a logical volume to hold a filesystem.

Volume group create command:

[root@redhat mapper]# vgcreate volume_53 /dev/mapper/mpath53

Volume group "volume_53" successfully created

[root@redhat mapper]#

Logical volume create command: [For example purposes, we will create a 1 GB logical volume]

[root@redhat mapper]# lvcreate -n lv_53 -L 1G volume_53

Logical volume "lv_53" created

[root@redhat mapper]#

Next, let's create the filesystem on top of the logical volume we just created. First we will check how our logical volume looks using the 'lvdisplay' command:

[root@redhat mapper]# lvdisplay

--- Logical volume ---

LV Name /dev/volume_53/lv_53

VG Name volume_53

LV UUID 2bQkgR-vXAQ-tmwZ-Afs1-Knxv-0mUG-NepgLB

LV Write Access read/write

LV Status available

# open 0

LV Size 1.00 GB

Current LE 256

Segments 1

Allocation inherit

Read ahead sectors auto

- currently set to 256

Block device 253:1](https://image.slidesharecdn.com/alua-140923140830-phpapp01/75/ALUA-10-2048.jpg)

![Now, using the LV name, let's create the filesystem. We are going to use the 'EXT3' filesystem in the following example:

[root@redhat mapper]# mkfs.ext3 /dev/volume_53/lv_53

mke2fs 1.39 (29-May-2006)

Filesystem label=

OS type: Linux

Block size=4096 (log=2)

Fragment size=4096 (log=2)

131072 inodes, 262144 blocks

Creating journal (8192 blocks): done

Writing superblocks and filesystem accounting information: done

This filesystem will be automatically checked every 36 mounts or

180 days, whichever comes first. Use tune2fs -c or -i to override.

Finally, make it available to user-space by mounting it.

[root@redhat mapper]# mount /dev/volume_53/lv_53 /mnt/netapp/

Using the 'mount' command we should see the new filesystem:

[root@redhat mapper]# mount

/dev/sda2 on / type ext3 (rw)

proc on /proc type proc (rw)

sysfs on /sys type sysfs (rw)

/dev/mapper/volume_53-lv_53 on /mnt/netapp type ext3 (rw)

[root@redhat mapper]#

Important: If you are using LVM on top of multipath, make sure multipath is loaded before LVM so that the multipath maps are built correctly. Loading multipath after LVM can result in incomplete device maps for a multipath device, because LVM locks the device and MPIO cannot create the maps properly.

Overview of Storage Stack and Multipath Positioning](https://image.slidesharecdn.com/alua-140923140830-phpapp01/75/ALUA-11-2048.jpg)

![How to troubleshoot dm-multipath failure issues

On Linux [Redhat]

Querying the multipath I/O status outputs the current status of the multipath maps. This is usually the first thing to do when you want to find out whether the paths show up.

The two key switches of the 'multipath' tool are:

1. multipath -l

2. multipath -ll

The multipath -l option displays the current path status as of the last time that the path checker was run. It does not run the path checker.

The multipath -ll option runs the path checker, updates the path information, then displays the current status information. This option always displays the latest information about the path status.

At a terminal console prompt, enter:

[root@redhat ]# multipath -ll

This displays information for each multipathed device. For example:

3600601607cf30e00184589a37a31d911

[size=127 GB][features="0"][hwhandler="1 alua"]

_ round-robin 0 [active][first]

_ 1:0:1:2 sdav 66:240 [ready ][active]

_ 0:0:1:2 sdr 65:16 [ready ][active]

_ round-robin 0 [enabled]

_ 1:0:0:2 sdag 66:0 [ready ][active]

_ 0:0:0:2 sdc 8:32 [ready ][active]

You can also use the 'dmsetup' command to see the number of paths:

[root@redhat ~]# dmsetup ls --tree

volume_53-lv_53 (253:1)

└─mpath53 (253:0)

├─ (8:80)

├─ (8:64)

├─ (8:48)

└─ (8:32)

[root@redhat ~]#](https://image.slidesharecdn.com/alua-140923140830-phpapp01/75/ALUA-12-2048.jpg)
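When there are many LUNs, it can help to post-process the `multipath -ll` output instead of reading it by eye. The following is a minimal sketch that parses the older bracketed output format shown above, pasted in as a string; newer multipath-tools releases print a different layout, so the regular expressions here are assumptions tied to this sample only. The function and field names are made up for the example.

```python
import re

# The sample output from the slide, pasted verbatim.
SAMPLE = """\
3600601607cf30e00184589a37a31d911
[size=127 GB][features="0"][hwhandler="1 alua"]
_ round-robin 0 [active][first]
_ 1:0:1:2 sdav 66:240 [ready ][active]
_ 0:0:1:2 sdr 65:16 [ready ][active]
_ round-robin 0 [enabled]
_ 1:0:0:2 sdag 66:0 [ready ][active]
_ 0:0:0:2 sdc 8:32 [ready ][active]
"""

# A path group header, e.g. "round-robin 0 [active][first]".
GROUP_RE = re.compile(r"round-robin \d+ ((?:\[[^\]]*\])+)")
# A path line: H:C:T:L, device name, major:minor, two bracketed states.
PATH_RE = re.compile(
    r"(\d+:\d+:\d+:\d+)\s+(\w+)\s+(\d+:\d+)\s+\[(\w+)\s*\]\[(\w+)\s*\]"
)

def parse_groups(text):
    """Return a list of path groups, each with its flags and paths."""
    groups = []
    for line in text.splitlines():
        group = GROUP_RE.search(line)
        if group:
            flags = re.findall(r"\[([^\]]*)\]", group.group(1))
            groups.append({"flags": flags, "paths": []})
            continue
        path = PATH_RE.search(line)
        if path and groups:
            hctl, dev, devno, state1, state2 = path.groups()
            groups[-1]["paths"].append(
                {"hctl": hctl, "dev": dev, "devno": devno,
                 "states": [state1, state2]}
            )
    return groups

groups = parse_groups(SAMPLE)
for g in groups:
    print(g["flags"], [p["dev"] for p in g["paths"]])
```

For the sample, this yields two path groups: the one flagged `active` with `sdav` and `sdr`, and the one flagged `enabled` with `sdag` and `sdc`.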

![Interactive Tool for Multipath Troubleshooting

The multipathd -k command is an interactive interface to the multipathd daemon. Entering this command brings up an interactive multipath console. At the console, you can enter help to get a list of available commands, enter an interactive command, or press CTRL-D to quit.

[root@redhat /]# multipathd -k

multipathd> help

multipath-tools v0.4.7 (03/12, 2006)

CLI commands reference:

list|show paths

list|show maps|multipaths

list|show maps|multipaths status

list|show maps|multipaths stats

list|show maps|multipaths topology

list|show topology

list|show map|multipath $map topology

list|show config

list|show blacklist

list|show devices

add path $path

remove|del path $path

add map|multipath $map

remove|del map|multipath $map

switch|switchgroup map|multipath $map group $group

reconfigure

suspend map|multipath $map

resume map|multipath $map

reinstate path $path

fail path $path

disablequeueing map|multipath $map

restorequeueing map|multipath $map

disablequeueing maps|multipaths

restorequeueing maps|multipaths

resize map|multipath $map

multipathd>

In this example, I am using 'show paths' command to see the multipaths on my host:

[root@redhat /]# multipathd -k

multipathd> show paths

hcil dev dev_t pri dm_st chk_st next_check

3:0:0:0 sdc 8:32 2 [active][ready] XX........ 4/20

5:0:0:0 sdf 8:80 2 [active][ready] XX........ 4/20

2:0:0:0 sdd 8:48 2 [active][ready] XX........ 4/20

4:0:0:0 sde 8:64 2 [active][ready] XX........ 4/20

multipathd>](https://image.slidesharecdn.com/alua-140923140830-phpapp01/75/ALUA-13-2048.jpg)

![multipathd> show topology

reload: mpath53 (360a98000427045777a24463968533155) dm-0 NETAPP,LUN

[size=2.0G][features=1 queue_if_no_path][hwhandler=0 ][rw ]

_ round-robin 0 [prio=8][enabled]

_ 3:0:0:0 sdc 8:32 [active][ready]

_ 5:0:0:0 sdf 8:80 [active][ready]

_ 2:0:0:0 sdd 8:48 [active][ready]

_ 4:0:0:0 sde 8:64 [active][ready]

multipathd>

multipathd> show multipaths stats

name path_faults switch_grp map_loads total_q_time q_timeouts

mpath53 0 0 4 0 0

multipathd>

multipathd> show paths

hcil dev dev_t pri dm_st chk_st next_check

3:0:0:0 sdc 8:32 2 [active][ready] XXX....... 7/20

5:0:0:0 sdf 8:80 2 [active][ready] XXX....... 7/20

2:0:0:0 sdd 8:48 2 [active][ready] XXX....... 7/20

4:0:0:0 sde 8:64 2 [active][ready] XXX....... 7/20

Now, let's simulate path failure - We will fail the path 'sdc'

multipathd> fail path sdc

ok

multipathd> show paths

hcil dev dev_t pri dm_st chk_st next_check

3:0:0:0 sdc 8:32 2 [failed][faulty] X......... 3/20

5:0:0:0 sdf 8:80 2 [active][ready] XXX....... 7/20

2:0:0:0 sdd 8:48 2 [active][ready] XXX....... 7/20

4:0:0:0 sde 8:64 2 [active][ready] XXX....... 7/20

multipathd>

As you can see, 'sdc' is now marked 'faulty'. However, because the path checker polls continuously (the default interval is 5 seconds), the path should come back up as active almost immediately.

multipathd> show paths

hcil dev dev_t pri dm_st chk_st next_check

3:0:0:0 sdc 8:32 2 [active][ready] XXX....... 7/20

5:0:0:0 sdf 8:80 2 [active][ready] XXX....... 7/20

2:0:0:0 sdd 8:48 2 [active][ready] XXX....... 7/20

4:0:0:0 sde 8:64 2 [active][ready] XXX....... 7/20

The goal of multipath I/O is to provide connectivity fault tolerance between the

storage system and the server. When you configure multipath I/O for a stand-alone

server, the retry setting protects the server operating system from receiving I/O errors

as long as possible. It queues messages until a multipath failover occurs and provides

a healthy connection.](https://image.slidesharecdn.com/alua-140923140830-phpapp01/75/ALUA-14-2048.jpg)

![However, when connectivity errors occur for a cluster node, you want to report the

I/O failure in order to trigger the resource failover instead of waiting for a multipath

failover to be resolved.

In cluster environments, you must modify the retry setting so that the cluster node

receives an I/O error in relation to the cluster verification process. Please read the

OEM document for recommended retry settings.

Enabling ALUA on NetApp Storage & Host

Where & how to enable ALUA on the Host when using NetApp Active/Active

storage systems:

Where:

On NetApp storage: ALUA is enabled or disabled on the igroup mapped to a NetApp LUN on the NetApp controller.

Only FCP igroups support ALUA in ONTAP 7-Mode or simple ONTAP HA.

You might ask why only FCP and not iSCSI in 7-Mode. The reason is that there is no proxy path in 7-Mode: the two controllers have different IP addresses, and the IP addresses are tied to the physical NICs/ports.

With ONTAP cluster mode (cmode), however, the access characteristics change. The physical adapters are virtualised in cmode, and clients access the data via a LIF [virtual adapter/logical interface]. In this case the IP addresses are tied to the LIF and not to the physical NICs/ports, so if a port failure is detected, a LIF can be migrated to a working port on another node within the cluster. Hence ALUA is now supported with iSCSI in cmode setups.

This table only shows Windows OS, but ALUA is supported with iSCSI & ontap

cmode on non-windows OS. Please check NetApp interoperability matrix table.](https://image.slidesharecdn.com/alua-140923140830-phpapp01/75/ALUA-15-2048.jpg)

![ALUA support on VMware

[Courtesy: VMware Knowledgebase ID 1022030]

When an ESX/ESXi 4.1 or ESXi 5.x host supports Asymmetric Logical Unit Access (ALUA), the output of storage commands has some new parameters.

If you run the command:

In ESX/ESXi 4.x: # esxcli nmp device list -d naa.60060160455025000aa724285e1ddf11

In ESX/ESXi 5.x: # esxcli storage nmp device list -d naa.60060160455025000aa724285e1ddf11

You see output similar to:

naa.60060160455025000aa724285e1ddf11

Device Display Name: DGC Fibre Channel Disk

(naa.60060160455025000aa724285e1ddf11)

Storage Array Type: VMW_SATP_ALUA_XX

Storage Array Type Device Config: {navireg=on, ipfilter=on}{implicit_support=on;explicit_support=on; explicit_allow=on;alua_followover=on;{TPG_id=1,TPG_state=AO}{TPG_id=2,TPG_state=ANO}}

Path Selection Policy: VMW_PSP_FIXED_AP

Path Selection Policy Device Config: {preferred=vmhba1:C0:T0:L0;current=vmhba1:C0:T0:L0}

Working Paths: vmhba1:C0:T0:L0

The output may contain these new device configuration parameters:

•implicit_support=on

This parameter shows whether or not the device supports implicit ALUA. You cannot

set this option as it is a property of the LUN.

•explicit_support

This parameter shows whether or not the device supports explicit ALUA. You cannot

set this option as it is a property of the LUN.](https://image.slidesharecdn.com/alua-140923140830-phpapp01/75/ALUA-17-2048.jpg)
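The target port group states in the "Storage Array Type Device Config" line can be pulled out with a short script. This is a minimal sketch that works on the sample line above, pasted in as a string; it does not call esxcli. The expansions of `AO` and `ANO` to active/optimized and active/non-optimized are my reading of the abbreviations, and the function name is made up for the example.

```python
import re

# Assumed expansions of the state abbreviations seen in the sample output.
TPG_STATES = {
    "AO": "active/optimized",
    "ANO": "active/non-optimized",
}

def tpg_states(device_config):
    """Map each TPG_id to its (expanded) TPG_state."""
    pairs = re.findall(r"TPG_id=(\d+),TPG_state=(\w+)", device_config)
    # Unknown abbreviations are passed through unchanged.
    return {int(tpg): TPG_STATES.get(state, state) for tpg, state in pairs}

# The device config value from the sample output, joined onto one line.
config = (
    "{navireg=on, ipfilter=on}{implicit_support=on;explicit_support=on; "
    "explicit_allow=on;alua_followover=on;"
    "{TPG_id=1,TPG_state=AO}{TPG_id=2,TPG_state=ANO}}"
)

states = tpg_states(config)
print(states)
```

For the sample device, target port group 1 is the optimized group and target port group 2 is the non-optimized one.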