CNCF Thanos @ Qonto

How to easily achieve long-term retention for your metrics and get a single view of all your Prometheus deployments with CNCF Thanos.

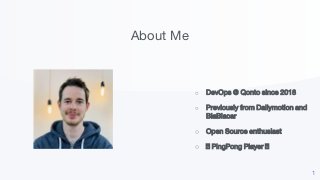

- 1. About Me ○ DevOps @ Qonto since 2018 ○ Previously at Dailymotion and BlaBlaCar ○ Open source enthusiast ○ 🏓 Ping-pong player 🏓


- 3. What you are going to discover ○ Context ○ Single Point of View ○ Long Term Retention ○ Next Steps ○ Results Summary

- 4. Qonto, neobank for SMEs and freelancers

- 5. Context: What is the tech at Qonto ○ All our services run on AWS in two distinct accounts ○ We run Kubernetes, with 6 clusters ○ Monitoring is handled by Prometheus (with its operator) and Grafana

- 6. Problems: What led to Thanos ○ How to have a single view of all these clusters? ○ How to keep this view over time?

- 7. First try: What I did in 2015 ○ Prometheus remote write ○ Reformat metrics into Warp10 formats ○ Learn and deploy Warp10 components ○ Learn and deploy Kafka 0.8.2.4 ○ Learn and deploy HDFS ○ Rewrite all dashboards to use WarpScript ○ Train all devs and ops ○ Maintain it

- 8. 6 months of work ○ Never used by anyone

- 9. What we really want (and dare to ask for) ○ Seamless integration with Prometheus and Grafana ○ Managed storage outside Kubernetes ○ Easily modular and lightweight architecture

- 10. Why not Prometheus federation: Easy peasy, end of story? ○ HA Prometheus federation ○ Federated Prometheus (one per Kubernetes cluster)

- 11. Why not federation: Still the same problems ○ Which Prometheus to query? ○ At which interval should the federated Prometheus be scraped? ○ Storage is still handled by Kubernetes (more generally, a finite hard drive)

- 12. Thanos.io ○ Open source, written in Go by Improbable ○ Based on the Prometheus 2.0 engine and gRPC ○ Split into distinct components ○ Highly available Prometheus setup with long-term storage capabilities

- 14. Single point of view

- 15. Thanos sidecar: What is a sidecar ○ A sidecar is a utility container in the Pod whose purpose is to support the main container. ○ In our case, the Thanos sidecar runs alongside each Prometheus and: ● exposes a gRPC endpoint ● implements the Thanos Store API ● accesses Prometheus chunks

- 16. Thanos sidecar: Integration ○ Prometheus operator config ○ Kubernetes deployment


- 18. Thanos sidecar: How to differentiate sidecars ○ Leverage the Prometheus external labels feature ○ context: qonto and cbs ○ environment: production, staging and infra ○ kafka_brokers{context="qonto", environment="staging", service="kafka-broker-exporter"} 3 ○ kafka_brokers{context="qonto", environment="production", service="kafka-broker-exporter"} 5
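To make the role of external labels concrete, here is a minimal Python sketch of how a per-instance label set turns the same metric into distinguishable series. The function name and data layout are hypothetical, and letting external labels win on conflict is a choice of this sketch, not a statement about how Prometheus or Thanos resolve conflicts. Only the label names and values come from the slide.

```python
def apply_external_labels(series_labels, external_labels):
    """Return a new label set combining a series' labels with external labels."""
    # Conflict handling (external labels win) is a choice of this sketch only.
    return {**series_labels, **external_labels}


# External labels as each Prometheus instance would declare them.
staging = {"context": "qonto", "environment": "staging"}
production = {"context": "qonto", "environment": "production"}

# The same exporter metric, as seen locally by each Prometheus.
series = {"__name__": "kafka_brokers", "service": "kafka-broker-exporter"}

staging_series = apply_external_labels(series, staging)
production_series = apply_external_labels(series, production)
```

Without the external labels, both instances would expose an identical label set and a global view could not tell them apart.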

- 19. Thanos query: Link sidecars together ○ Centralizes queries, propagates PromQL to the sidecars and merges the results ○ Acts as a Prometheus data source in our Grafana ○ Sticks to the same UI as Prometheus ○ Also implements the Thanos Store API
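The fan-out and merge idea can be sketched in a few lines of Python. This is a toy model under stated assumptions, not Thanos code: `make_store` and `fan_out` are hypothetical helpers, each "store" is just a callable over in-memory data, and series are matched by metric name only instead of full PromQL.

```python
def make_store(series):
    """Build a toy store: a callable returning the series matching a metric name."""
    def store(metric_name):
        return [(labels, value) for labels, value in series
                if labels.get("__name__") == metric_name]
    return store


def fan_out(stores, metric_name):
    """Send the same lookup to every store and merge the answers into one list."""
    merged = {}
    for store in stores:
        for labels, value in store(metric_name):
            # Identify a series by its full label set, so a series returned
            # by two stores appears only once in the merged result.
            key = tuple(sorted(labels.items()))
            merged.setdefault(key, (labels, value))
    return list(merged.values())


staging_store = make_store([
    ({"__name__": "kafka_brokers", "environment": "staging"}, 3),
])
production_store = make_store([
    ({"__name__": "kafka_brokers", "environment": "production"}, 5),
])

result = fan_out([staging_store, production_store, production_store], "kafka_brokers")
```

Here `result` holds one series per environment, even though the production store was queried twice.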

- 20. Thanos query: Service discovery ○ Gossip protocol since the beginning, removed in v0.5.0 ○ File service discovery ○ Static list of Thanos components that implement the Store API
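For file service discovery, a small Python sketch of generating the discovery file may help. It assumes the file uses the Prometheus `file_sd` JSON shape (a list of objects with a `targets` array) and that Thanos Query is pointed at it with a flag such as `--store.sd-files`; check the documentation for your Thanos version. The staging address and the `10901` port are illustrative assumptions, and `render_store_sd` is a hypothetical helper.

```python
import json


def render_store_sd(addresses):
    """Render a file_sd-style JSON document listing Store API endpoints."""
    return json.dumps([{"targets": sorted(addresses)}], indent=2)


sd_document = render_store_sd([
    "thanos-sidecar.staging.example:10901",      # hypothetical address
    "thanos-sidecar.production.qonto.co:10901",  # host from the slides, port assumed
])

# Writing sd_document to a file on disk is left to the deployment tooling.
```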


- 25. Did it work the first time? NOPE ○ component=storeset msg="update of store node failed" err="initial store client info fetch: rpc error: code = DeadlineExceeded desc = context deadline exceeded" address=thanos-sidecar.production.qonto.co

- 26. Fail #1: Communication between Query and sidecars ○ HTTP 2.0 ✅ ○ HTTP 1.1 ❌

- 27. Solution #1: Use an Elastic Load Balancer ○ HTTP 2.0 ✅ ○ HTTP 2.0 ✅

- 28. Does it work now? Still NOPE

- 30. Fail #2: Solutions ○ Reduce the number of concurrent queries (--query.max-concurrent) ○ Modify all our Grafana dashboards to include filters on the context and environment labels ○ Be careful when requesting metrics
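Filtering on the `context` and `environment` labels amounts to adding label matchers to each dashboard query. A minimal Python sketch of building such a selector follows; `with_filters` is a hypothetical helper that only handles a bare metric name with equality matchers, not arbitrary PromQL.

```python
def with_filters(metric, **matchers):
    """Build a PromQL selector restricting a metric with equality matchers."""
    inner = ", ".join(f'{name}="{value}"' for name, value in sorted(matchers.items()))
    return f"{metric}{{{inner}}}"


selector = with_filters("kafka_brokers", context="qonto", environment="production")
# selector == 'kafka_brokers{context="qonto", environment="production"}'
```

Restricting a query this way limits which series have to be fetched and merged to answer it.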

- 31. Does it work now? YES!


- 34. Storage: Where and how to store the metrics ○ Thanos can use different storage backends: GCP, S3, Azure Storage ○ We chose S3 for obvious reasons ○ We need a way to upload metrics ○ And a way to retrieve them

- 35. Sidecar: Uploading the metrics ○ The sidecar uploads Prometheus chunks each time a new one is created ○ For each chunk, the sidecar enriches the associated meta.json with the values of the external labels ○ Can delay uploads to the storage backend if it is not available (network partition resilience) ○ Kubernetes secrets data ○ Prometheus operator config
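The meta.json enrichment step can be pictured with the following Python sketch. It assumes the external labels end up under a `thanos.labels` object in meta.json; the sample metadata, the placeholder block identifier and the `add_external_labels` helper are hypothetical and only meant to illustrate the idea.

```python
import copy


def add_external_labels(meta, external_labels):
    """Return a copy of a block's metadata with external labels recorded in it."""
    enriched = copy.deepcopy(meta)  # leave the caller's dict untouched
    thanos_section = enriched.setdefault("thanos", {})
    thanos_section.setdefault("labels", {}).update(external_labels)
    return enriched


# Hypothetical, heavily simplified block metadata.
block_meta = {"ulid": "EXAMPLE-BLOCK-ID", "minTime": 0, "maxTime": 7200000}

uploaded_meta = add_external_labels(
    block_meta, {"context": "qonto", "environment": "production"}
)
```

Recording the labels next to the data lets a reader of the bucket know which Prometheus instance produced each block.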


- 37. Store Gateway: Retrieve the metrics ○ Uses the same object-store configuration as the sidecar ○ Implements the same Store API interface as the other Thanos components ○ Maintains a local index cache of meta.json files (not the actual chunks) for performance ○ Kubernetes deployment

- 38. Thanos Query: Store UI with Store


- 40. Fail #3: AWS bucket permission ○ Uploading chunks from the CBS account to the S3 bucket works ○ But the Store Gateway cannot read the chunks uploaded from the CBS account ○ Sidecars cannot change object ownership when uploading


- 43. Downsampling: Keep only relevant data ○ Raw metrics data is stored, retrieved and displayed ○ But do we need the same precision for old data? ○ We need a way to reduce the precision over time; this is called downsampling
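As an illustration of what downsampling means, the Python sketch below groups raw samples into five-minute windows and keeps only a few aggregates per window. It is a generic sketch, not the Thanos implementation; the `downsample` function, the aggregate names and the sample data are assumptions made for the example.

```python
def downsample(samples, window_s=300):
    """Aggregate (timestamp_seconds, value) samples into fixed-size windows."""
    windows = {}
    for timestamp, value in samples:
        start = timestamp - timestamp % window_s  # start of the window
        agg = windows.get(start)
        if agg is None:
            windows[start] = {"count": 1, "sum": value, "min": value, "max": value}
        else:
            agg["count"] += 1
            agg["sum"] += value
            agg["min"] = min(agg["min"], value)
            agg["max"] = max(agg["max"], value)
    return windows


raw = [(0, 1.0), (60, 3.0), (120, 2.0), (300, 5.0)]
five_minute = downsample(raw)
# Four raw samples become two windows, starting at 0 and 300 seconds.
```

Older data then costs a handful of aggregates per window instead of every raw sample, at the price of lower precision.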

- 44. Compactor: Retrieve the metrics ○ Define retention time intervals for raw, five-minute and one-hour data ○ Can run either as a CronJob or as a one-shot job ○ Does not have a max age limit; you are responsible for removing chunks you no longer want ○ Kubernetes deployment
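Per-resolution retention can be reasoned about with a small Python sketch like the one below. The retention values are hypothetical (the slides do not give the ones used at Qonto), and `is_expired` only models the decision; it does not delete anything or reflect how the compactor is implemented.

```python
from datetime import timedelta

# Hypothetical retention per resolution; not the values used in the talk.
RETENTION = {
    "raw": timedelta(days=30),
    "5m": timedelta(days=180),
    "1h": timedelta(days=730),
}


def is_expired(resolution, age):
    """Tell whether data of a given resolution and age exceeds its retention."""
    limit = RETENTION.get(resolution)
    # A resolution without a configured retention is kept forever.
    return limit is not None and age > limit


old_raw = is_expired("raw", timedelta(days=45))   # raw data older than 30 days
old_hourly = is_expired("1h", timedelta(days=45))  # still within two years
```

Keeping raw data for a short period and coarser resolutions for longer is what makes multi-year retention affordable.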

- 46. Benefits of the project ○ Metrics retention for two years ○ Infinite storage without worrying ○ Selling pure tech projects / refactoring to stakeholders is easier ■ Retrospective ■ Enhancement: applications publish their own Prometheus metrics ○ First immersion in the open source community

- 47. Next steps possible with Thanos ○ Alerting with the Thanos Ruler ○ Thanos Store sharding based on metrics time range ○ Prometheus remote write to Thanos Receiver

Editor's Notes

- Full Qonto logo