Classical Test Theory and Item Analysis

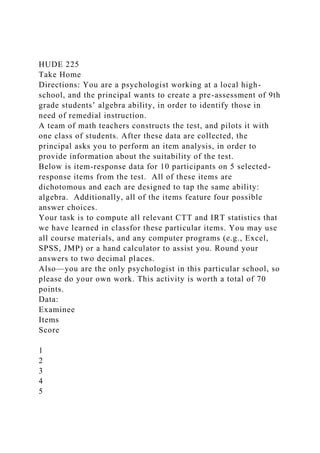

HUDE 225 Take Home

Directions: You are a psychologist working at a local high school. The principal wants to create a pre-assessment of 9th-grade students' algebra ability in order to identify those in need of remedial instruction. A team of math teachers constructs the test and pilots it with one class of students. After these data are collected, the principal asks you to perform an item analysis in order to provide information about the suitability of the test. Below is item-response data for 10 participants on 5 selected-response items from the test. All of these items are dichotomous, and each is designed to tap the same ability: algebra. Additionally, all of the items feature four possible answer choices. Your task is to compute all relevant CTT and IRT statistics that we have learned in class for these particular items. You may use all course materials and any computer programs (e.g., Excel, SPSS, JMP) or a hand calculator to assist you. Round your answers to two decimal places. Also, you are the only psychologist in this particular school, so please do your own work. This activity is worth a total of 70 points.

Data: a table with columns Examinee, Items 1-5, and Score.

Item statistics for Items 1-5: q (5 points); Variance (5 points); Standard deviation (5 points); D (5 points); Point-biserial correlation (5 points).

Inter-Item Covariance Matrix (5 points: .5 point per covariance): rows and columns are Item Numbers 1-5.

Inter-Item Correlation Matrix (5 points: .5 point per correlation): rows and columns are Item Numbers 1-5, with 1s on the diagonal.

Test Statistics (6 points): Average Score; Composite Variance; Composite SD; Cronbach's Alpha; Standard Error of Measurement; Standard Error of Estimate.

Item-Characteristic Curves (paste below, 5 points). (Note: Because of the small sample size, your principal is only requiring a 1pl IRT model.)

Test Information Function (paste below, 1 point).

Item difficulty parameters (5 points): b for Items 1-5.

Item-Analysis Report (13 points): Based on the results of your item analysis, do you think this test is suitable for the purpose for which it was designed? Are there any revisions you might recommend? Explain your answer using relevant statistics you calculated above as support. Remember, students may be placed in remedial algebra based on their score on this test, so your report is important.

Classical Test Theory and Item Analysis

Review: Why do we measure? The abilities and traits we are interested in, such as attitudes and personality, cannot be directly observed, yet we need to assess students on these variables.

A Classic Discovery: $X = T + E$ (Observed Score = True Score + Error). In the measurement diagram, the observed score $X$ is the measured variable, the true score $T$ is the latent variable, and $E$ is measurement error.

A Model-Based Perspective: ideally, the variance in $X$ comes from $T$, not $E$.

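This measurement model allows for straightforward analysis of items.

Beginning Item Analysis: To decide whether an item works well for a given population, we need to calculate a number of statistics associated with each item.

Mean and variance: Polytomous items (items with more than two response categories) are summarized with the usual mean and variance. Note: the variance uses N, not N − 1, because we assume this is a population parameter.

Dichotomous items (scored 0 or 1): the mean of an item is the proportion of participants who answered it correctly, referred to as “p”:
$$p_j = \frac{\sum_i X_{ij}}{N}$$

Mind your P's and Q's: $q_j = 1 - p_j$ is the proportion who answered the item incorrectly.

Pay attention to subscripts: $i$ indexes examinees and $j$ indexes items.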

Item variance: for dichotomous items, multiply that item's p and q:
$$\sigma_j^2 = p_j q_j$$

Item Standard Deviation: the square root of the variance:
$$\sigma_j = \sqrt{p_j q_j}$$

Now you try:

| Examinee | Item 1 | Item 2 | Item 3 | Item 4 | Item 5 | Score |
|---|---|---|---|---|---|---|
| 1 | 0 | 0 | 1 | 1 | 0 | 2 |
| 2 | 0 | 0 | 0 | 1 | 0 | 1 |
| 3 | 1 | 1 | 0 | 0 | 0 | 2 |
| 4 | 1 | 0 | 0 | 1 | 0 | 2 |
| 5 | 0 | 1 | 1 | 1 | 1 | 4 |
| 6 | 0 | 1 | 0 | 0 | 0 | 1 |
| 7 | 1 | 1 | 1 | 1 | 1 | 5 |
| 8 | 1 | 1 | 0 | 1 | 0 | 3 |
| 9 | 1 | 1 | 1 | 1 | 0 | 4 |
| 10 | 0 | 0 | 0 | 1 | 1 | 2 |
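As a check on the hand calculations, here is a minimal Python sketch (standard library only) that computes p, q, the item variance $p_j q_j$, and the item SD for the Now-you-try data. The names `RESPONSES` and `item_stats` are illustrative, not part of the course materials. Like the slides, it divides by N rather than N − 1.

```python
import math

# Now-you-try data: rows = examinees 1-10, columns = items 1-5 (1 = correct)
RESPONSES = [
    [0, 0, 1, 1, 0],
    [0, 0, 0, 1, 0],
    [1, 1, 0, 0, 0],
    [1, 0, 0, 1, 0],
    [0, 1, 1, 1, 1],
    [0, 1, 0, 0, 0],
    [1, 1, 1, 1, 1],
    [1, 1, 0, 1, 0],
    [1, 1, 1, 1, 0],
    [0, 0, 0, 1, 1],
]


def item_stats(responses):
    """Return (p, q, variance, sd) for each dichotomous item, treating the sample as the population."""
    n = len(responses)
    stats = []
    for j in range(len(responses[0])):
        p = sum(row[j] for row in responses) / n  # proportion correct
        q = 1 - p                                  # proportion incorrect
        var = p * q                                # variance of a 0/1 item
        stats.append((p, q, var, math.sqrt(var)))
    return stats


for j, (p, q, var, sd) in enumerate(item_stats(RESPONSES), start=1):
    # first line printed: Item 1: p=0.50 q=0.50 var=0.25 SD=0.50
    print(f"Item {j}: p={p:.2f} q={q:.2f} var={var:.2f} SD={sd:.2f}")
```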

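| | Item 1 | Item 2 | Item 3 | Item 4 | Item 5 |
|---|---|---|---|---|---|
| p | 0.5 | 0.6 | 0.4 | 0.8 | 0.3 |
| q | 0.5 | 0.4 | 0.6 | 0.2 | 0.7 |
| variance | 0.25 | 0.24 | 0.24 | 0.16 | 0.21 |
| SD | 0.50 | 0.49 | 0.49 | 0.40 | 0.46 |

Item correlations: part of what determines an item's quality is how it relates to THE OTHER ITEMS, summarized by their correlations and covariances.

Polytomous item correlations: for non-dichotomous variables, the ordinary Pearson correlation applies. Dichotomous items call for a special case.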

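Phi: Dichotomous correlation. To correlate two dichotomous items (the Phi Coefficient), we need the p's and q's from before, plus $p_{jk}$, the proportion of participants who answered both item $j$ and item $k$ correctly:
$$\phi_{jk} = \frac{p_{jk} - p_j p_k}{\sqrt{p_j q_j p_k q_k}}$$

Dichotomous covariance: correlations are standardized to fall between -1 and 1, whereas the covariance retains the units of the actual measure we are using. To get the covariance:
$$\mathrm{Cov}_{jk} = \phi_{jk}\,\sigma_j\,\sigma_k$$

Now you try: using the p, q, and variance values in the table above, compute the phi coefficient for items 3 and 4. Four of the 10 examinees answered both items correctly, so $p_{34} = .40$:
$$\phi_{34} = \frac{.40 - (.40 \times .80)}{\sqrt{(.40 \times .60)(.80 \times .20)}} = \frac{.40 - .32}{\sqrt{(.24)(.16)}} = \frac{.08}{\sqrt{.0384}} = \frac{.08}{.196} = .408 \approx .41$$
$$\mathrm{Cov}_{34} = .41 \times .49 \times .40 = .08$$

Correlation Matrices: we organize these values into matrices.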
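Before filling the matrices in by hand, it can help to script the phi and covariance formulas. This is a minimal sketch assuming the 0/1 data from the Now-you-try table; the helper names (`p_joint`, `phi`, `covariance`) are illustrative only. It reproduces $\phi_{34} \approx .41$ and $\mathrm{Cov}_{34} = .08$, then builds every entry of both matrices.

```python
import math

# Now-you-try data: rows = examinees 1-10, columns = items 1-5 (1 = correct)
RESPONSES = [
    [0, 0, 1, 1, 0], [0, 0, 0, 1, 0], [1, 1, 0, 0, 0], [1, 0, 0, 1, 0],
    [0, 1, 1, 1, 1], [0, 1, 0, 0, 0], [1, 1, 1, 1, 1], [1, 1, 0, 1, 0],
    [1, 1, 1, 1, 0], [0, 0, 0, 1, 1],
]


def p_value(responses, j):
    """Proportion of examinees answering item j correctly."""
    return sum(row[j] for row in responses) / len(responses)


def p_joint(responses, j, k):
    """Proportion of examinees answering BOTH item j and item k correctly."""
    return sum(row[j] * row[k] for row in responses) / len(responses)


def phi(responses, j, k):
    """Phi coefficient between two dichotomous items."""
    pj, pk = p_value(responses, j), p_value(responses, k)
    return (p_joint(responses, j, k) - pj * pk) / math.sqrt(pj * (1 - pj) * pk * (1 - pk))


def covariance(responses, j, k):
    """Cov_jk = phi_jk * sigma_j * sigma_k; when j == k this equals the item variance p*q."""
    pj, pk = p_value(responses, j), p_value(responses, k)
    return phi(responses, j, k) * math.sqrt(pj * (1 - pj)) * math.sqrt(pk * (1 - pk))


n_items = len(RESPONSES[0])
corr = [[1.0 if j == k else phi(RESPONSES, j, k) for k in range(n_items)] for j in range(n_items)]
cov = [[covariance(RESPONSES, j, k) for k in range(n_items)] for j in range(n_items)]

# Items 3 and 4 sit in columns 2 and 3 because Python counts from 0
print(round(corr[2][3], 2), round(cov[2][3], 2))  # 0.41 0.08
for row in corr:
    print([round(x, 2) for x in row])
```

Note that the covariance simplifies to $p_{jk} - p_j p_k$, which is why $\mathrm{Cov}_{34}$ comes out to exactly .08.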

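Item Correlation matrix (not yet completed):

| Item | 1 | 2 | 3 | 4 | 5 |
|---|---|---|---|---|---|
| 1 | 1 | | | | |
| 2 | | 1 | | | |
| 3 | | | 1 | .41 | |
| 4 | | | | 1 | |
| 5 | | | | | 1 |

Variance-Covariance Matrix (not yet completed):

| Item | 1 | 2 | 3 | 4 | 5 |
|---|---|---|---|---|---|
| 1 | .25 | | | | |
| 2 | | .24 | | | |
| 3 | | | .24 | .08 | |
| 4 | | | | .16 | |
| 5 | | | | | .21 |

Let's do it! Fill out the remaining parts of the matrices for all pairs of items in our example data, using the formulas we just learned.

Correlations:

| Item | 1 | 2 | 3 | 4 | 5 |
|---|---|---|---|---|---|
| 1 | 1 | 0.41 | 0 | 0 | -0.22 |
| 2 | | 1 | 0.25 | -0.41 | 0.09 |
| 3 | | | 1 | 0.41 | 0.36 |
| 4 | | | | 1 | 0.33 |
| 5 | | | | | 1 |

Covariances:

| Item | 1 | 2 | 3 | 4 | 5 |
|---|---|---|---|---|---|
| 1 | 0.25 | 0.1 | 0 | 0 | -0.05 |
| 2 | | 0.24 | 0.06 | -0.08 | 0.02 |
| 3 | | | 0.24 | 0.08 | 0.08 |
| 4 | | | | 0.16 | 0.06 |
| 5 | | | | | 0.21 |

Finding the Variance of the Composite: the total test score is a composite of the item scores, so we need the composite variance.

Variance of a composite (shown for three items):
$$\sigma_c^2 = \sigma_1^2 + \sigma_2^2 + \sigma_3^2 + 2\phi_{12}\sigma_1\sigma_2 + 2\phi_{13}\sigma_1\sigma_3 + 2\phi_{23}\sigma_2\sigma_3$$
In general, $\sigma_c^2 = \sum_j \sigma_j^2 + 2\sum_{j<k}\mathrm{Cov}_{jk}$: the item variances plus the item covariances, DOUBLED, because each covariance appears twice in the full symmetric matrix.

Step by step: in the variance-covariance matrix, sum the variances (the diagonal values), sum the covariances (the values above the diagonal), double the covariance sum, and add the two.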
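The step-by-step recipe translates directly into a few lines of code. This sketch hard-codes the completed variance-covariance matrix from the example (the name `composite_variance` is illustrative) and cross-checks the result against the population variance of the ten examinees' total scores, computed with Python's `statistics.pvariance`.

```python
import math
import statistics

# Completed variance-covariance matrix for items 1-5 (variances on the diagonal)
COV = [
    [0.25, 0.10, 0.00, 0.00, -0.05],
    [0.10, 0.24, 0.06, -0.08, 0.02],
    [0.00, 0.06, 0.24, 0.08, 0.08],
    [0.00, -0.08, 0.08, 0.16, 0.06],
    [-0.05, 0.02, 0.08, 0.06, 0.21],
]


def composite_variance(cov):
    """Sum of item variances plus twice the sum of the unique (above-diagonal) covariances."""
    k = len(cov)
    sum_var = sum(cov[j][j] for j in range(k))
    sum_cov = sum(cov[j][m] for j in range(k) for m in range(j + 1, k))
    return sum_var + 2 * sum_cov


var_c = composite_variance(COV)
print(round(var_c, 2), round(math.sqrt(var_c), 2))  # 1.64 1.28

# Cross-check: population variance of the total scores in the data table
TOTALS = [2, 1, 2, 2, 4, 1, 5, 3, 4, 2]
print(round(statistics.pvariance(TOTALS), 2))  # 1.64
```

The two routes agree because the composite variance formula is just the variance of a sum, expanded term by term.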

Try it out: what is the variance of our example composite? Use the completed variance-covariance matrix:

$\sum \mathrm{Cov} = .27$, and $.27 \times 2 = .54$

$\sum \mathrm{var} = 1.10$

$\sigma_c^2 = 1.10 + .54 = 1.64$, so $\sigma_c = \sqrt{1.64} = 1.28$

Item-specific statistics: two main aspects of items are of primary importance, difficulty and discrimination.

Item Difficulty: an item's difficulty is its p value. Larger p-values indicate EASIER items, while smaller ones (e.g., .3) indicate HARDER items.

What's good?

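Most items should fall in a moderate range (… to .6) for participants. Items with a p-value close to chance (.25 for a four-choice item) need to be revised or deleted. Overall, aim for items between … and .8 in difficulty.

Discrimination: how well an item separates participants based on their true ability. Discrimination is a good thing; an item that does not discriminate serves no purpose.

Indices of Discrimination: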

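Two indices tell us whether an item is discriminating well: the D index and the item-total, or point-biserial, correlation.

D index: how strongly a correct response to an item is associated with a participant doing well on the test.

- Rank participants by their total score on the test and split them into an upper and a lower group, using the midpoint score as the cut-point.
- Calculate the p-value (difficulty) separately for each of those two groups.
- Subtract the lower group's p-value from the upper group's p-value:

$$D = p_{\text{upper}} - p_{\text{lower}}$$

What we want to see: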

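… completely revamp.

Now you try: compute D for each item in the dataset, with participants sorted by total score:

| Participant | Item 1 | Item 2 | Item 3 | Item 4 | Item 5 | Score |
|---|---|---|---|---|---|---|
| 2 | 0 | 0 | 0 | 1 | 0 | 1 |
| 6 | 0 | 1 | 0 | 0 | 0 | 1 |
| 1 | 0 | 0 | 1 | 1 | 0 | 2 |
| 4 | 1 | 0 | 0 | 1 | 0 | 2 |
| 3 | 1 | 1 | 0 | 0 | 0 | 2 |
| p(lower) | 0.4 | 0.4 | 0.2 | 0.6 | 0 | |
| 10 | 0 | 0 | 0 | 1 | 1 | 2 |
| 8 | 1 | 1 | 0 | 1 | 0 | 3 |
| 5 | 0 | 1 | 1 | 1 | 1 | 4 |
| 9 | 1 | 1 | 1 | 1 | 0 | 4 |
| 7 | 1 | 1 | 1 | 1 | 1 | 5 |
| p(upper) | 0.6 | 0.8 | 0.6 | 1 | 0.6 | |
| D | 0.2 | 0.4 | 0.4 | 0.4 | 0.6 | |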
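The D-index procedure can be sketched in a few lines. This version assumes a simple median split (lower half / upper half of the ranked examinees) and breaks ties at the cut by keeping examinees in their original order, because Python's `sorted` is stable. That tie-breaking rule is an assumption, but for these data it reproduces the grouping in the table above. The function name `d_index` is illustrative.

```python
# Now-you-try data: rows = examinees 1-10, columns = items 1-5 (1 = correct)
RESPONSES = [
    [0, 0, 1, 1, 0], [0, 0, 0, 1, 0], [1, 1, 0, 0, 0], [1, 0, 0, 1, 0],
    [0, 1, 1, 1, 1], [0, 1, 0, 0, 0], [1, 1, 1, 1, 1], [1, 1, 0, 1, 0],
    [1, 1, 1, 1, 0], [0, 0, 0, 1, 1],
]


def d_index(responses):
    """D = p(upper) - p(lower) for each item, splitting examinees at the middle of the score ranking."""
    totals = [sum(row) for row in responses]
    # Stable sort by total score: tied examinees keep their original order (assumed tie rule)
    order = sorted(range(len(responses)), key=lambda i: totals[i])
    half = len(order) // 2
    lower, upper = order[:half], order[half:]
    d_values = []
    for j in range(len(responses[0])):
        p_lower = sum(responses[i][j] for i in lower) / len(lower)
        p_upper = sum(responses[i][j] for i in upper) / len(upper)
        d_values.append(p_upper - p_lower)
    return d_values


print([round(d, 2) for d in d_index(RESPONSES)])  # [0.2, 0.4, 0.4, 0.4, 0.6]
```

With a different tie rule (for example, putting examinee 10 in the lower group), the D values for some items would change, which is one reason D is a rough index in small samples.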

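Point Bi-serial Correlations: the correlation between scores on the item and scores on the test, using the following formula:
$$\rho_{\text{item-total}} = \frac{\mu_{\text{correct}} - \mu_{\text{total}}}{\sigma_{\text{total}}}\sqrt{\frac{p_j}{q_j}}$$
where $\mu_{\text{correct}}$ is the average total score of those who got the item right, $\mu_{\text{total}}$ is the average total score of all participants, $\sigma_{\text{total}}$ is the total-score SD of all participants, and $p_j$ and $q_j$ are the item's p and q.

What do we want to see? Values … are OK; values … call for revising. … point-biserial that you should accept one item!

Now you try: compute the point-biserial correlation for each of the items in our example:

| | Item 1 | Item 2 | Item 3 | Item 4 | Item 5 |
|---|---|---|---|---|---|
| Point Biserial | 0.47 | 0.54 | 0.73 | 0.43 | 0.55 |

Ok… Now we have CTT item statistics! Which items are functioning best, worst, and why?

| | Item 1 | Item 2 | Item 3 | Item 4 | Item 5 |
|---|---|---|---|---|---|
| p | 0.5 | 0.6 | 0.4 | 0.8 | 0.3 |
| q | 0.5 | 0.4 | 0.6 | 0.2 | 0.7 |
| variance | 0.25 | 0.24 | 0.24 | 0.16 | 0.21 |
| SD | 0.50 | 0.49 | 0.49 | 0.40 | 0.46 |
| D | 0.2 | 0.4 | 0.4 | 0.4 | 0.6 |
| Point Biserial | 0.47 | 0.54 | 0.73 | 0.43 | 0.55 |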
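The point-biserial formula can be checked with a short sketch, assuming the Now-you-try data and population SDs (dividing by N), as in the slides. Note that each item is included in the total score it is correlated with (an uncorrected item-total correlation). The function name `point_biserial` is illustrative.

```python
import math

# Now-you-try data: rows = examinees 1-10, columns = items 1-5 (1 = correct)
RESPONSES = [
    [0, 0, 1, 1, 0], [0, 0, 0, 1, 0], [1, 1, 0, 0, 0], [1, 0, 0, 1, 0],
    [0, 1, 1, 1, 1], [0, 1, 0, 0, 0], [1, 1, 1, 1, 1], [1, 1, 0, 1, 0],
    [1, 1, 1, 1, 0], [0, 0, 0, 1, 1],
]


def point_biserial(responses, j):
    """(mu_correct - mu_total) / sigma_total * sqrt(p / q) for item j."""
    totals = [sum(row) for row in responses]  # total score includes item j itself
    n = len(totals)
    mu_total = sum(totals) / n
    sd_total = math.sqrt(sum((t - mu_total) ** 2 for t in totals) / n)  # population SD
    correct = [t for row, t in zip(responses, totals) if row[j] == 1]
    mu_correct = sum(correct) / len(correct)  # mean total of those who got item j right
    p = len(correct) / n
    return (mu_correct - mu_total) / sd_total * math.sqrt(p / (1 - p))


print([round(point_biserial(RESPONSES, j), 2) for j in range(5)])  # [0.47, 0.54, 0.73, 0.43, 0.55]
```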

Don't forget to think about the inter-item correlations in the correlation matrix. What do these tell you?

So… what are your recommendations for this measure?

Reliability

Remember from last class: $X = T + E$.

A quote to consider: “… scale, for the weight that tipped it into place.”

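The Bathroom Scale Analogy: Observed score (X) = True Score (T) + Error (E). The true weight corresponds to $\sigma_T^2$ and the observed weight to $\sigma_X^2$:
$$\text{Reliability} = \frac{\sigma_T^2}{\sigma_X^2} = \rho_{XT}^2 \equiv \rho_{XX'}$$

Conceptualizing reliability: reliability is the proportion of observed score variance that is true score variance. It is equivalent to the correlation between the observed scores and the true scores, SQUARED, and to the correlation between the observed scores and THEMSELVES (that is, with a parallel measurement).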
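A small simulation can make the three equivalent definitions of reliability concrete. This is a sketch under assumed values that do not come from the slides: $\sigma_T = 2$ and $\sigma_E = 1$, so the population reliability is $4 / (4 + 1) = .80$. It generates true scores, two parallel observed measurements, and then computes $\sigma_T^2/\sigma_X^2$, $\rho_{XT}^2$, and $\rho_{XX'}$.

```python
import math
import random


def pearson(x, y):
    """Pearson correlation of two equal-length lists."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def pvar(x):
    """Population variance (divide by N)."""
    m = sum(x) / len(x)
    return sum((a - m) ** 2 for a in x) / len(x)


rng = random.Random(2024)                       # fixed seed for repeatability
N = 100_000
true = [rng.gauss(0, 2) for _ in range(N)]      # assumed sigma_T = 2
form1 = [t + rng.gauss(0, 1) for t in true]     # X1 = T + E1, assumed sigma_E = 1
form2 = [t + rng.gauss(0, 1) for t in true]     # X2 = T + E2, a parallel measurement

ratio = pvar(true) / pvar(form1)                # sigma_T^2 / sigma_X^2
r_xt_sq = pearson(form1, true) ** 2             # squared observed-true correlation
r_xx = pearson(form1, form2)                    # observed scores correlated with "themselves"
print(round(ratio, 2), round(r_xt_sq, 2), round(r_xx, 2))  # each should land close to 0.80
```

In practice only `r_xx` can be computed, since true scores are never observed; that is why the approaches that follow all try to approximate a parallel measurement.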

Approach One: Test-Retest

- Give a measure to a group of participants.
- Give the same measure again later, in the same context.
- Correlate participants' scores at time 1 and time 2.

Test-retest: A Modelling Perspective. Assumptions:

- The same true score causes variance in X at both time-points.
- Participants' true scores do not change between time-points.

Under these assumptions, the correlation between the two time-points provides information about the magnitude of the measurement errors.

Approach Two: Parallel Forms

- Give two forms of the measure to the same participants, all at once.
- Correlate participants' scores on both forms of the measure.

Parallel Forms: A Modelling Perspective

Assumption: the same true score is causing variance in X on both forms (… but potentially less likely to hold).

Approach Three: Internal Consistency

What if we split a single test in half in order to calculate a parallel-forms correlation? That is SPLIT-HALF reliability. What if you split the test a different way and recalculated… and then you did this over and over again, and averaged the resulting correlations? That is the idea behind Cronbach's alpha:
$$\alpha = \frac{K\bar{c}}{\bar{v} + (K-1)\bar{c}}$$
where $K$ is the number of items, $\bar{c}$ is the average covariance among items, and $\bar{v}$ is the average variance of items.

Cronbach's Alpha assumes that the same true score is causing variance in every item on the measure. Because each item adds information about the true score, alpha will generally increase as you add items.

Let's do it! Use the variance-covariance matrix for our example.

Step by Step:

- Average variance: $\bar{v} = 1.10 / 5 = .22$
- Average covariance: $\bar{c} = .27 / 10 = .027$ (there are 10 unique item pairs)

$$\alpha = \frac{5 \times .027}{.22 + (5 - 1)(.027)} = \frac{.135}{.328} = .41$$
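The alpha formula from the slides can be sketched directly from the matrix. As a cross-check, the sketch also computes the algebraically equivalent form $\frac{K}{K-1}\left(1 - \frac{\sum \sigma_j^2}{\sigma_c^2}\right)$. The function name `cronbach_alpha` is illustrative.

```python
# Completed variance-covariance matrix for items 1-5 (variances on the diagonal)
COV = [
    [0.25, 0.10, 0.00, 0.00, -0.05],
    [0.10, 0.24, 0.06, -0.08, 0.02],
    [0.00, 0.06, 0.24, 0.08, 0.08],
    [0.00, -0.08, 0.08, 0.16, 0.06],
    [-0.05, 0.02, 0.08, 0.06, 0.21],
]


def cronbach_alpha(cov):
    """alpha = K * c_bar / (v_bar + (K - 1) * c_bar)."""
    k = len(cov)
    v_bar = sum(cov[j][j] for j in range(k)) / k                  # average item variance
    off_diag = [cov[j][m] for j in range(k) for m in range(k) if j != m]
    c_bar = sum(off_diag) / len(off_diag)                          # average inter-item covariance
    return k * c_bar / (v_bar + (k - 1) * c_bar)


alpha = cronbach_alpha(COV)

# Equivalent form: K/(K-1) * (1 - sum of item variances / composite variance)
k = len(COV)
total_var = sum(sum(row) for row in COV)  # summing every entry gives the composite variance
alt = k / (k - 1) * (1 - sum(COV[j][j] for j in range(k)) / total_var)

print(round(alpha, 2), round(alt, 2))  # 0.41 0.41
```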

What's good? … .85 …

Cronbach's Alpha: SPSS Calculation

Spearman-Brown Prophecy: how many items would we need to reach a desired reliability? Use the Spearman-Brown formula:
$$N = \frac{\rho^*_{xx'}(1 - \rho_{xx'})}{\rho_{xx'}(1 - \rho^*_{xx'})}$$
where $N$ is the multiplier of items, $\rho^*_{xx'}$ is the desired reliability, and $\rho_{xx'}$ is the current reliability.

Let's do it. We want our example test to have reliability = .80, but its current reliability is .41 (dismal):
$$N = \frac{.80(1 - .41)}{.41(1 - .80)} = \frac{.472}{.082} = 5.76$$

Keep going!
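The prophecy calculation, including the final step of multiplying the original five items by $N$, can be checked with a short sketch. It also includes the forward Spearman-Brown formula, $\rho^* = N\rho / (1 + (N-1)\rho)$, to confirm that lengthening the test by $N$ would bring reliability back to .80. The function names are illustrative.

```python
import math


def sb_multiplier(current, desired):
    """Spearman-Brown prophecy solved for N, the factor by which test length must grow."""
    return desired * (1 - current) / (current * (1 - desired))


def sb_reliability(current, n):
    """Forward Spearman-Brown: predicted reliability after multiplying test length by n."""
    return n * current / (1 + (n - 1) * current)


n_mult = sb_multiplier(0.41, 0.80)
items_needed = 5 * n_mult  # original number of items times the multiplier
print(round(n_mult, 2), round(items_needed, 2), math.ceil(items_needed))  # 5.76 28.78 29
print(round(sb_reliability(0.41, n_mult), 2))  # 0.8
```

Rounding up with `math.ceil` reflects that a partial item cannot be written; using the rounded multiplier 5.75 instead would give 28.75 rather than 28.78.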

To get the total number of items, we simply take the original number of items times the multiplier from the last step: $K = 5 \times 5.756 = 28.78$. So… our example test would need 29 items to achieve a reliability of .80.

Consequences of low reliability

Consequence One: Student Assessment. How confident can we be that a student's observed score is close to their true score? What range of true scores could they have? The standard error of measurement answers this:
$$SEM = SD\sqrt{1 - r_{xx}}$$

Assessment and Reliability: the SEM lets us build a confidence interval around the student's previous score. For example, with $SD = 5$ and $r_{xx} = .8$:
$$SEM = 5\sqrt{1 - .8} = 2.24$$
so we have 68% confidence that a student who scored 25 has a true score within $25 \pm 2.24$, or [22.76, 27.24].

Scenario One: Student Assessment. You are consulting with a local school district concerning the placement of particular students in an accelerated learning program. The district's policy states that a student needs an IQ score of 130 to be admitted to the program. You see in the manual for the IQ test that its reliability coefficient is .83, and the population SD is 15. Based on this information, if a particular student scored a 120, can you be 95% confident that their true score is NOT above 130?
$$SEM = 15\sqrt{1 - .83} = 15 \times .412 = 6.18, \qquad 2 \times 6.18 = 12.36$$
$$120 \pm 12.36 = [107.64,\ 132.36]$$
Because this 95% interval includes 130, we cannot be 95% confident that the student's true score is not above 130.

Scenario one continued: Retesting a student. We may also want to know how sure we can be that a student will score the same if they are retested, and what score they might get. The standard error of estimate (SEE) gives a confidence interval around a student's score for this purpose:
$$SEE = SD\sqrt{1 - r_{xx}^2}$$

Same scenario: Student Assessment. Using the same IQ test (reliability .83, population SD 15) and the same student who scored 120: can you be 95% confident that, if they were retested, they wouldn't score 130?
$$SEE = 15\sqrt{1 - .83^2} = 8.37, \qquad 2 \times 8.37 = 16.74$$
$$120 \pm 16.74 = [103.26,\ 136.74]$$

Consequence Two: Loss of Power. (Figure: weight under Diet 1 vs. Diet 2.) Error variance increases the “noise,” thereby dampening the power to detect the group difference “signal.” With observed weight $Y$ and true weight $T$:
$$d_{\text{observed}} = \frac{\mu_{Y_2} - \mu_{Y_1}}{\sigma_Y}, \qquad d_{\text{true}} = \frac{\mu_{T_2} - \mu_{T_1}}{\sigma_T}$$
The group means are unaffected by random error, but $\sigma_Y^2 = \sigma_T^2 + \sigma_E^2$, so the observed effect size is smaller than the true one.

Power Analysis and Reliability: the sample size needed with your measure of interest is
$$n_Y = n_T \times \frac{1}{r_{XX}}$$
where $n_T$ is the N needed if reliability were perfect (the result of a basic power analysis), $n_Y$ is the N needed with your reliability, and $r_{XX}$ is the reliability of the measure.

Scenario Two: Power Analysis. You are conducting an a priori power analysis for your dissertation. You plan to compare the self-efficacy of students in two different instruction conditions. When you run your initial power analysis, you find you need 50 students for your study. However, after checking the literature, you find your measure of self-efficacy has a reliability coefficient of .91. Based on this value, how many students do you need in your study to maintain your power?
$$n_Y = 50 \times \frac{1}{.91} = 54.94$$
Rounding up, you need 55 students.
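The three student-assessment calculations and the power adjustment can be sketched together. Following the slides, the sketch uses about 2 standard errors for a 95% interval (rather than 1.96) and 1 standard error for 68%. Because it keeps the standard errors unrounded, the IQ intervals differ from the slide values in the second decimal. The helper names `sem`, `see`, and `band` are illustrative.

```python
import math


def sem(sd, rxx):
    """Standard error of measurement: SD * sqrt(1 - r_xx)."""
    return sd * math.sqrt(1 - rxx)


def see(sd, rxx):
    """Standard error of estimate for a retest: SD * sqrt(1 - r_xx**2)."""
    return sd * math.sqrt(1 - rxx ** 2)


def band(score, se, z):
    """Confidence band of z standard errors around a score, rounded to two decimals."""
    return (round(score - z * se, 2), round(score + z * se, 2))


print(band(25, sem(5, 0.80), z=1))    # 68% band: (22.76, 27.24)
print(band(120, sem(15, 0.83), z=2))  # (107.63, 132.37); slides round SEM first: [107.64, 132.36]
print(band(120, see(15, 0.83), z=2))  # (103.27, 136.73); slides round SEE first: [103.26, 136.74]

# Power: inflate the perfect-reliability sample size by 1 / r_xx and round up
print(math.ceil(50 / 0.91))           # 55
```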

Consequence Three: Loss of Correlation. When measures have low reliability, the correlation between them will be attenuated. The corrected (unattenuated) correlation is the original (attenuated) correlation between the measures divided by the square root of the product of their reliability coefficients:
$$r_{x'y'} = \frac{r_{xy}}{\sqrt{r_{xx}\,r_{yy}}}$$
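The correction for attenuation is a one-line function. This sketch (the name `disattenuate` is illustrative) applies it to the values used in Scenario Three below. Note that with low or underestimated reliabilities the corrected value can exceed 1, which signals a problem with the inputs rather than a real correlation above 1.

```python
import math


def disattenuate(r_xy, r_xx, r_yy):
    """Correct an observed correlation for unreliability in both measures."""
    return r_xy / math.sqrt(r_xx * r_yy)


print(round(disattenuate(0.35, 0.89, 0.72), 2))  # 0.44
```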

Scenario Three: Correlation. You and your advisor are conducting a study of personality and creativity. You run a correlation on your participants' extraversion and creativity scores and get .35. Your advisor is disappointed because this correlation is smaller than they expected. But you know from the literature that the extraversion measure has a reliability coefficient of .89 and your creativity measure only .72. Given these values, what is the actual (disattenuated) correlation between extraversion and creativity in your sample?
$$r_{x'y'} = \frac{.35}{\sqrt{.89 \times .72}} = .44$$

ITEM RESPONSE THEORY: An Introduction

Problems with CTT

- Extremely sample-dependent
- Item statistics are all on separate scales from the ability score
- Cannot adequately take guessing into account
- Does not estimate true scores well
- Assumes a measurement model, but does not actually fit the model to the data

A breakthrough: the Rasch model

- Models the probability of a correct answer on a given item as a function of two parameters:
  - Participant ability (theta, $\theta$)
  - Item difficulty ($b$)
- These two parameters are on the SAME scale
  - Represented as z-scores

Rasch Model: Equation form
$$P(x_{ij} = 1 \mid \theta_i) = \frac{1}{1 + e^{-(\theta_i - b_j)}}$$
This is the probability of answering item $j$ correctly, given participant $i$'s ability $\theta_i$ and the item difficulty $b_j$. It is also known as the one-parameter logistic (1pl) model.

1pl: characteristic curves

- The probability of a correct response on a given item can be represented by an item characteristic curve.
- Item difficulty is the level of theta (ability) at which a participant has a 50% likelihood of getting the item correct.

- Example of curve where b = 0 (perfectly average).
- The point where the b parameter is located is called the inflection point.

Some real examples from JMP: This item is easier because it takes less ability to have a 50% chance of getting it right. This item is more difficult: a greater-than-average ability is required.

Now you try: Which item is easiest and hardest?

The 2pl model: Adding Discrimination

- Remember from CTT: not all items discriminate equally!
- The 2pl IRT model includes a discrimination parameter ($a$):

$$P(x_{ij} = 1 \mid \theta_i) = \frac{1}{1 + e^{-a_j(\theta_i - b_j)}}$$
Everything is the same except for this $a_j$ term.

2pl Curves: Look for Slope

- The slope of the curve represents the item's discrimination.
- Answers the question: how related to theta is this particular item?

In this example, Item 1 is more discriminating than Item 2.

Now you try: Which item is most and least discriminating?

3pl: Adding guessing

- One major failing of CTT is that we can't account for guessing.
- Is an item easy because participants can guess it, or is it actually an easy concept?
- The 3pl model includes the guessing parameter ($c$) as the lower asymptote of the probability function.
- The higher the guessing parameter, the easier it is for participants to guess the item.

$$P(x_{ij} = 1 \mid \theta_i) = c_j + \frac{1 - c_j}{1 + e^{-a_j(\theta_i - b_j)}}$$
where $c_j$ is the guessing parameter.
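All three logistic models can be sketched as one function, since the 1pl is the 3pl with $a = 1$ and $c = 0$, and the 2pl is the 3pl with $c = 0$. The item parameters below are hypothetical, chosen only for illustration. Some texts and programs also put a scaling constant (1.7) in the exponent; this sketch follows the slide equations, which do not. The last line previews the inflection-point shift: at $\theta = b$ the 3pl probability is $(1 + c)/2$, not .5.

```python
import math


def p_correct(theta, b, a=1.0, c=0.0):
    """3pl probability of a correct response; c=0 gives the 2pl, and a=1, c=0 gives the 1pl (Rasch)."""
    return c + (1 - c) / (1 + math.exp(-a * (theta - b)))


# Hypothetical item parameters, for illustration only
print(p_correct(0.0, b=0.0))                          # 1pl at theta = b: 0.5
print(p_correct(0.5, b=0.5, a=2.0))                   # 2pl at theta = b: 0.5
print(p_correct(0.5, b=0.5, a=2.0, c=0.25))           # 3pl at theta = b: (1 + 0.25) / 2 = 0.625
print(round(p_correct(-4.0, b=0.5, a=2.0, c=0.25), 3))  # very low ability: close to the floor c
```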

3pl: Characteristic Curves. The green curve (V5) shows the highest guessing parameter.

Important: Inflection point shift

- When we add the guessing parameter, the inflection point is no longer the point where a participant has a 50% likelihood of a correct answer.
- The probability at the inflection point is now calculated simply like this: $\frac{1 + c}{2}$
- That means we need to change where we look for the difficulty parameter: $b$ is the level of theta at which the probability of a correct response is $(1 + c)/2$.

Now you try: Which item is easiest and hardest to guess?

Information

- In IRT, we typically talk about reliability in terms of “information.”
- Answers the question: “How much do we know about a participant's true ability (theta) based on this item?”
- BUT the information is not the same for all participants.
  - This is a major difference from CTT.
  - Items are more or less informative for different participants, depending on their level of theta.
- Information functions depict the level of theta at which an item (or an entire test) is most informative.

Information functions

- The HEIGHT of the function shows HOW MUCH information is given by an item.
- The LOCATION of the peak of the function shows for which participants the item is informative.
- **Important:** In the 1pl and 2pl models, the information function always peaks at the item's b-parameter (difficulty), and its height is determined directly by the item discrimination. When a guessing parameter is added, the peak shifts slightly above b.

The black curve here seems like the least informative, but it is the MOST informative for participants with theta levels greater than 2.

JMP examples

- The information functions of each item have been on the characteristic curves the whole time.
- Remember: the information function peaks at b (difficulty), and its height is determined by a (discrimination).

Now you try: Which item gives the most or least information?

Test Information

- Summarizes the amount of information given by all of the items included on the test.
- Analogous to composite reliability measures like Cronbach's alpha.
- Remember: information is different across different levels of theta.

For what participants is this test most informative?

Right around the mean.

Standard error of measurement in IRT

- At any given point of theta, the SEM is the inverse of the square root of the test information:
  - $SEM(\theta) = \frac{1}{\sqrt{I(\theta)}}$
- So the SEM is DIFFERENT for participants at different levels of theta!
- Think about this: how would this change our scenarios from last class?

Model Assumptions

- In CTT we had the assumption that the errors were the same magnitude for all the items, and for all participants.
  - Everything was at the composite level.
- Now, we don't have those assumptions anymore.
- We are still making these important assumptions:
  - Unidimensionality: The same true score causes variance in all the items.
  - Independence: A previous item is not required to get a new item correct.

Other benefits of IRT

- True scores (thetas) can be directly estimated by the computer.
  - When we deal with these true score estimates, confidence intervals like those we constructed in CTT are not needed.
- Item parameters should be nearly the same across samples.
  - This can be empirically tested.
  - This gets into test fairness.
- IRT can be used to:
  - construct computer-adaptive tests
  - equate scores across tests or grade levels
  - pick items for clinical or neurocognitive testing
- In IRT we have an empirical test of whether our measurement model fits our data (called model fit statistics).

IRT in JMP

- Click on Item analysis.
- Select your data set, check settings, and then click Import.
- This dialog box pops up: highlight the items you want to analyze and click Test Items.

- Then click OK. Change the model type here (the default is 2pl).
- This is the output: just click on the arrows to display the particular plots you are looking for.

Ok, now you try!