Tensor Network Presentation.pdf

•

0 likes•89 views

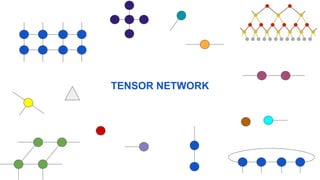

# Tensor Network Presentation


- 2. Tensor: the natural representation of a multi-dimensional array. A D-order tensor is denoted as $\mathcal{X} \in \mathbb{R}^{I_1 \times I_2 \times \cdots \times I_D}$.
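As a minimal illustration of the definition above, the sketch below builds a 3rd-order tensor as a NumPy array. The mode sizes (2, 3, 4) and the variable names are assumptions chosen for the example, not values from the slides.

```python
import numpy as np

# Hypothetical 3rd-order tensor with mode sizes I1=2, I2=3, I3=4.
X = np.arange(24, dtype=float).reshape(2, 3, 4)

order = X.ndim        # number of modes (the order D)
mode_sizes = X.shape  # (I1, I2, I3)
num_entries = X.size  # I1 * I2 * I3, grows exponentially with D

print(order, mode_sizes, num_entries)
```

The entry count is the product of all mode sizes, which is why high-order tensors quickly become too large to store densely.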

- 3. Tensor Network. Tensor networks (TNs) are:
  - Sparse data structures engineered for the efficient representation and manipulation of very high-dimensional tensors.
  - Represented graphically with tensor network diagrams.

  Figure: a scalar (rank-0 tensor), a vector (rank-1 tensor, index I), a matrix (rank-2 tensor, indices I and J), and a cube (rank-3 tensor, indices I1, I2, I3), each shown in array form and in graphical diagram form.

- 4. Tensor Network: Tensor Operations. Figure: tensor network diagrams for matrix-vector multiplication (b = Ax), matrix-matrix multiplication (C = AB), matrix trace, and tensor-tensor contraction.
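The four operations named on this slide can each be written as an index contraction. The sketch below uses `np.einsum`; the shapes and the particular modes contracted in the tensor-tensor case are assumptions made for illustration, since the slide's diagram is not recoverable here.

```python
import numpy as np

rng = np.random.default_rng(0)
A = rng.standard_normal((3, 4))  # indices I, J
x = rng.standard_normal(4)       # index J
B = rng.standard_normal((4, 5))  # indices J, K
S = rng.standard_normal((3, 3))  # square matrix, used for the trace

# Matrix-vector multiplication: b_i = sum_j A_ij x_j
b = np.einsum("ij,j->i", A, x)

# Matrix-matrix multiplication: C_ik = sum_j A_ij B_jk
C = np.einsum("ij,jk->ik", A, B)

# Matrix trace: both indices of S are joined and summed
tr = np.einsum("ii->", S)

# Tensor-tensor contraction over one shared mode (hypothetical shapes)
T1 = rng.standard_normal((2, 3, 4))  # indices i, j, k
T2 = rng.standard_normal((4, 5, 6))  # indices k, l, m
T = np.einsum("ijk,klm->ijlm", T1, T2)
```

In diagram terms, every repeated letter in the subscript string is a connected edge, and every letter that survives to the output is a free (dangling) edge.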

- 5. Tensor Network: Tensor Decomposition. Figure: diagrams of a 6th-order tensor (indices I1 to I6) under CP decomposition, Tucker decomposition, tensor train decomposition, and tensor ring decomposition, with internal bonds of rank r.
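To make the storage trade-off between these four formats concrete, the sketch below counts parameters for each one. It assumes a uniform mode size and a uniform rank r across all bonds, which is a simplification; `parameter_counts` is a hypothetical helper, not part of any library.

```python
def parameter_counts(order, mode_size, rank):
    """Count stored entries per format, assuming uniform mode size and rank."""
    full = mode_size ** order
    # CP: one (mode_size x rank) factor matrix per mode
    cp = order * mode_size * rank
    # Tucker: a rank^order core plus one factor matrix per mode
    tucker = rank ** order + order * mode_size * rank
    # Tensor train: two boundary cores (mode_size x rank) and
    # order - 2 interior cores (rank x mode_size x rank)
    tensor_train = 2 * mode_size * rank + (order - 2) * mode_size * rank ** 2
    # Tensor ring: every core is (rank x mode_size x rank)
    tensor_ring = order * mode_size * rank ** 2
    return {
        "full": full,
        "cp": cp,
        "tucker": tucker,
        "tensor_train": tensor_train,
        "tensor_ring": tensor_ring,
    }

counts = parameter_counts(order=6, mode_size=10, rank=3)
print(counts)
```

The Tucker core still grows exponentially in the order, whereas the CP, tensor train, and tensor ring counts grow only linearly in it.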

- 6. Types of Tensor Network: Matrix Product States / Tensor Train Decomposition. A matrix product state (MPS) is a factorization of a tensor with N indices into a chain-like product of three-index tensors (with open boundaries, the two end tensors carry only two indices). Figure: an MPS, and an MPS with periodic boundary conditions.
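One common way to obtain such a chain is a sequence of truncated SVDs, often called TT-SVD. The following is a minimal sketch of that procedure, not the method of any particular library; `tt_svd`, `tt_to_full`, and `max_rank` are names introduced here for illustration, and the boundary cores keep a dummy bond of size 1 so that every core has three indices.

```python
import numpy as np

def tt_svd(tensor, max_rank):
    """Split a tensor into a chain of 3-index cores via sequential truncated SVDs."""
    dims = tensor.shape
    cores = []
    rank_left = 1
    remainder = tensor.reshape(rank_left * dims[0], -1)
    for n in range(len(dims) - 1):
        U, s, Vt = np.linalg.svd(remainder, full_matrices=False)
        rank_right = min(max_rank, len(s))
        # Core n has shape (left bond, physical index, right bond)
        cores.append(U[:, :rank_right].reshape(rank_left, dims[n], rank_right))
        # Push the singular values into the part that is still to be split
        remainder = s[:rank_right, None] * Vt[:rank_right]
        rank_left = rank_right
        remainder = remainder.reshape(rank_left * dims[n + 1], -1)
    cores.append(remainder.reshape(rank_left, dims[-1], 1))
    return cores

def tt_to_full(cores):
    """Contract the chain back into a dense tensor."""
    result = cores[0]
    for core in cores[1:]:
        result = np.tensordot(result, core, axes=([-1], [0]))
    return result.squeeze(axis=(0, -1))

rng = np.random.default_rng(0)
X = rng.standard_normal((4, 5, 6))
cores = tt_svd(X, max_rank=30)  # rank cap large enough for an exact split
X_rebuilt = tt_to_full(cores)
```

With a smaller `max_rank` the reconstruction becomes an approximation, and the chain stores fewer parameters than the dense tensor.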

- 7. Types of Tensor Network: Matrix Product Operator. A matrix product operator (MPO) is a tensor network in which each tensor has two external, uncontracted indices as well as two internal indices contracted with neighboring tensors in a chain-like fashion. Figure: an MPO.
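As a small worked case, the sketch below writes the two-site operator A ⊗ I + I ⊗ A as an MPO with internal bond dimension 2 and contracts it back to a dense matrix. The index ordering (left bond, output, input, right bond) and the helper `mpo_to_matrix` are assumptions made for this example.

```python
import numpy as np

def mpo_to_matrix(cores):
    """Contract MPO cores of shape (left, out, in, right) into a dense matrix."""
    result = cores[0]
    for core in cores[1:]:
        # Join the shared bond b, keep the output and input indices separate
        result = np.einsum("aOIb,boic->aOoIic", result, core)
        a, O, o, I, i, c = result.shape
        result = result.reshape(a, O * o, I * i, c)
    return result[0, :, :, 0]

rng = np.random.default_rng(0)
d = 2
A = rng.standard_normal((d, d))
I2 = np.eye(d)

# Site 1 acts as the row vector [A, I]; site 2 acts as the column vector [I, A]^T
W1 = np.stack([A, I2], axis=-1)[None]      # shape (1, d, d, 2)
W2 = np.stack([I2, A], axis=0)[..., None]  # shape (2, d, d, 1)

H = mpo_to_matrix([W1, W2])
```

Summing over the internal bond gives one term per bond value, so a bond dimension of 2 is enough to carry both terms of the sum.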

- 8. Types of Tensor Network: Tree Tensor Network / Hierarchical Tucker Decomposition. A tree tensor network is a class of tensor network whose tensors are connected in a general tree-like structure.
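A very small tree can be sketched as follows: two lower nodes each group two physical indices into one bond, and a root node joins the two bonds. The shapes, bond sizes, and names are assumptions for illustration only.

```python
import numpy as np

rng = np.random.default_rng(0)
n, r = 4, 2  # hypothetical physical dimension and bond dimension

U1 = rng.standard_normal((n, n, r))  # groups indices I1, I2 into bond a
U2 = rng.standard_normal((n, n, r))  # groups indices I3, I4 into bond b
R = rng.standard_normal((r, r))      # root node joining bonds a and b

# Contract the tree into a dense 4th-order tensor
X = np.einsum("ija,klb,ab->ijkl", U1, U2, R)

tree_params = U1.size + U2.size + R.size
full_params = X.size
print(tree_params, full_params)
```

Because a tree has no loops, cutting any single bond separates the network into two parts, which is what makes such structures comparatively easy to contract.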

- 9. Types of Tensor Network: Projected Entangled Pair States (PEPS). The PEPS tensor network generalizes the matrix product state / tensor train network from a one-dimensional chain to a network on an arbitrary graph. Figure: tensor diagram for a PEPS on a finite square lattice.
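The smallest square-lattice case is a 2 x 2 grid with open boundaries, where each site tensor has one physical index and two bond indices. The sketch below contracts such a grid into a dense tensor; the dimensions and the ordering of indices on each site are assumptions chosen for the example.

```python
import numpy as np

rng = np.random.default_rng(0)
d, D = 2, 3  # hypothetical physical and bond dimensions

T00 = rng.standard_normal((d, D, D))  # (physical, right bond, down bond)
T01 = rng.standard_normal((d, D, D))  # (physical, left bond, down bond)
T10 = rng.standard_normal((d, D, D))  # (physical, right bond, up bond)
T11 = rng.standard_normal((d, D, D))  # (physical, left bond, up bond)

# Bonds: a joins T00-T01, b joins T00-T10, c joins T01-T11, e joins T10-T11
full = np.einsum("pab,qac,reb,sec->pqrs", T00, T01, T10, T11)
```

Unlike the chain in an MPS, the bonds here form a loop, and loops are the reason exact PEPS contraction becomes expensive on larger lattices.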

- 10. Types of Tensor Network: Multi-scale Entanglement Renormalization Ansatz (MERA). Compared with PEPS, the main advantage of MERA is that the order and size of each core tensor in the internal network structure are often much smaller, which substantially reduces the number of free parameters and allows more efficient distributed storage of huge-scale data tensors.

- 11. Applications of Tensor Network.

  Big domains:
  - Representing continuous functions
  - Machine learning
  - Probability and statistics
  - Quantum physics

  In machine learning:
  - Quantum computers
  - Supervised / unsupervised / reinforcement learning
  - Expressivity and priors of TN-based models
  - Generative models
  - Compressing weights of neural nets
  - Optimization methods
  - Feature extraction and tensor completion
  - …

- 12. Resources. https://tensornetwork.org/
  - [1] Roberts, Chase, et al. "TensorNetwork: A library for physics and machine learning." arXiv preprint arXiv:1905.01330 (2019).
  - [2] Biamonte, Jacob. "Lectures on quantum tensor networks." arXiv preprint arXiv:1912.10049 (2019).
  - [3] Biamonte, Jacob, and Ville Bergholm. "Tensor networks in a nutshell." arXiv preprint arXiv:1708.00006 (2017).
  - [4] Orús, Román. "A practical introduction to tensor networks: Matrix product states and projected entangled pair states." Annals of Physics 349 (2014): 117-158.
  - [5] Bridgeman, Jacob C., and Christopher T. Chubb. "Hand-waving and interpretive dance: an introductory course on tensor networks." Journal of Physics A: Mathematical and Theoretical 50.22 (2017): 223001.
  - [6] Cichocki, Andrzej, et al. "Low-rank tensor networks for dimensionality reduction and large-scale optimization problems: Perspectives and challenges part 1." arXiv preprint arXiv:1609.00893 (2016).