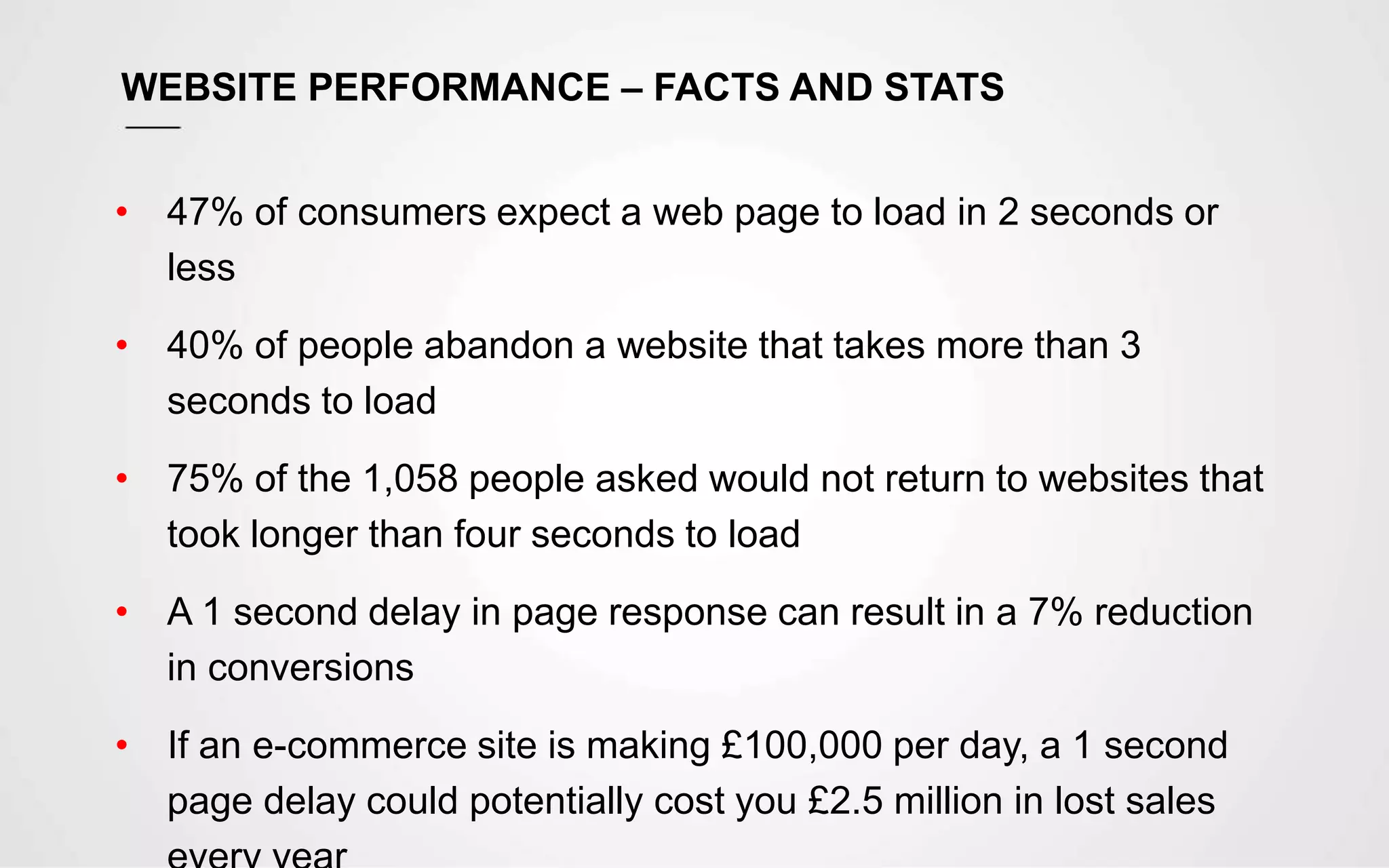

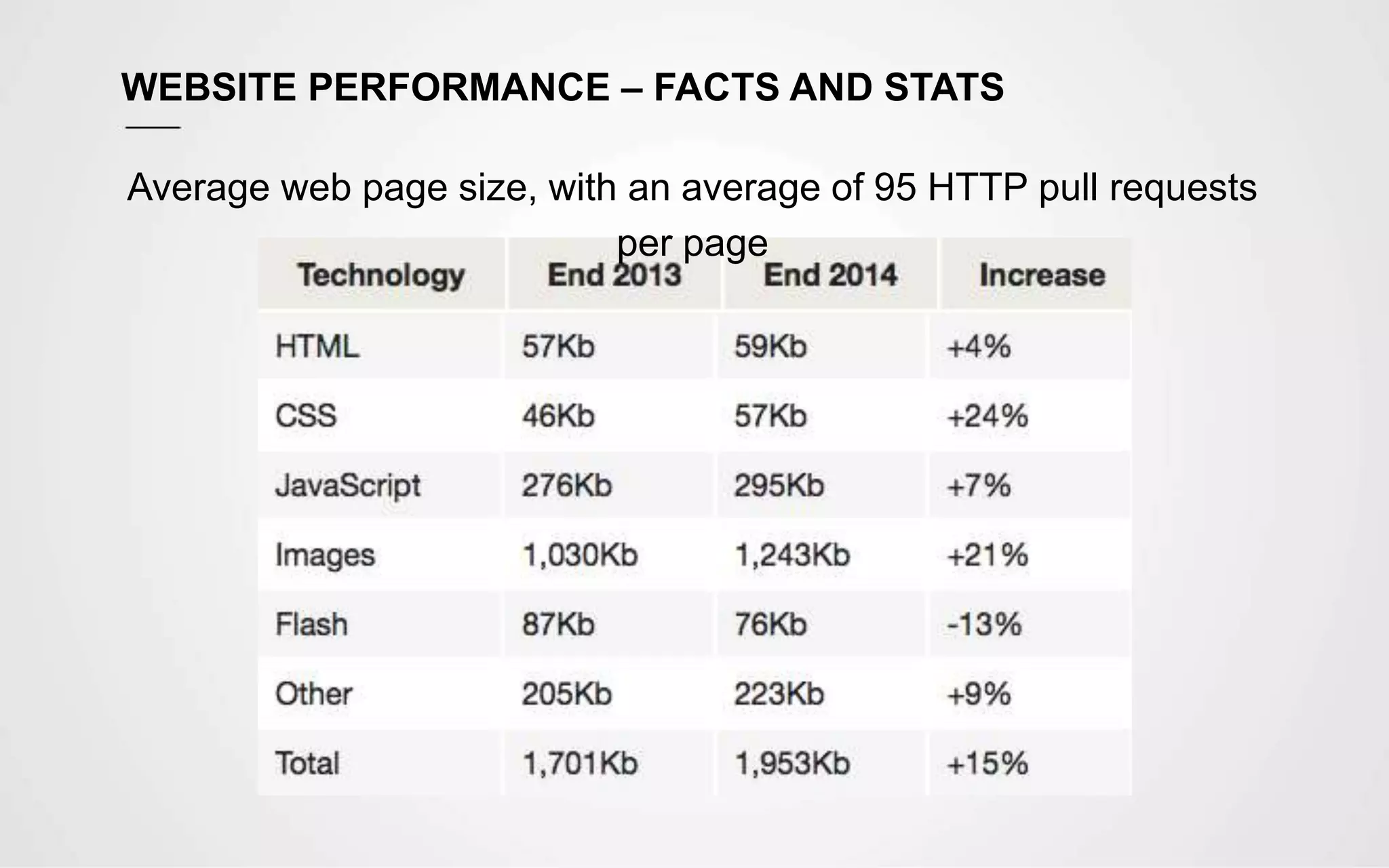

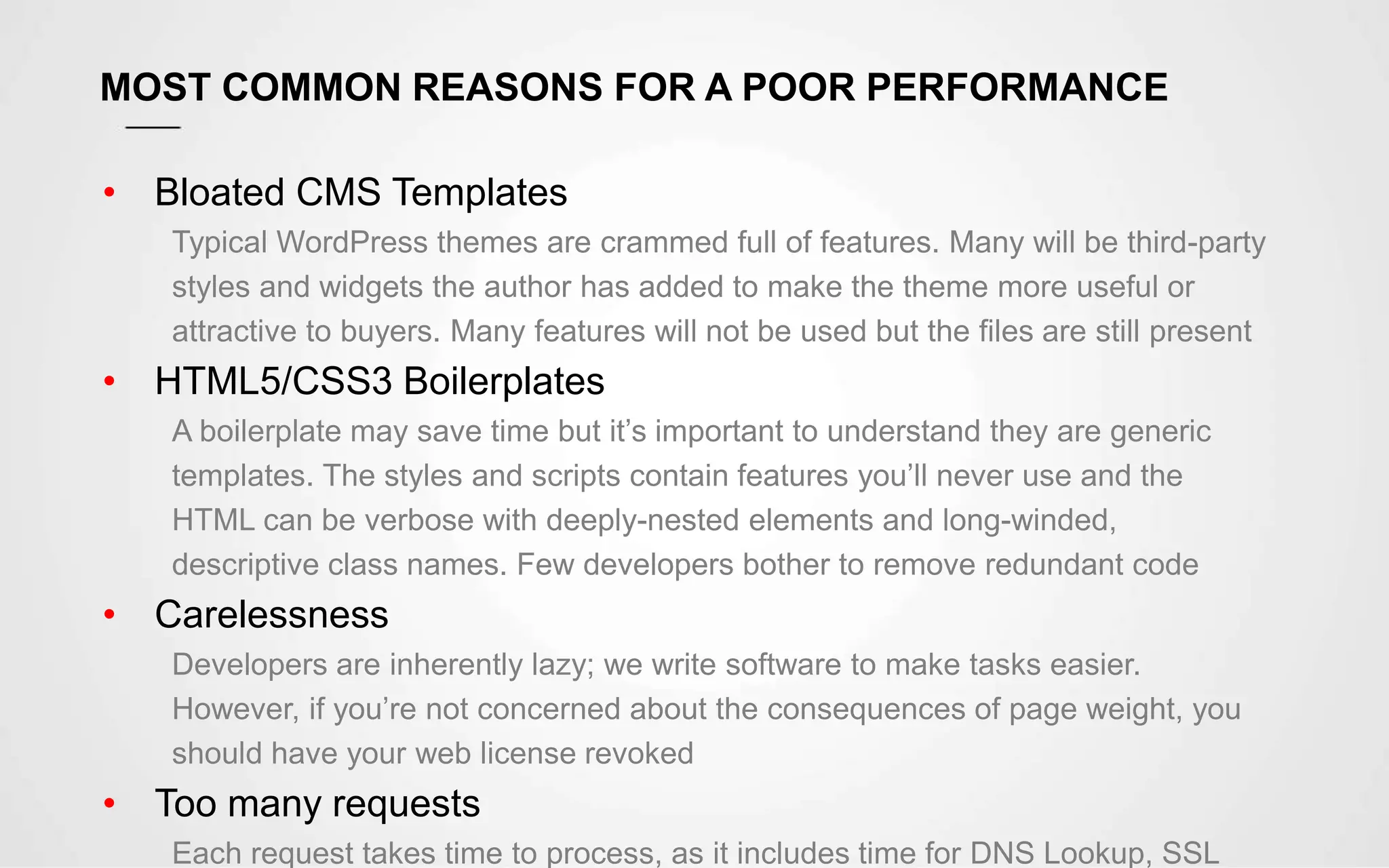

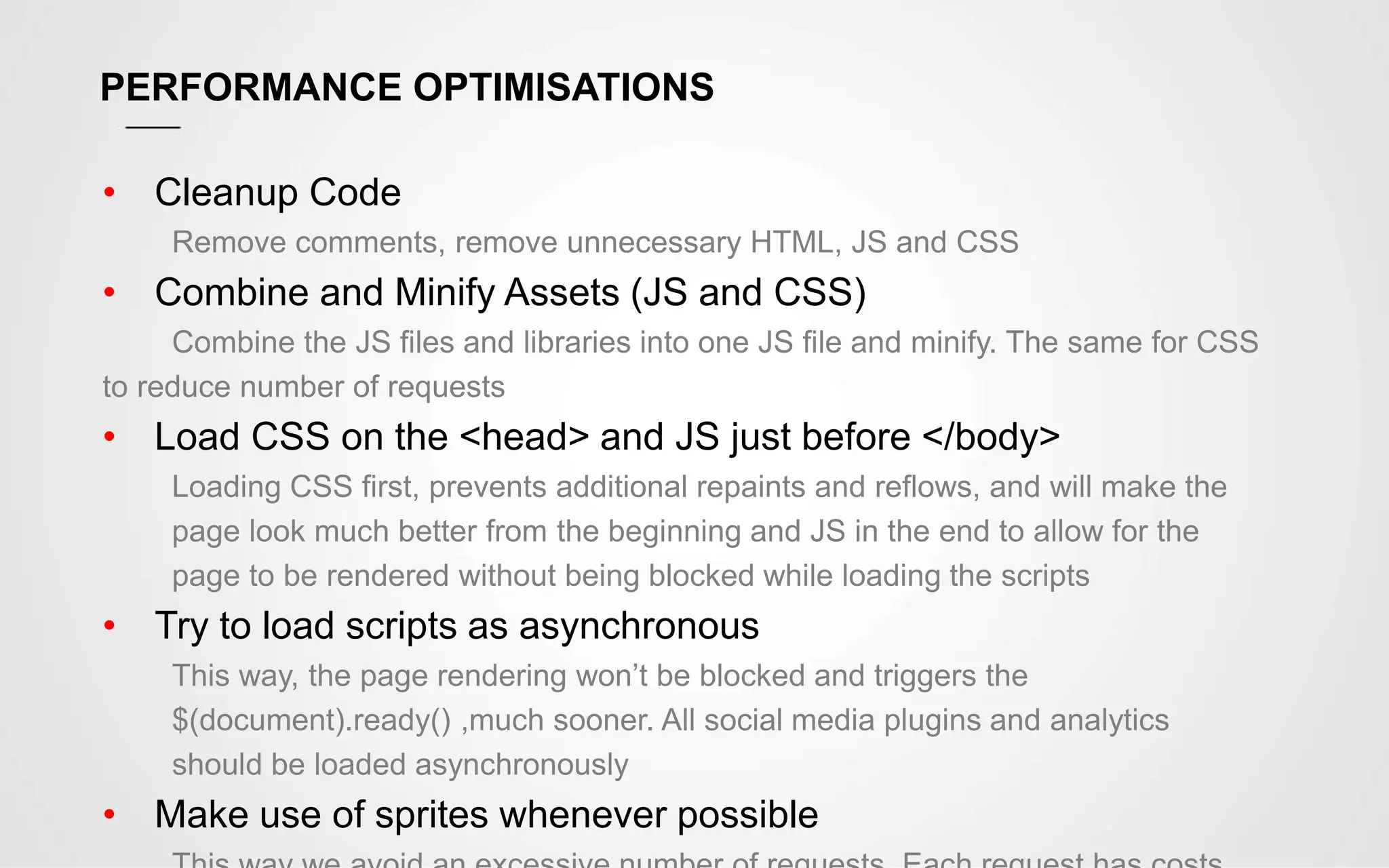

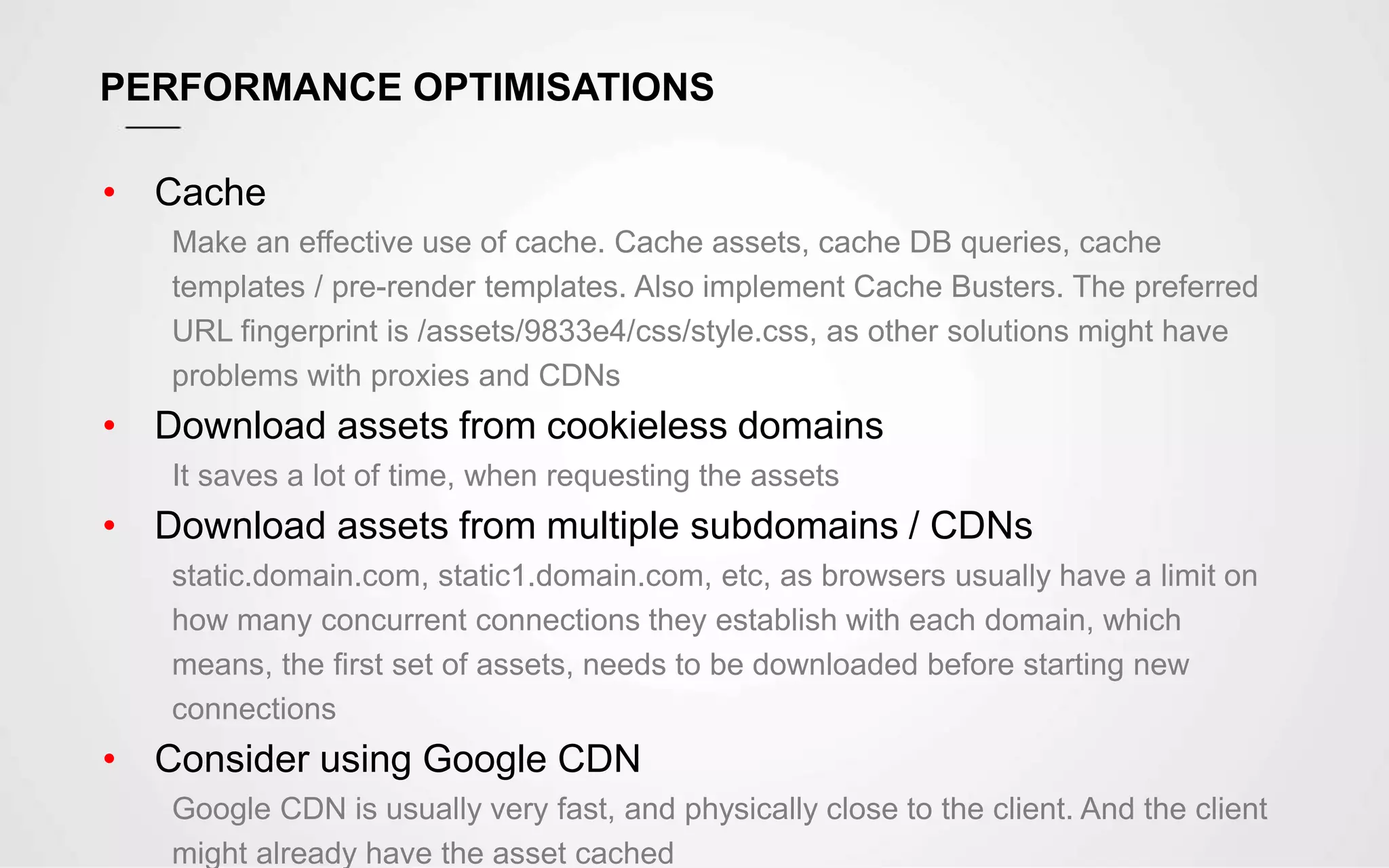

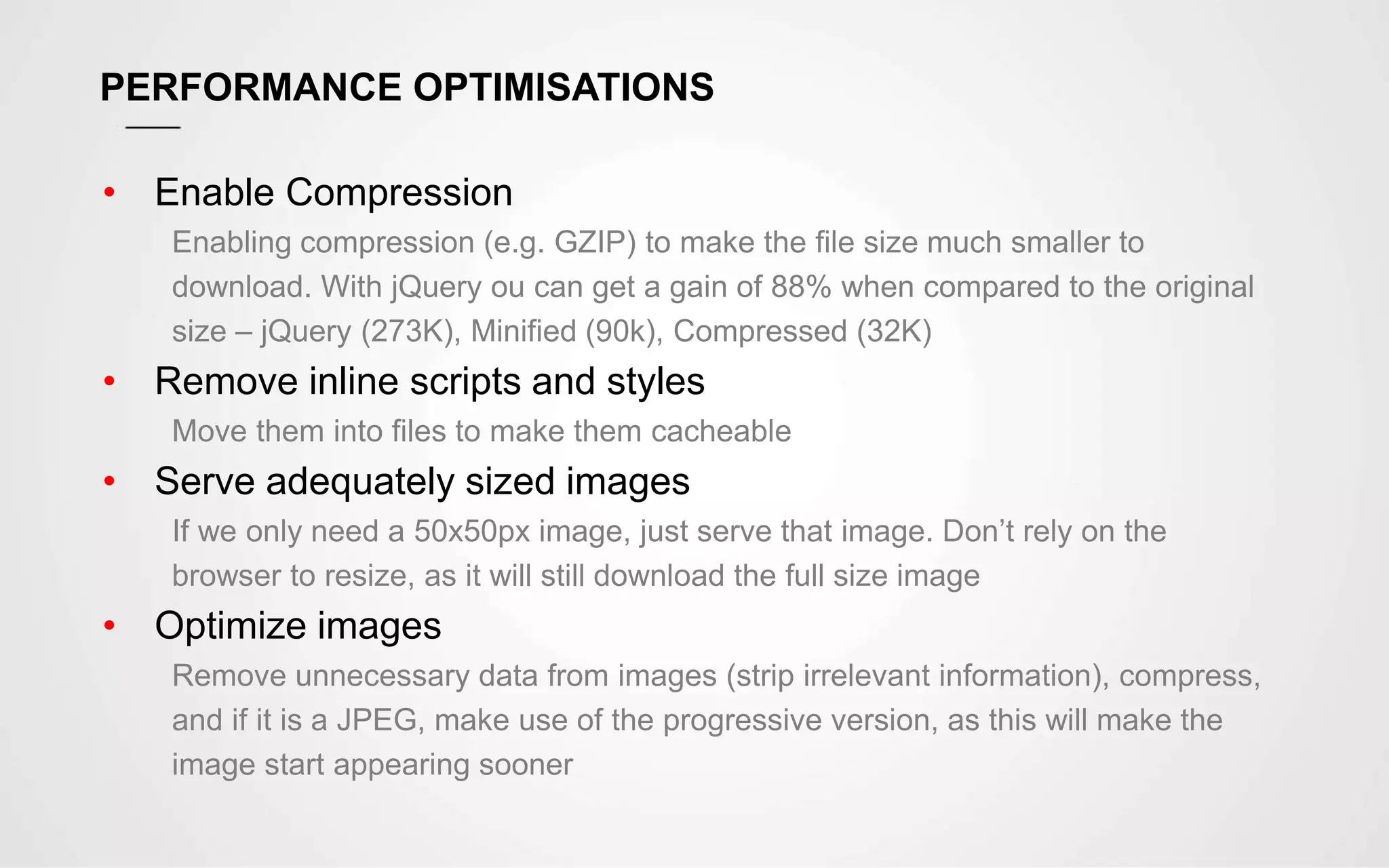

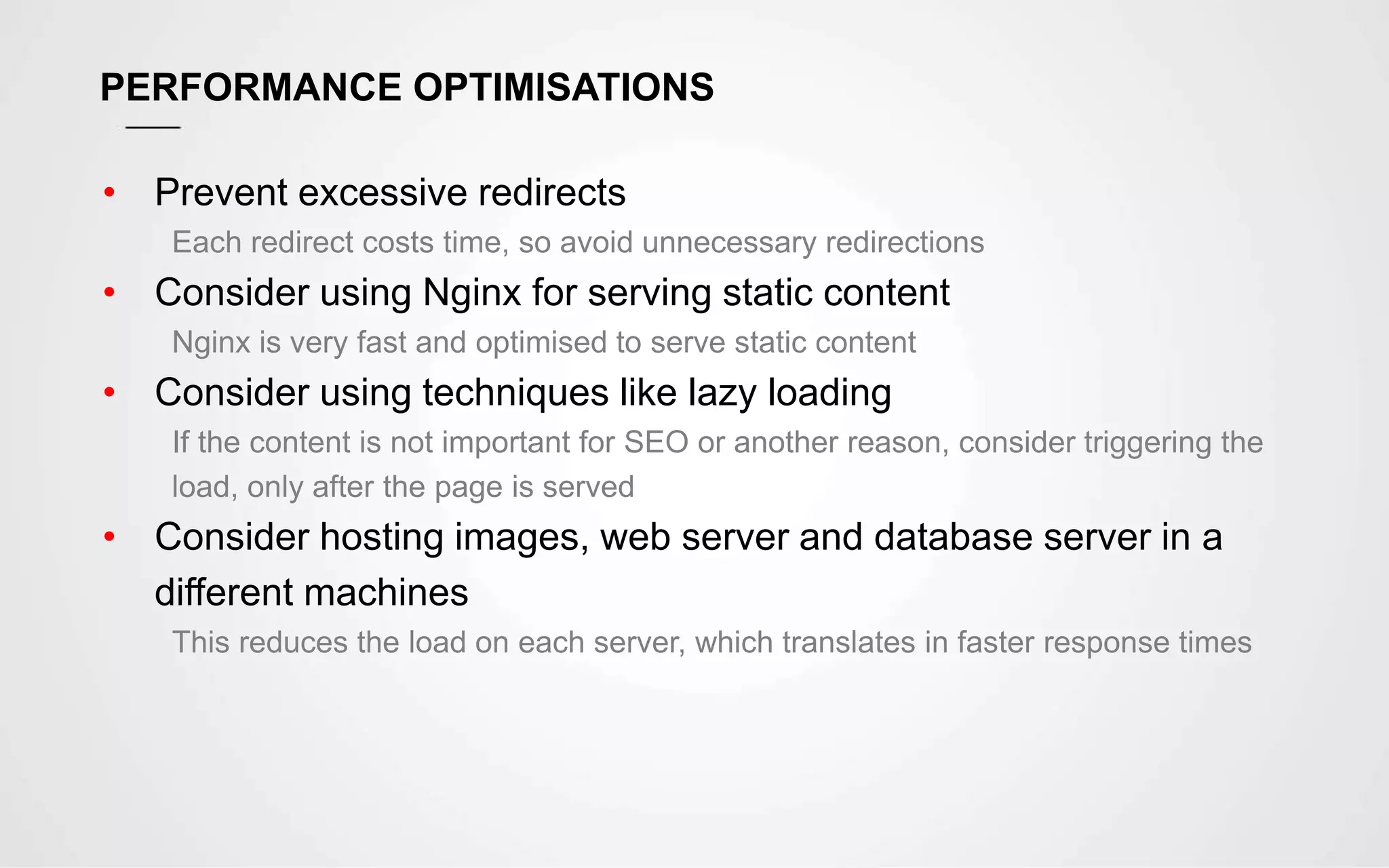

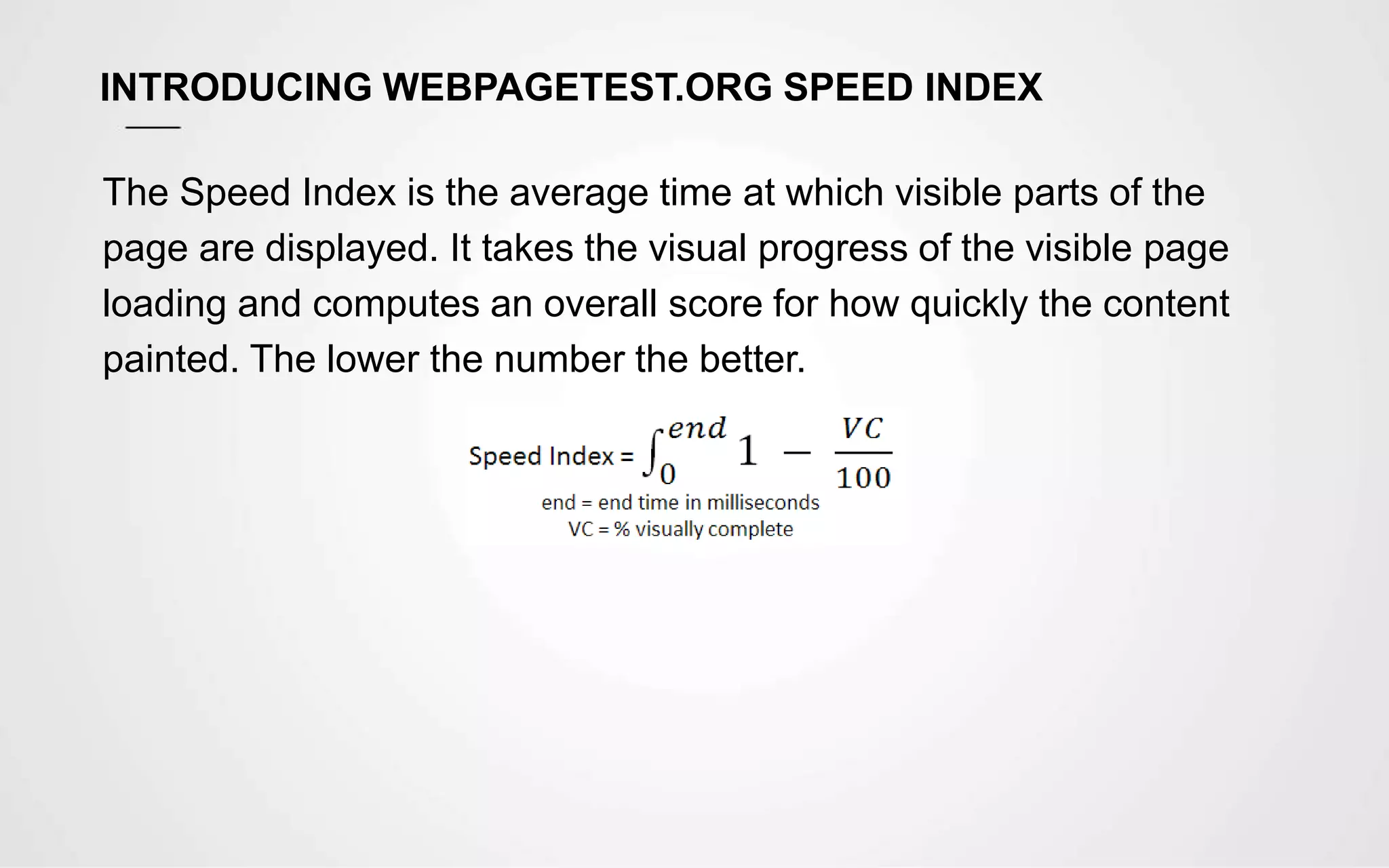

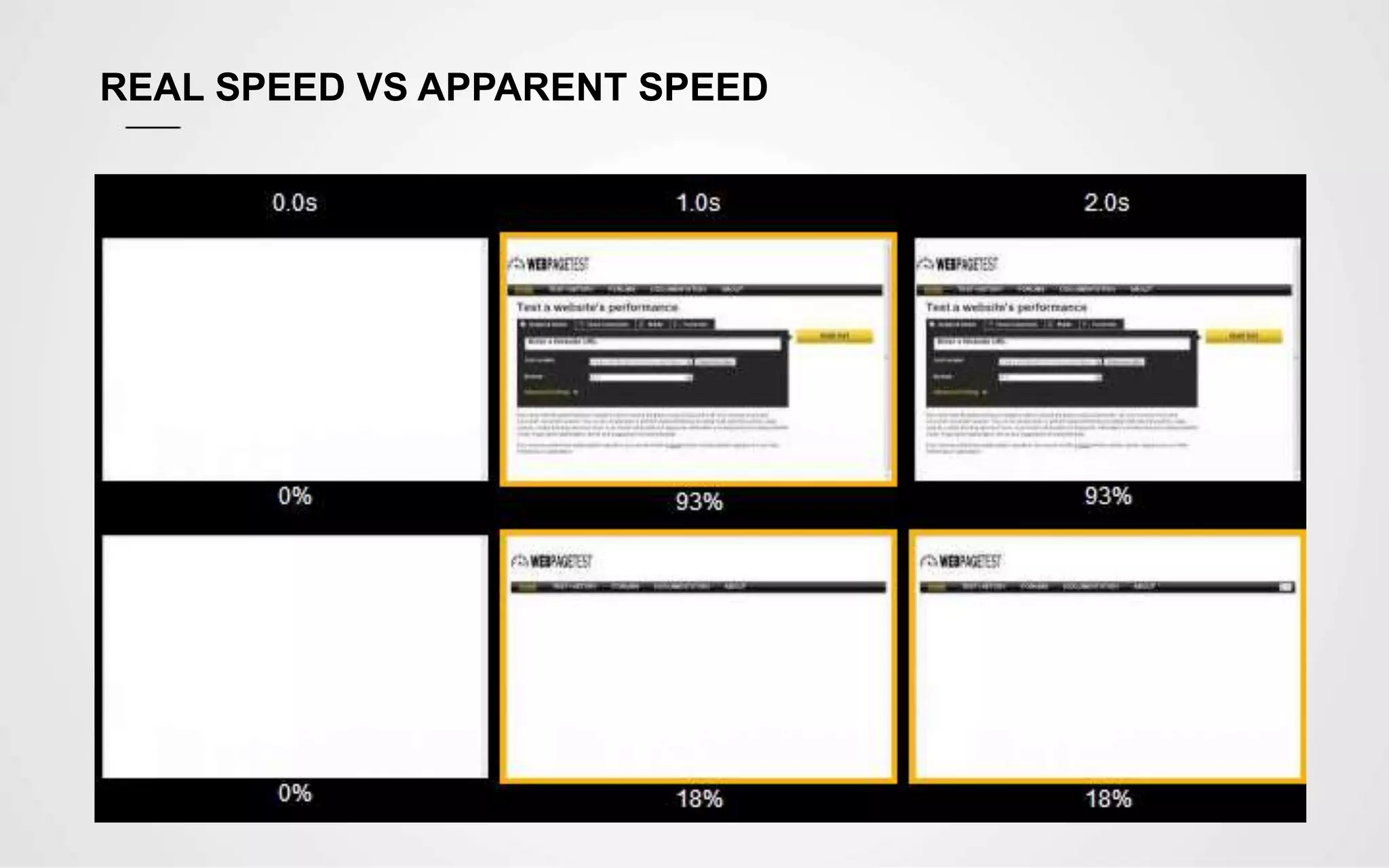

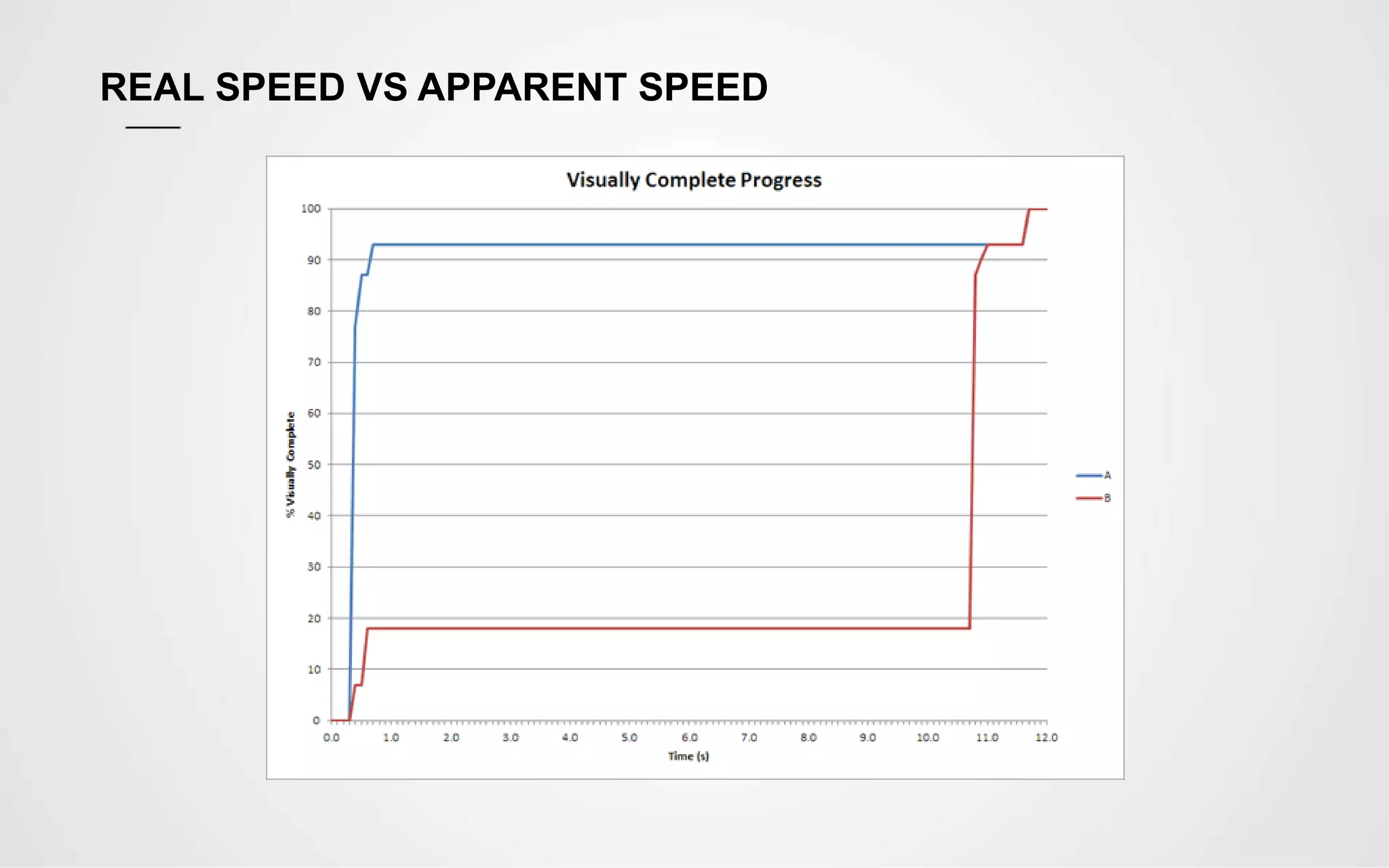

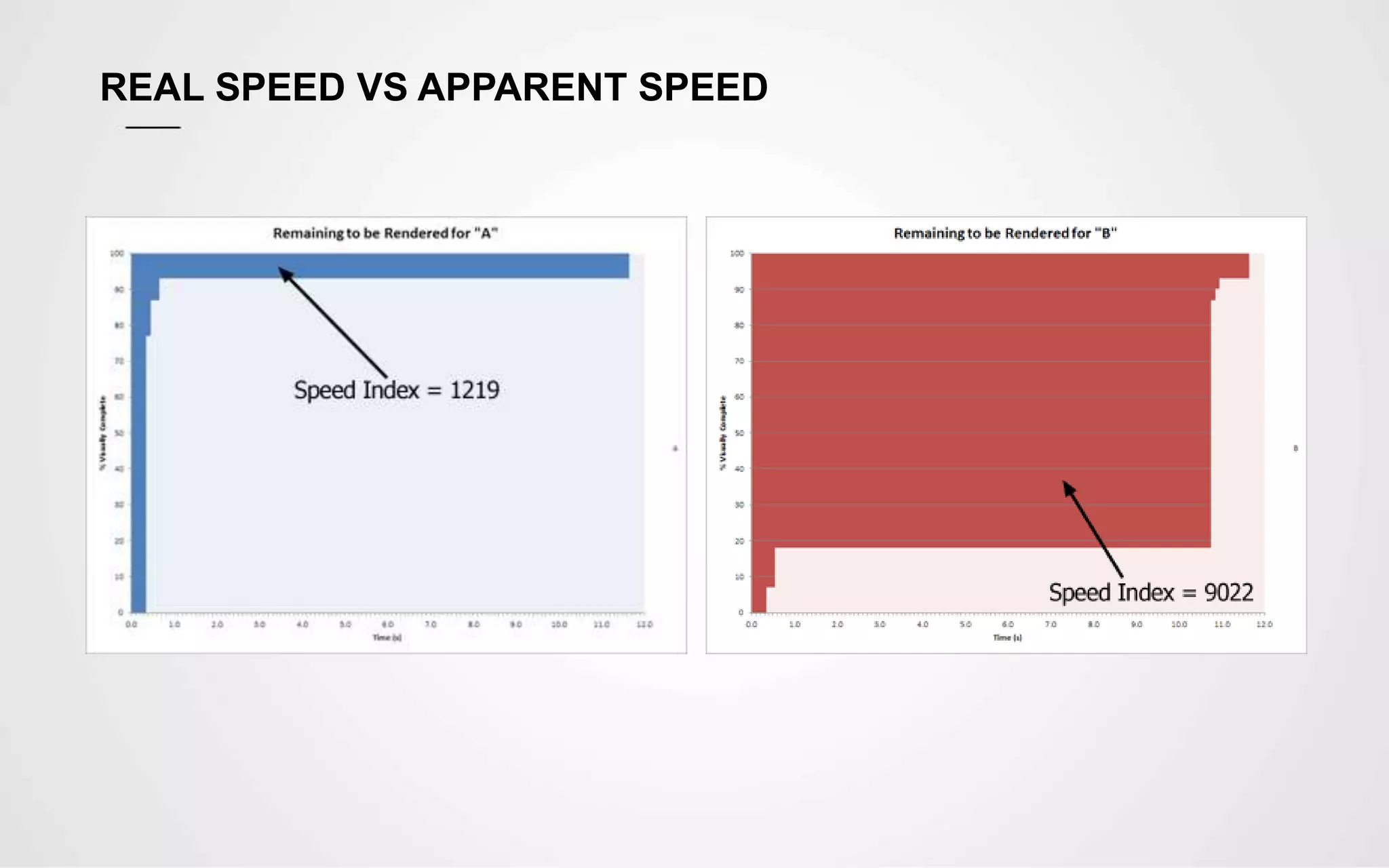

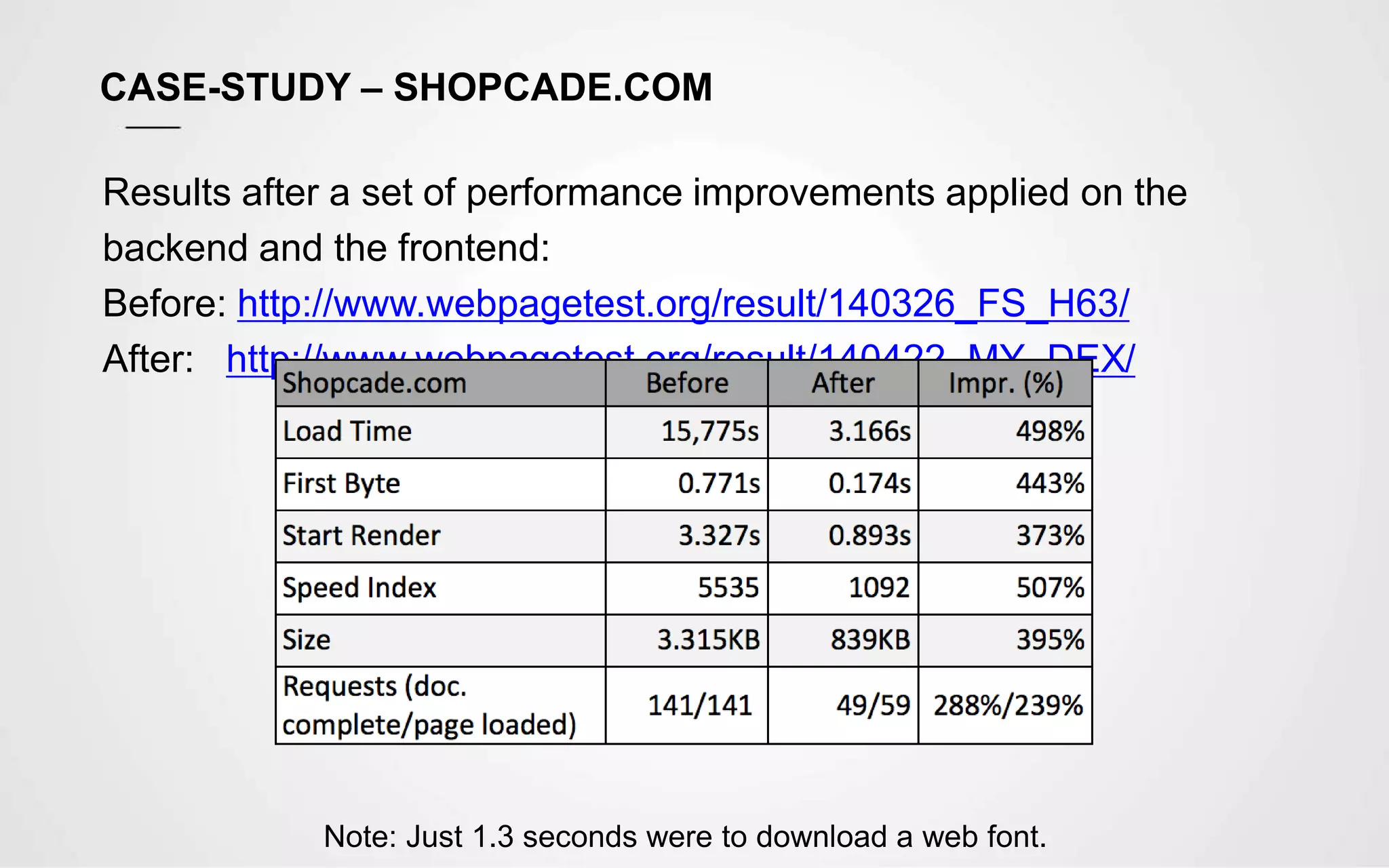

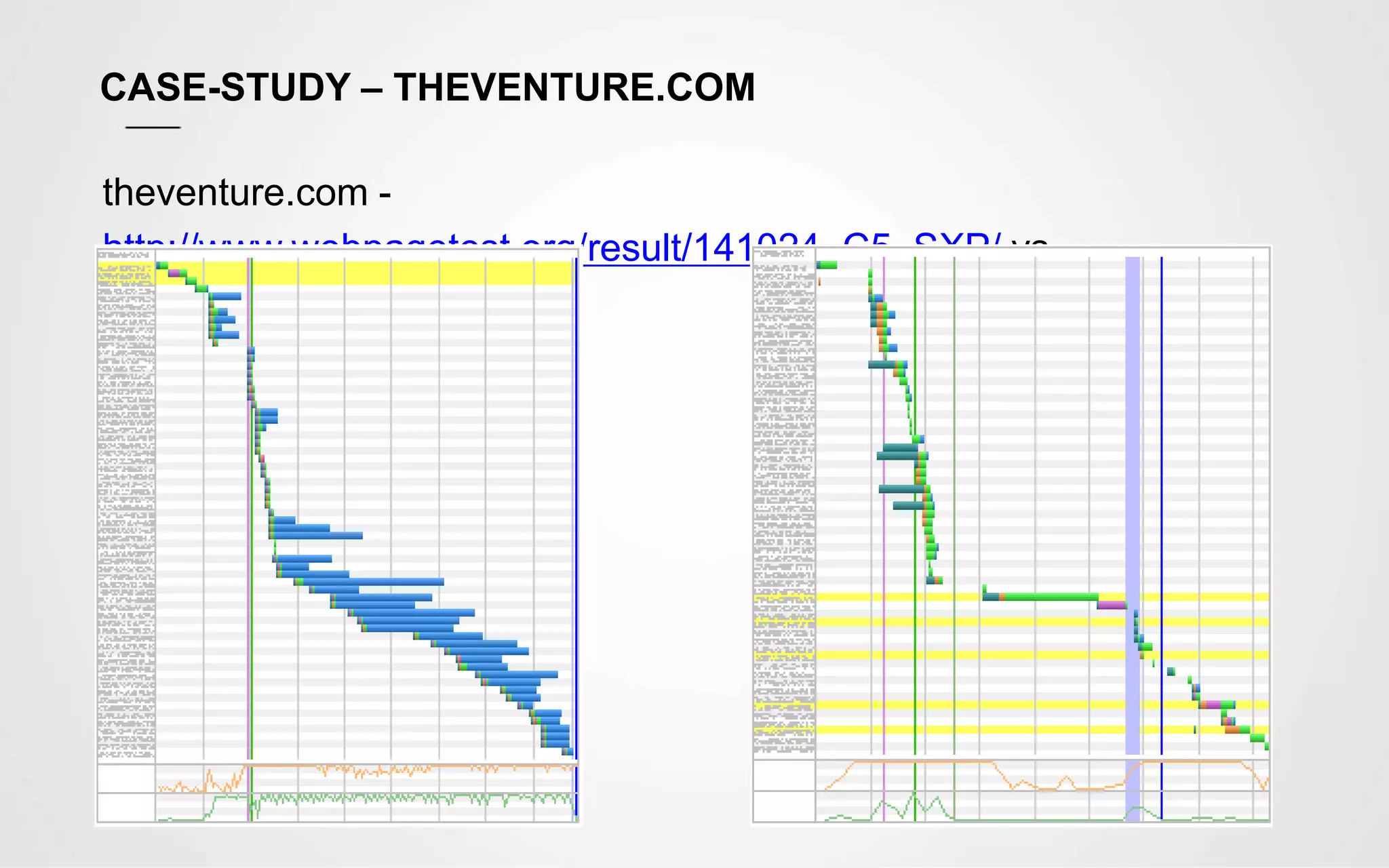

The document discusses website performance and optimization. It notes that nearly half of users expect a site to load within 2 seconds and will abandon one that takes longer than 3 seconds. The common causes of poor performance it identifies are bloated templates, unnecessary code, and too many HTTP requests. The suggested optimizations are minimizing assets, prioritizing visible content, optimizing images, caching, compression, and lazy loading. Case studies show significant speed improvements after these optimizations were applied. Metrics such as Speed Index measure how quickly the visible content of a page is displayed, which makes them a proxy for perceived performance rather than total load time.
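To make the Speed Index idea concrete, here is a minimal sketch of how such a score can be computed. It assumes the commonly documented definition: the area above the visual-completeness curve, i.e. the sum over time of the fraction of the viewport that is still not painted. The function name `speed_index` and the two sample timelines are hypothetical and are not taken from the document's case studies.

```python
from typing import Sequence, Tuple

# Each sample is (time in ms, visual completeness in percent, 0..100).
Sample = Tuple[int, int]


def speed_index(samples: Sequence[Sample]) -> float:
    """Approximate Speed Index by step-wise integration.

    Assumes samples are sorted by time, start at t=0, and end at 100%.
    Each interval is weighted by the share of the viewport that is still
    incomplete at the start of that interval. Lower is better.
    """
    total = 0.0
    for (t0, vc0), (t1, _) in zip(samples, samples[1:]):
        total += (t1 - t0) * (100 - vc0) / 100
    return total


# Hypothetical pages: both are fully painted at 2000 ms, but the first
# shows most of its visible content after only 500 ms.
early_paint = [(0, 0), (500, 80), (2000, 100)]
late_paint = [(0, 0), (1500, 20), (2000, 100)]

print(speed_index(early_paint))  # 800.0
print(speed_index(late_paint))   # 1900.0
```

Both hypothetical pages finish at the same moment, yet the one that paints most of its visible content early gets a much lower score. This is why prioritizing visible content and lazy loading below-the-fold assets can improve perceived performance even when total load time is unchanged.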