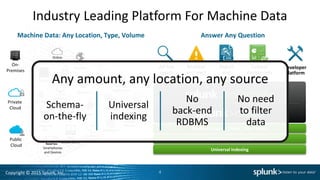

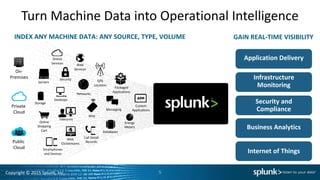

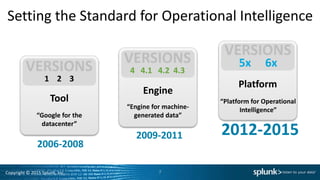

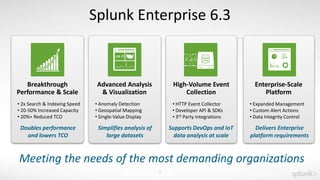

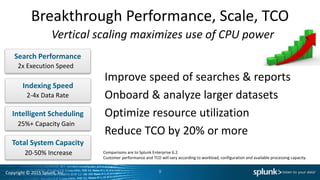

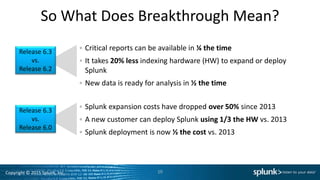

This document summarizes a presentation on the new features of Splunk Enterprise 6.3. The presentation highlights performance and scale improvements: doubled search and indexing speed, a 20-50% increase in capacity, and a reduction in total cost of ownership of more than 20%. It also covers new capabilities for advanced analysis and visualization, high-volume event collection, and enterprise-scale requirements. Its aim is to show that Splunk Enterprise 6.3 delivers significant performance gains at lower cost than previous versions.