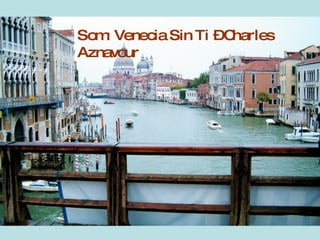

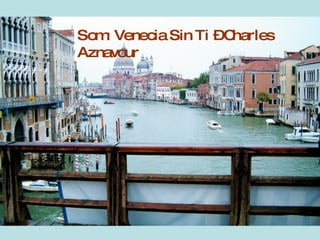

This document gives a brief overview of cultural aspects of Venice, Italy. It mentions the song "Venice Without You" by Charles Aznavour, the Venice Carnival, and Murano glass craftsmanship.