Unsupervised learning algorithms learn without an external teacher by discovering patterns, such as clusters and correlations, in the input data. There are several types of unsupervised learning, including competitive learning networks, Kohonen self-organizing maps, and Hebbian learning. In competitive learning, neurons compete to be activated by an input pattern, and only the winning neuron updates its weights. Kohonen self-organizing maps perform a topology-preserving mapping: they place input patterns on a lower-dimensional (typically one- or two-dimensional) output layer so that similar inputs activate neighboring output units. Hebbian learning is based on Hebb's rule: the connection between two neurons that are frequently active together is strengthened.
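
The competitive and Hebbian rules described above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation. It assumes squared Euclidean distance for picking the winner and the plain, unnormalized Hebbian rule $\Delta w_i = \eta\, x_i\, y$ for a single linear neuron. The function names are hypothetical.

```python
# Minimal sketch of two unsupervised update rules; function names are illustrative.


def squared_distance(x, w):
    """Squared Euclidean distance between input x and weight vector w."""
    return sum((xi - wi) ** 2 for xi, wi in zip(x, w))


def competitive_step(weights, x, eta):
    """Winner-take-all update: only the unit closest to x moves towards x.

    `weights` is a list of weight vectors, modified in place.
    Returns the index of the winning unit.
    """
    winner = min(range(len(weights)), key=lambda j: squared_distance(x, weights[j]))
    weights[winner] = [w + eta * (xi - w) for w, xi in zip(weights[winner], x)]
    return winner


def hebbian_step(w, x, eta):
    """Plain Hebbian update for one linear neuron: w_i += eta * x_i * y, with y = w . x."""
    y = sum(wi * xi for wi, xi in zip(w, x))
    return [wi + eta * xi * y for wi, xi in zip(w, x)]


units = [[0.0, 0.0], [1.0, 1.0]]
print(competitive_step(units, [0.9, 0.8], 0.5), units)
print(hebbian_step([0.5, 0.5], [1.0, 0.0], 0.1))
```

With weight vectors [0, 0] and [1, 1], the input [0.9, 0.8] and η = 0.5, `competitive_step` picks unit 1 and moves it halfway towards the input, to [0.95, 0.9]. The plain Hebbian rule only ever reinforces co-activity, so its weights can grow without bound. Normalized variants such as Oja's rule address this.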

![Problem

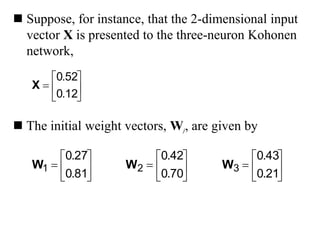

• Construct a kohenen self-organizing map

to cluster the four given vectors [0 0 1 1],

[1 0 0 0], [0 1 1 0],[0 0 01]. The number of

cluster to be formed is two. Initial learning

rate is 0.5

• Initial weights =

3

.

0

8

.

0

5

.

0

6

.

0

7

.

0

4

.

0

9

.

0

2

.

0](https://image.slidesharecdn.com/unsupervised-learning-230622154134-c297cf57/85/Unsupervised-learning-ppt-33-320.jpg)

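![Solution to the problem](https://image.slidesharecdn.com/unsupervised-learning-230622154134-c297cf57/85/Unsupervised-learning-ppt-34-320.jpg)

Each input vector is presented in turn. The winner $J$ is the cluster unit with the smallest squared Euclidean distance $D(j) = \sum_i (x_i - w_{ij})^2$. Only the winner's weights are updated, using $w_J(\text{new}) = w_J(\text{old}) + 0.5\,[x - w_J(\text{old})]$.

| Input vector | D(1) | D(2) | Winner | Updated weights of the winner |
|---|---|---|---|---|
| [0 0 1 1] | 0.40 | 2.04 | 1 | [0.1 0.2 0.8 0.9] |
| [1 0 0 0] | 2.30 | 0.84 | 2 | [0.95 0.35 0.25 0.15] |
| [0 1 1 0] | 1.50 | 1.91 | 1 | [0.05 0.6 0.9 0.45] |
| [0 0 0 1] | 1.475 | 1.81 | 1 | [0.025 0.3 0.45 0.725] |

After the first epoch, cluster unit 1 has won [0 0 1 1], [0 1 1 0] and [0 0 0 1], while unit 2 has won [1 0 0 0].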
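
The epoch in the table can be reproduced with a short script. It is a sketch that assumes a neighbourhood radius of zero, as the solution implies, so only the winning unit is updated. The helper names `distances` and `som_epoch` are illustrative.

```python
# Reproduce epoch 1 of the Kohonen example (neighbourhood radius assumed to be 0).
inputs = [
    [0, 0, 1, 1],
    [1, 0, 0, 0],
    [0, 1, 1, 0],
    [0, 0, 0, 1],
]

# Columns of the initial weight matrix W: one weight vector per cluster unit.
weights = [
    [0.2, 0.4, 0.6, 0.8],  # cluster unit 1
    [0.9, 0.7, 0.5, 0.3],  # cluster unit 2
]
alpha = 0.5  # initial learning rate given in the problem


def distances(x, weights):
    """D(j) = sum_i (x_i - w_ij)^2 for every cluster unit j."""
    return [sum((xi - wi) ** 2 for xi, wi in zip(x, w)) for w in weights]


def som_epoch(inputs, weights, alpha):
    """Present each input once, updating only the winner; weights change in place.

    Returns a list of (winner, [D(1), D(2), ...]) with 1-based winner indices.
    """
    trace = []
    for x in inputs:
        d = distances(x, weights)
        j = d.index(min(d))  # winner = unit with the smallest D(j)
        weights[j] = [w + alpha * (xi - w) for w, xi in zip(weights[j], x)]
        trace.append((j + 1, d))
    return trace


for x, (winner, d) in zip(inputs, som_epoch(inputs, weights, alpha)):
    print(x, "D =", [round(v, 3) for v in d], "-> winner", winner)
print("weights after epoch 1:", [[round(v, 3) for v in w] for w in weights])
```

Running it should report the winners 1, 2, 1, 1. It should leave the weight vectors at [0.025 0.3 0.45 0.725] and [0.95 0.35 0.25 0.15], in agreement with the table.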

![Simulation Example](https://image.slidesharecdn.com/unsupervised-learning-230622154134-c297cf57/85/Unsupervised-learning-ppt-36-320.jpg)

The data employed in the experiment comprised 500 points distributed uniformly over the bipolar square [−1, 1] × [−1, 1]. The points thus describe a geometrically square topology.
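
A setup like this simulation can be approximated with a small two-dimensional map. The slides do not give the lattice size, the number of iterations, or the learning-rate and neighbourhood schedules. The 5 × 5 grid, the Gaussian neighbourhood and the linear decays below are therefore assumptions chosen only for illustration.

```python
import math
import random

# Illustrative SOM on 500 uniform points in [-1, 1] x [-1, 1].
# Grid size, step count and decay schedules are assumptions, not slide values.
random.seed(0)
data = [(random.uniform(-1, 1), random.uniform(-1, 1)) for _ in range(500)]

ROWS, COLS = 5, 5  # assumed 5 x 5 lattice of output units
grid = [(r, c) for r in range(ROWS) for c in range(COLS)]
# Small random initial weights near the centre of the square.
weights = {u: [random.uniform(-0.1, 0.1), random.uniform(-0.1, 0.1)] for u in grid}


def train(steps=5000, eta0=0.5, sigma0=2.0):
    for t in range(steps):
        frac = t / steps
        eta = eta0 * (1 - frac) + 0.01     # learning rate decays linearly to a floor of 0.01
        sigma = sigma0 * (1 - frac) + 0.5  # neighbourhood width shrinks linearly to 0.5
        x = random.choice(data)
        # Best-matching unit: the output unit whose weights are closest to x.
        bmu = min(grid, key=lambda u: (x[0] - weights[u][0]) ** 2 + (x[1] - weights[u][1]) ** 2)
        for u in grid:
            # Gaussian neighbourhood measured on the lattice, not in input space.
            d2 = (u[0] - bmu[0]) ** 2 + (u[1] - bmu[1]) ** 2
            h = math.exp(-d2 / (2 * sigma ** 2))
            w = weights[u]
            w[0] += eta * h * (x[0] - w[0])
            w[1] += eta * h * (x[1] - w[1])


train()
for r in range(ROWS):
    print(["(%.2f, %.2f)" % tuple(weights[(r, c)]) for c in range(COLS)])
```

Printing the weight grid row by row should typically show neighbouring lattice units holding neighbouring points of the square. This is the topology preservation that the simulation is meant to demonstrate.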

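![Flowchart: LVQ training](https://image.slidesharecdn.com/unsupervised-learning-230622154134-c297cf57/85/Unsupervised-learning-ppt-56-320.jpg)

The flowchart for training an LVQ net proceeds as follows:

1. Start: initialize the weight (reference) vectors and the learning rate $\eta$.
2. For each input vector $x$, read its target class $T$ and calculate the winner $J$, the output unit with the minimum distance $D(j)$.
3. If $T = C_J$ (the winner's class), move the winner towards the input: $w_J(\text{new}) = w_J(\text{old}) + \eta\,[x - w_J(\text{old})]$.
4. Otherwise, move the winner away from the input: $w_J(\text{new}) = w_J(\text{old}) - \eta\,[x - w_J(\text{old})]$.
5. Once the inputs have been presented, reduce the learning rate: $\eta(t+1) = 0.5\,\eta(t)$.
6. If $\eta$ has become negligible, stop. Otherwise, return to step 2.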
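
The flowchart translates into the training loop below. It is a sketch under stated assumptions:

- the learning rate is halved once per pass over the data;
- "negligible" is taken to mean below a hypothetical threshold `min_eta`;
- $D(j)$ is the squared Euclidean distance.

The names `train_lvq`, `nearest` and `classify` are illustrative.

```python
def squared_distance(x, w):
    """D(j): squared Euclidean distance between input x and reference vector w."""
    return sum((xi - wi) ** 2 for xi, wi in zip(x, w))


def nearest(x, refs):
    """Index J of the reference vector closest to x (the winner)."""
    return min(range(len(refs)), key=lambda j: squared_distance(x, refs[j]))


def train_lvq(samples, refs, ref_classes, eta=0.1, min_eta=1e-3):
    """Train reference vectors following the flowchart (LVQ1-style updates).

    samples: list of (x, T) pairs, an input vector and its target class
    refs: initial reference vectors w_j (copied; a new list is returned)
    ref_classes: class C_j represented by each reference vector
    min_eta: hypothetical threshold standing in for "negligible"
    """
    refs = [list(map(float, w)) for w in refs]
    while eta >= min_eta:
        for x, target in samples:
            j = nearest(x, refs)
            # T == C_J: move the winner towards x; otherwise move it away.
            sign = 1 if target == ref_classes[j] else -1
            refs[j] = [w + sign * eta * (xi - w) for w, xi in zip(refs[j], x)]
        eta *= 0.5  # eta(t+1) = 0.5 * eta(t), once per pass over the data
    return refs


def classify(x, refs, ref_classes):
    """Label x with the class of its nearest reference vector."""
    return ref_classes[nearest(x, refs)]


trained = train_lvq([([0.1, 0.0], 1), ([0.9, 1.0], 2)], [[0.0, 0.0], [1.0, 1.0]], [1, 2])
print(trained)
print(classify([0.0, 0.1], trained, [1, 2]), classify([1.0, 0.9], trained, [1, 2]))
```

In this toy run, each reference vector is pulled towards the sample of its own class. `classify` then labels nearby points accordingly.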

![Problem](https://image.slidesharecdn.com/unsupervised-learning-230622154134-c297cf57/85/Unsupervised-learning-ppt-57-320.jpg)

• Construct and test an LVQ net with five vectors assigned to two classes. The given vectors and their classes are shown in the table below.

| Vector | Class |
|---|---|
| [0 0 1 1] | 1 |
| [1 0 0 0] | 2 |
| [0 0 0 1] | 2 |
| [1 1 0 0] | 1 |
| [0 1 1 0] | 1 |
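
The problem does not specify initial weights or a learning rate, so the run below rests on these hypothetical settings:

- each class's reference vector is initialized with the first table vector of that class: [0 0 1 1] for class 1 and [1 0 0 0] for class 2;
- the remaining three vectors are used for training, with $\eta = 0.1$;
- $\eta$ is halved after each epoch until it falls below 0.001.

None of these settings comes from the slides.

```python
# Hypothetical LVQ setup for the five-vector problem; the initial reference
# vectors, learning rate and stopping threshold are assumptions, not slide values.
data = [
    ([0, 0, 1, 1], 1),
    ([1, 0, 0, 0], 2),
    ([0, 0, 0, 1], 2),
    ([1, 1, 0, 0], 1),
    ([0, 1, 1, 0], 1),
]

# Seed one reference vector per class with the first vector of that class.
refs = [[float(v) for v in data[0][0]], [float(v) for v in data[1][0]]]
ref_classes = [1, 2]
training_set = data[2:]  # the remaining three vectors are used for training


def winner(x):
    """Index J of the reference vector with the smallest squared distance D(j)."""
    return min(range(len(refs)), key=lambda j: sum((a - w) ** 2 for a, w in zip(x, refs[j])))


eta = 0.1  # assumed initial learning rate
while eta >= 1e-3:  # assumed threshold for "negligible"
    for x, target in training_set:
        j = winner(x)
        sign = 1 if ref_classes[j] == target else -1  # attract on a match, repel otherwise
        refs[j] = [w + sign * eta * (a - w) for w, a in zip(refs[j], x)]
    eta *= 0.5  # eta(t+1) = 0.5 * eta(t)

# Test phase: classify every vector in the table with the trained references.
predictions = [ref_classes[winner(x)] for x, _ in data]
print("reference vectors:", [[round(w, 3) for w in r] for r in refs])
print("predicted classes:", predictions)
```

Under these assumptions, the first epoch moves the reference vectors to [0 0.1 1.09 0.9] and [1 −0.1 0 0]. The test phase then labels [0 0 1 1], [1 0 0 0] and [0 1 1 0] correctly, but assigns [0 0 0 1] to class 1 and [1 1 0 0] to class 2. With only one reference vector per class, those two training vectors are always won by the other class's reference vector. That vector is pushed away, but the correct one is never pulled closer. Using more reference vectors per class may avoid this.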