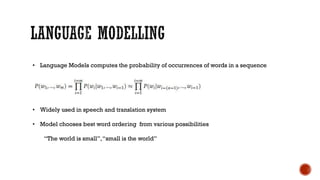

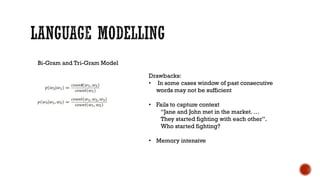

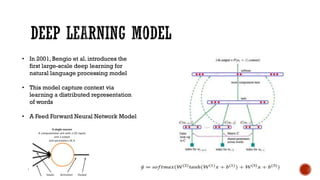

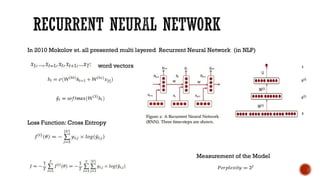

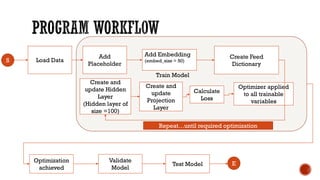

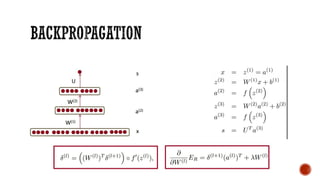

Sanjib Basak is the director of data science at Digital River and has over 15 years of experience in analytics. He is fascinated by machine learning and deep learning and has been experimenting with TensorFlow for six to seven months. In his presentation, he introduces TensorFlow and builds basic models with it, including language models and RNNs. He also discusses the advantages of TensorFlow over plain Python/NumPy and takes questions at the end.