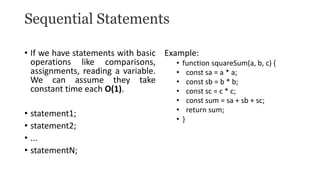

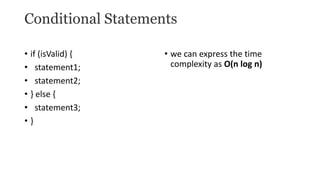

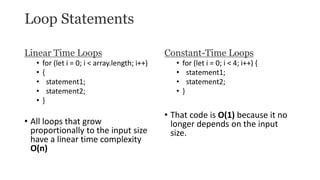

The document discusses methods for analyzing the time complexity of algorithms. It describes the step count method, in which the number of times each instruction executes is counted. Common statements such as loops and conditionals are analyzed. For example, the condition of a for loop that iterates n times is tested n + 1 times (n successful tests plus the final failing one), while two nested loops that each run n times execute the inner body n^2 times. Time complexities such as constant, linear, logarithmic, and exponential are defined. The document illustrates the method on the linear search algorithm, which runs in O(n) time.
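
As a rough illustration of the loop counts mentioned in the summary, the sketch below instruments a single loop and a pair of nested loops with counters. The helper names `countSingleLoop` and `countNestedLoops` are hypothetical and do not appear in the slides.

```javascript
// Hypothetical helper: counts how often the loop condition is tested
// and how often the loop body runs for a single loop over n items.
function countSingleLoop(n) {
  let conditionTests = 0;
  let bodyRuns = 0;
  // The comma expression bumps the counter, then evaluates i < n.
  for (let i = 0; (conditionTests++, i < n); i++) {
    bodyRuns++;
  }
  return { conditionTests, bodyRuns };
}

// Hypothetical helper: counts inner-body executions of two nested loops.
function countNestedLoops(n) {
  let bodyRuns = 0;
  for (let i = 0; i < n; i++) {
    for (let j = 0; j < n; j++) {
      bodyRuns++;
    }
  }
  return bodyRuns;
}

console.log(countSingleLoop(5)); // { conditionTests: 6, bodyRuns: 5 }
console.log(countNestedLoops(5)); // 25
```

For n = 5 the condition is tested 6 times (n + 1) while the body runs 5 times, and the nested loops run their inner body 25 times (n^2).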

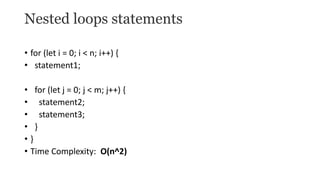

![Illustration of Step Count Method:

• Analysis of Linear Search algorithm

• Let us consider a Linear Search Algorithm.

• Linearsearch(arr, n, key)

• {

• i = 0;

• for(i = 0; i < n; i++)

• {

• if(arr[i] == key)

• {

• printf("Found");

• }

• }

• }](https://image.slidesharecdn.com/stepcountmethodfortimecomplexityanalysis-240205051900-25214717/85/Step-Count-Method-for-Time-Complexity-Analysis-pptx-8-320.jpg)

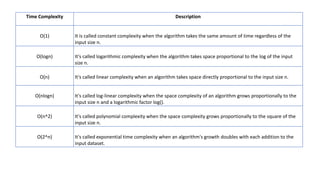

![(Cont..)

• Where,

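• i = 0 is an initialization statement; it executes once and takes O(1) time.

• for(i = 0; i < n; i++) is a loop; its condition is tested n + 1 times, which is O(n).

• if(arr[i] == key) is a conditional statement; each evaluation takes O(1) time, and it is evaluated once per iteration, n times in total.

• printf("Found") is a function call that takes O(1) time per call; it runs once for each matching element, so anywhere from 0 to n times.

• Therefore the total number of steps is at most 1 + (n + 1) + n + n = 3n + 2. Because we ignore constant factors and lower-order terms in time complexity, the total time is O(n).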

• Time complexity: O(n).

Auxiliary Space: O(1)](https://image.slidesharecdn.com/stepcountmethodfortimecomplexityanalysis-240205051900-25214717/85/Step-Count-Method-for-Time-Complexity-Analysis-pptx-9-320.jpg)
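
To make the step count concrete, here is a minimal sketch that tallies how often each statement of the linear search runs. It is written in JavaScript rather than the C-style pseudocode of the slide, and the function name `linearSearchSteps` and its counter fields are assumptions introduced for illustration.

```javascript
// Hypothetical instrumented version of the linear search from the slides.
// It returns how many times each kind of statement executed.
function linearSearchSteps(arr, key) {
  const counts = { init: 0, loopTests: 0, comparisons: 0, prints: 0 };
  let i = 0;
  counts.init++; // i = 0 runs once
  for (i = 0; (counts.loopTests++, i < arr.length); i++) {
    counts.comparisons++; // if (arr[i] == key) runs once per iteration
    if (arr[i] === key) {
      counts.prints++; // stands in for printf("Found")
    }
  }
  counts.total =
    counts.init + counts.loopTests + counts.comparisons + counts.prints;
  return counts;
}

const result = linearSearchSteps([4, 8, 15, 16, 23, 42], 16);
console.log(result.loopTests, result.comparisons, result.prints, result.total);
// prints: 7 6 1 15
```

With n = 6 and one match, the tally is 1 + 7 + 6 + 1 = 15 steps. The count grows linearly with n, which is consistent with the O(n) bound.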

![Logarithmic Time Loops

• Consider the following code, where the search range within a sorted array is halved on each iteration (binary search)

• function fn1(array, target, low = 0, high = array.length - 1) {

• let mid;

• while ( low <= high ) {

• mid = Math.floor(( low + high ) / 2);

• if ( target < array[mid] )

• high = mid - 1;

• else if ( target > array[mid] )

• low = mid + 1;

• else return mid; // target found at index mid

• }

• return -1; // target is not in the array

• }

• Time complexity: O(log(n))](https://image.slidesharecdn.com/stepcountmethodfortimecomplexityanalysis-240205051900-25214717/85/Step-Count-Method-for-Time-Complexity-Analysis-pptx-14-320.jpg)
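
One way to see the logarithmic growth is to count loop iterations for inputs of increasing size. The sketch below is an assumption-laden illustration, not the slide's code: the name `binarySearchIterations` is hypothetical, the input must already be sorted, and the function returns -1 when the target is absent.

```javascript
// Hypothetical helper: binary search over a sorted array that also
// reports how many loop iterations it needed.
function binarySearchIterations(sorted, target) {
  let low = 0;
  let high = sorted.length - 1;
  let iterations = 0;
  while (low <= high) {
    iterations++;
    const mid = Math.floor((low + high) / 2); // integer midpoint
    if (target < sorted[mid]) high = mid - 1;
    else if (target > sorted[mid]) low = mid + 1;
    else return { index: mid, iterations };
  }
  return { index: -1, iterations }; // target not present
}

// Search for a value larger than every element (an unsuccessful search).
for (const n of [8, 1024, 1048576]) {
  const sorted = Array.from({ length: n }, (_, i) => i);
  const { iterations } = binarySearchIterations(sorted, n);
  console.log(n, iterations);
}
// prints:
// 8 4
// 1024 11
// 1048576 21
```

Each time n is squared the iteration count only roughly doubles, and for these powers of two it equals log2(n) + 1, in line with the O(log(n)) bound.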