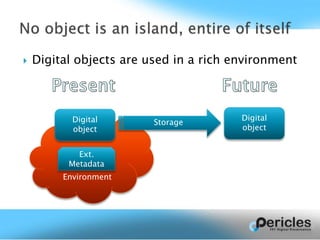

This document summarizes a presentation about PET, an open source tool for collecting digital object environments and supporting digital preservation. PET monitors digital object use throughout their lifecycle to collect information about objects and their relationships, called semantic environment information (SEI). It analyzes this data to create a weighted graph of inter-object relationships. This graph can help understand relationships and support appraisal/collection definition. The document provides examples of how PET could track an operator troubleshooting an anomaly by recording relevant environment information and documentation. It invites the audience to consider other useful applications and get involved in the open source project.

![GRANT AGREEMENT: 601138 | SCHEME FP7 ICT 2011.4.3

Promoting and Enhancing Reuse of Information throughout the Content Lifecycle taking account of Evolving

Semantics [Digital Preservation]

Fabio Corubolo, University of Liverpool

11 February, IDCC 2015, London](https://image.slidesharecdn.com/idccpetintro-150217112517-conversion-gate01/85/Slides-for-IDCC-PET-presentation-1-320.jpg)