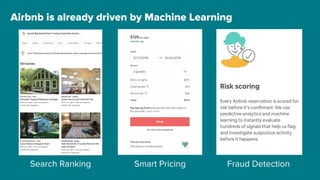

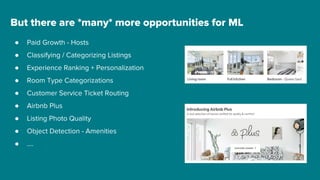

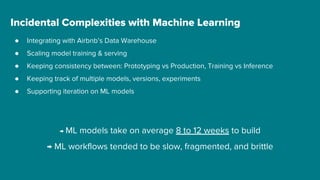

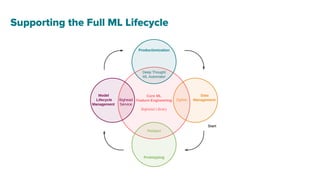

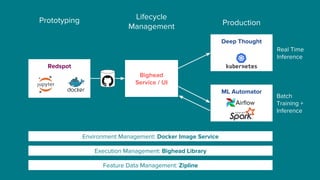

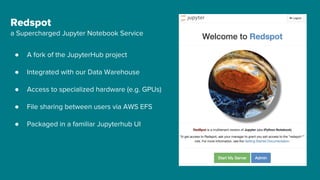

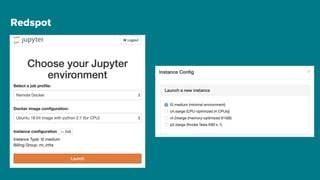

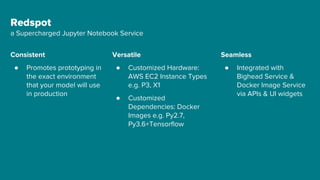

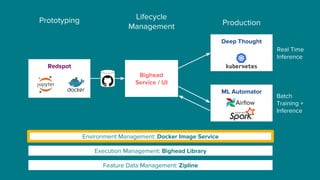

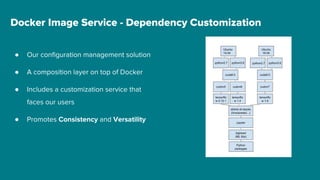

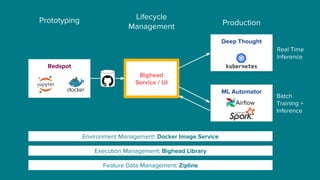

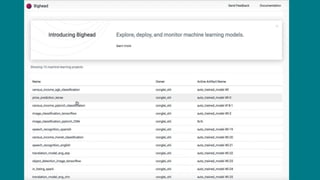

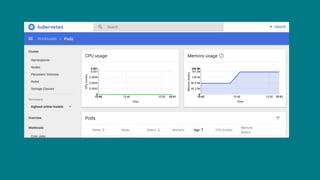

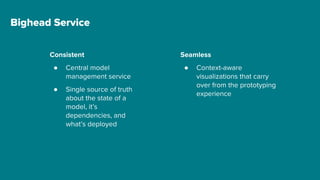

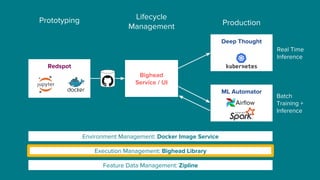

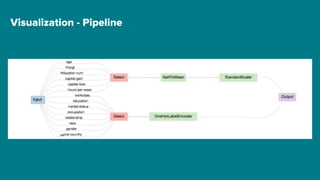

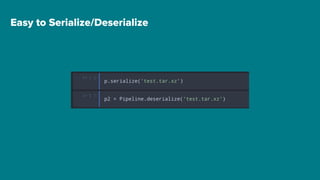

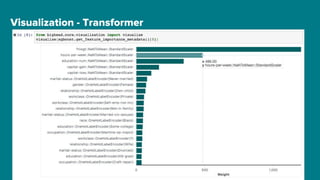

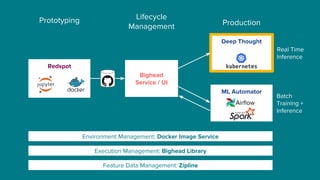

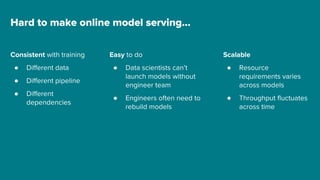

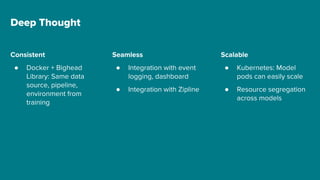

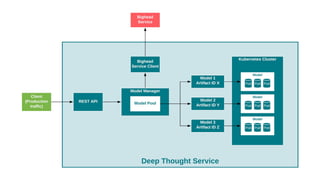

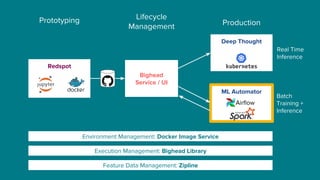

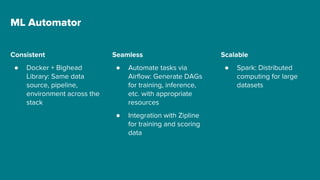

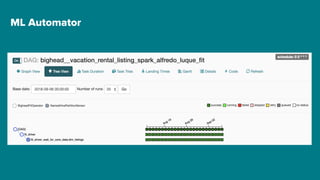

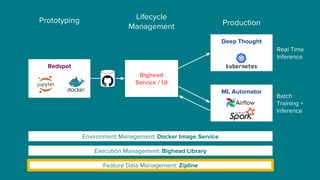

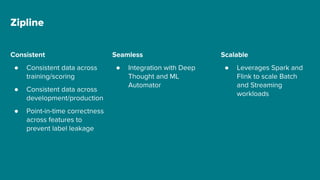

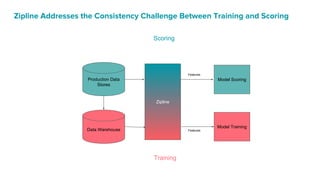

This document describes Airbnb's machine learning infrastructure, which is designed to support a range of ML applications and streamline model development. It outlines the architecture and its key components, including Bighead, Redspot, and Zipline, with an emphasis on scalability, consistency, and ease of use when deploying models. The infrastructure aims to improve ML workflows by automating routine tasks, managing the model lifecycle, and integrating multiple data management frameworks.