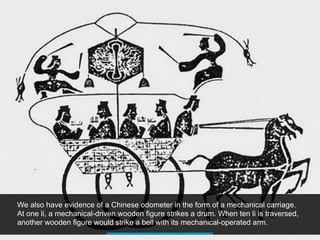

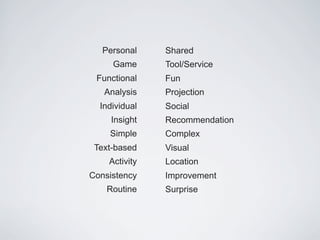

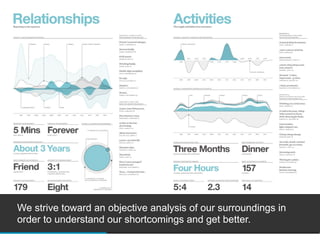

1. People have long been fascinated by understanding themselves through objective analysis of their surroundings, and tracking tools help provide insights into their activities, their behaviors, and opportunities for improvement.

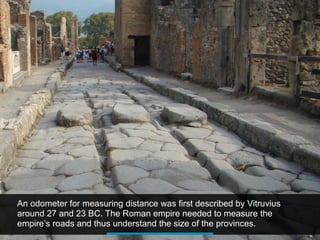

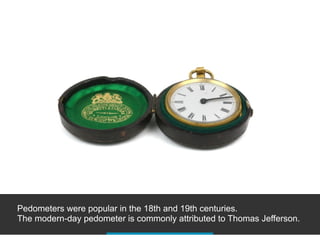

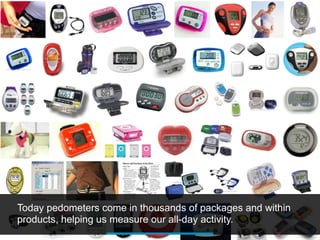

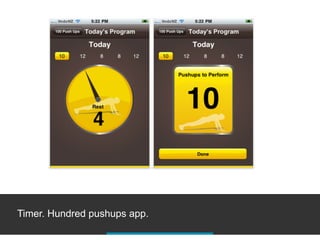

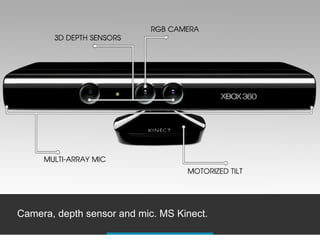

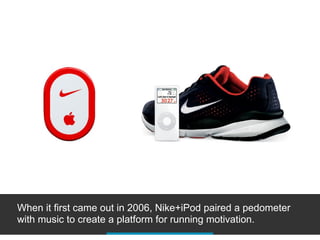

2. Tracking technologies have evolved from simple devices such as pedometers to devices with more advanced sensors that can track a variety of daily activities, both active and inactive.

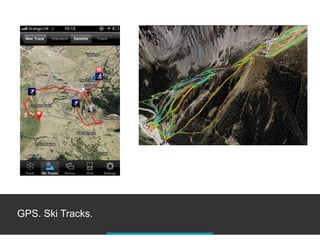

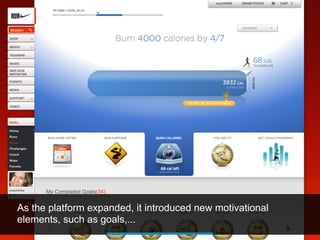

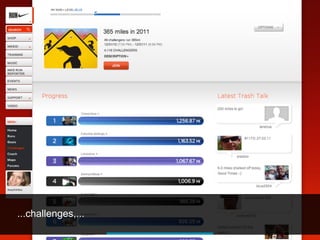

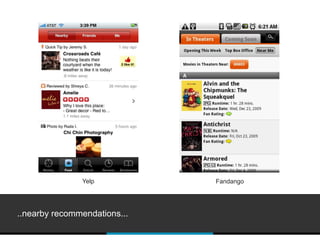

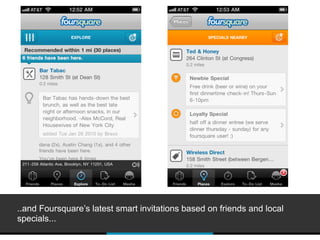

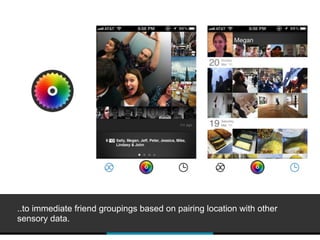

3. Emerging areas of focus include location-based tracking and combining multiple sensor data streams to gain a more holistic view of behavior.