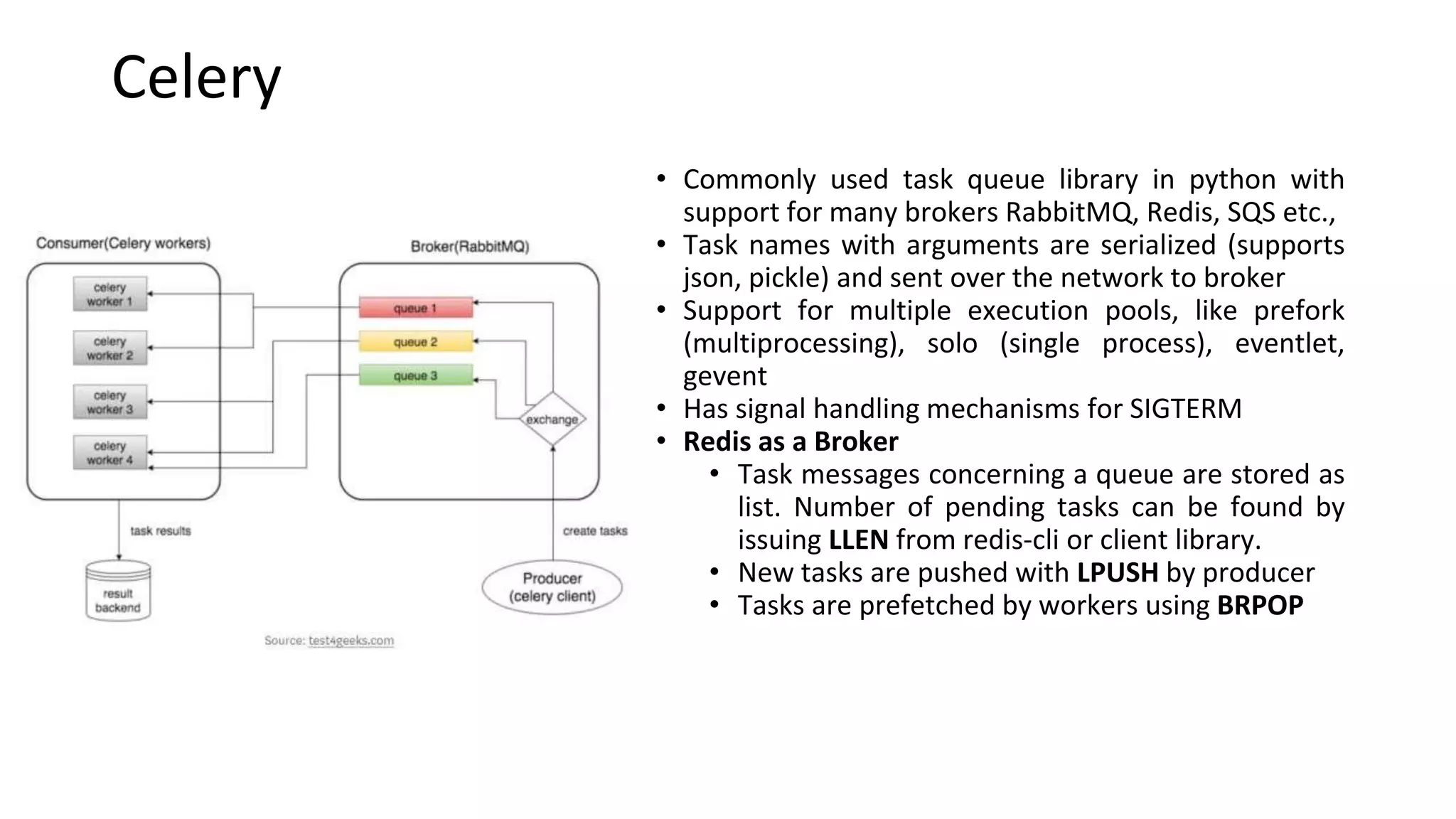

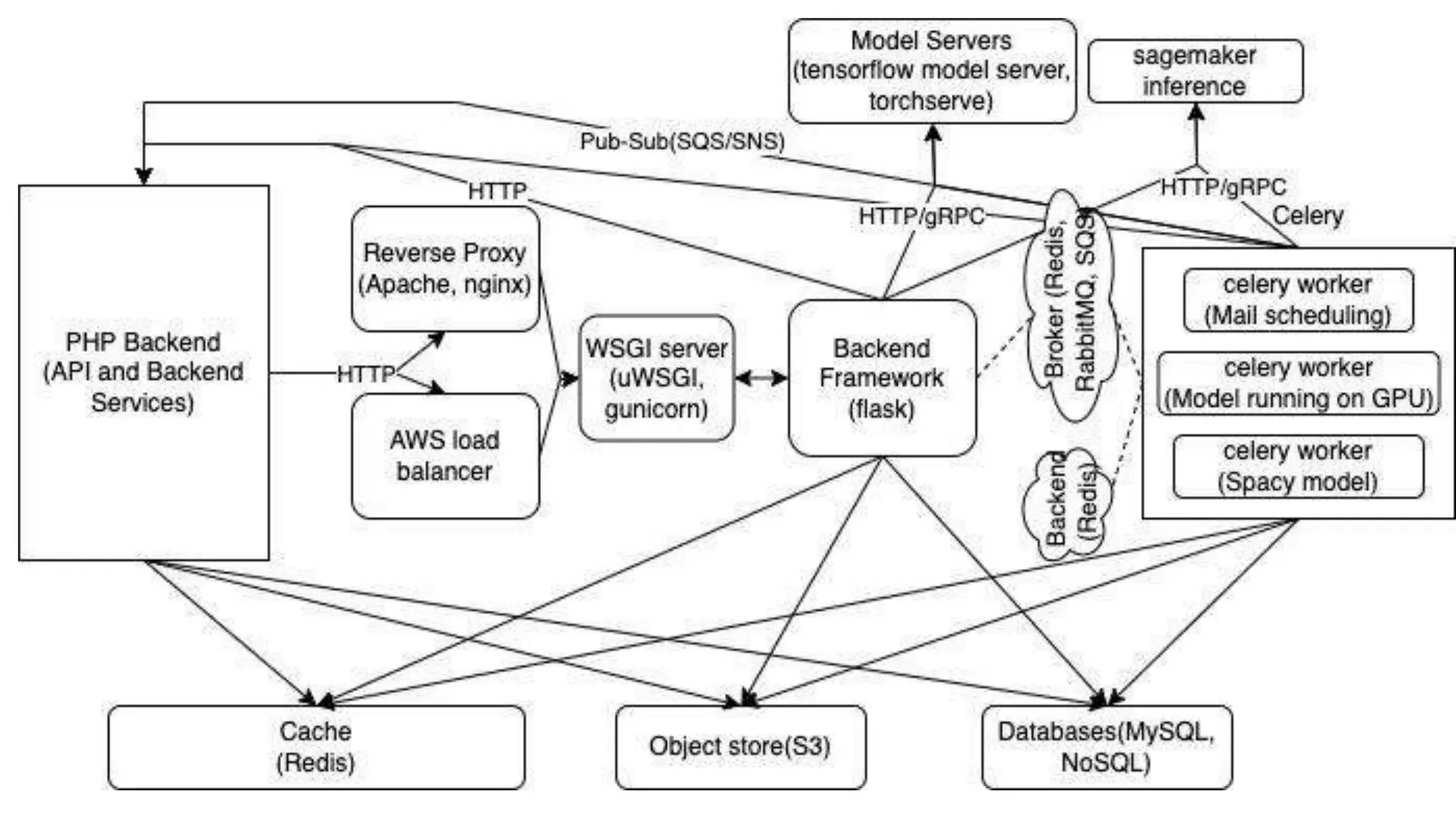

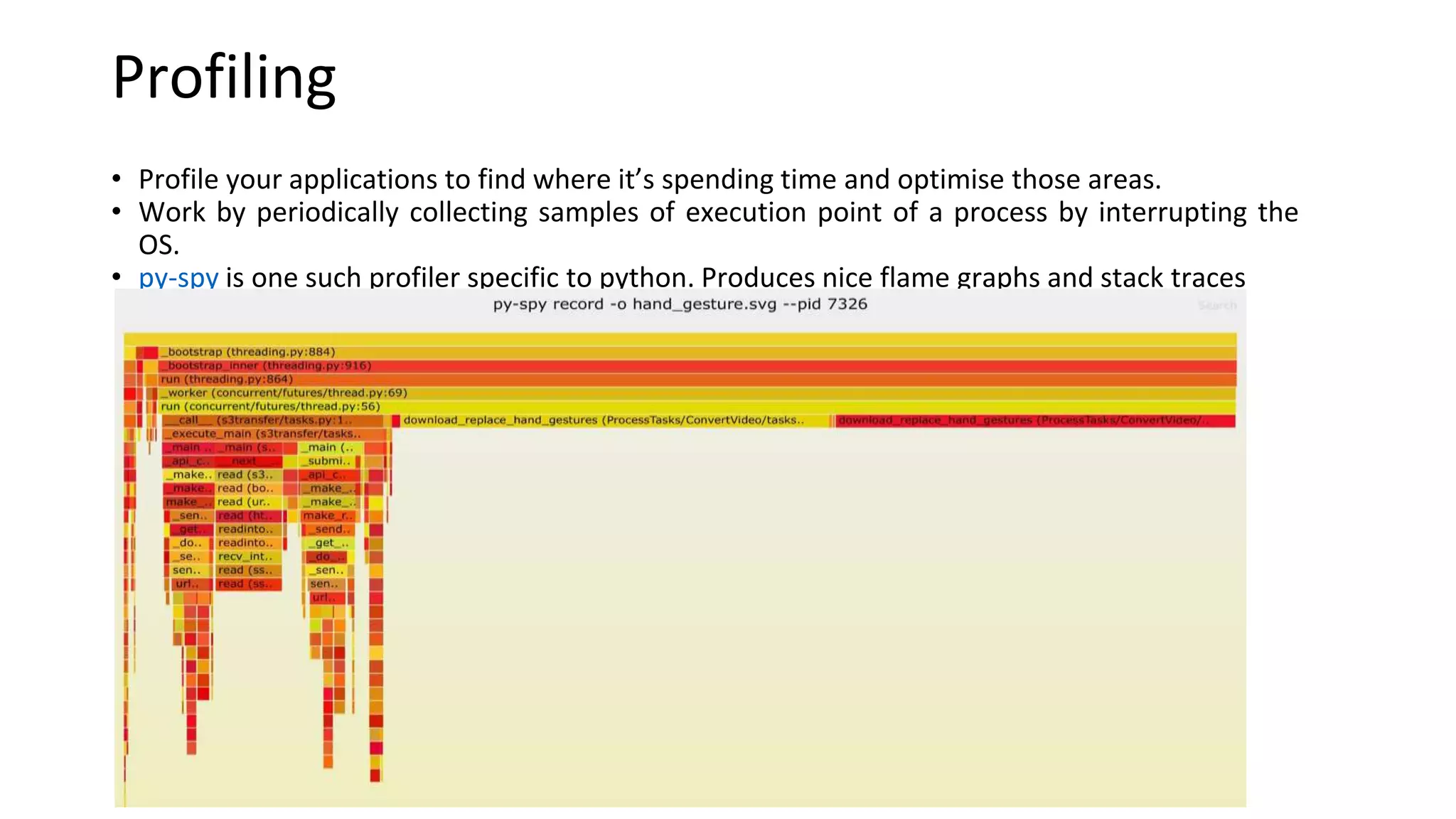

This document covers Python web application development. It surveys packages commonly used alongside Flask, including SQLAlchemy, Celery, and TensorFlow Model Server, and gives best practices for Flask, Celery, and Docker deployment. It also covers profiling Python applications and handling signals in Docker containers.
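
To make the signal-handling topic concrete, here is a minimal sketch of how a Python process in a container might react to a stop request. It is not taken from the slides: the names `shutdown_requested`, `handle_stop`, and `run_worker` are hypothetical, and the worker loop stands in for whatever the application actually does. It assumes a Linux container in which the Python process receives the signal directly.

```python
import signal
import threading

# Set once the process has been asked to stop; the worker loop polls it.
# (Hypothetical name, chosen for this illustration.)
shutdown_requested = threading.Event()


def handle_stop(signum, frame):
    """Record the stop request instead of exiting in the middle of a task."""
    shutdown_requested.set()


# `docker stop` sends SIGTERM first; Ctrl+C in an attached terminal sends SIGINT.
signal.signal(signal.SIGTERM, handle_stop)
signal.signal(signal.SIGINT, handle_stop)


def run_worker(poll_seconds=0.1):
    """Hypothetical worker loop: iterate until a stop signal has been seen."""
    iterations = 0
    while not shutdown_requested.is_set():
        iterations += 1  # placeholder for one unit of real work
        shutdown_requested.wait(poll_seconds)  # sleep, but wake early on stop
    return iterations
```

The handler only sets a flag, so the loop can finish its current unit of work and clean up before the process exits. `docker stop` follows SIGTERM with SIGKILL once its grace period expires (10 seconds by default), so cleanup has to fit in that window. The handler also only runs if the signal reaches the Python process, which is one reason the exec form of `CMD` (for example `CMD ["python", "app.py"]`, with `app.py` as a placeholder) is usually preferred over the shell form, where `/bin/sh -c` sits in between as PID 1.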