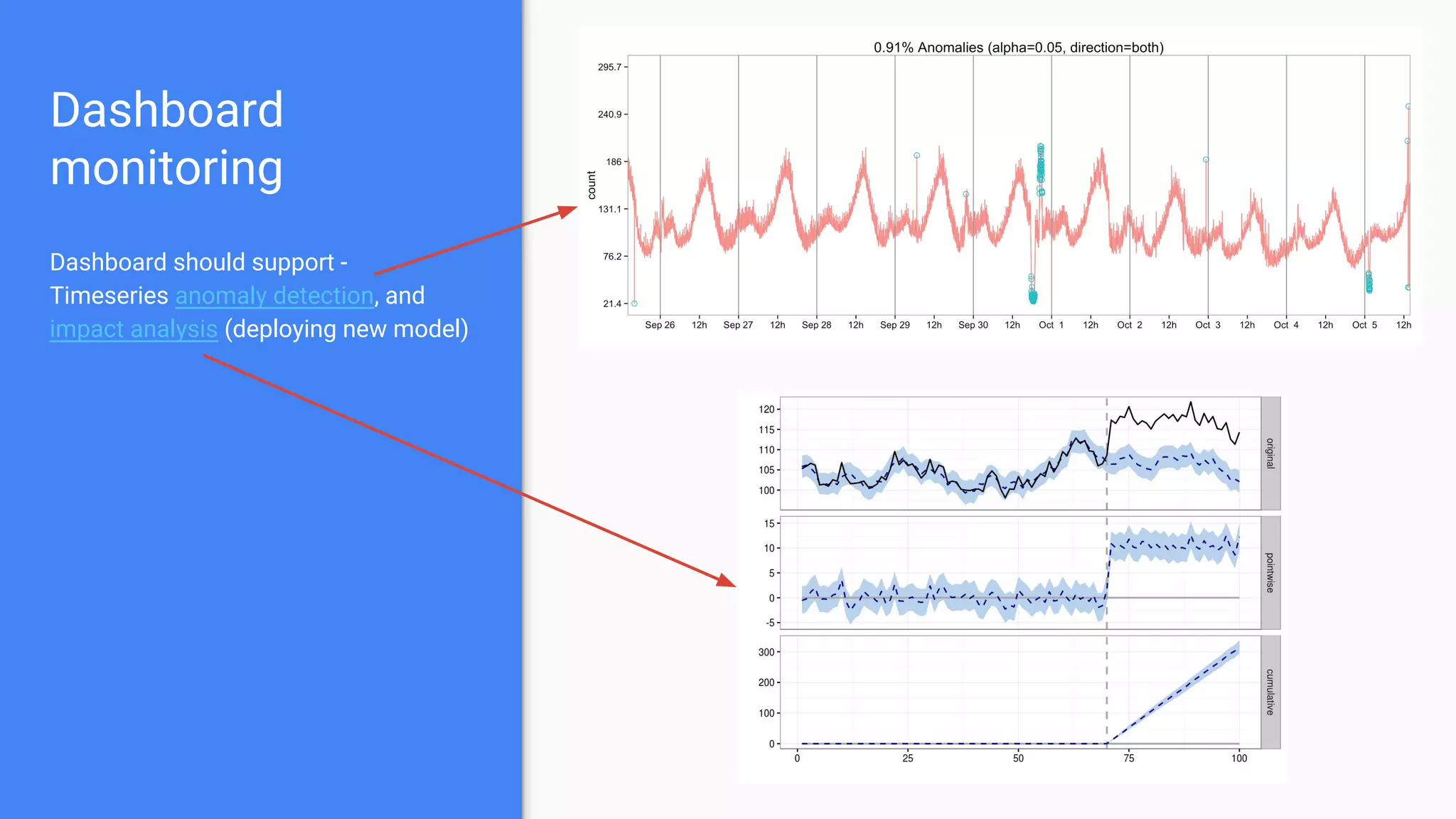

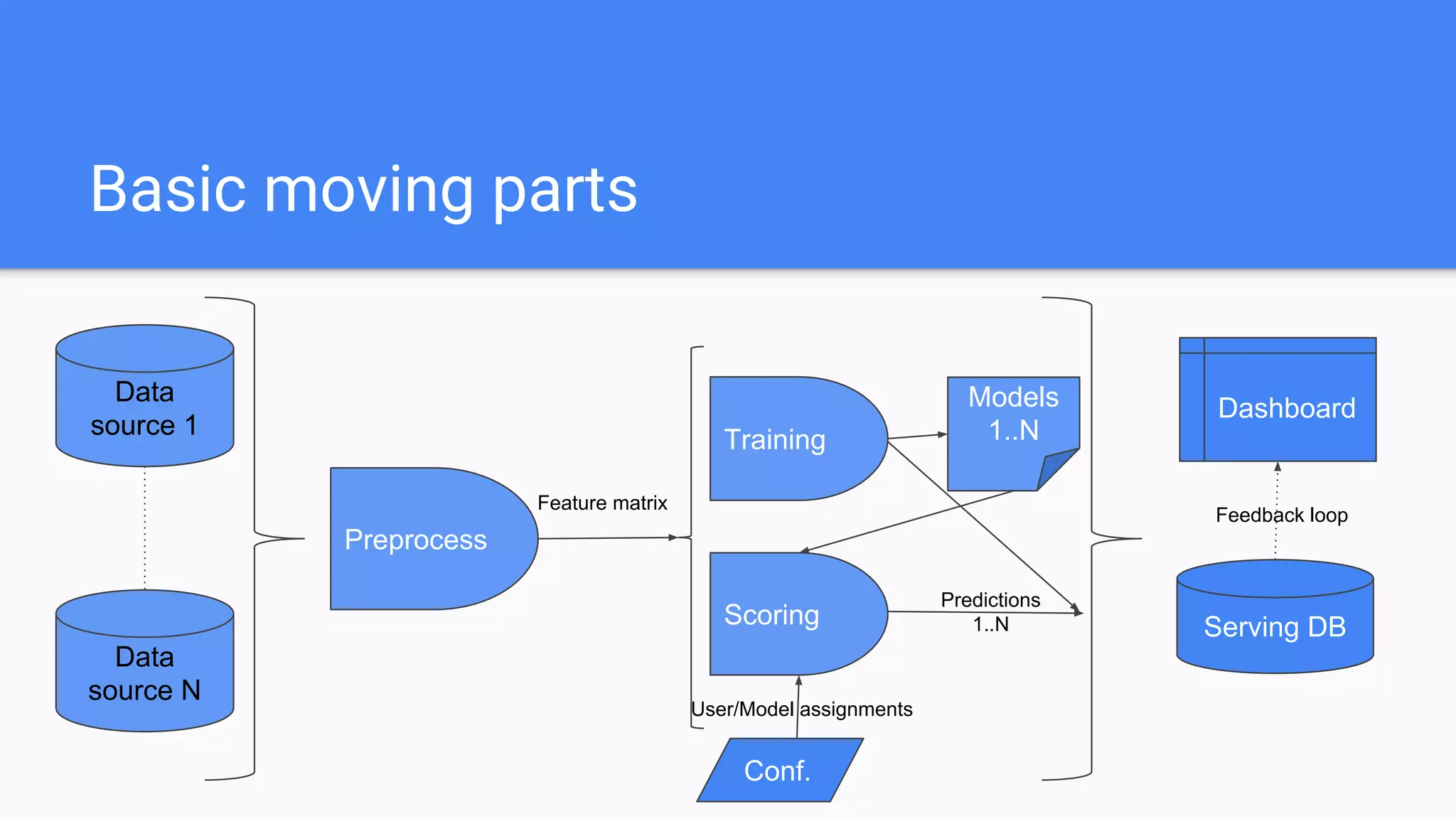

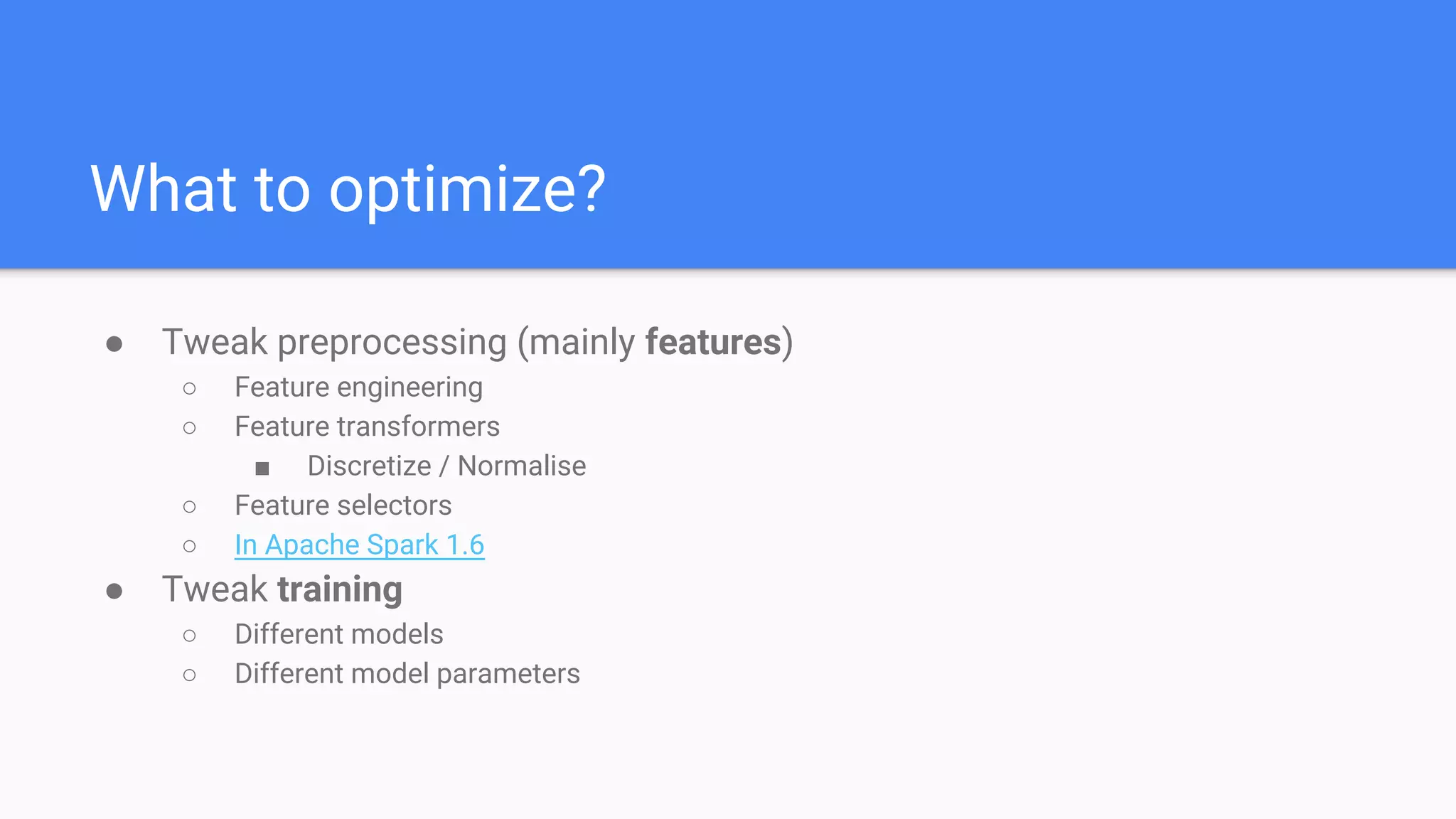

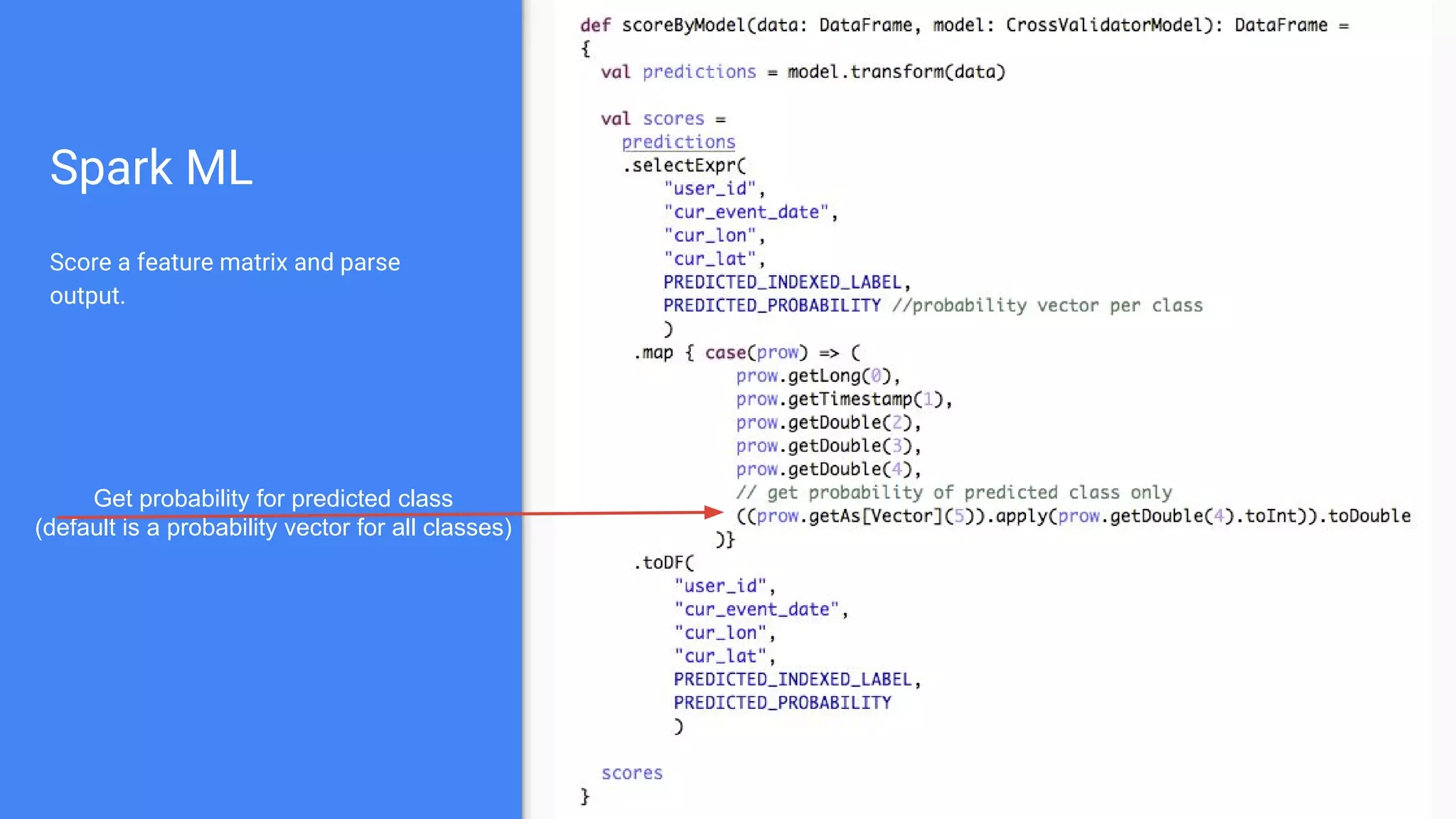

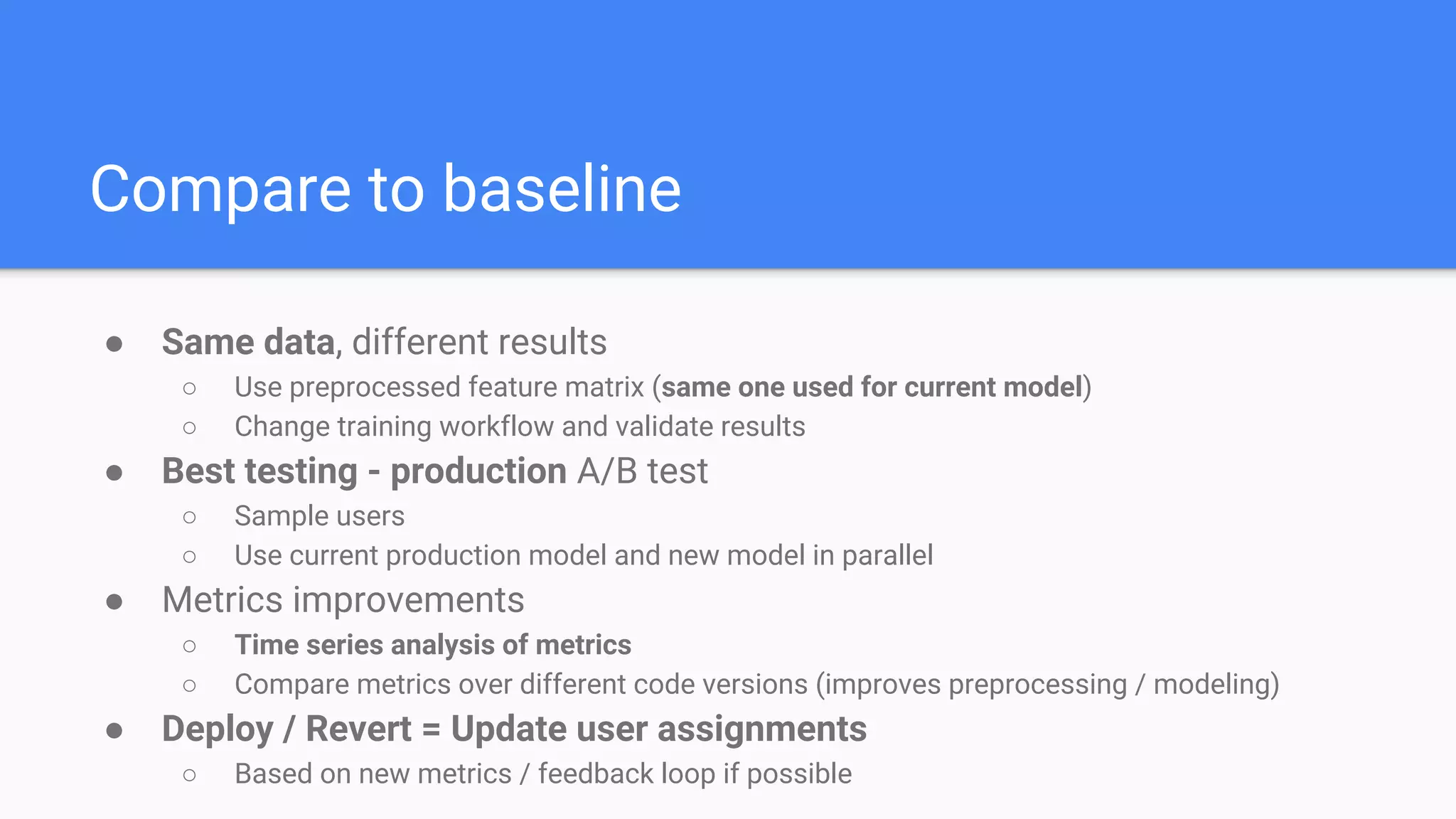

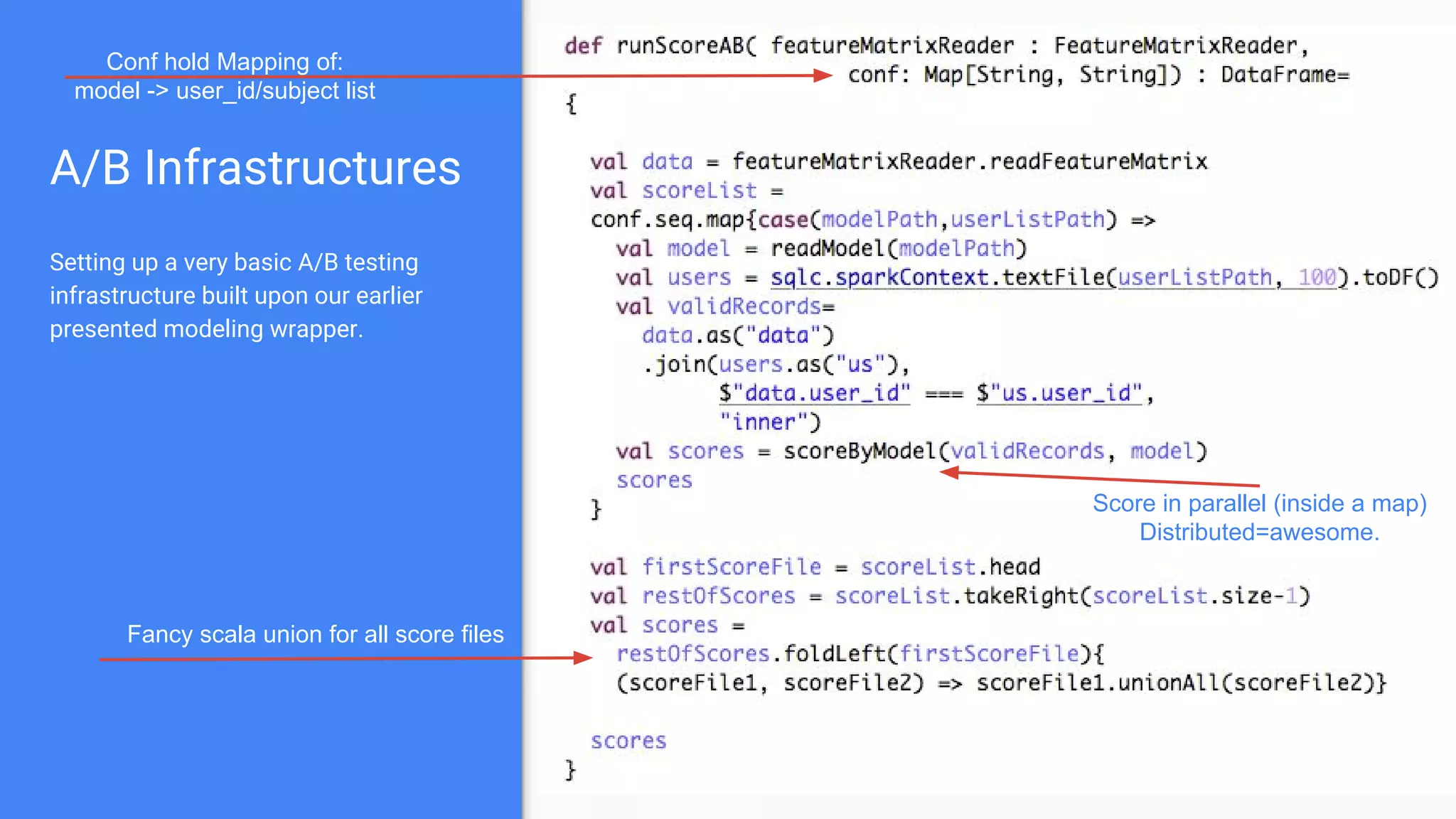

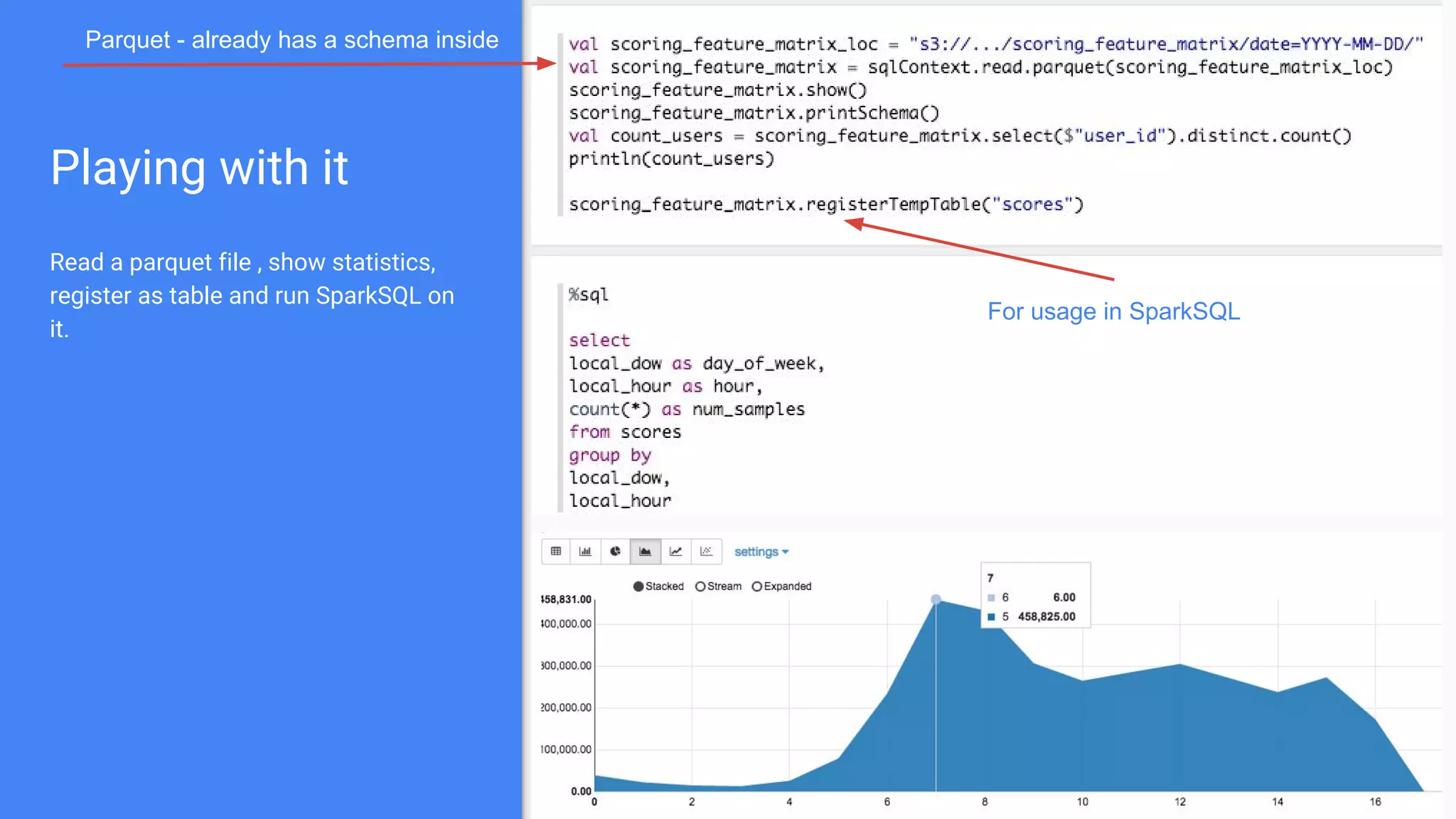

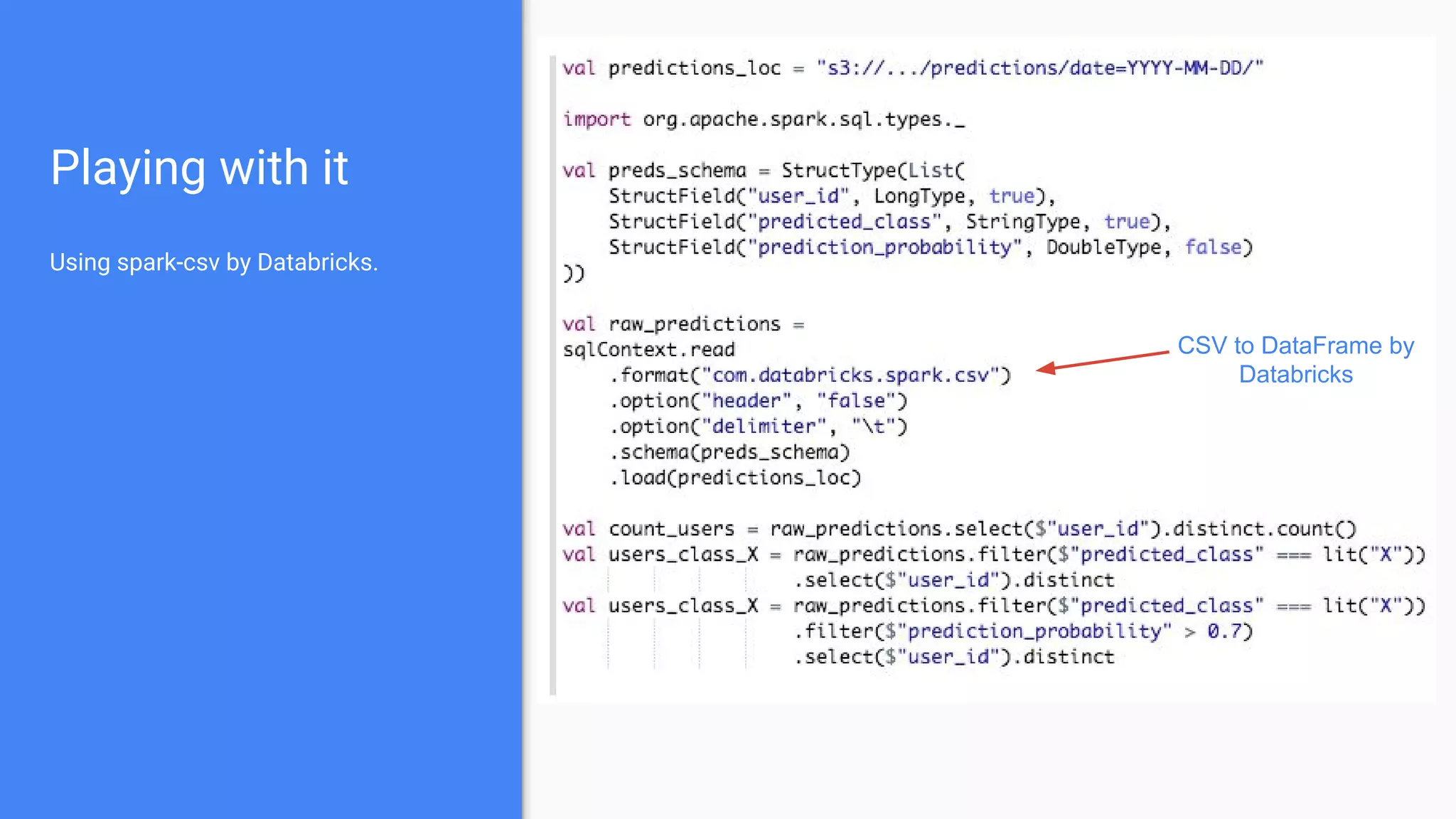

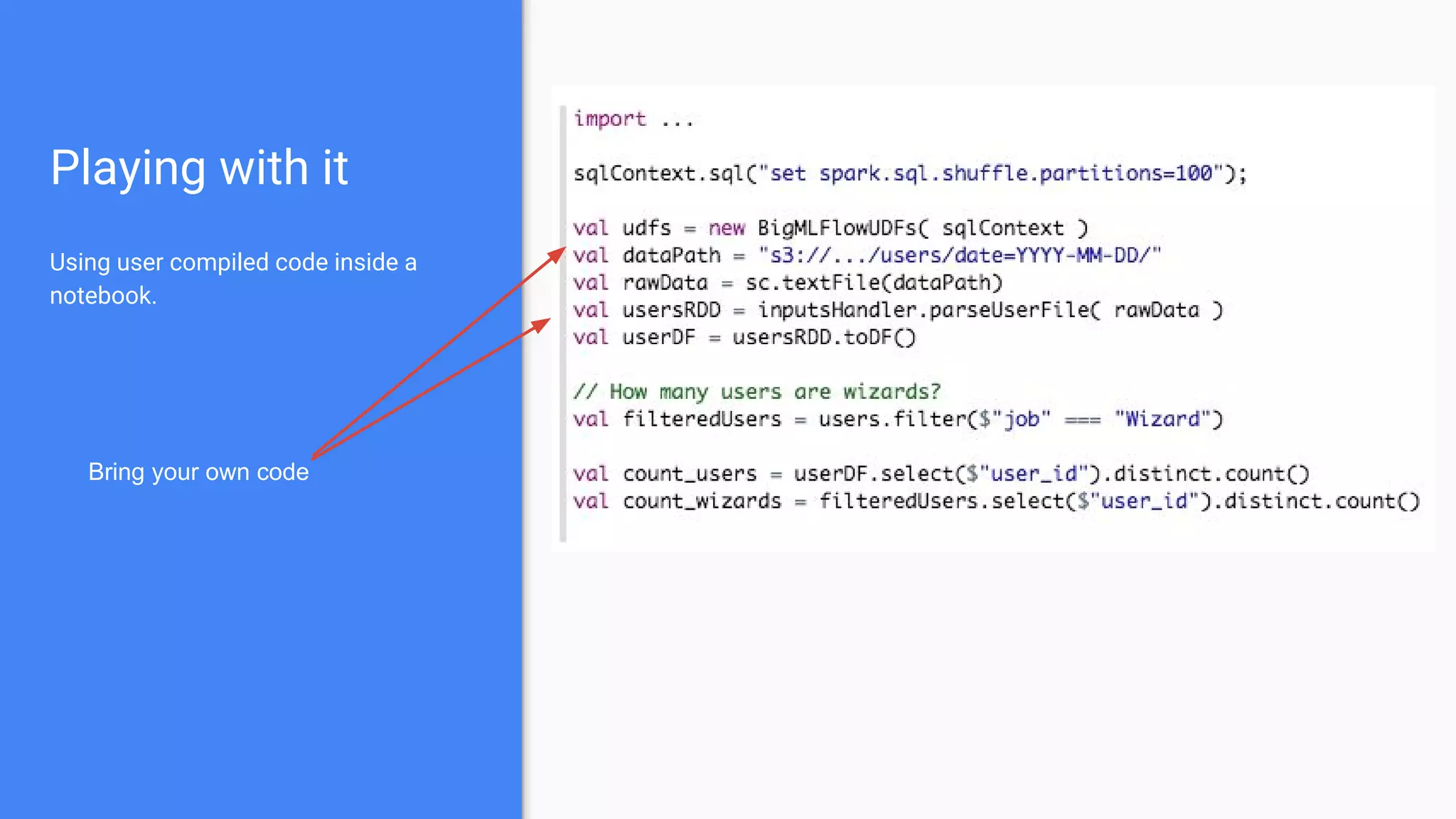

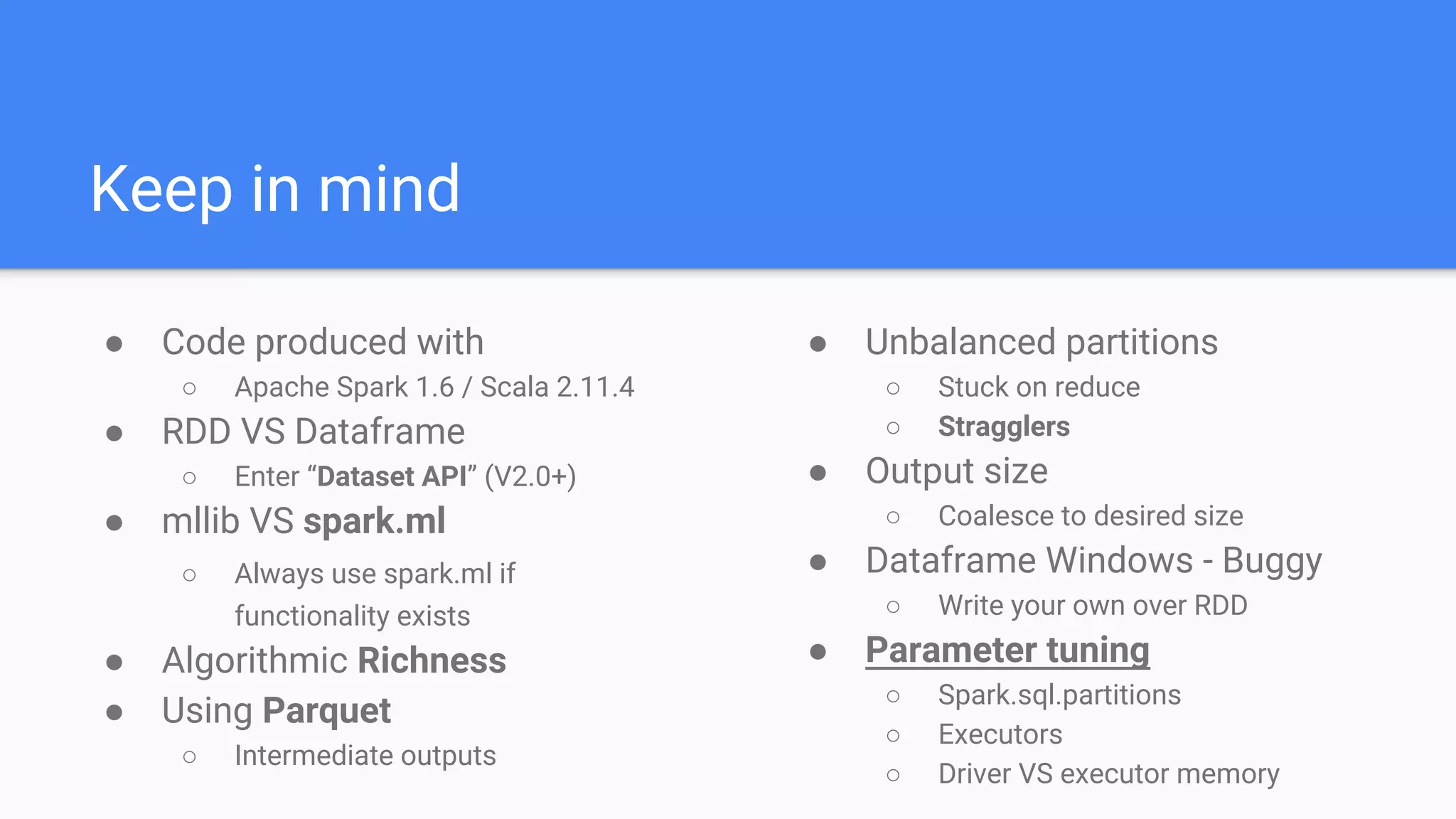

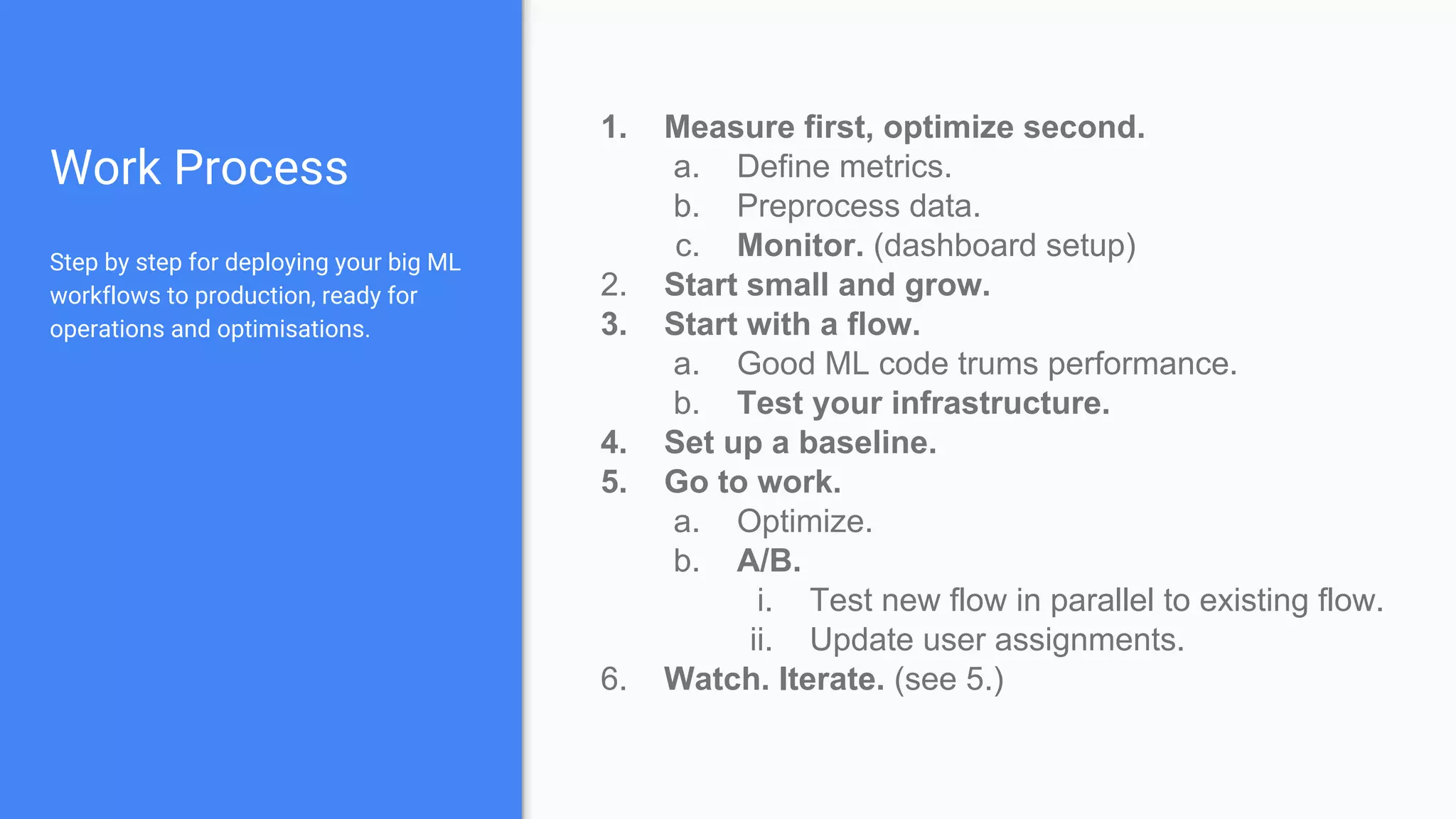

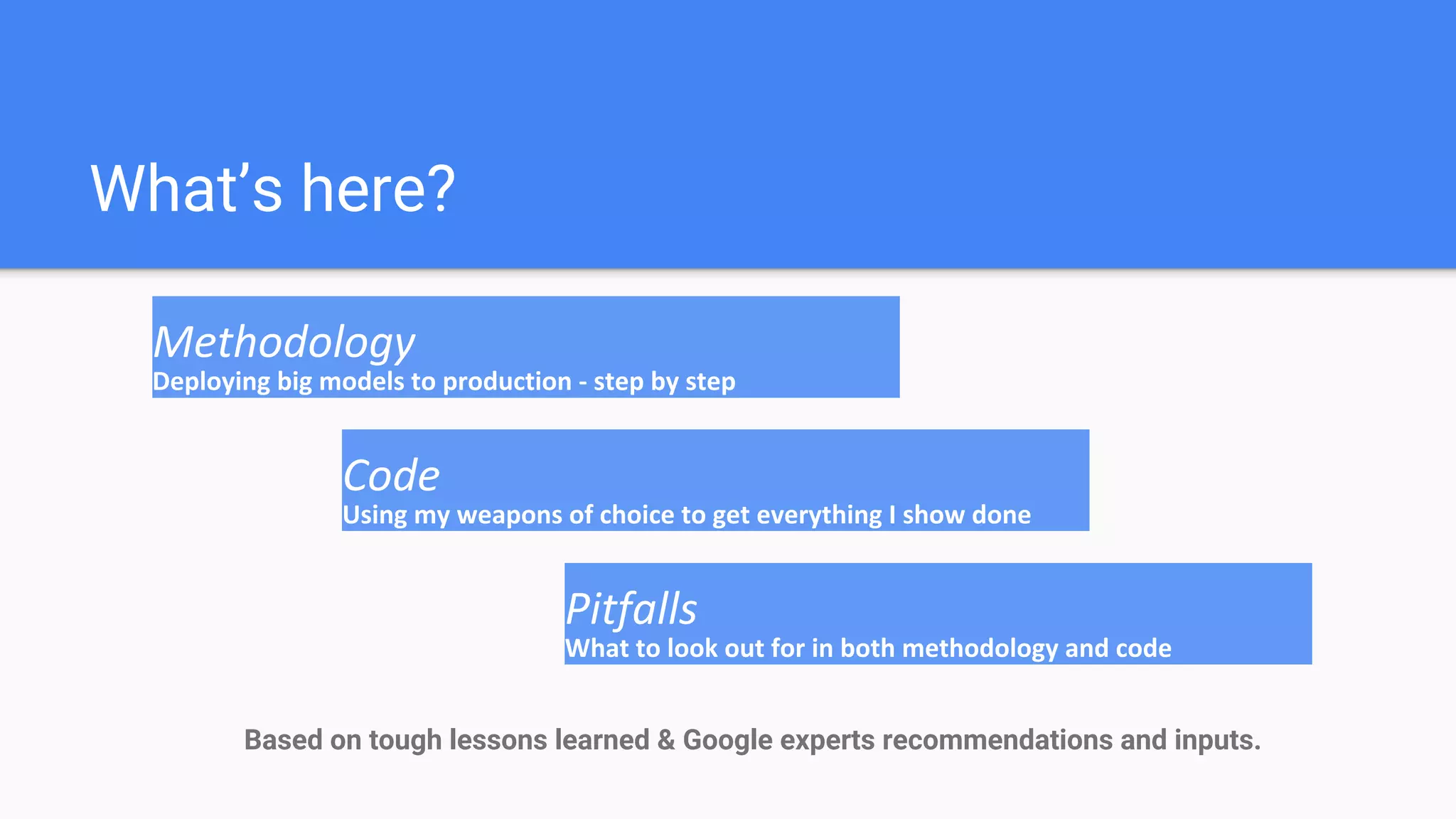

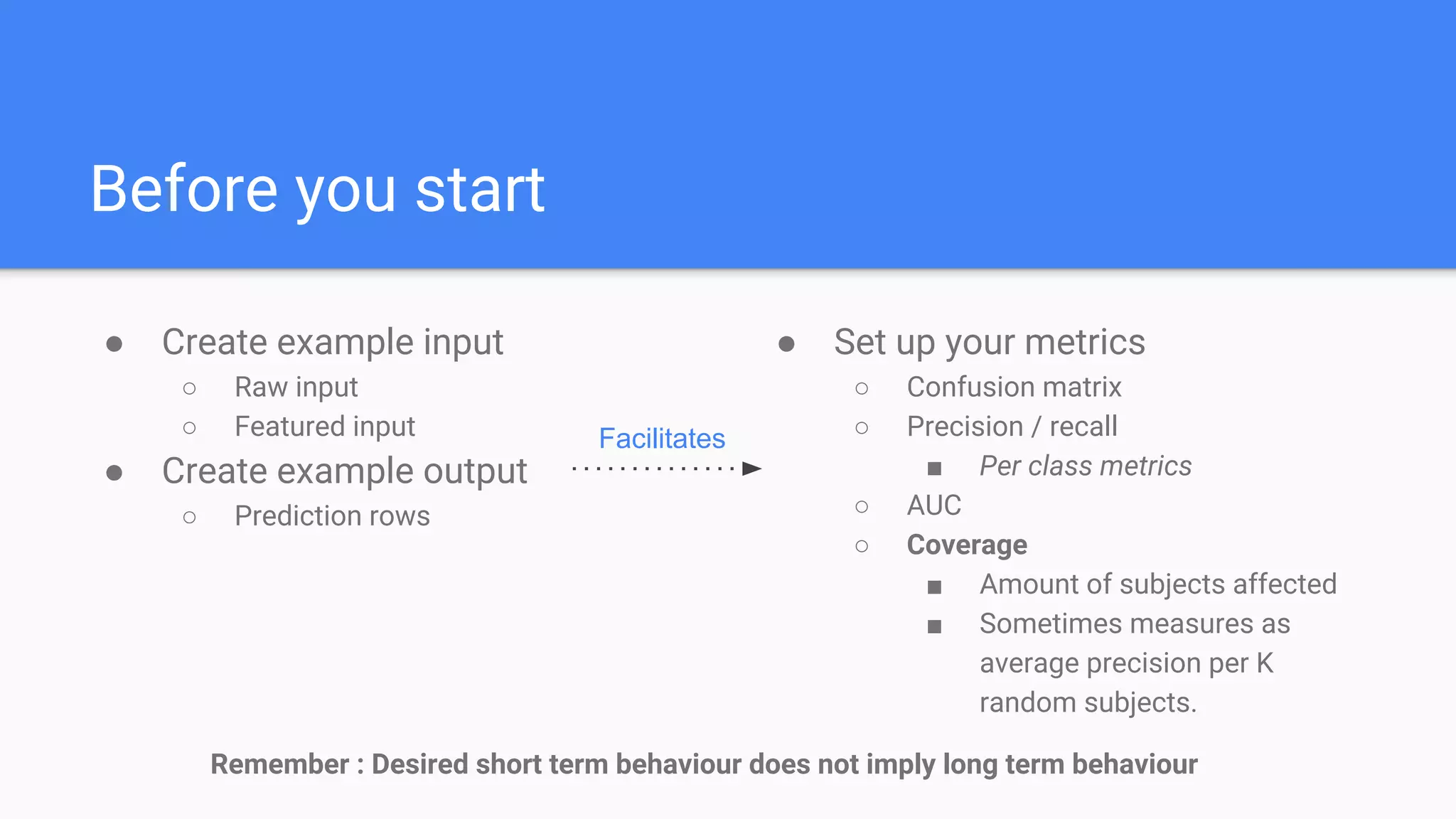

The document outlines an approach to deploying big data analytics workflows in production, drawing on examples from Google's Waze application. It emphasizes measuring and optimizing workflows, comparing methodologies, and learning from both successes and failures. Key topics include data preprocessing, model evaluation, dashboard monitoring, and practical use cases such as traffic management and anomaly detection.

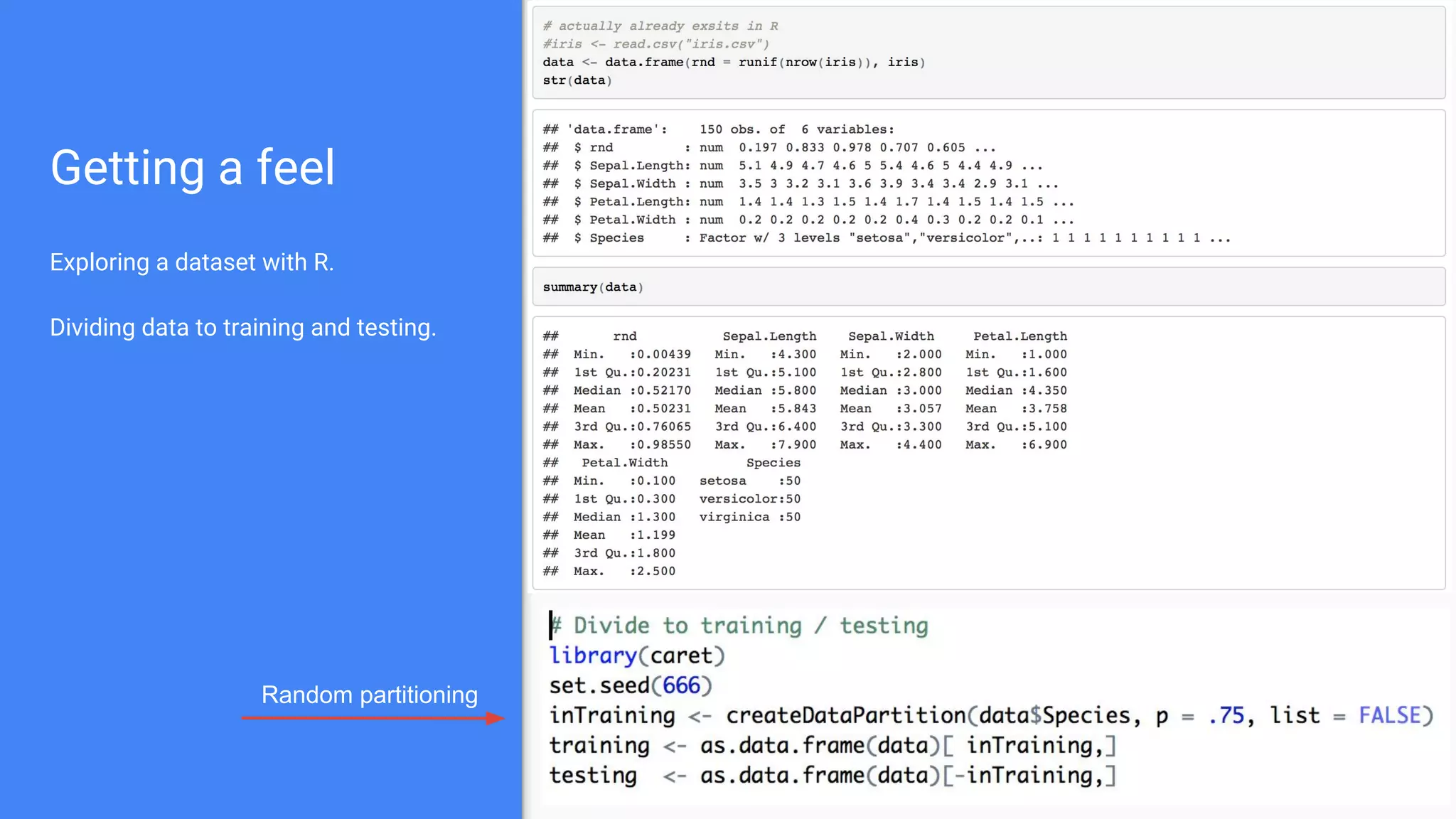

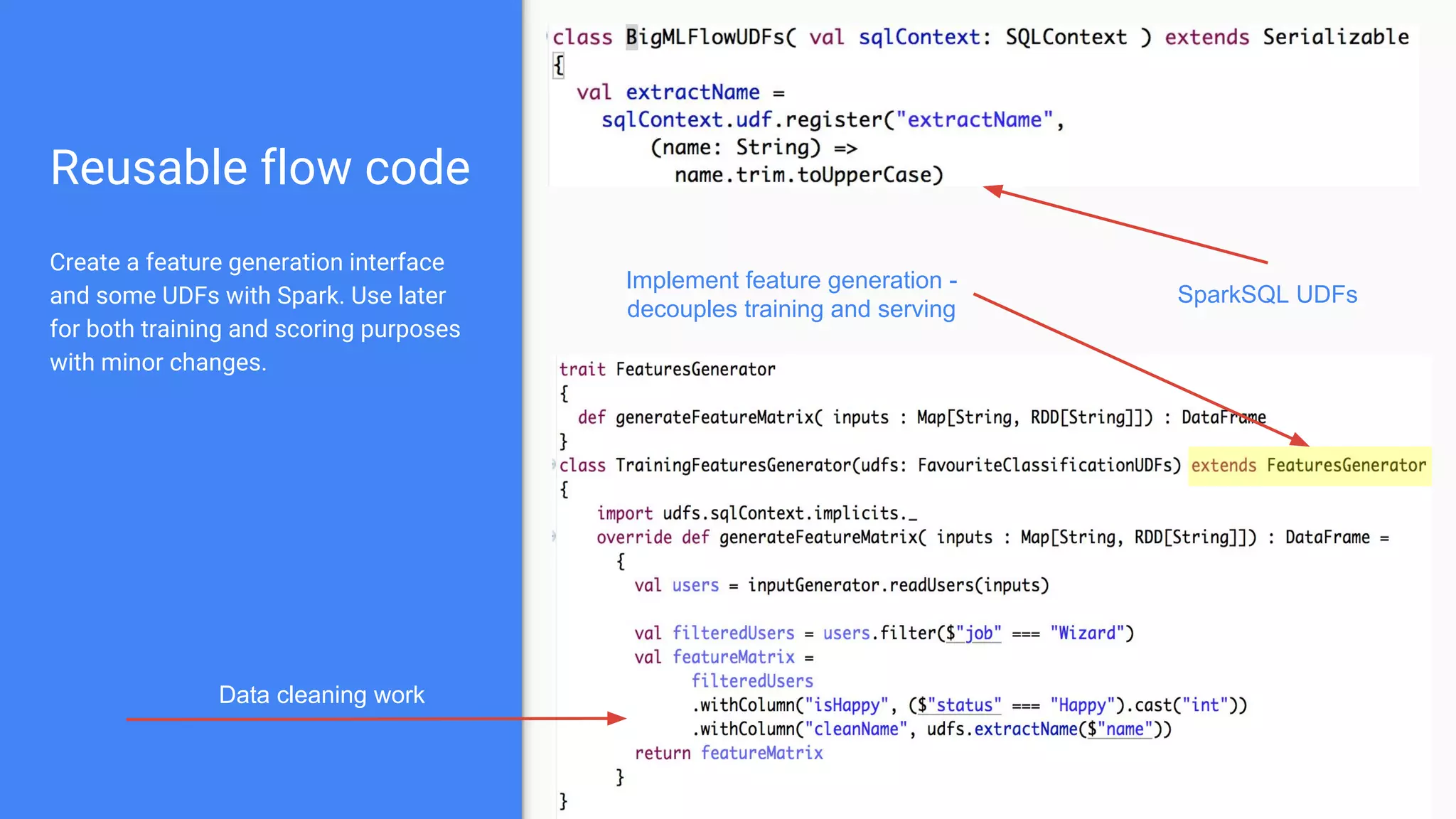

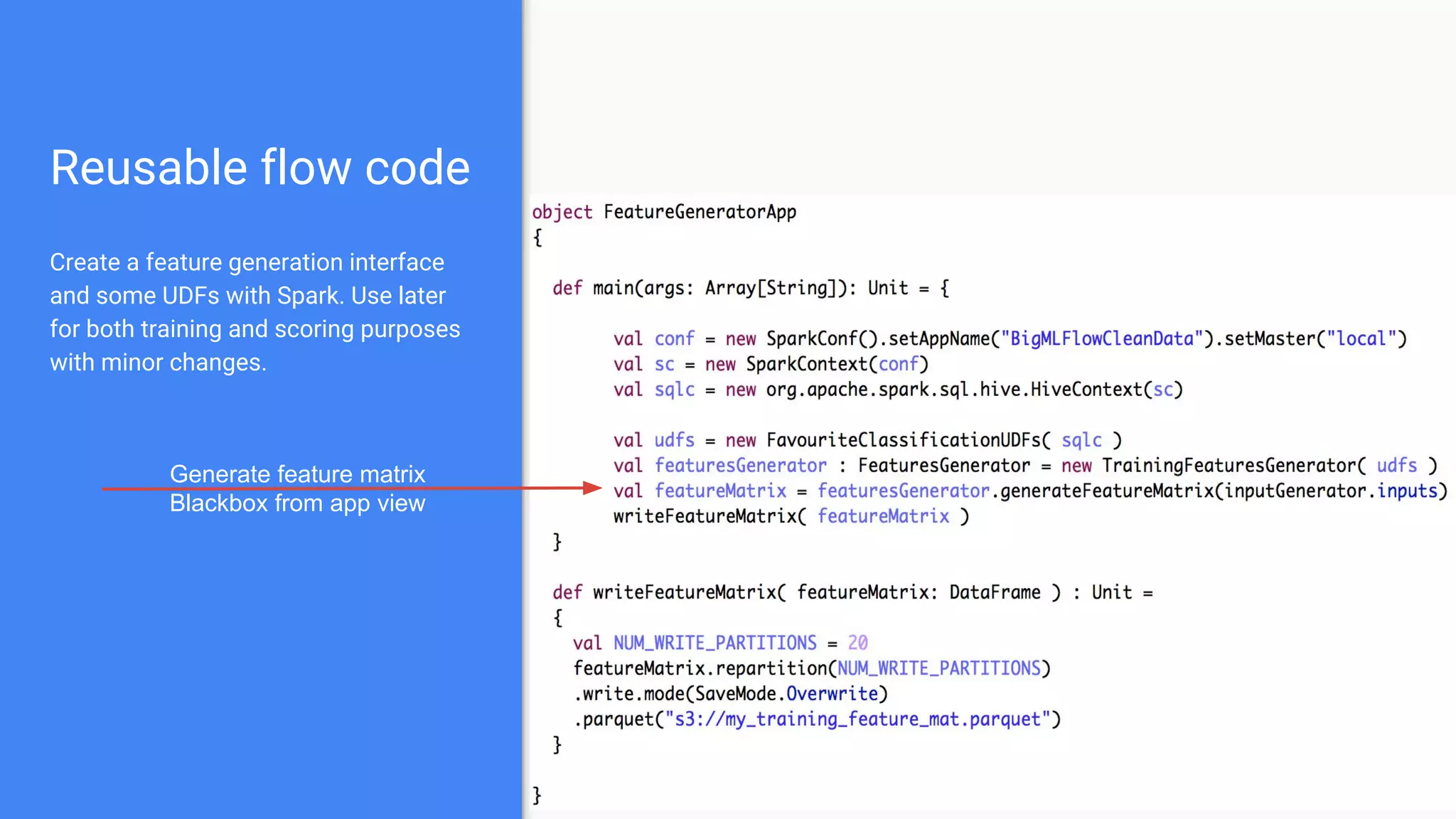

![Preprocess

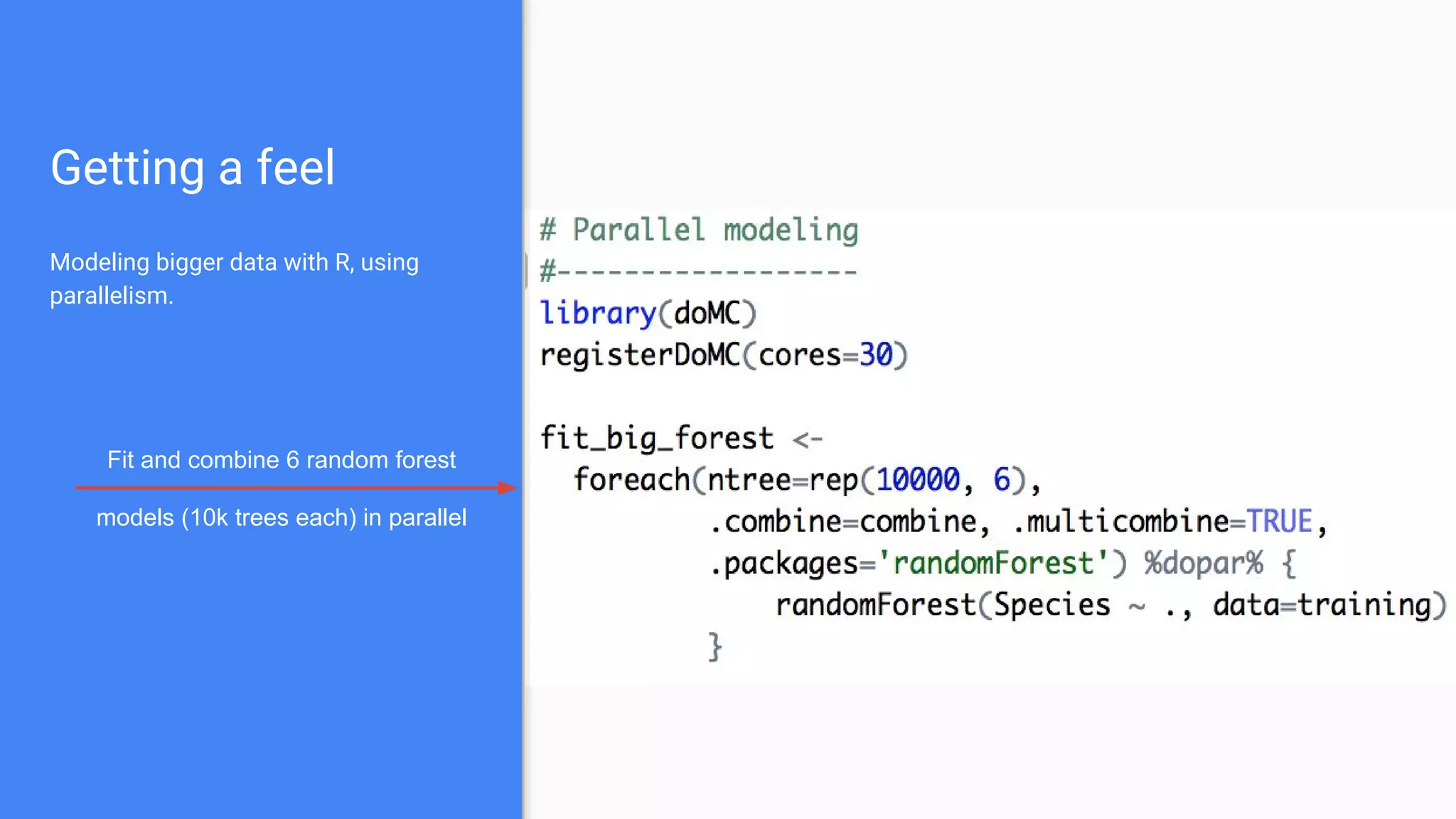

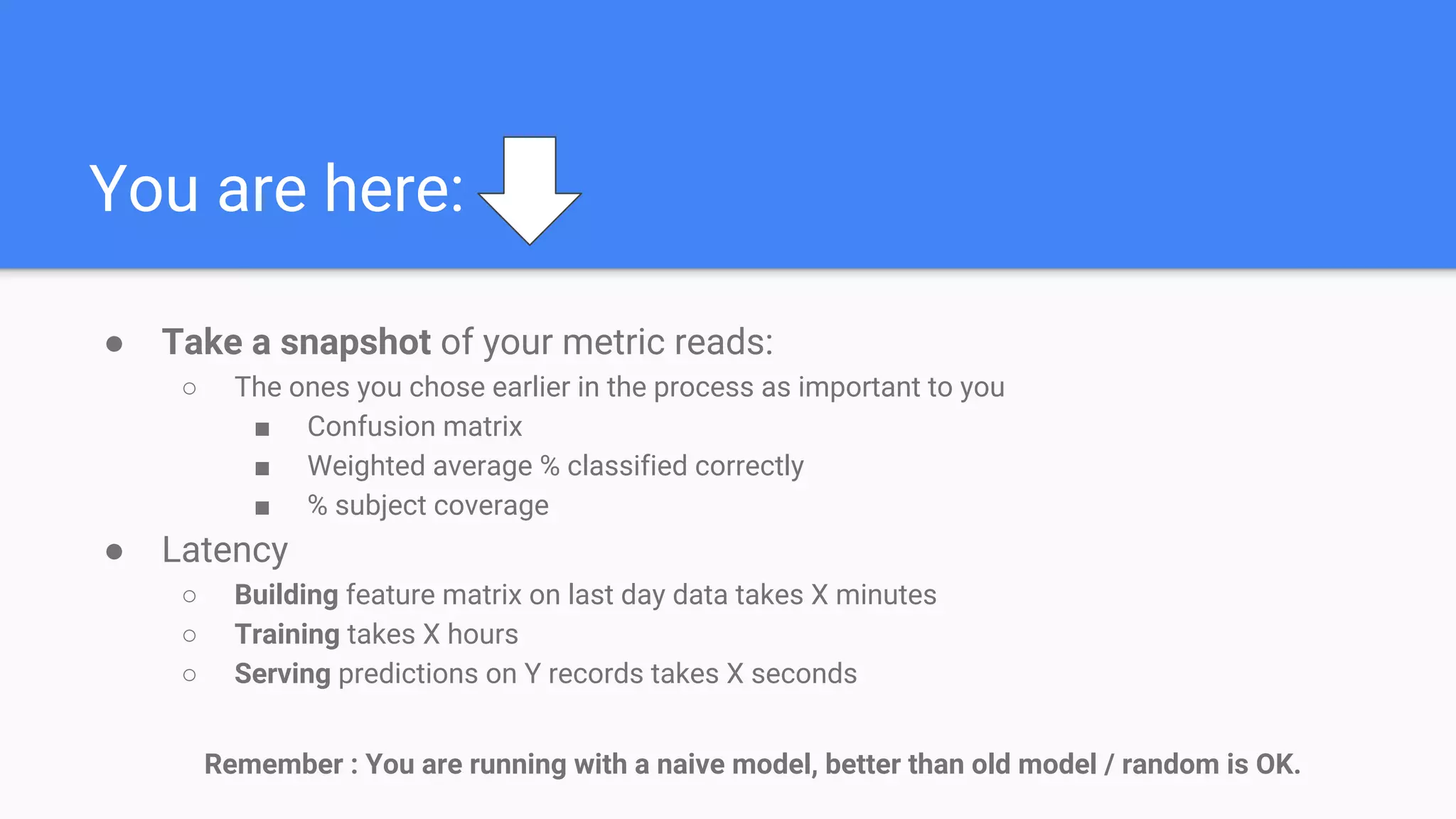

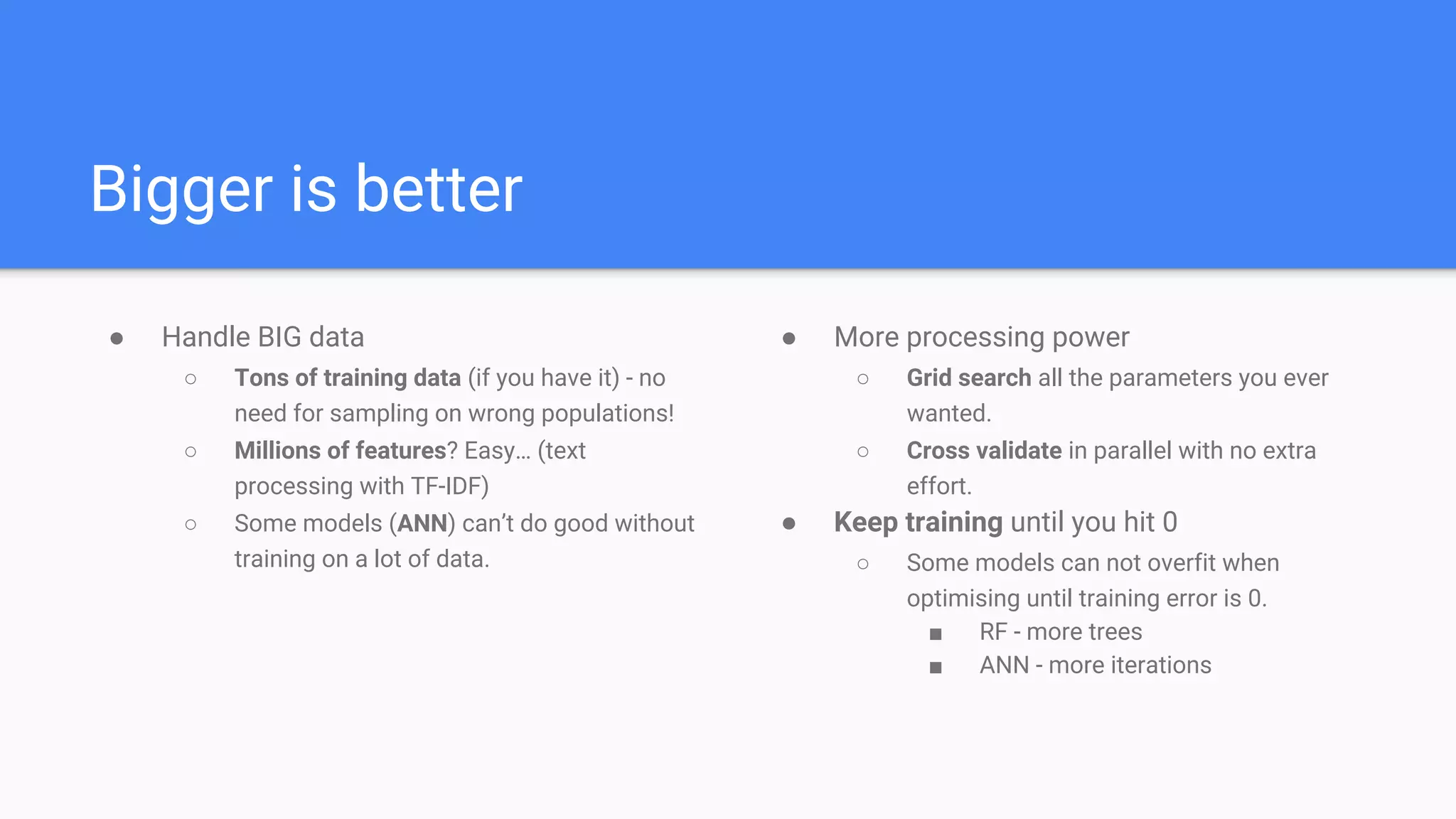

● Naive feature matrix

○ Parse (Text -> RDD[Object] -> DataFrame)

○ Clean (remove outliers / bad records)

○ Join

○ Remove non-features

● Get real data

● Create a baseline dataset for training

○ Add some basic features

■ Day of week / hour / etc.

○ Write a READABLE CSV that you can start and work with.](https://image.slidesharecdn.com/bigmlwithsparktoproduction-160530111447/75/Production-Ready-BIG-ML-Workflows-from-zero-to-hero-17-2048.jpg)
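The clean, join, feature, and CSV steps on this slide could look roughly like the sketch below. It is not the presenters' code: the column names (`event_id`, `segment_id`, `ts`, `speed_kmh`, `road_type`), the sample rows, the outlier thresholds, and the output path are all hypothetical, and it assumes Spark 2.3 or later (`SparkSession`, `to_timestamp`, `dayofweek`) with records already parsed into DataFrames.

```scala
import org.apache.spark.sql.SparkSession
import org.apache.spark.sql.functions.{dayofweek, hour, to_timestamp}

object BaselineDataset {
  def main(args: Array[String]): Unit = {
    val spark = SparkSession.builder()
      .master("local[*]")
      .appName("baseline-dataset-sketch")
      .getOrCreate()
    import spark.implicits._

    // Hypothetical parsed input: one row per speed report.
    val events = Seq(
      (1L, "s1", "2016-05-30 08:15:00", 42.0),
      (2L, "s2", "2016-05-30 17:40:00", -5.0), // bad record: negative speed
      (3L, "s1", "2016-05-31 09:05:00", 38.5)
    ).toDF("event_id", "segment_id", "ts", "speed_kmh")

    // Hypothetical lookup table to join against.
    val segments = Seq(("s1", "highway"), ("s2", "street"))
      .toDF("segment_id", "road_type")

    // Clean: drop outliers / bad records (thresholds are assumptions).
    val cleaned = events.filter($"speed_kmh" > 0 && $"speed_kmh" < 200)

    // Join: attach segment attributes to each report.
    val joined = cleaned.join(segments, Seq("segment_id"))

    // Add basic time features, then remove non-feature columns.
    val baseline = joined
      .withColumn("ts", to_timestamp($"ts"))
      .withColumn("day_of_week", dayofweek($"ts")) // 1 = Sunday ... 7 = Saturday
      .withColumn("hour", hour($"ts"))
      .drop("event_id", "ts")

    // Write a human-readable CSV with a header row (path is hypothetical).
    baseline.coalesce(1)
      .write.mode("overwrite")
      .option("header", "true")
      .csv("/tmp/baseline_dataset_csv")

    spark.stop()
  }
}
```

Calling `coalesce(1)` produces a single part file, which is convenient for opening a small baseline by hand but would be a bottleneck on a large dataset.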

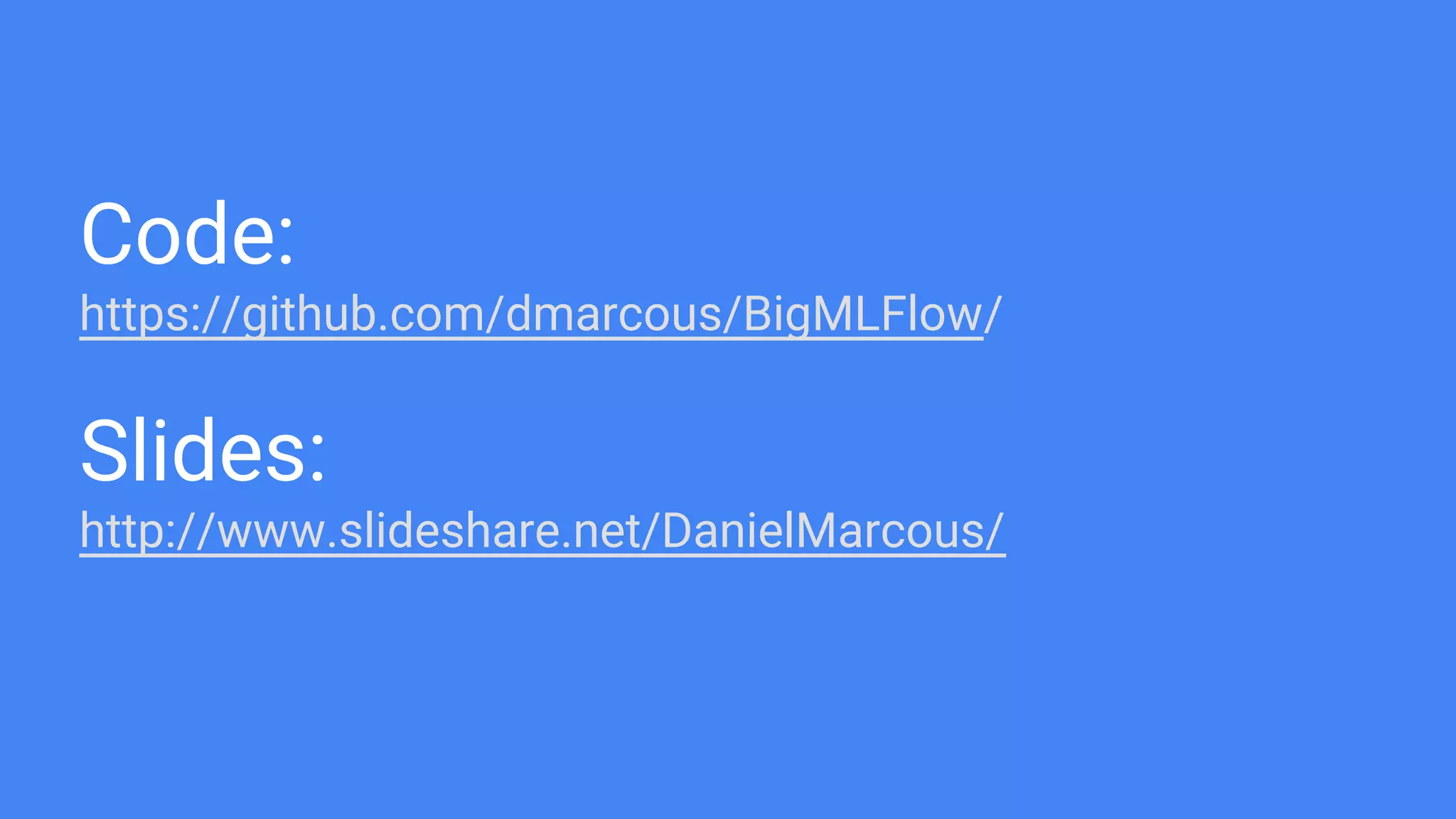

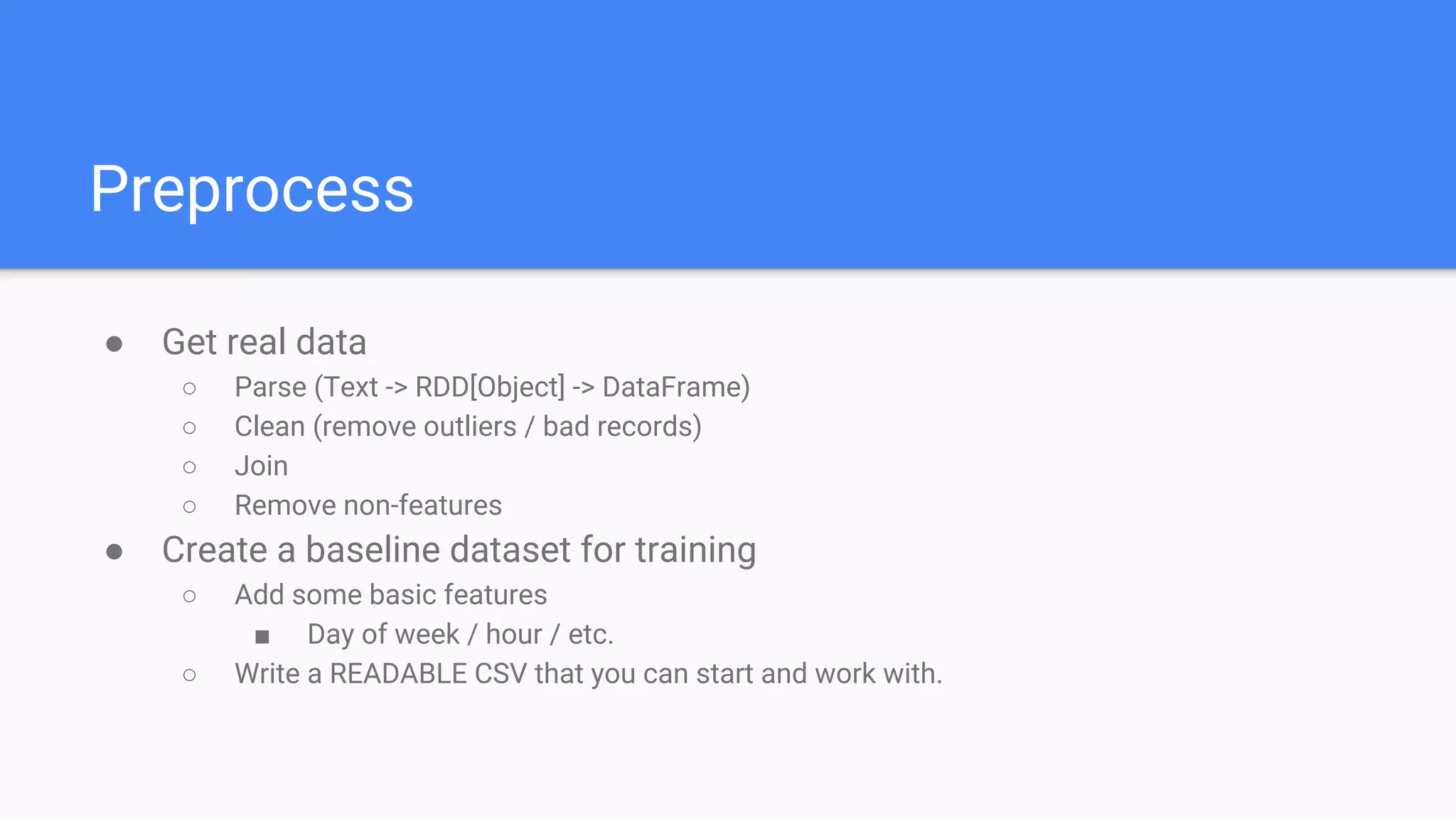

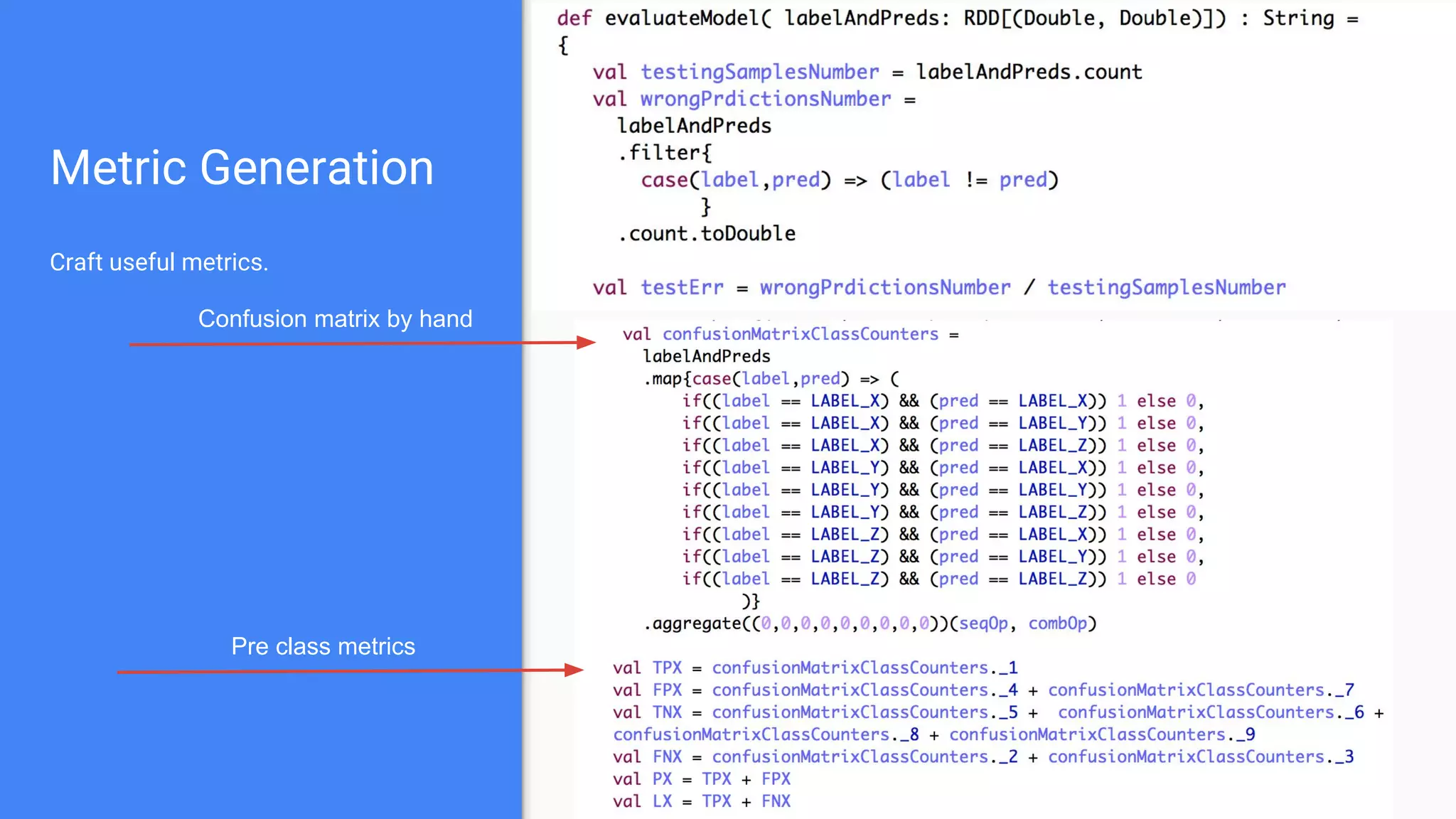

![Preprocess](https://image.slidesharecdn.com/bigmlwithsparktoproduction-160530111447/75/Production-Ready-BIG-ML-Workflows-from-zero-to-hero-18-2048.jpg)

Preprocess

- Case Class RDD to DataFrame
- RDD[String] to Case Class RDD
- String row to object
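The three conversions named on this slide can be sketched as follows. The slide's own code is not recoverable from the text, so this is only an illustration: the `SpeedReport` case class, its fields, the comma-separated input format, and the sample lines are assumptions, and `SparkSession` is the Spark 2.x+ entry point (the deck predates it and would likely have used `SQLContext`).

```scala
import org.apache.spark.sql.SparkSession
import scala.util.Try

// Hypothetical record type; the real schema is not given in the slides.
case class SpeedReport(segmentId: String, timestamp: String, speedKmh: Double)

object ParseToDataFrame {
  // String row to object: returns None for malformed rows instead of throwing.
  def parseLine(line: String): Option[SpeedReport] =
    line.split(",", -1) match {
      case Array(seg, ts, speed) =>
        Try(SpeedReport(seg.trim, ts.trim, speed.trim.toDouble)).toOption
      case _ => None
    }

  def main(args: Array[String]): Unit = {
    val spark = SparkSession.builder()
      .master("local[*]")
      .appName("parse-to-dataframe-sketch")
      .getOrCreate()
    import spark.implicits._ // enables .toDF() on an RDD of case classes

    // Stand-in for sc.textFile(...): an RDD[String] of raw rows.
    val lines = spark.sparkContext.parallelize(Seq(
      "s1,2016-05-30 08:15:00,42.0",
      "not a valid row",
      "s2,2016-05-30 17:40:00,abc" // non-numeric speed, dropped by parseLine
    ))

    // RDD[String] to Case Class RDD: flatMap silently drops unparseable rows.
    val reports = lines.flatMap(line => parseLine(line).toList)

    // Case Class RDD to DataFrame: the schema is inferred from the case class.
    val df = reports.toDF()
    df.printSchema()
    df.show()

    spark.stop()
  }
}
```

Returning `Option` from the parser keeps bad records from failing the whole job. In a production pipeline it may be worth counting the dropped rows rather than discarding them silently.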