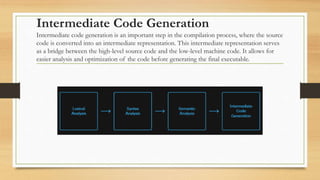

This document provides an overview of the principles of compiler design. It discusses the main phases of compilation: lexical analysis, syntax analysis, semantic analysis, intermediate code generation, code optimization, and code generation. For each phase, it describes the key techniques involved. These are regular expressions and finite automata for lexical analysis, parsing techniques for syntax analysis, symbol tables and type checking for semantic analysis, and methods such as dead code elimination and loop optimization for code optimization. Throughout, the document emphasizes that compilers are essential tools for translating programs written in high-level languages into executable machine code.
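The phases summarized above can be illustrated with a toy compiler. The sketch below is not taken from the document. It assumes a hypothetical language of integer expressions with `+`, `-`, `*`, parentheses and variables, and every name in it (`tokenize`, `parse`, `check`, `fold`, `gen`, `compile_expr`, and the `PUSH`/`LOAD`/`ADD`/`SUB`/`MUL` instruction set) is illustrative. A few substitutions are worth stating plainly. Token classes are written as regular expressions, but Python's `re` module matches them with a backtracking engine rather than an explicitly constructed finite automaton. The optimization step is constant folding, which is simpler than the dead code elimination and loop optimization the summary mentions. The stack-machine instructions serve as both intermediate and target code.

```python
import operator
import re

# Lexical analysis: each token class is described by a regular expression.
TOKEN_SPEC = [("NUM", r"\d+"), ("ID", r"[A-Za-z_]\w*"), ("OP", r"[+\-*()]"), ("SKIP", r"\s+")]
TOKEN_RE = re.compile("|".join(f"(?P<{name}>{pat})" for name, pat in TOKEN_SPEC))

def tokenize(src):
    tokens, pos = [], 0
    while pos < len(src):
        m = TOKEN_RE.match(src, pos)
        if m is None:
            raise SyntaxError(f"unexpected character {src[pos]!r} at {pos}")
        if m.lastgroup != "SKIP":  # whitespace is discarded
            tokens.append((m.lastgroup, m.group()))
        pos = m.end()
    return tokens

# Syntax analysis: recursive descent for expr := term (('+'|'-') term)*,
# term := factor ('*' factor)*, factor := NUM | ID | '(' expr ')'.
# AST nodes are tuples: ("num", value), ("var", name) or (op, left, right).
def parse(tokens):
    pos = 0

    def peek():
        return tokens[pos][1] if pos < len(tokens) else None

    def advance():
        nonlocal pos
        if pos >= len(tokens):
            raise SyntaxError("unexpected end of input")
        pos += 1
        return tokens[pos - 1]

    def expr():
        node = term()
        while peek() in ("+", "-"):
            node = (advance()[1], node, term())
        return node

    def term():
        node = factor()
        while peek() == "*":
            node = (advance()[1], node, factor())
        return node

    def factor():
        kind, text = advance()
        if kind == "NUM":
            return ("num", int(text))
        if kind == "ID":
            return ("var", text)
        if text == "(":
            node = expr()
            if advance()[1] != ")":
                raise SyntaxError("expected ')'")
            return node
        raise SyntaxError(f"unexpected token {text!r}")

    node = expr()
    if pos != len(tokens):
        raise SyntaxError(f"unexpected token {peek()!r}")
    return node

# Semantic analysis: every variable must be in the symbol table with type "int".
def check(node, symbols):
    if node[0] == "var" and symbols.get(node[1]) != "int":
        raise NameError(f"{node[1]!r} is undeclared or not an int")
    if node[0] not in ("num", "var"):
        check(node[1], symbols)
        check(node[2], symbols)

# Operator table shared by the optimizer (evaluation) and code generator (opcode).
OPS = {"+": ("ADD", operator.add), "-": ("SUB", operator.sub), "*": ("MUL", operator.mul)}

# Optimization: constant folding evaluates any operator whose operands are constants.
def fold(node):
    if node[0] in OPS:
        left, right = fold(node[1]), fold(node[2])
        if left[0] == "num" and right[0] == "num":
            return ("num", OPS[node[0]][1](left[1], right[1]))
        return (node[0], left, right)
    return node

# Code generation: postorder walk emitting instructions for a hypothetical stack machine.
def gen(node):
    if node[0] == "num":
        return [("PUSH", node[1])]
    if node[0] == "var":
        return [("LOAD", node[1])]
    return gen(node[1]) + gen(node[2]) + [(OPS[node[0]][0],)]

def compile_expr(src, symbols):
    """Run the phases in order: lex, parse, check, fold, generate."""
    ast = parse(tokenize(src))
    check(ast, symbols)
    return gen(fold(ast))
```

For example, `compile_expr("x * (2 + 3)", {"x": "int"})` returns `[("LOAD", "x"), ("PUSH", 5), ("MUL",)]`. The parenthesized constant subexpression is folded to `5` before code generation. Compiling `"y + 1"` with an empty symbol table fails in the semantic-analysis phase with a `NameError`. Keeping each phase as a separate function mirrors the phase structure the document describes, at the cost of walking the tree several times.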